Optimization and Mobile Deployment for Anthropocene Neural Style Transfer

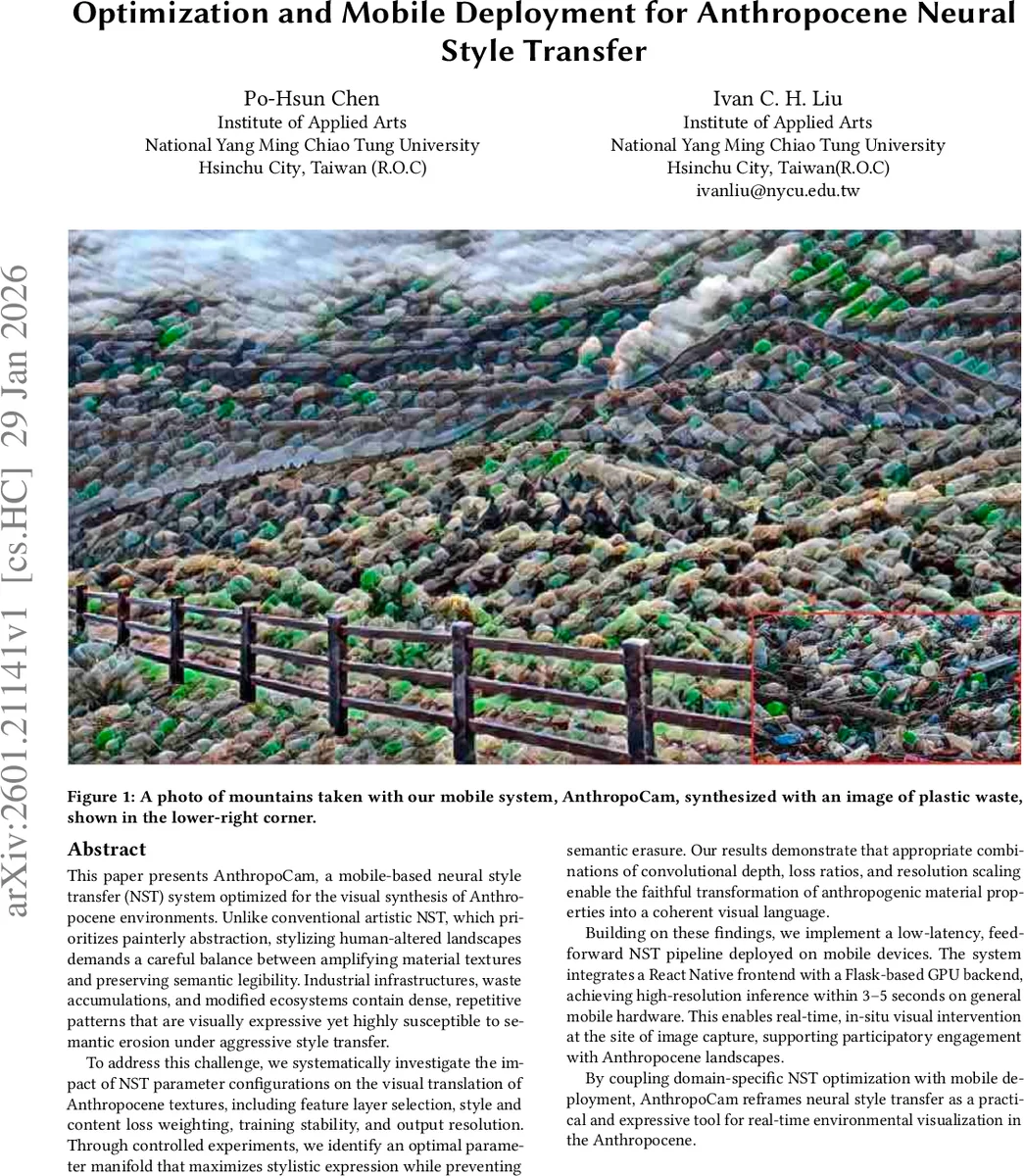

This paper presents AnthropoCam, a mobile-based neural style transfer (NST) system optimized for the visual synthesis of Anthropocene environments. Unlike conventional artistic NST, which prioritizes painterly abstraction, stylizing human-altered landscapes demands a careful balance between amplifying material textures and preserving semantic legibility. Industrial infrastructures, waste accumulations, and modified ecosystems contain dense, repetitive patterns that are visually expressive yet highly susceptible to semantic erosion under aggressive style transfer. To address this challenge, we systematically investigate the impact of NST parameter configurations on the visual translation of Anthropocene textures, including feature layer selection, style and content loss weighting, training stability, and output resolution. Through controlled experiments, we identify an optimal parameter manifold that maximizes stylistic expression while preventing semantic erasure. Our results demonstrate that appropriate combinations of convolutional depth, loss ratios, and resolution scaling enable the faithful transformation of anthropogenic material properties into a coherent visual language. Building on these findings, we implement a low-latency, feed-forward NST pipeline deployed on mobile devices. The system integrates a React Native frontend with a Flask-based GPU backend, achieving high-resolution inference within 3-5 seconds on general mobile hardware. This enables real-time, in-situ visual intervention at the site of image capture, supporting participatory engagement with Anthropocene landscapes. By coupling domain-specific NST optimization with mobile deployment, AnthropoCam reframes neural style transfer as a practical and expressive tool for real-time environmental visualization in the Anthropocene.

💡 Research Summary

The paper introduces AnthropoCam, a mobile‑focused neural style transfer (NST) system designed to visualize Anthropocene environments: landscapes dominated by industrial infrastructure, waste accumulations, and altered ecosystems. Unlike conventional artistic NST, which emphasizes painterly abstraction, Anthropocene imagery requires a balance between amplifying material textures and preserving semantic legibility. To achieve this, the authors systematically explore the NST parameters: feature‑layer selection, style‑to‑content loss weighting, total‑variation (TV) regularization, and output resolution. Using a VGG‑16 backbone, they fix content features at conv3_3 and compute style Gram matrices from layers ranging from shallow (conv2_2, conv3_1) to deep (conv4_2, conv4_3). Shallow layers retain the fine‑grained detail of filamentous patterns, while deeper layers capture the modular structure of large industrial forms.
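As a rough illustration of the Gram-matrix style representation mentioned above, the sketch below computes a Gram matrix and a style loss for a single feature map. It uses NumPy with random arrays standing in for VGG‑16 activations; the function names, the normalization by C·H·W, and the mean-squared-error form are assumptions chosen for illustration, not details confirmed by the paper.

```python
import numpy as np


def gram_matrix(features: np.ndarray) -> np.ndarray:
    """Gram matrix of a (C, H, W) feature map, normalized by C*H*W (assumed)."""
    c, h, w = features.shape
    flat = features.reshape(c, h * w)   # one row per channel
    return flat @ flat.T / (c * h * w)  # (C, C) channel co-activation statistics


def style_loss(generated: np.ndarray, style: np.ndarray) -> float:
    """Mean squared difference between the two Gram matrices (assumed form)."""
    diff = gram_matrix(generated) - gram_matrix(style)
    return float(np.mean(diff ** 2))


# Random stand-ins for activations at one style layer (hypothetical shapes).
rng = np.random.default_rng(0)
style_feat = rng.standard_normal((8, 16, 16))
gen_feat = rng.standard_normal((8, 16, 16))
loss_value = style_loss(gen_feat, style_feat)
```

Because the Gram matrix discards spatial positions and keeps only channel correlations, it describes texture rather than layout, which is consistent with the summary's point that the choice of style layers controls the scale of the transferred texture.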

Loss‑weighting experiments show that a content‑to‑style ratio of roughly 1:5 yields the best trade‑off: lower style weights produce weak visual impact, while higher weights cause semantic erosion. A TV regularizer, weighted by γ, mitigates the high‑frequency noise typical of mobile‑captured images. Dataset consistency is also critical: training on stylistically homogeneous images prevents the averaging effects that dilute texture strength. Additional techniques, such as leveraging transparent style regions and localized style cropping, give further control over haze effects and color/shape transfer without overwhelming the underlying scene.
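The weighting described above can be sketched as a single weighted sum of content, style, and TV terms. In the minimal NumPy example below, the default weights 1.0 and 5.0 mirror the reported content‑to‑style ratio of roughly 1:5; the TV weight (`tv_weight`, standing in for γ), the anisotropic TV form, and all function names are assumptions, since the summary gives no exact values or formulas.

```python
import numpy as np


def total_variation(image: np.ndarray) -> float:
    """Anisotropic TV of an (H, W, C) image: sum of absolute neighbor differences."""
    dh = np.abs(image[1:, :, :] - image[:-1, :, :]).sum()  # vertical neighbors
    dw = np.abs(image[:, 1:, :] - image[:, :-1, :]).sum()  # horizontal neighbors
    return float(dh + dw)


def total_loss(
    content_loss: float,
    style_loss: float,
    image: np.ndarray,
    content_weight: float = 1.0,  # content:style of about 1:5, per the summary
    style_weight: float = 5.0,
    tv_weight: float = 1e-4,      # hypothetical value for gamma
) -> float:
    """Weighted sum of the three terms; the loss values are computed elsewhere."""
    return (
        content_weight * content_loss
        + style_weight * style_loss
        + tv_weight * total_variation(image)
    )
```

Raising `style_weight` relative to `content_weight` pushes the output toward the style texture, which is the direction the summary associates with semantic erosion, while `tv_weight` penalizes pixel-level noise.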

For deployment, the authors replace iterative optimization with a feed‑forward transformation network trained on specific Anthropocene styles. The inference pipeline runs on a GPU‑enabled Flask server, while a React Native front‑end handles image capture, style selection, and communication via a REST API. The system delivers high‑resolution stylized outputs in 3–5 seconds on typical smartphones, meeting real‑time, low‑latency requirements.
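The request flow described above might look roughly like the following framework-agnostic handler, which a Flask route could delegate to. Everything here is hypothetical: the payload fields (`style`, `image`), the base64 transport, the response shape, and the `StyleModel` callable are illustrative assumptions, not the authors' API.

```python
import base64
from typing import Callable, Dict

# A trained feed-forward style network, abstracted as bytes in, bytes out (assumed).
StyleModel = Callable[[bytes], bytes]


def handle_stylize(payload: dict, models: Dict[str, StyleModel]) -> dict:
    """Validate a stylization request and run the selected model once."""
    style = payload.get("style")
    if style not in models:
        return {"status": 400, "error": f"unknown style: {style!r}"}
    try:
        image_bytes = base64.b64decode(payload.get("image", ""), validate=True)
    except (ValueError, TypeError):
        return {"status": 400, "error": "image must be base64-encoded"}
    if not image_bytes:
        return {"status": 400, "error": "empty image"}
    stylized = models[style](image_bytes)  # single forward pass, no iterative optimization
    return {"status": 200, "image": base64.b64encode(stylized).decode("ascii")}
```

Keeping one pre-trained model per style in a lookup table reflects the feed-forward design: the cost of optimization is paid at training time, so each request needs only a single inference call.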

Overall, the work provides a comprehensive framework that couples domain‑specific NST optimization with practical mobile deployment, turning neural style transfer into an accessible tool for participatory environmental visualization and discourse in the Anthropocene era.
