WheelArm-Sim: A Manipulation and Navigation Combined Multimodal Synthetic Data Generation Simulator for Unified Control in Assistive Robotics

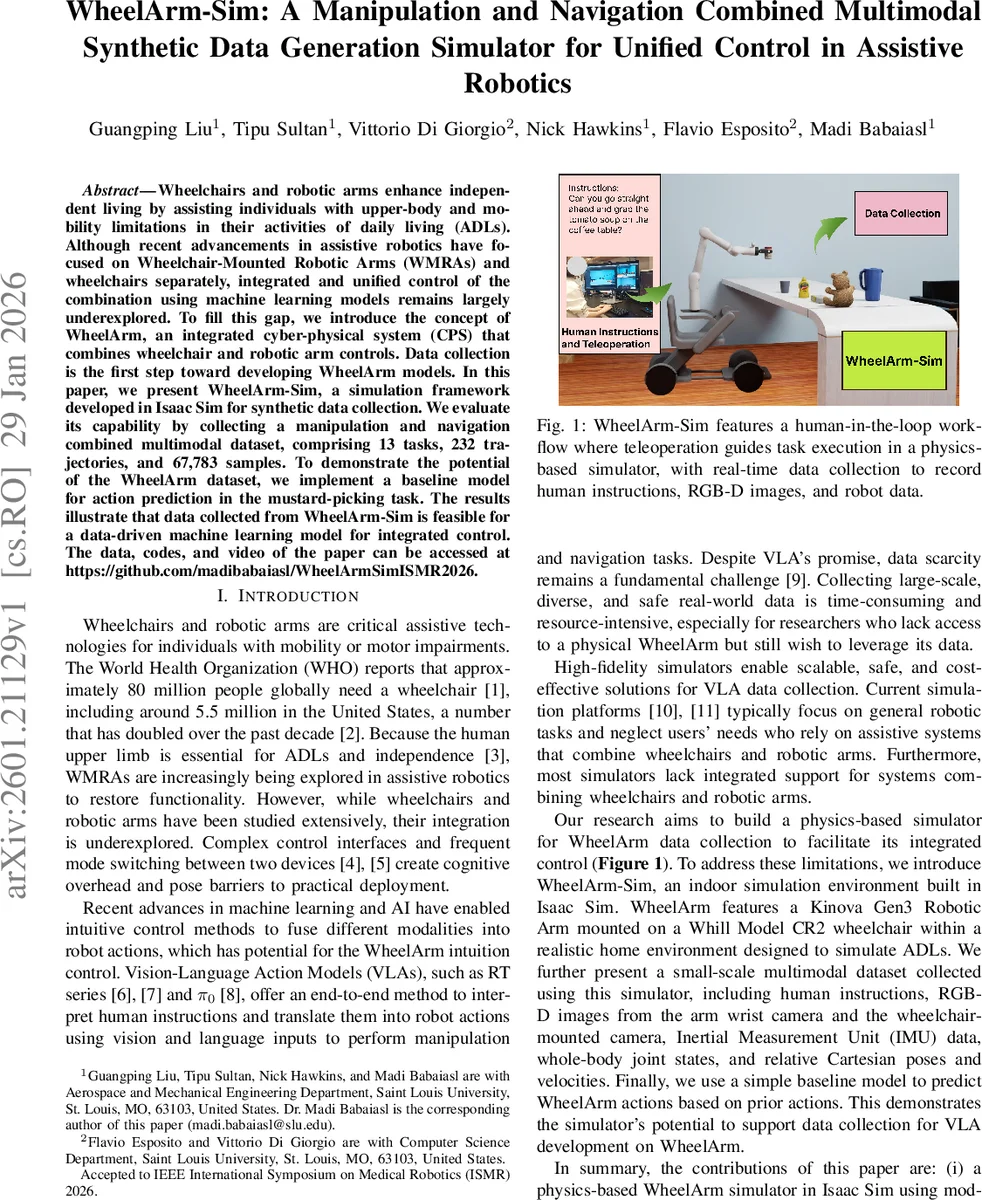

Wheelchairs and robotic arms enhance independent living by assisting individuals with upper-body and mobility limitations in their activities of daily living (ADLs). Although recent advances in assistive robotics have addressed Wheelchair-Mounted Robotic Arms (WMRAs) and wheelchairs separately, integrated and unified control of the combination using machine learning models remains largely underexplored. To fill this gap, we introduce the concept of WheelArm, an integrated cyber-physical system (CPS) that combines wheelchair and robotic arm controls. Data collection is the first step toward developing WheelArm models. In this paper, we present WheelArm-Sim, a simulation framework developed in Isaac Sim for synthetic data collection. We evaluate its capability by collecting a multimodal dataset that combines manipulation and navigation, comprising 13 tasks, 232 trajectories, and 67,783 samples. To demonstrate the potential of the WheelArm dataset, we implement a baseline model for action prediction in the mustard-picking task. The results indicate that data collected with WheelArm-Sim can be used to train a data-driven machine learning model for integrated control.

💡 Research Summary

The paper introduces WheelArm‑Sim, a high‑fidelity simulation framework built on NVIDIA Isaac Sim for generating synthetic multimodal data to enable unified control of a wheelchair‑mounted robotic arm (WheelArm). Recognizing that assistive robotics research has largely treated wheelchairs and robotic arms as separate entities, the authors propose the WheelArm concept—a cyber‑physical system that integrates the control of both devices. To support data‑driven machine‑learning models for such integrated control, they develop a physics‑based indoor environment that includes a Whill CR2 powered wheelchair and a Kinova Gen3 7‑DOF arm, equipped with two RGB‑D cameras (one on the arm wrist, one on the wheelchair), an IMU, and full joint state reporting.

Data collection is performed via a human‑in‑the‑loop teleoperation workflow. Keyboard commands (through ROS2’s teleop_twist_keyboard) drive the wheelchair’s differential‑drive motion and issue target poses for the arm; a custom ROS2 node computes inverse kinematics using a screw‑theory based Newton‑Raphson algorithm to convert end‑effector goals into joint angles. All sensor streams (RGB images, depth images, IMU readings, joint positions and velocities, Cartesian poses) are timestamped, synchronized, and saved in compressed HDF5 files. The authors also provide a GUI within Isaac Sim for labeling files, entering natural‑language instructions, and starting and stopping recordings.
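
The inverse-kinematics step can be illustrated with a minimal Newton-Raphson sketch. This is not the authors' solver: their node works on screw axes for the 7-DOF Kinova arm, whereas the example below assumes a planar two-link arm with unit link lengths and an analytic Jacobian so that the iteration fits in a few lines. The function names, link lengths, tolerance, and initial guess are all illustrative assumptions.

```python
import math


def forward_kinematics(theta1, theta2, l1=1.0, l2=1.0):
    """Return the end-effector (x, y) of a planar two-link arm."""
    x = l1 * math.cos(theta1) + l2 * math.cos(theta1 + theta2)
    y = l1 * math.sin(theta1) + l2 * math.sin(theta1 + theta2)
    return x, y


def newton_raphson_ik(target, guess, l1=1.0, l2=1.0, tol=1e-9, max_iter=50):
    """Iterate theta <- theta + J^-1 * error until the position error is below tol."""
    theta1, theta2 = guess
    for _ in range(max_iter):
        x, y = forward_kinematics(theta1, theta2, l1, l2)
        err_x, err_y = target[0] - x, target[1] - y
        if math.hypot(err_x, err_y) < tol:
            return theta1, theta2

        # Analytic 2x2 Jacobian of (x, y) with respect to (theta1, theta2).
        s1, c1 = math.sin(theta1), math.cos(theta1)
        s12, c12 = math.sin(theta1 + theta2), math.cos(theta1 + theta2)
        j11, j12 = -l1 * s1 - l2 * s12, -l2 * s12
        j21, j22 = l1 * c1 + l2 * c12, l2 * c12

        det = j11 * j22 - j12 * j21
        if abs(det) < 1e-12:
            raise ValueError("Jacobian is singular at the current joint angles")

        # Solve J * delta = error using the closed-form 2x2 inverse.
        theta1 += (j22 * err_x - j12 * err_y) / det
        theta2 += (-j21 * err_x + j11 * err_y) / det
    raise RuntimeError("Newton-Raphson did not converge")


# Hypothetical goal: the pose reached by known joint angles, so a solution exists.
target = forward_kinematics(0.4, 0.9)
solution = newton_raphson_ik(target, guess=(0.3, 1.0))
reached = forward_kinematics(*solution)
print("joint angles:", solution, "reached:", reached)
```

The update rule, joint angles plus the inverse Jacobian applied to the task-space error, carries over to a twist-error formulation; a 7-DOF arm would need a pseudo-inverse instead, because its Jacobian is not square.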

The resulting dataset comprises 13 ADL‑oriented tasks split into two activity categories (Organization and Serve‑Yourself), covering 232 distinct trajectories and a total of 67,783 samples. Tasks include navigation to a target location, picking and placing various objects (knife, cup, bottle, teddy bear), opening a drawer, and selecting food or drink items (mustard, crackers, soup, etc.). Objects are a mix of rigid and deformable items, with physical properties (e.g., Young’s modulus, friction) tuned to emulate realistic grasping behavior.

To demonstrate the utility of the dataset, the authors implement a baseline multimodal sequence model for the mustard‑picking task. The architecture encodes RGB images with a pre‑trained ResNet‑18 (minus the final fully‑connected layer), depth images with a shallow CNN, textual instructions with a Word2Vec embedding fine‑tuned during training, and joint/pose data with a linear network. Features are fused via a multilayer perceptron (output dimension 128) and fed into a single‑layer LSTM (hidden size 128) that predicts the next robot pose (Cartesian position, quaternion orientation, and gripper angle). Training uses Adam (lr = 1e‑4, weight decay = 1e‑4), MSE loss, batch size 16, and early stopping. On a split of 20 training/validation experiments and 4 test runs, the model achieves low prediction error, indicating that synthetic data from WheelArm‑Sim can support learning of integrated control policies.

The paper’s contributions are fourfold: (i) a physics‑based WheelArm simulator integrating wheelchair and robotic arm dynamics; (ii) a ROS2‑based teleoperation and data‑logging pipeline with a user‑friendly GUI; (iii) a multimodal dataset that combines navigation and manipulation for assistive tasks; and (iv) an empirical baseline showing the feasibility of data‑driven unified control. Limitations include the absence of a human avatar, which rules out assistive hand‑over and feeding tasks, and the simulation‑to‑real gap, which the authors acknowledge and leave to future work. Prospective directions involve coupling the simulator with real WheelArm hardware, expanding the language‑action repertoire, applying reinforcement learning for policy optimization, and developing personalized, low‑cognitive‑load interfaces for end‑users.
