PathWise: Planning through World Model for Automated Heuristic Design via Self-Evolving LLMs

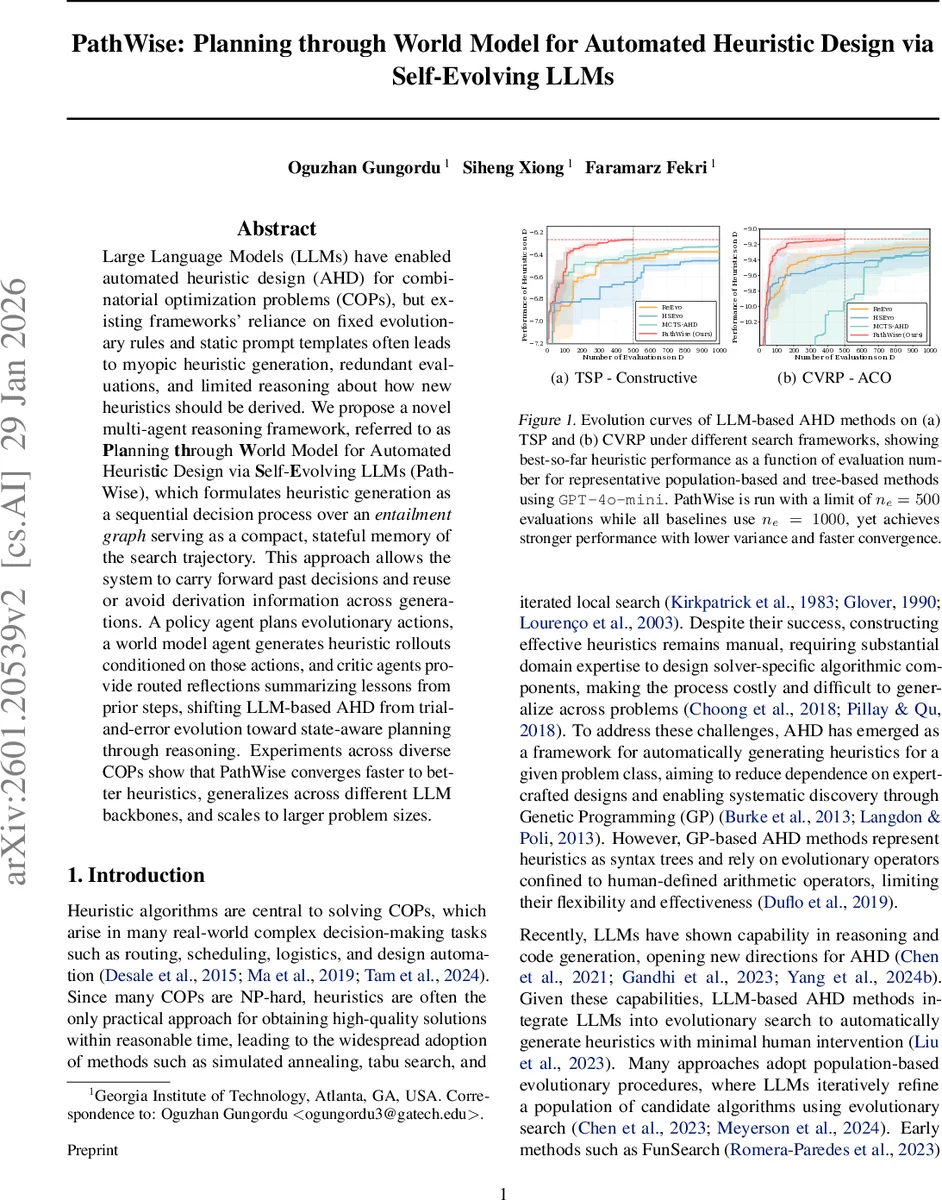

Large Language Models (LLMs) have enabled automated heuristic design (AHD) for combinatorial optimization problems (COPs), but existing frameworks’ reliance on fixed evolutionary rules and static prompt templates often leads to myopic heuristic generation, redundant evaluations, and limited reasoning about how new heuristics should be derived. We propose a novel multi-agent reasoning framework, referred to as Planning through World Model for Automated Heuristic Design via Self-Evolving LLMs (PathWise), which formulates heuristic generation as a sequential decision process over an entailment graph serving as a compact, stateful memory of the search trajectory. This approach allows the system to carry forward past decisions and reuse or avoid derivation information across generations. A policy agent plans evolutionary actions, a world model agent generates heuristic rollouts conditioned on those actions, and critic agents provide routed reflections summarizing lessons from prior steps, shifting LLM-based AHD from trial-and-error evolution toward state-aware planning through reasoning. Experiments across diverse COPs show that PathWise converges faster to better heuristics, generalizes across different LLM backbones, and scales to larger problem sizes.

💡 Research Summary

The paper addresses the growing interest in using large language models (LLMs) to automate the design of heuristics for combinatorial optimization problems (COPs). Existing LLM‑based Automated Heuristic Design (AHD) approaches typically rely on fixed evolutionary operators, static prompt templates, and either population‑based or tree‑based search structures. These designs treat each heuristic generation step as an isolated trial‑and‑error event, leading to redundant evaluations, limited reasoning about why a particular transformation succeeded, and inefficient use of costly LLM calls.

To overcome these limitations, the authors propose PathWise, a multi‑agent reasoning framework that treats heuristic discovery as a sequential decision‑making process over an entailment graph. The entailment graph is a compact, stateful memory that records every generated heuristic as a node containing (i) the executable code, (ii) a natural‑language derivation rationale (κ), (iii) an algorithmic description, (iv) its performance on a validation set, and (v) compressed parent metadata. Directed edges encode how a child heuristic is derived from a selected parent set under a specific rationale, thereby preserving the logical lineage of all transformations.

PathWise operates on two timescales. An outer loop maintains a population of root nodes (size Nₚ) that serve as the current “frontier.” Within each outer iteration, an inner loop incrementally expands the entailment graph by performing entailment steps: a Policy Agent (πₚ) observes the current graph and, guided by feedback from a Policy Critic, samples Nₐ candidate actions. Each action consists of a parent set S and a natural‑language directive κ that specifies how the selected heuristics should be modified or combined. This high‑level planning replaces rigid operator sets with flexible, language‑driven transformations, allowing the system to invent new operators on the fly.

For each candidate action, a World Model Agent (π_wm) generates N_w code rollouts conditioned on the selected parents, the directive κ, and feedback from a World Model Critic. The rollouts are evaluated on a problem instance distribution D, and the best‑performing code is inserted into the graph as a new node. The graph is then updated: the new node is added, the used parents are pruned (except for the global best node), and the state remains compact. This update rule balances exploration (new derivations), exploitation (retaining the best heuristic), and complexity control.

A key innovation is the diversity mechanism. When the policy and world model begin to produce homogeneous outputs, the critics deliberately perturb the prompt templates (Prompt Perturbation) and adjust sampling temperatures to force exploration of new regions of the search space. This prevents premature convergence and encourages the discovery of novel heuristic structures.

The authors evaluate PathWise on a suite of COPs—including the Traveling Salesman Problem (TSP), Capacitated Vehicle Routing Problem (CVRP), and various scheduling and batch‑processing tasks—using multiple LLM backbones (GPT‑4o‑mini, GPT‑4‑Turbo, etc.). With an evaluation budget of 500 calls (half the 1000 calls used by baselines), PathWise consistently achieves higher expected negative cost (i.e., better solution quality) than state‑of‑the‑art population‑based methods (FunSearch, HSEvo) and tree‑based MCTS‑AHD. Convergence is 1.8–2.3× faster, and performance gains range from 5 % to 12 % across problem types. Moreover, when scaling to larger instances (500–1000 nodes), the graph‑based memory dramatically reduces redundant evaluations, enabling the framework to maintain its advantage.

In summary, PathWise introduces four major contributions: (1) a hybrid graph‑population formulation that encodes derivation rationale and parent performance as a shared state; (2) a coordinated multi‑agent system (policy, world model, and critics) that enables self‑evolving, state‑aware heuristic generation; (3) prompt‑level diversity techniques that expand exploration without altering the underlying graph topology; and (4) extensive empirical validation demonstrating faster convergence, higher solution quality, and better scalability compared to existing LLM‑based AHD approaches. By reframing heuristic design as a planning problem over a structured memory, PathWise significantly advances the efficiency and generality of automated heuristic synthesis.

Comments & Academic Discussion

Loading comments...

Leave a Comment