MobileBench-OL: A Comprehensive Chinese Benchmark for Evaluating Mobile GUI Agents in Real-World Environment

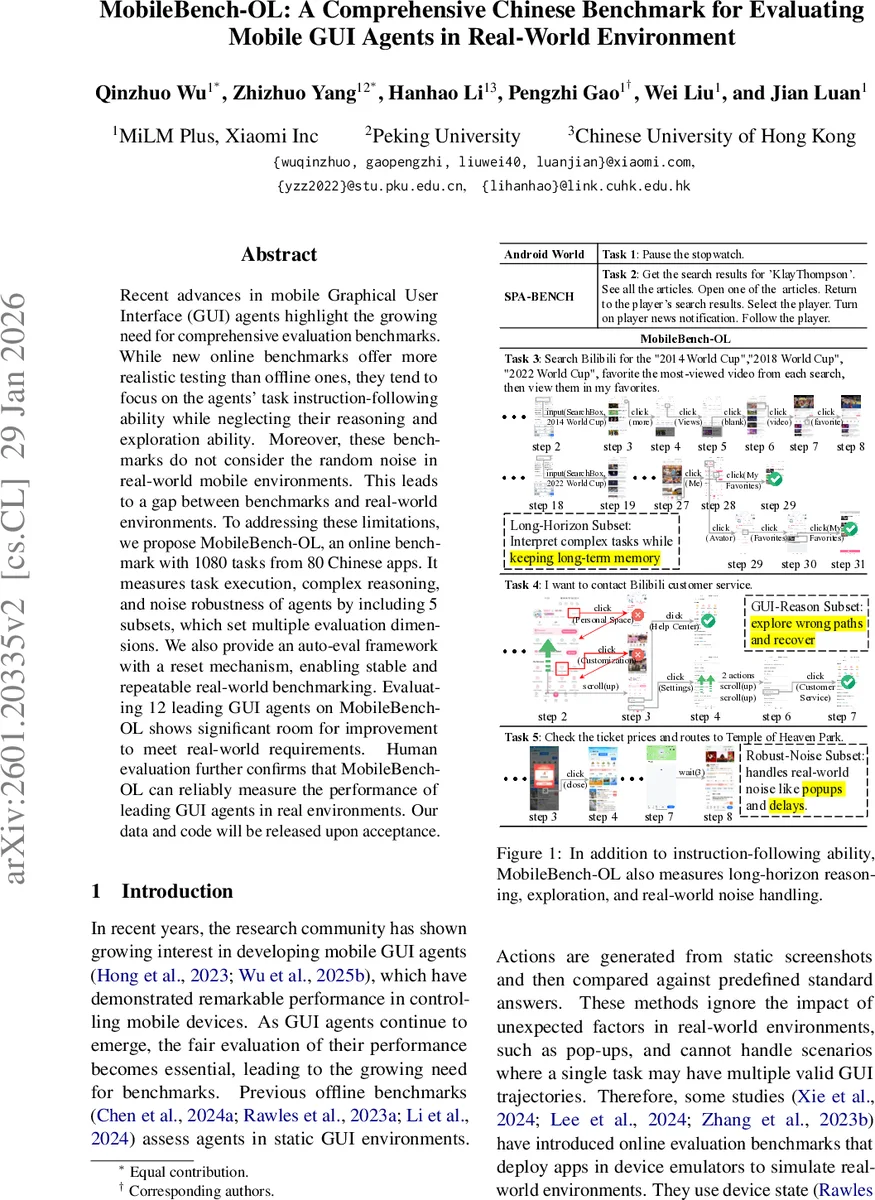

Recent advances in mobile Graphical User Interface (GUI) agents highlight the growing need for comprehensive evaluation benchmarks. While new online benchmarks offer more realistic testing than offline ones, they tend to focus on the agents’ task instruction-following ability while neglecting their reasoning and exploration ability. Moreover, these benchmarks do not consider the random noise in real-world mobile environments. This leads to a gap between benchmarks and real-world environments. To addressing these limitations, we propose MobileBench-OL, an online benchmark with 1080 tasks from 80 Chinese apps. It measures task execution, complex reasoning, and noise robustness of agents by including 5 subsets, which set multiple evaluation dimensions. We also provide an auto-eval framework with a reset mechanism, enabling stable and repeatable real-world benchmarking. Evaluating 12 leading GUI agents on MobileBench-OL shows significant room for improvement to meet real-world requirements. Human evaluation further confirms that MobileBench-OL can reliably measure the performance of leading GUI agents in real environments. Our data and code will be released upon acceptance.

💡 Research Summary

Mobile GUI agents have shown great promise for automating smartphone interactions, yet evaluating their real‑world capabilities remains challenging. Existing offline benchmarks rely on static screenshots and predefined trajectories, which fail to capture the dynamic nature of mobile interfaces. Recent online benchmarks improve realism by deploying apps on emulators, but they still focus mainly on simple instruction‑following tasks, neglecting complex reasoning, autonomous exploration, and robustness to environmental noise such as pop‑ups, delays, or failed executions.

To bridge this gap, the authors introduce MobileBench‑OL, a large‑scale online benchmark that comprises 1080 tasks drawn from 80 Chinese mobile applications. The benchmark is organized into five subsets, each targeting a distinct evaluation dimension:

- Base – a collection of popular apps covering a wide range of functional points, assessing basic interaction skills.

- Long‑Tail – less common apps (68 in total) that test the agent’s ability to generalize to unfamiliar UI designs.

- Long‑Horizon – tasks requiring ≥20 steps, measuring the agent’s capacity to maintain a high‑level goal, decompose it into subtasks, and keep track of progress over extended sequences.

- GUI‑Reasoning – open‑ended tasks that demand active exploration, including icon understanding, hidden‑function discovery, and hierarchical navigation. Difficulty is quantified by an exploration‑weight scheme (0.5 for icons, 1 for hidden functions, 2 for hierarchical navigation).

- Noise‑Robust – tasks where four types of artificial noise are injected with a 20 % probability at each step: Repeat, Unexecuted, Delay, and Pop‑up. These simulate real‑world disturbances such as accidental double‑clicks, network latency, or unexpected dialogs.

Task difficulty is defined in two ways. For the first, third, fourth, and fifth subsets, the number of “golden steps” (the minimal number of UI pages a human needs) determines Easy (<8 steps), Medium (8‑19 steps), and Hard (≥20 steps). For GUI‑Reasoning, the sum of exploration weights determines difficulty levels. This dual definition allows multiple valid trajectories for the same goal, reflecting realistic user behavior.

The data construction pipeline follows six stages: (1) app selection, (2) task authoring, (3) golden trajectory definition, (4) success‑condition specification, (5) trajectory sampling, and (6) Auto‑Eval and Reset mechanisms. The Auto‑Eval framework automatically compares the agent’s actions against UI element metadata extracted from real device screenshots, eliminating the need for manual labeling. The Reset mechanism restores the device to its initial state after each task, guaranteeing stability and repeatability across runs.

Experiments evaluate twelve state‑of‑the‑art GUI agents (e.g., AITW, AITZ, AndroidControl, MobileAgentBench, etc.) on MobileBench‑OL. Overall success rates hover around 30 %, with particularly low performance on the Noise‑Robust (≈10 %) and GUI‑Reasoning (≈15 %) subsets. Long‑Horizon tasks also see sub‑20 % success, indicating that current agents struggle with maintaining long‑term plans. Human evaluators were asked to judge a random sample of agent runs; their judgments correlate strongly (ρ = 0.87) with the automatic scores, confirming the reliability of the Auto‑Eval pipeline.

The paper’s contributions are fourfold:

- A comprehensive real‑world benchmark covering a broad spectrum of apps and tasks, including long‑tail and high‑memory applications that require login.

- Multi‑dimensional evaluation via five carefully designed subsets that test basic interaction, long‑term reasoning, autonomous exploration, and robustness to stochastic noise.

- An automated evaluation framework with a reset mechanism that ensures reproducible experiments on physical devices.

- Empirical insights revealing substantial gaps between current agent capabilities and the demands of real‑world mobile usage.

The authors acknowledge limitations such as the current focus on Chinese apps and the synthetic nature of noise injection. Future work will expand the benchmark to other languages and regions, and will derive noise patterns from real user logs to further narrow the simulation‑reality gap. Overall, MobileBench‑OL sets a new standard for evaluating mobile GUI agents in realistic, noisy environments and provides a valuable testbed for guiding the next generation of more robust, reasoning‑capable agents.

Comments & Academic Discussion

Loading comments...

Leave a Comment