GR3EN: Generative Relighting for 3D Environments

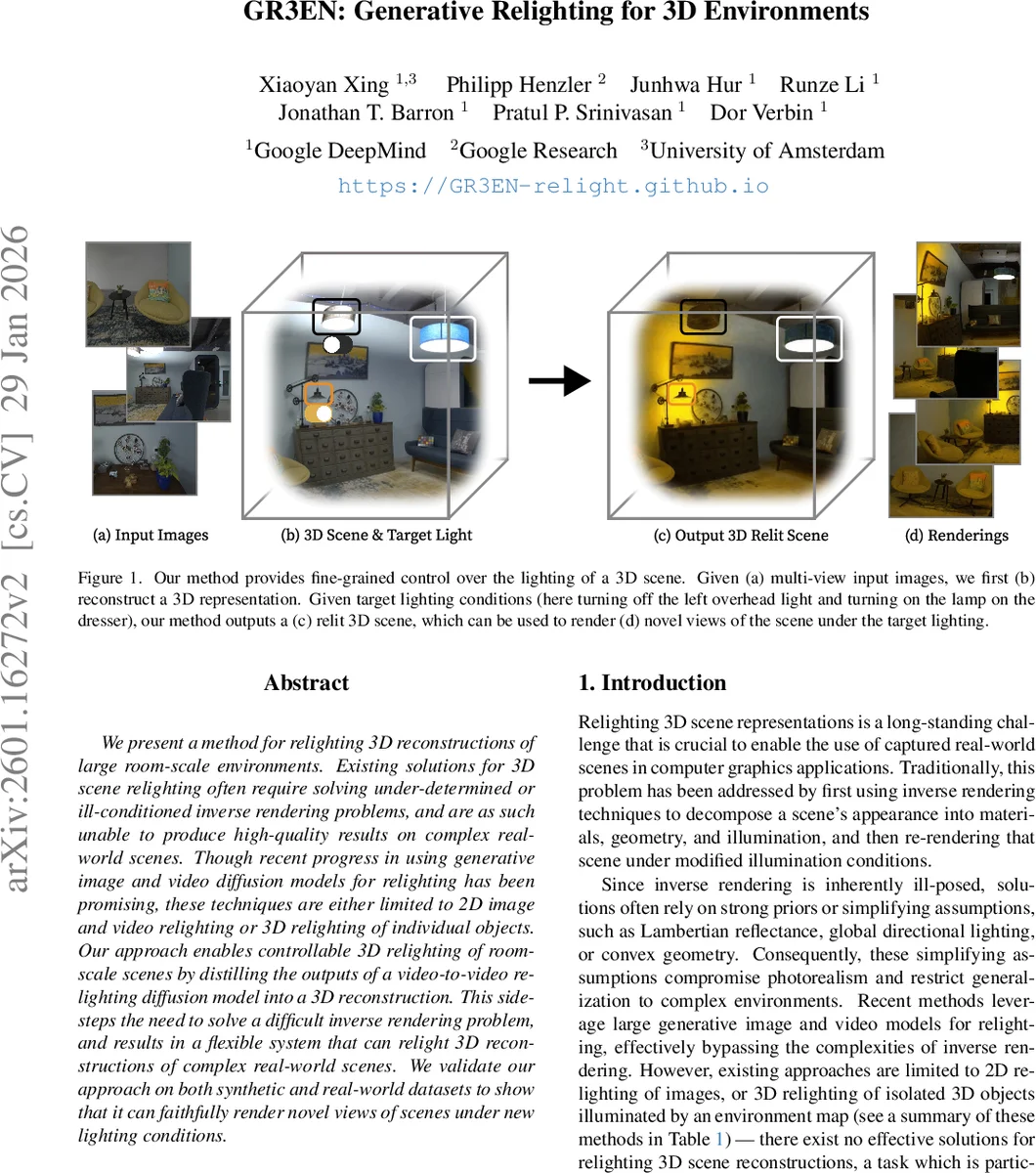

We present a method for relighting 3D reconstructions of large room-scale environments. Existing solutions for 3D scene relighting often require solving under-determined or ill-conditioned inverse rendering problems, and are as such unable to produce high-quality results on complex real-world scenes. Though recent progress in using generative image and video diffusion models for relighting has been promising, these techniques are either limited to 2D image and video relighting or 3D relighting of individual objects. Our approach enables controllable 3D relighting of room-scale scenes by distilling the outputs of a video-to-video relighting diffusion model into a 3D reconstruction. This side-steps the need to solve a difficult inverse rendering problem, and results in a flexible system that can relight 3D reconstructions of complex real-world scenes. We validate our approach on both synthetic and real-world datasets to show that it can faithfully render novel views of scenes under new lighting conditions.

💡 Research Summary

GR3EN (Generative Relighting for 3D Environments) introduces a novel pipeline that enables controllable relighting of room‑scale 3D reconstructions without solving the ill‑posed inverse‑rendering problem. Traditional 3D relighting methods rely on decomposing a scene into geometry, material, and illumination, which forces strong priors (Lambertian reflectance, simple directional lighting, convex geometry) and limits photorealism in complex indoor spaces. Recent diffusion‑based 2D image or video relighting approaches bypass this decomposition but cannot produce a consistent 3D representation suitable for novel view synthesis.

The proposed system bridges this gap in three stages. First, a high‑quality 3D representation of the scene is built from multi‑view photographs using state‑of‑the‑art neural radiance fields (NeRF) or 3D Gaussian splatting. Second, the reconstructed scene is rendered into a video along a wide‑field fisheye camera trajectory. This video, together with a “lighting conditioning video” that encodes per‑pixel target light color and intensity (RGB values, with (‑1,‑1,‑1) marking unchanged pixels) and a scalar triplet for external sunlight, is fed into a fine‑tuned video‑to‑video diffusion model. The base model is the WAN 2.2 TI2V‑5B architecture; the authors adapt it for relighting by concatenating the input, conditioning, and output latents along the temporal dimension and applying rotary positional encodings, which proved superior to channel‑wise or simple temporal concatenation. The diffusion model learns to keep the scene content (geometry, texture, camera motion) intact while altering illumination according to the conditioning. Training uses a heavily biased noise schedule that emphasizes high‑noise timesteps, encouraging the network to first capture global illumination consistency before refining high‑frequency details.

Third, the relit video is “distilled” back into the 3D representation. The authors fine‑tune the original NeRF (specifically Zip‑NeRF for its high fidelity) so that its rendered output matches the relit video frames. Because the video already contains the desired lighting, the distillation step does not require explicit material or normal supervision; it simply aligns the radiance field’s output colors with the diffusion‑generated frames. The resulting relit 3D model can then be rendered from arbitrary viewpoints, supporting novel view synthesis under user‑specified lighting.

To train the diffusion relighting model, the authors construct a large synthetic dataset using Infinigen. They render 300 indoor scenes under 30 distinct lighting setups, producing 108 000 videos (81 frames each, 720×1280 resolution). For each camera pose they generate “one‑light‑at‑a‑time” (OLAT) images, where each light source is isolated. Target lighting conditions are synthesized on‑the‑fly by linearly combining these OLAT images with random per‑light intensities and colors (including black‑body temperature sampling). This strategy provides dense supervision for arbitrary lighting while keeping the training pipeline fully differentiable.

Quantitative evaluations on synthetic benchmarks show that GR3EN outperforms prior 2D diffusion relighting (e.g., LightLab, UniRelight) and object‑level 3D relighting methods (Neural Gaffer, IllumNeRF) in PSNR, SSIM, and LPIPS. Qualitative results on real captured rooms demonstrate realistic shadows, inter‑reflections, and color shifts when turning individual lamps on/off, changing lamp hues, or removing sunlight through windows. Importantly, the relit scenes remain temporally coherent across novel viewpoints, a problem that plagues methods that relight each view independently.

Limitations include the need to render a video for each relighting request, which constrains real‑time interactivity, and the reliance on a fisheye trajectory to cover the scene due to the diffusion model’s limited frame capacity. Future work could explore hierarchical or streaming diffusion models to enable on‑the‑fly relighting of arbitrary camera paths and integrate user‑friendly UI for interactive light editing.

In summary, GR3EN presents a breakthrough approach that sidesteps inverse rendering by leveraging a video diffusion model for illumination transformation and then re‑injecting the result into a neural 3D representation. This enables high‑fidelity, controllable relighting of entire indoor environments and opens new possibilities for VR/AR content creation, interior design visualization, and photorealistic simulation where lighting flexibility is essential.

Comments & Academic Discussion

Loading comments...

Leave a Comment