RAVE: Rate-Adaptive Visual Encoding for 3D Gaussian Splatting

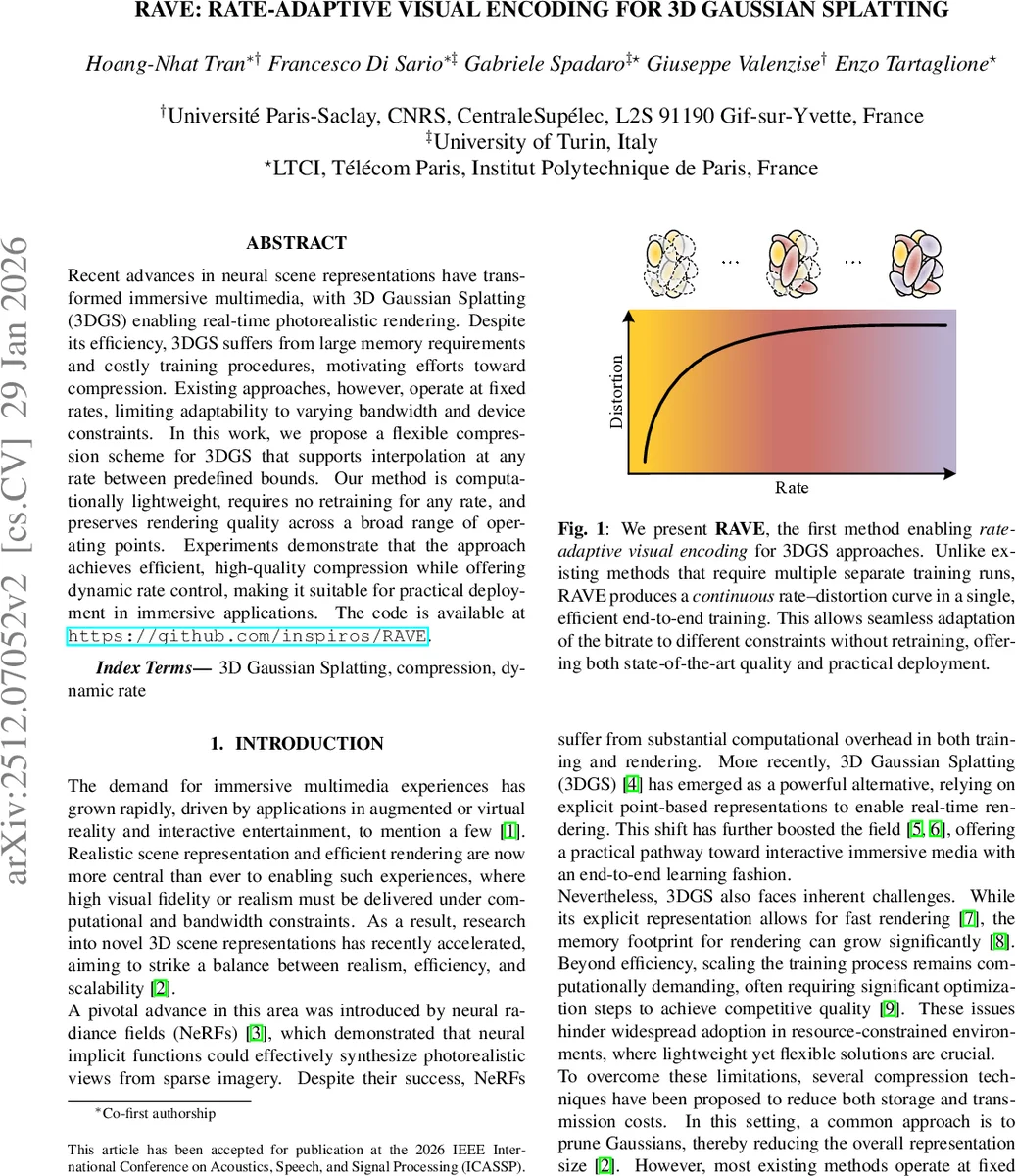

Recent advances in neural scene representations have transformed immersive multimedia, with 3D Gaussian Splatting (3DGS) enabling real-time photorealistic rendering. Despite its efficiency, 3DGS suffers from large memory requirements and costly training procedures, motivating efforts toward compression. Existing approaches, however, operate at fixed rates, limiting adaptability to varying bandwidth and device constraints. In this work, we propose a flexible compression scheme for 3DGS that supports interpolation at any rate between predefined bounds. Our method is computationally lightweight, requires no retraining for any rate, and preserves rendering quality across a broad range of operating points. Experiments demonstrate that the approach achieves efficient, high-quality compression while offering dynamic rate control, making it suitable for practical deployment in immersive applications. The code is available at https://github.com/inspiros/RAVE.

💡 Research Summary

The paper introduces RAVE (Rate‑Adaptive Visual Encoding), a novel compression framework for 3D Gaussian Splatting (3DGS) that enables continuous, on‑the‑fly adjustment of the bitrate without requiring additional training. 3DGS has become a leading method for real‑time photorealistic view synthesis, representing a scene as a collection of volumetric Gaussians each carrying position, covariance, opacity and spherical‑harmonics (SH) coefficients. While this explicit representation yields millisecond‑level rendering, it also incurs a large memory footprint (often millions of Gaussians) and a costly training pipeline. Existing compression approaches mitigate these issues by pruning or quantizing Gaussians, but they operate at a fixed compression rate; changing the bitrate forces a full retraining of the model, which is impractical for dynamic scenarios such as streaming to mobile or AR/VR devices with fluctuating bandwidth.
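To make the memory-footprint claim concrete, here is a rough back-of-the-envelope sketch. It assumes the parameter layout of the reference 3DGS implementation (covariance stored as a 3-component scale plus a 4-component rotation quaternion, SH degree 3, 32-bit floats). The summary states none of these details, and the `GaussianLayout` class is purely illustrative.

```python
from dataclasses import dataclass

FLOAT32_BYTES = 4  # assumption: uncompressed parameters stored as 32-bit floats


@dataclass(frozen=True)
class GaussianLayout:
    """Per-Gaussian float counts (assumed: reference 3DGS layout, SH degree 3)."""
    position: int = 3    # xyz mean
    scale: int = 3       # covariance factor: per-axis scale
    rotation: int = 4    # covariance factor: unit quaternion
    opacity: int = 1
    sh_coeffs: int = 48  # 16 SH basis functions x 3 color channels

    def floats_per_gaussian(self) -> int:
        return (self.position + self.scale + self.rotation
                + self.opacity + self.sh_coeffs)

    def bytes_for(self, num_gaussians: int) -> int:
        return num_gaussians * self.floats_per_gaussian() * FLOAT32_BYTES


layout = GaussianLayout()
print(layout.floats_per_gaussian())       # 59
print(layout.bytes_for(1_000_000) / 1e6)  # 236.0 (MB for one million Gaussians)
```

Under these assumptions a scene with a few million Gaussians occupies several hundred megabytes before any compression, which is the footprint that pruning and quantization aim to reduce.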

RAVE tackles this limitation by defining a small set of anchor points ($L \approx 4$ to $6$) along the rate‑distortion curve. Each anchor $l$ corresponds to a Gaussian subset $G_l$ that represents the scene at a particular bitrate. The key technical contribution is a gradient‑based importance score computed once for each anchor’s local context $C_{l+1}$. Specifically, after a standard 3DGS training run, the authors perform a single forward‑backward pass over the entire training image set and compute the magnitude of the loss gradient with respect to each Gaussian’s parameters $\theta_i$.
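The gradient-magnitude scoring described above can be sketched as follows. This is a minimal illustration, not the authors' implementation. It assumes that per-image gradients are summed over the training set before an L2 norm is taken, and that a subset is formed by keeping the top-scoring fraction of Gaussians. The summary confirms neither detail, and the function names `importance_scores` and `select_subset` are hypothetical.

```python
import math
from typing import List, Sequence


def importance_scores(
    per_image_grads: Sequence[Sequence[Sequence[float]]],
) -> List[float]:
    """Score each Gaussian by the norm of its accumulated loss gradient.

    per_image_grads[k][i] is dLoss/dtheta_i for training image k
    (hypothetical input; a real pipeline would read it from autograd).
    Assumption: gradients are summed over images, then the L2 norm is taken.
    """
    num_gaussians = len(per_image_grads[0])
    scores = []
    for i in range(num_gaussians):
        dim = len(per_image_grads[0][i])
        total = [0.0] * dim
        for grads in per_image_grads:
            for d in range(dim):
                total[d] += grads[i][d]
        scores.append(math.sqrt(sum(g * g for g in total)))
    return scores


def select_subset(scores: Sequence[float], keep_fraction: float) -> List[int]:
    """Indices of the highest-scoring Gaussians (assumed top-k rule, at least 1)."""
    k = max(1, round(keep_fraction * len(scores)))
    ranked = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    return sorted(ranked[:k])


# Toy data: 2 training images, 3 Gaussians, 2 parameters per Gaussian.
grads = [
    [[3.0, 0.0], [0.0, 0.0], [1.0, 0.0]],  # image 0
    [[0.0, 4.0], [0.0, 0.0], [1.0, 0.0]],  # image 1
]
scores = importance_scores(grads)
print(scores)                      # [5.0, 0.0, 2.0]
print(select_subset(scores, 0.7))  # [0, 2]
```

Because the scores are computed once after training, choosing a different `keep_fraction` needs only a re-ranking rather than further optimization, in line with the claim that no retraining is required when the rate changes.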
