CASTELLA: Long Audio Dataset with Captions and Temporal Boundaries

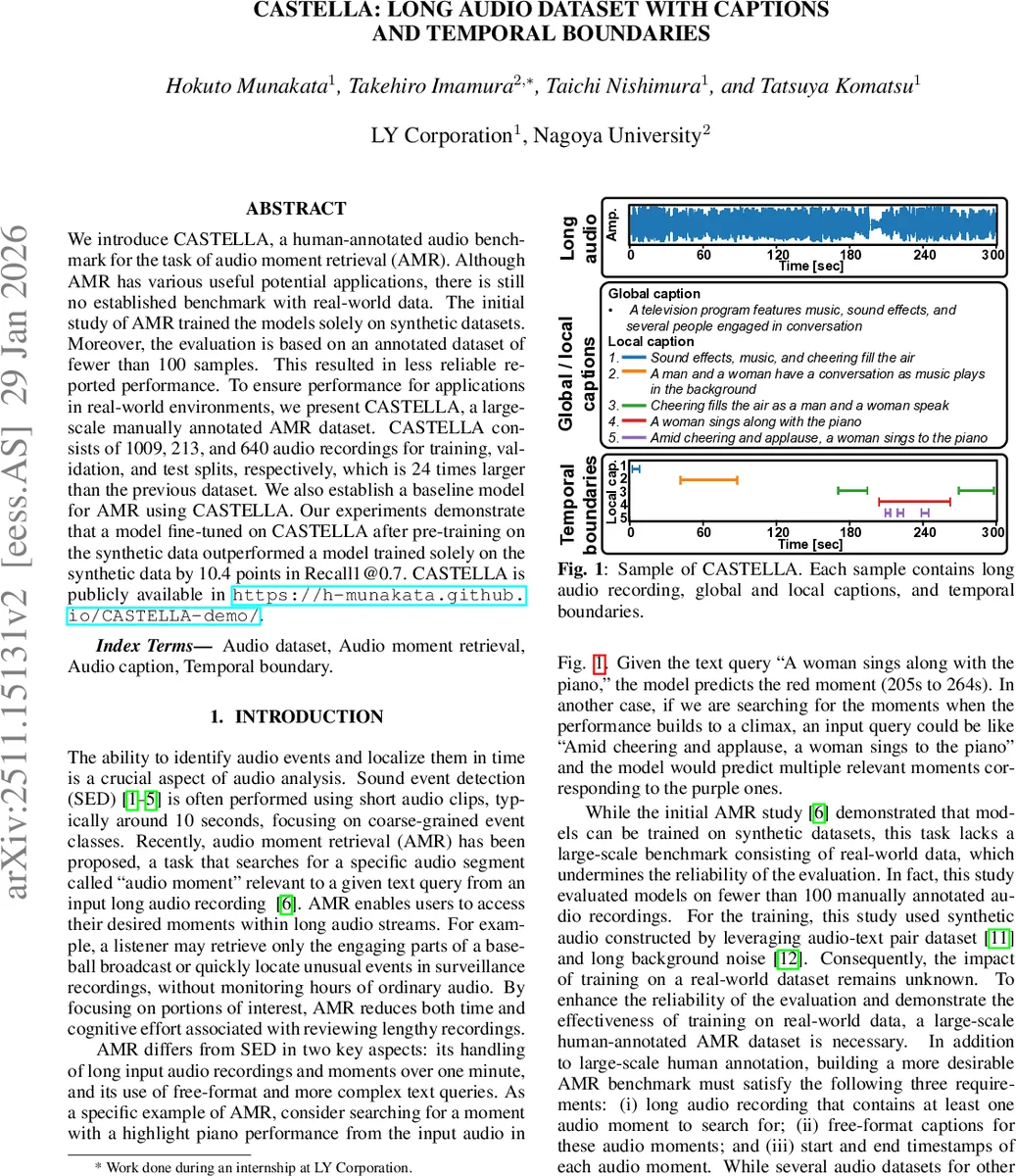

We introduce CASTELLA, a human-annotated audio benchmark for the task of audio moment retrieval (AMR). Although AMR has various useful potential applications, there is still no established benchmark with real-world data. The initial study of AMR trained the models solely on synthetic datasets. Moreover, the evaluation is based on an annotated dataset of fewer than 100 samples. This resulted in less reliable reported performance. To ensure performance for applications in real-world environments, we present CASTELLA, a large-scale manually annotated AMR dataset. CASTELLA consists of 1009, 213, and 640 audio recordings for training, validation, and test splits, respectively, which is 24 times larger than the previous dataset. We also establish a baseline model for AMR using CASTELLA. Our experiments demonstrate that a model fine-tuned on CASTELLA after pre-training on the synthetic data outperformed a model trained solely on the synthetic data by 10.4 points in Recall1@0.7. CASTELLA is publicly available in https://h-munakata.github.io/CASTELLA-demo/.

💡 Research Summary

The paper addresses a critical gap in the audio moment retrieval (AMR) field: the lack of a large‑scale, real‑world benchmark. Existing AMR work has relied on synthetic datasets and evaluated on fewer than one hundred manually annotated recordings, limiting the reliability of reported results. To remedy this, the authors introduce CASTELLA, a human‑annotated dataset specifically designed for AMR. CASTELLA comprises 1,862 long audio recordings (each 1–5 minutes long) collected from YouTube, together with two types of free‑form captions: a global caption summarizing the entire clip and multiple local captions describing salient moments. Each local caption is paired with start and end timestamps at a one‑second resolution, yielding a total of 11,308 temporal boundaries. The dataset is split into 1,009 training, 213 validation, and 640 test recordings, amounting to over 120 hours of audio. Compared with prior resources (AudioSet, MAESTRO Real, AudioCaps, TAcos, Clotho‑Moment, UnAV‑100), CASTELLA uniquely satisfies three essential AMR requirements: long audio, free‑format textual descriptions, and precise temporal boundaries, and it is 24 × larger than the previous manually annotated AMR set.

The annotation pipeline used crowd‑sourcing. Annotators first watched the associated videos to locate up to five salient moments, then described each moment in Japanese without relying on visual cues, and finally produced a global summary. Captions were translated into English by professional translators aided by GPT‑4 and a Japanese LLM, ensuring high‑quality bilingual data. A second reviewer verified each entry, and the authors performed an additional audio‑only audit to remove captions that could not be confirmed from sound alone. This rigorous process resulted in an average of 2.1 local captions per recording, each with about 2.9 timestamps; the vocabulary size is roughly 1,762 words, with average lengths of 7.8 words (local) and 13.7 words (global).

For baseline evaluation, the authors adopted the AM‑DETR architecture from prior AMR work, which combines a CLAP audio‑text encoder (MS‑CLAP) with Detection Transformer (DETR) heads. They tested three DETR‑based variants—QD‑DETR, Moment‑DETR, and UVCOM—using the same hyper‑parameters as the original papers. Models were first pre‑trained on the synthetic Clotho‑Moment dataset and then fine‑tuned on CASTELLA. Evaluation metrics included Recall@0.5, Recall@0.7 (which measures whether the most confident predicted moment overlaps the ground truth above a given IoU), and mean average precision (mAP) at multiple IoU thresholds.

Results show that fine‑tuning on CASTELLA after synthetic pre‑training yields a substantial gain: Recall@0.7 reaches 16.2 %, outperforming a model trained solely on synthetic data by 10.4 % and a model trained only on CASTELLA by 6.5 %. Among architectures, UVCOM achieves the highest Recall@0.7 (20.3 %), confirming that advances in video moment retrieval transfer well to audio. However, performance degrades sharply for short moments (<10 seconds), mirroring findings in video retrieval and highlighting a key challenge for future research.

The authors conclude that CASTELLA provides a robust, publicly available benchmark that enables more realistic assessment of AMR systems. Their experiments demonstrate that a two‑stage training strategy—synthetic pre‑training followed by real‑world fine‑tuning—is effective. They also outline future directions, such as simultaneous localization and captioning, multimodal alignment with video, and real‑time applications like automatic highlight extraction in sports broadcasts. By releasing the dataset and training recipes, the paper aims to catalyze further advances in audio‑centric retrieval and broader multimodal AI research.

Comments & Academic Discussion

Loading comments...

Leave a Comment