ViSurf: Visual Supervised-and-Reinforcement Fine-Tuning for Large Vision-and-Language Models

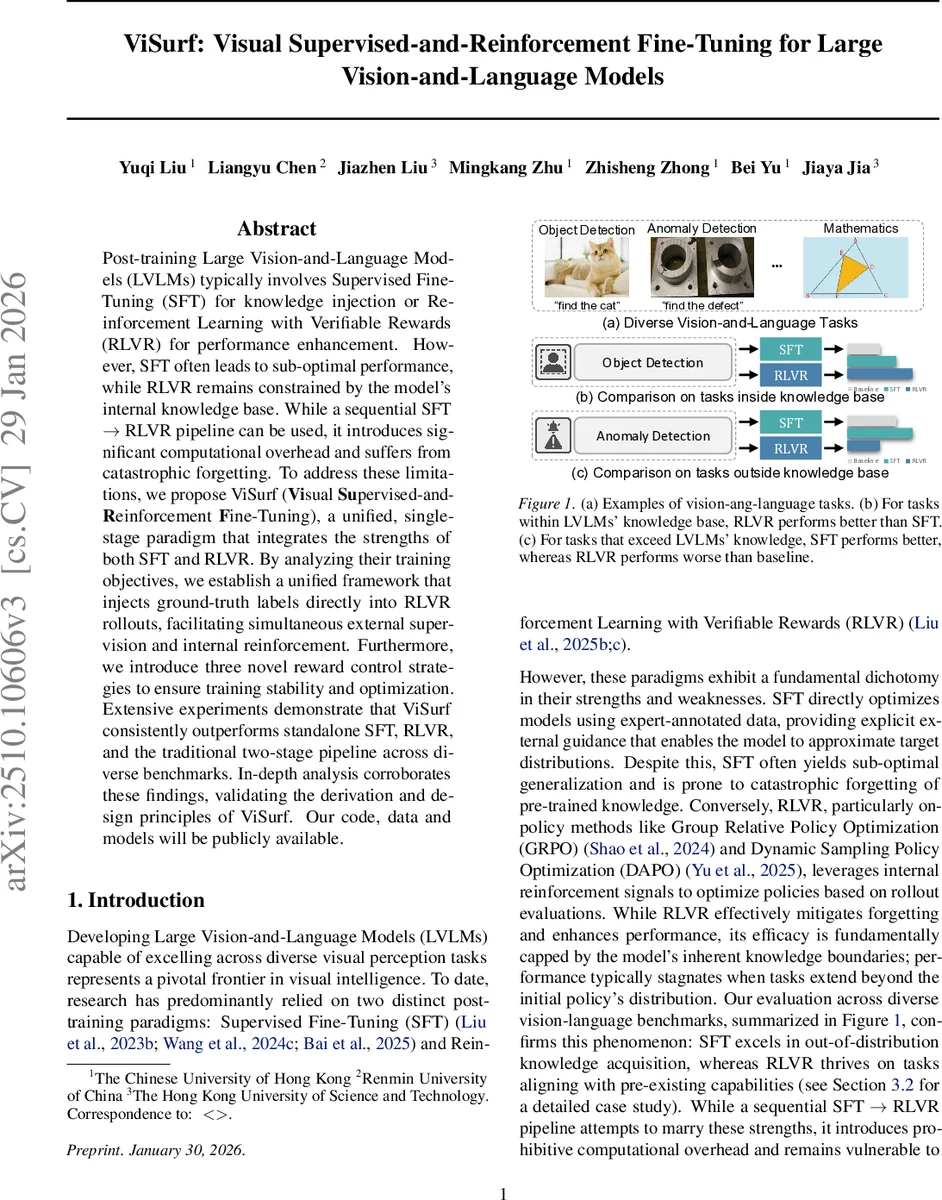

Post-training Large Vision-and-Language Models (LVLMs) typically involves Supervised Fine-Tuning (SFT) for knowledge injection or Reinforcement Learning with Verifiable Rewards (RLVR) for performance enhancement. However, SFT often leads to sub-optimal performance, while RLVR remains constrained by the model’s internal knowledge base. While a sequential SFT $\rightarrow$ RLVR pipeline can be used, it introduces significant computational overhead and suffers from catastrophic forgetting. To address these limitations, we propose ViSurf (\textbf{Vi}sual \textbf{Su}pervised-and-\textbf{R}einforcement \textbf{F}ine-Tuning), a unified, single-stage paradigm that integrates the strengths of both SFT and RLVR. By analyzing their training objectives, we establish a unified framework that injects ground-truth labels directly into RLVR rollouts, facilitating simultaneous external supervision and internal reinforcement. Furthermore, we introduce three novel reward control strategies to ensure training stability and optimization. Extensive experiments demonstrate that ViSurf consistently outperforms standalone SFT, RLVR, and the traditional two-stage pipeline across diverse benchmarks. In-depth analysis corroborates these findings, validating the derivation and design principles of ViSurf.

💡 Research Summary

ViSurf introduces a unified, single‑stage fine‑tuning paradigm that simultaneously leverages supervised fine‑tuning (SFT) and reinforcement learning with verifiable rewards (RLVR) for large vision‑and‑language models (LVLMs). The authors begin by highlighting the complementary strengths and inherent weaknesses of the two dominant post‑training approaches. SFT excels at injecting external, expert‑annotated knowledge but often suffers from sub‑optimal generalization and catastrophic forgetting of pre‑trained capabilities. RLVR, on the other hand, uses on‑policy rollouts evaluated by a verification function to reinforce policies, preserving existing knowledge and improving performance on tasks that lie within the model’s original knowledge base. However, RLVR’s performance plateaus when tasks extend beyond that knowledge, because the reward function can only assess what the model already knows.

A straightforward sequential pipeline (first SFT, then RLVR) can combine the advantages but incurs significant computational overhead and still experiences forgetting during the initial SFT phase. To overcome these limitations, ViSurf proposes a theoretically grounded integration of the two objectives. By analyzing the gradients of SFT (∇θ log πθ(y|v,t)) and RLVR (∇θ log πθ(o_j|v,t) weighted by advantage Â_j), the authors observe that both share the same log‑derivative form, differing only in the guidance signal (ground‑truth label versus sampled rollouts) and scalar coefficient. This insight enables them to construct a single loss that naturally incorporates both signals.

The key design choice is to treat the ground‑truth label y as an additional high‑reward rollout within the RLVR framework. During each optimization step, the rollout set is augmented to {y} ∪ {o_j}_{j=1}^G. The verification function r(·) assigns a reward to y (typically high because it matches the reference) and to each rollout. Advantages are computed relative to the combined set, yielding Â_j for each rollout and Â_y for the label. The unified objective (Equation 8) combines the clipped policy‑ratio terms for both rollouts and the label, each multiplied by its respective advantage. Gradient derivation shows that the resulting update is a weighted sum of the SFT and RLVR gradients, achieving simultaneous knowledge injection and policy reinforcement.

Directly inserting the label as a high‑reward sample, however, can cause two pathological behaviors: (1) “reward hacking,” where the model over‑relies on the label and stops exploring, and (2) suppression of the relative advantage of the self‑generated rollouts, preventing the model from learning to correct its own mistakes. To mitigate these issues, ViSurf introduces three novel reward‑control mechanisms:

- Preference Alignment – scales the label advantage to align with the policy’s preferences, ensuring the label does not dominate the learning signal.

- Excluding “Thinking” Rewards for Static Labels – disables any auxiliary “thinking” rewards for the label, treating it as a pure ground‑truth signal rather than a dynamic output.

- Reward Smoothing – applies exponential moving average and clipping to the label reward, preventing sudden spikes that could destabilize training.

These controls keep the entropy of the policy stable, avoid collapse, and preserve the beneficial exploratory behavior of RLVR while still benefitting from the external supervision of SFT.

The authors evaluate ViSurf across a broad suite of vision‑language benchmarks, including DocVQA, gRefCOCO (referring expression segmentation), MathVista (math reasoning), ISIC 2018 (medical image classification), OmniACT (action‑condition‑task), and RealIAD (real‑world image‑answer dialogue). Across all tasks, ViSurf consistently outperforms three baselines: pure SFT, pure RLVR, and the two‑stage SFT→RLVR pipeline. Notably, in non‑object referring expression scenarios where the query refers to a non‑existent object, pure RLVR tends to generate spurious masks because it lacks a corrective signal, while SFT correctly predicts “no object” but with lower overall IoU. ViSurf achieves the best of both worlds: high IoU on valid objects and accurate detection of absent objects, thanks to the integrated label advantage.

A detailed ablation study confirms the importance of each reward‑control component. Removing reward smoothing leads to rapid entropy decay and performance drops early in training; disabling preference alignment causes the model to over‑fit to the label and ignore rollout feedback; omitting the exclusion of thinking rewards results in the model treating the label as another stochastic output, again reducing exploration. The study also shows that ViSurf eliminates catastrophic forgetting: performance on VQA and other knowledge‑base tasks remains stable or even improves after training, whereas SFT alone suffers noticeable degradation.

From an implementation perspective, ViSurf requires only minor modifications to existing RLVR pipelines: the label is sampled as an additional rollout, and the three control strategies are applied to its reward. No extra human preference data or separate loss weighting heuristics are needed, making the approach computationally efficient and scalable to very large LVLMs.

The paper concludes by emphasizing that ViSurf provides a principled, efficient, and empirically validated solution to the long‑standing trade‑off between external supervision and internal reinforcement in LVLM post‑training. Future work may explore richer verification functions, integration with other RL algorithms such as PPO or DPO, and deployment in real‑time multimodal systems where latency and resource constraints are critical.

Comments & Academic Discussion

Loading comments...

Leave a Comment