GraphGhost: Tracing Structures Behind Large Language Models

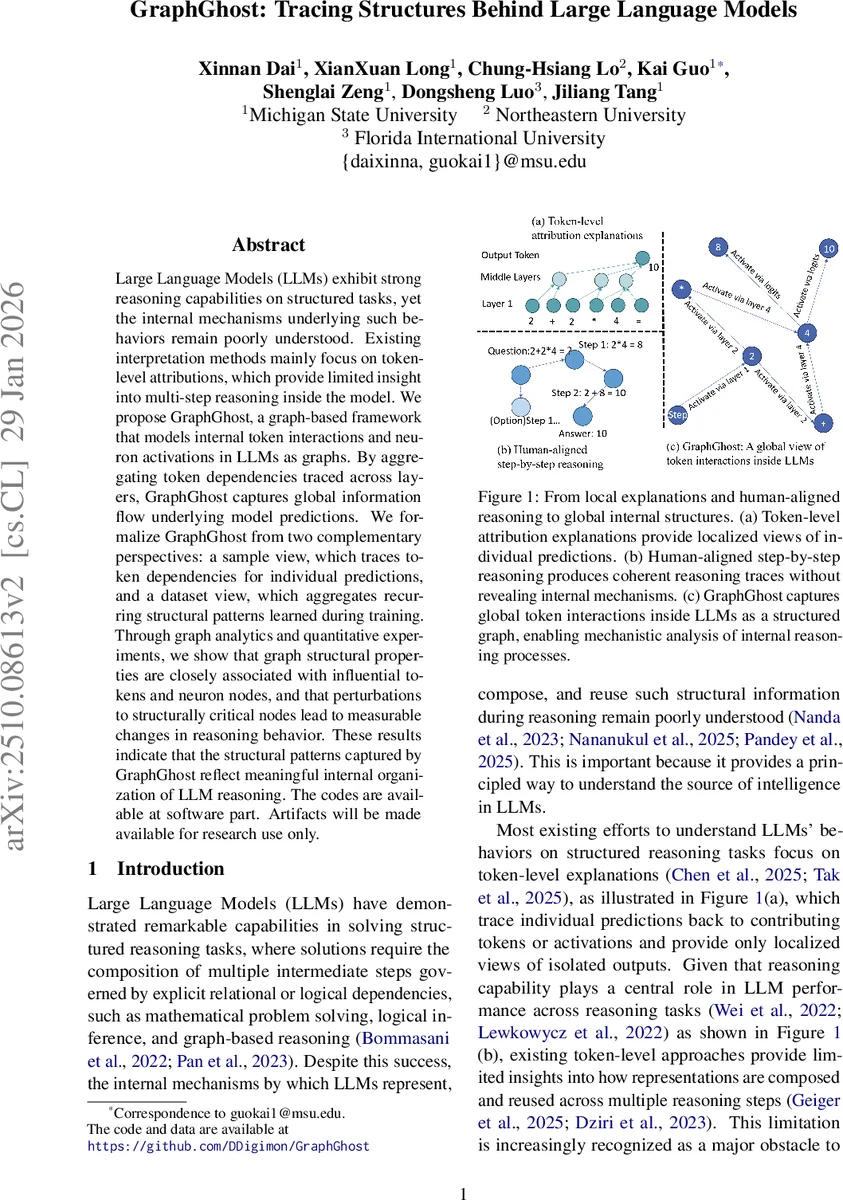

Large Language Models (LLMs) exhibit strong reasoning capabilities on structured tasks, yet the internal mechanisms underlying such behaviors remain poorly understood. Existing interpretation methods mainly focus on token-level attributions, which provide limited insight into multi-step reasoning inside the model. We propose GraphGhost, a graph-based framework that models internal token interactions and neuron activations in LLMs as graphs. By aggregating token dependencies traced across layers, GraphGhost captures global information flow underlying model predictions. We formalize GraphGhost from two complementary perspectives: a sample view, which traces token dependencies for individual predictions, and a dataset view, which aggregates recurring structural patterns learned during training. Through graph analytics and quantitative experiments, we show that graph structural properties are closely associated with influential tokens and neuron nodes, and that perturbations to structurally critical nodes lead to measurable changes in reasoning behavior. These results indicate that the structural patterns captured by GraphGhost reflect meaningful internal organization of LLM reasoning. The codes are available at software part. Artifacts will be made available for research use only.

💡 Research Summary

GraphGhost introduces a novel graph‑based framework for probing the internal reasoning mechanisms of large language models (LLMs). Unlike traditional token‑level attribution methods that only highlight which input tokens contributed to a particular output, GraphGhost captures the full network of token‑token and neuron‑neuron interactions across all transformer layers, representing them as directed graphs. The authors formalize two complementary perspectives.

In the sample view, for a given input‑output pair, the method starts from the final answer token and recursively traces back through the model’s internal computations using circuit‑tracing and logit‑based attribution. Each step yields a local attribution graph whose nodes are token‑layer pairs and whose edges encode contribution strengths (accumulated in a local weight map W_local). By merging these local graphs, an unweighted structural graph G_sample is produced that reveals the exact chain of token dependencies that the model followed to arrive at its prediction.

The dataset view aggregates the sample‑level graphs from many examples into a unified graph G_data. Node sets are united across samples, while edge weights are summed over all occurrences and then row‑stochastic normalized, yielding a global importance matrix. This graph captures recurring reasoning pathways that the model has internalized during training, highlighting both shared primitives (e.g., punctuation, discourse markers) and domain‑specific patterns (e.g., arithmetic operators in math datasets).

Graph analysis shows that classic graph metrics—degree, betweenness centrality, clustering coefficient—strongly correlate with tokens and neurons identified as influential by existing attribution techniques. High‑centrality nodes often correspond to tokens such as “=”, “+”, or “So”, which appear repeatedly across layers and datasets.

To test causal relevance, the authors perform targeted interventions: masking or injecting noise into the top‑5 % most central nodes leads to substantial drops in accuracy (≈12 % absolute) and disrupts logical coherence of chain‑of‑thought reasoning. These perturbation results demonstrate that the graph structure is not merely a visualization tool but reflects genuine causal pathways governing model behavior.

A toy experiment on shortest‑path graph reasoning with a 5‑layer GPT‑2 decoder illustrates the method in a controlled setting. Circuit tracing reveals that the model does not explicitly retrieve relational edges as a human would; instead, it repeatedly merges token‑level associations across layers, forming a composite representation that drives the next token prediction.

Overall, GraphGhost provides (1) fine‑grained, step‑by‑step insight into how LLMs compose and reuse intermediate token representations, and (2) a macro‑level view of reusable reasoning motifs learned from large corpora. The framework bridges the gap between local explanations and human‑aligned reasoning traces, offering a principled avenue for interpretability, debugging, and potentially guiding more faithful prompting strategies. The code and data are released for research use, inviting further exploration of neuron‑level extensions, scalability to larger models, and quantitative alignment with human logical processes.

Comments & Academic Discussion

Loading comments...

Leave a Comment