SPADE: Structured Pruning and Adaptive Distillation for Efficient LLM-TTS

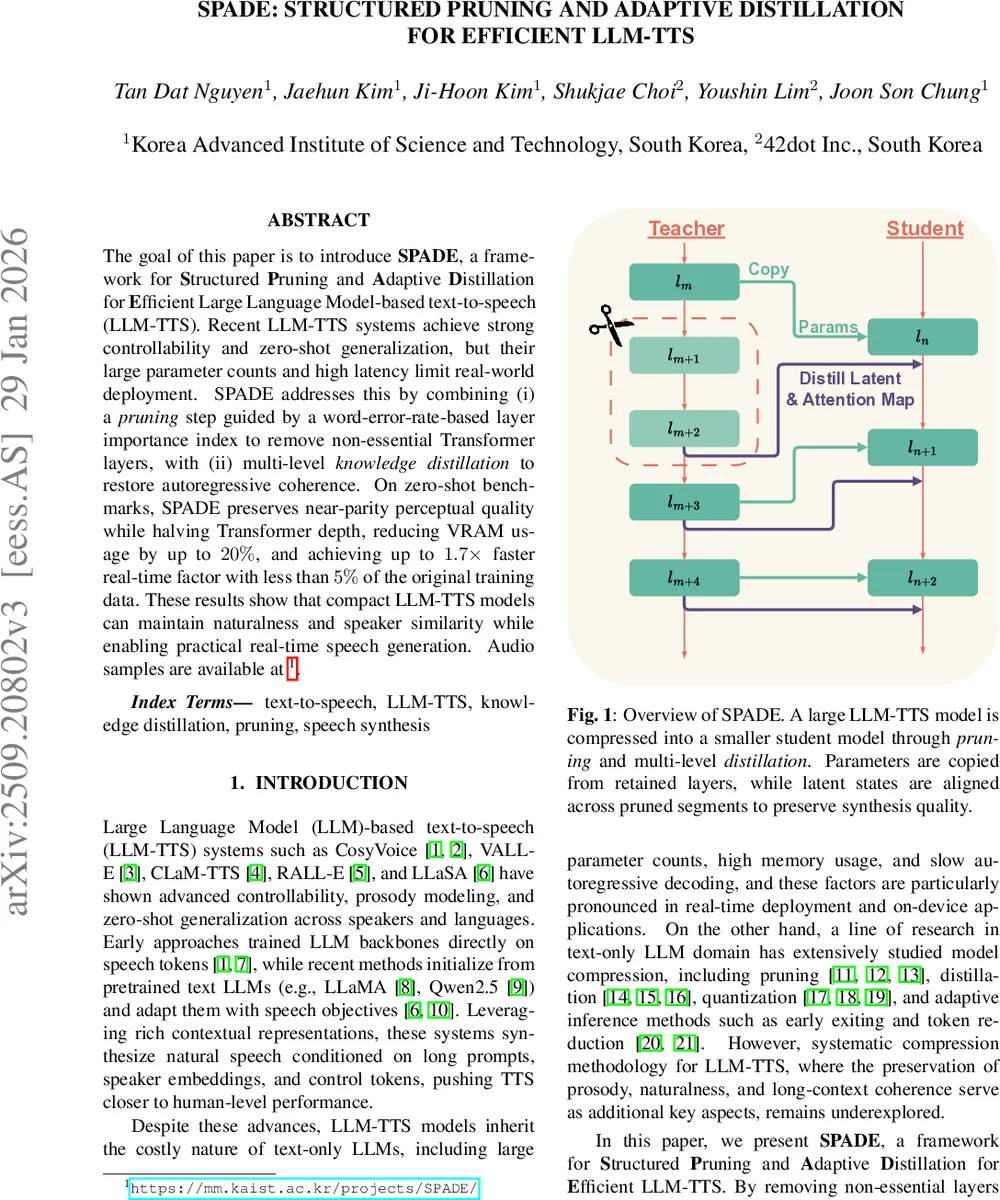

The goal of this paper is to introduce SPADE, a framework for Structured Pruning and Adaptive Distillation for Efficient Large Language Model-based text-to-speech (LLM-TTS). Recent LLM-TTS systems achieve strong controllability and zero-shot generalization, but their large parameter counts and high latency limit real-world deployment. SPADE addresses this by combining (i) a pruning step guided by a word-error-rate-based layer importance index to remove non-essential Transformer layers, with (ii) multi-level knowledge distillation to restore autoregressive coherence. On zero-shot benchmarks, SPADE preserves near-parity perceptual quality while halving Transformer depth, reducing VRAM usage by up to 20%, and achieving up to 1.7x faster real-time factor with less than 5% of the original training data. These results show that compact LLM-TTS models can maintain naturalness and speaker similarity while enabling practical real-time speech generation. Audio samples are available at https://mm.kaist.ac.kr/projects/SPADE/.

💡 Research Summary

The paper tackles the pressing problem of deploying large‑scale language‑model‑based text‑to‑speech (LLM‑TTS) systems in real‑time or on‑device scenarios. While recent LLM‑TTS models such as CosyVoice, VALL‑E, CLaM‑TTS, RALL‑E, and LLaSA demonstrate impressive zero‑shot generalization, controllability, and prosody modeling, they inherit the massive parameter counts and slow autoregressive decoding of their text‑only counterparts, making them impractical for latency‑sensitive applications.

To address this, the authors introduce SPADE, a two‑stage compression framework that (1) prunes redundant Transformer layers using a novel importance metric called Word‑Error‑Rate‑based Layer Importance (WLI) and (2) restores the pruned model’s performance through multi‑level knowledge distillation.

WLI‑guided pruning

Traditional model compression for text LLMs often relies on cosine similarity between a layer’s input and output (CLI) to estimate its contribution. The authors argue that for speech synthesis the ultimate quality is measured by the semantic fidelity of the generated audio, which can be quantified by word error rate (WER). They therefore compute WLI for each layer as the expected increase in WER when that layer is removed, using a lightweight Whisper‑based ASR on a held‑out evaluation set. Layers with low WLI are deemed non‑essential and are removed. Empirical analysis shows that early, middle, and final layers consistently have high WLI, while many intermediate layers contribute little, indicating substantial redundancy.

Adaptive multi‑level distillation

After pruning, the student model suffers from broken information flow. SPADE treats the original unpruned model as a teacher and trains the student with a composite loss:

- Cross‑entropy (CE) for supervised token prediction.

- Logit alignment using a Skew KL divergence to match output distributions.

- Embedding reconstruction loss (L_e).

- Latent alignment loss (L_l) and attention alignment loss (L_a), both computed as mean‑squared error between teacher and student representations.

Crucially, L_l and L_a are applied adaptively: for each retained student layer, the target is taken from the teacher’s layer immediately preceding the next retained teacher layer (i.e., teacher layer l_m + 2). This dynamic mapping allows the student to inherit the refinement performed by the removed layers without adding any extra parameters. The loss weighting α is set to 0.25, deliberately emphasizing the supervised component.

Experimental setup

The framework is evaluated on two publicly available LLM‑TTS backbones: CosyVoice 2 (24‑layer Transformer) and LLaSA‑1B (16‑layer). For each model, the authors prune to 12 or 9 layers (CosyVoice) and to 8 layers (LLaSA), effectively halving the depth. Fine‑tuning is performed on only 25 % of LibriHeavy (English) for LLaSA and 25 % of LibriTTS for CosyVoice 2, amounting to less than 5 % of the original pre‑training corpus.

Results

- CosyVoice 2 (12 layers): Parameter count drops by 39.7 %, real‑time factor (RTF) improves by 42.6 %, and VRAM usage falls by 14 %. WER increases by only 0.68 % on a challenging Seed‑TTS benchmark, while NMOS declines by 0.11 points—an almost negligible perceptual loss.

- CosyVoice 2 (9 layers): Parameters reduced by 49.2 %, RTF improved by 45.9 %, VRAM reduced by 17 %. WER rises by ~2 %, but speaker similarity, UTMOS, and NMOS remain within acceptable ranges, demonstrating a flexible efficiency‑quality trade‑off.

- LLaSA‑1B (8 layers): Parameters cut by 23.5 %, RTF gains 29.3 % (1.41× speed‑up), VRAM down 20 %. Quality metrics show modest degradation, reflecting that LLaSA’s layers are more uniformly important (higher overall WLI).

Ablation studies confirm that (a) cosine‑based pruning leads to substantial WER and CER degradation, validating the superiority of WLI, and (b) removing the adaptive target‑layer selection in distillation harms NMOS, SS, and UTMOS, underscoring the importance of the proposed dynamic alignment.

Discussion and limitations

While SPADE achieves impressive compression with minimal quality loss, computing WLI requires an auxiliary ASR model, adding preprocessing overhead. The current evaluation focuses on English datasets; extending WLI to multilingual or multi‑speaker scenarios may reveal different layer importance patterns. Moreover, the distillation losses rely on simple MSE; more expressive similarity measures (e.g., contrastive losses) could capture subtler representation differences.

Conclusion

SPADE demonstrates that structured pruning guided by a task‑specific importance metric, combined with adaptive multi‑level knowledge distillation, can dramatically reduce the computational footprint of LLM‑TTS systems while preserving intelligibility and naturalness. The framework reduces parameters by up to 40 %, cuts VRAM usage by up to 20 %, and accelerates inference by up to 1.7×, all with less than 5 % of the original training data. Future work may explore lightweight WLI estimation, multilingual extensions, and integration with quantization for further efficiency gains.

Comments & Academic Discussion

Loading comments...

Leave a Comment