Impact of Phonetics on Speaker Identity in Adversarial Voice Attack

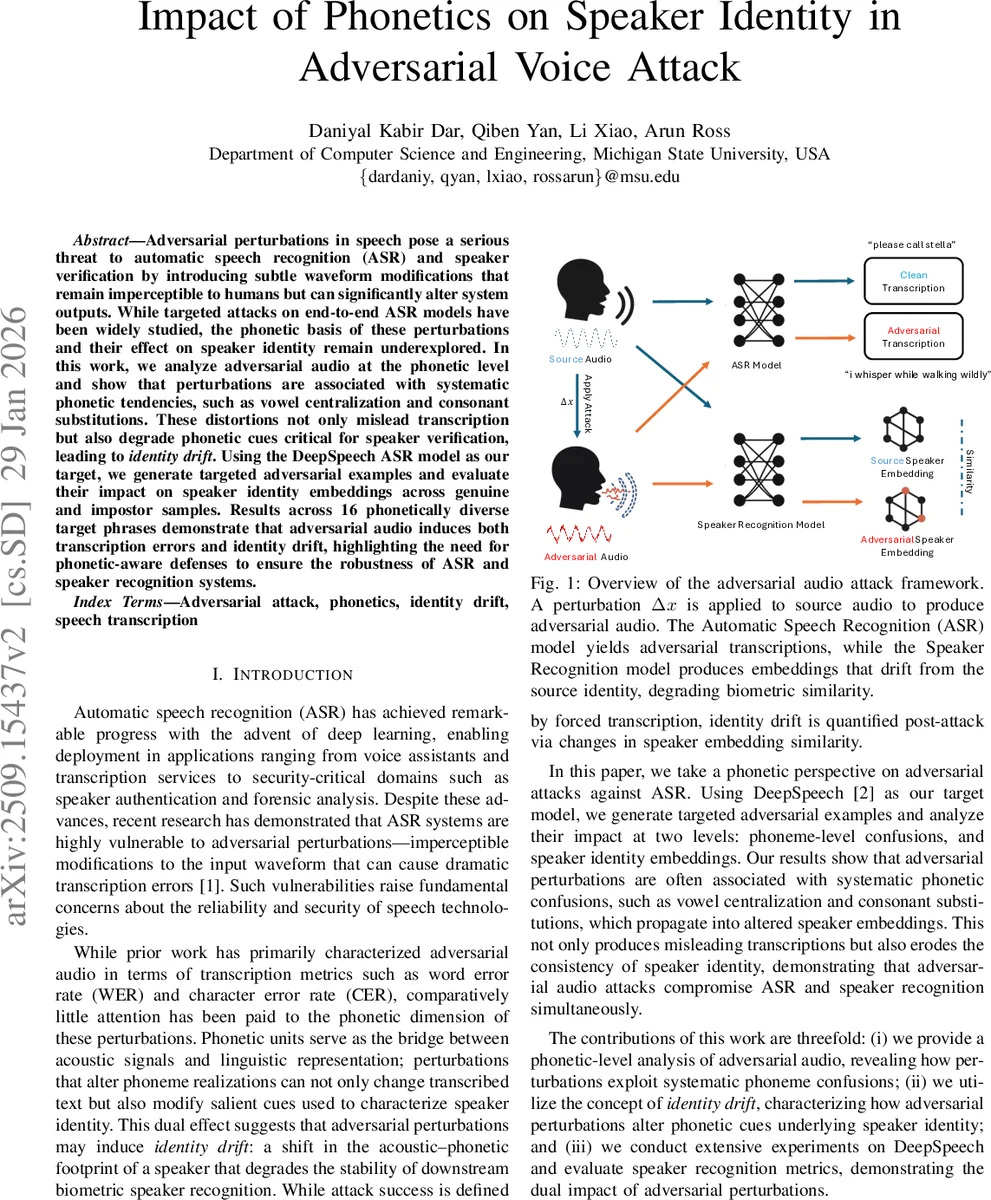

Adversarial perturbations in speech pose a serious threat to automatic speech recognition (ASR) and speaker verification by introducing subtle waveform modifications that remain imperceptible to humans but can significantly alter system outputs. While targeted attacks on end-to-end ASR models have been widely studied, the phonetic basis of these perturbations and their effect on speaker identity remain underexplored. In this work, we analyze adversarial audio at the phonetic level and show that perturbations exploit systematic confusions such as vowel centralization and consonant substitutions. These distortions not only mislead transcription but also degrade phonetic cues critical for speaker verification, leading to identity drift. Using DeepSpeech as our ASR target, we generate targeted adversarial examples and evaluate their impact on speaker embeddings across genuine and impostor samples. Results across 16 phonetically diverse target phrases demonstrate that adversarial audio induces both transcription errors and identity drift, highlighting the need for phonetic-aware defenses to ensure the robustness of ASR and speaker recognition systems.

💡 Research Summary

The paper investigates how adversarial perturbations crafted against an end‑to‑end speech‑recognition model (Mozilla DeepSpeech) affect not only transcription accuracy but also speaker‑verification systems. The authors introduce the notion of “identity drift” to describe the shift of a speaker’s embedding toward impostor space after an attack.

Methodology

A white‑box targeted attack is performed using the Carlini‑Wagner style optimization that minimizes an L2 norm of the perturbation while forcing the CTC‑Loss to produce a chosen target phrase (yₜ). Perturbations are constrained by signal‑to‑noise ratio (SNR) to remain imperceptible (≈40 dB or higher). Sixteen target sentences (T₁–T₁₆) are carefully designed to span a wide range of phonetic phenomena: short commands, medium‑length control phrases, and long pangram‑style sentences rich in vowels, fricatives, affricates, stops, nasals, and varying stress patterns.

The experiments use the VCTK corpus (109 English speakers). For each speaker a clean utterance is selected and transformed into each of the 16 targets, yielding up to 1,744 adversarial examples. Two state‑of‑the‑art speaker‑embedding models are evaluated: ECAPA‑TDNN and a ResNet‑50‑based backbone. Cosine similarity is used to compare embeddings of the original and adversarial utterances (genuine pairs) and of different speakers (impostor pairs). Performance is quantified by the discriminability index d′ and the true‑match rate (TMR) at a false‑match rate of 0.1 %.

Key Findings

-

Transcription Success – All attacks succeed in forcing DeepSpeech to output the exact target phrase, confirming the effectiveness of the white‑box CTC‑based optimization.

-

Identity Drift Varies with Phonetic Content – Simple, vowel‑rich commands (T₁ “yes”, T₂ “open the door”) retain near‑perfect TMR (≈100 %) and high d′ (≈9–10). Phrases heavy in fricatives/affricates (T₅, T₁₀, T₁₄) show moderate degradation (TMR 84–99 %, d′ 4–5). The longest pangrams (T₁₂–T₁₆) suffer severe drift (TMR 44–75 %, d′ ≈3), indicating that phoneme clusters and length amplify the effect.

-

Phoneme‑Specific Vulnerability – Fricatives such as /ʃ, s, z/ and affricates are especially susceptible; small spectral changes in these high‑frequency regions cause large embedding shifts. In contrast, vowel‑dense utterances are more robust because fundamental frequency and formant structures remain relatively stable under low‑amplitude perturbations.

-

Length Amplifies Drift – Longer utterances contain more phonemes, providing more opportunities for the perturbation to accumulate and distort the speaker’s spectral signature, leading to a monotonic decline in TMR and d′ with increasing sentence length.

-

SNR‑Dependent Effects – Even at high SNR (≈40 dB) where humans cannot detect the noise, complex phrases already exhibit noticeable drift. As SNR drops to 30 dB and below, drift becomes catastrophic, with TMR falling below 50 % for the longest sentences.

-

Model‑Agnostic Phenomenon – Both ECAPA‑TDNN and ResNet‑50 display almost identical trends, suggesting that identity drift is a property of the acoustic‑phonetic distortion rather than a quirk of a particular embedding architecture.

Implications

The work reveals a dual‑front vulnerability: adversarial audio can simultaneously sabotage transcription and biometric verification while remaining inaudible. Existing defenses focus on robustness of ASR (e.g., adversarial training, spectral masking) but ignore the downstream impact on speaker embeddings. The authors argue for phonetic‑aware defenses, such as targeted spectral masking of fricative/affricate bands, phoneme‑level self‑monitoring, or multi‑task models that jointly verify transcription and speaker identity.

Limitations and Future Directions

The study is confined to a white‑box, offline setting; over‑the‑air attacks and black‑box scenarios remain to be explored. Moreover, only English speech is examined; languages with different phonotactic inventories may exhibit distinct vulnerability patterns. Extending the analysis to other ASR architectures (e.g., transformer‑based models) and to multimodal authentication pipelines would further solidify the findings.

Conclusion

By systematically linking phonetic structure, utterance length, and perturbation strength to speaker‑embedding degradation, the paper establishes “identity drift” as a critical metric for evaluating adversarial audio attacks. The results underscore that security assessments of speech systems must consider both semantic (transcription) and biometric (speaker identity) dimensions, and that phonetic‑aware countermeasures are essential for robust, real‑world deployments.

Comments & Academic Discussion

Loading comments...

Leave a Comment