BIR-Adapter: A parameter-efficient diffusion adapter for blind image restoration

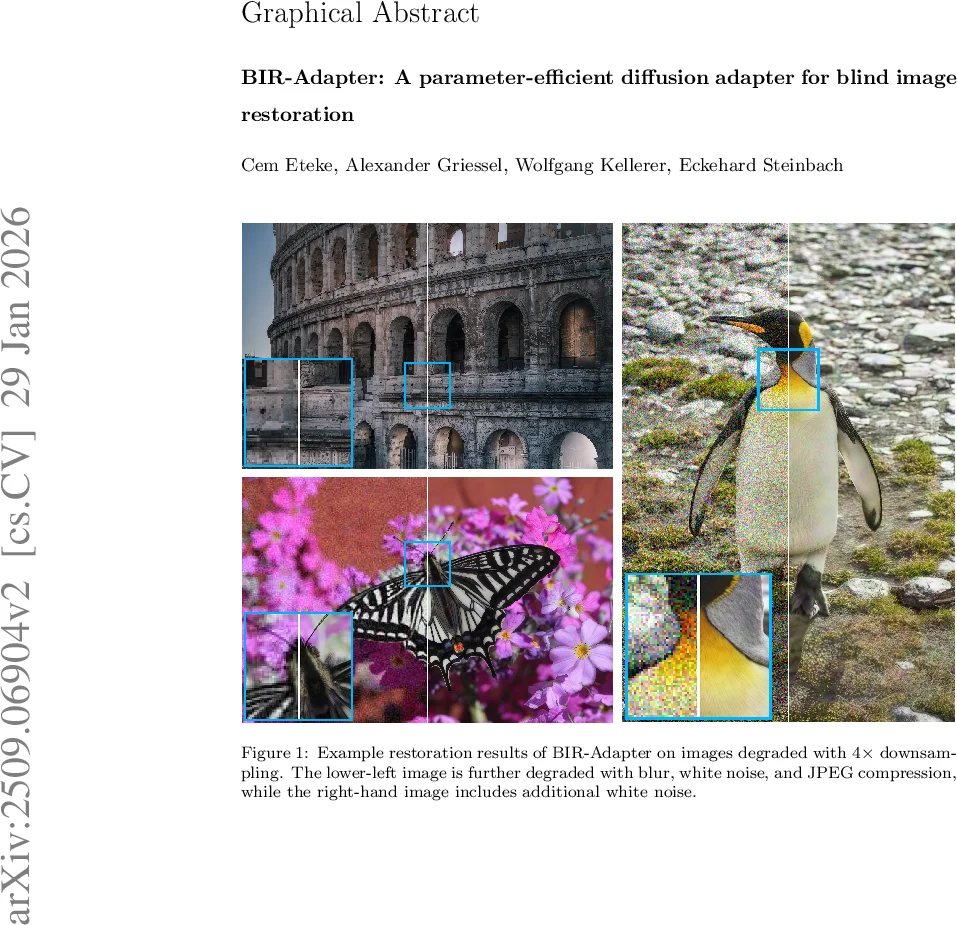

We introduce the BIR-Adapter, a parameter-efficient diffusion adapter for blind image restoration. Diffusion-based restoration methods have demonstrated promising performance in addressing this fundamental problem in computer vision, typically relying on auxiliary feature extractors or extensive fine-tuning of pre-trained models. Motivated by the observation that large-scale pretrained diffusion models can retain informative representations under common image degradations, BIR-Adapter introduces a parameter-efficient, plug-and-play attention mechanism that substantially reduces the number of trained parameters. To further improve reliability, we propose a sampling guidance mechanism that mitigates hallucinations during the restoration process. Experiments on synthetic and real-world degradations demonstrate that BIR-Adapter achieves competitive, and in several settings superior, performance compared to state-of-the-art methods while requiring up to 36x fewer trained parameters. Moreover, the adapter-based design enables seamless integration into existing models. We validate this generality by extending a super-resolution-only diffusion model to handle additional unknown degradations, highlighting the adaptability of our approach for broader image restoration tasks.

💡 Research Summary

The paper introduces BIR‑Adapter, a lightweight, parameter‑efficient adapter designed to enable blind image restoration using large pretrained diffusion models without the need for auxiliary feature extractors. The authors first demonstrate that latent representations of a frozen latent diffusion model (LDM) remain surprisingly similar when fed clean versus degraded inputs, even under common degradations such as down‑sampling, blur, additive white noise, and JPEG compression. This observation motivates the core idea: the diffusion model itself can provide the necessary conditioning information for restoration.
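The similarity observation can be illustrated with a small sketch. The summary does not say which metric the authors use, so per-token cosine similarity is an assumption here, and the random arrays are hypothetical stand-ins for features that a frozen diffusion backbone would produce.

```python
import numpy as np

def feature_similarity(feat_clean, feat_degraded):
    """Mean per-token cosine similarity; inputs have shape (tokens, channels)."""
    a = feat_clean / np.linalg.norm(feat_clean, axis=-1, keepdims=True)
    b = feat_degraded / np.linalg.norm(feat_degraded, axis=-1, keepdims=True)
    return float(np.mean(np.sum(a * b, axis=-1)))

# Hypothetical stand-ins for backbone features (not real model activations).
rng = np.random.default_rng(0)
feat_clean = rng.standard_normal((64, 32))
# Assumption: a mild degradation perturbs the features only slightly.
feat_degraded = feat_clean + 0.1 * rng.standard_normal((64, 32))
feat_unrelated = rng.standard_normal((64, 32))

sim_same = feature_similarity(feat_clean, feat_clean)
sim_degraded = feature_similarity(feat_clean, feat_degraded)
sim_unrelated = feature_similarity(feat_clean, feat_unrelated)
```

In a real check, `feat_clean` and `feat_degraded` would be activations captured from the same layer of the frozen model for a clean image and its degraded copy. A value of `sim_degraded` close to 1 would support the claim that the backbone already encodes usable conditioning information.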

BIR‑Adapter consists of two main components. The first is a “restoring attention” module inserted into each attention block of the diffusion U‑Net. For a given diffusion timestep $t$, the clean latent features produced by the denoising network ($z_k^t$) serve as queries, while the corresponding degraded latent features ($\tilde{z}_k$), generated in parallel, act as keys and values. By computing attention between these two streams, the adapter refines the degraded representation toward the clean one, using only a small set of linear projection matrices ($W_O$, $W'_O$). Because the backbone diffusion model remains frozen, the number of trainable parameters drops sharply: typically around 0.1 M, up to 36× fewer than prior ControlNet‑style approaches.
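A minimal sketch of the cross-stream attention described above, for a single head in NumPy. This is not the authors' implementation: the projection names `W_q`, `W_k`, `W_v`, `W_o`, the residual connection, and the zero initialization of the output projection are all assumptions made for illustration. The summary does not specify which projections are trained and which are reused from the frozen backbone.

```python
import numpy as np

def softmax(x, axis=-1):
    """Numerically stable softmax."""
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def restoring_attention(z, z_deg, W_q, W_k, W_v, W_o):
    """Queries come from the denoising stream z, keys and values from the
    degraded stream z_deg. Both inputs have shape (tokens, dim)."""
    q = z @ W_q
    k = z_deg @ W_k
    v = z_deg @ W_v
    scores = q @ k.T / np.sqrt(q.shape[-1])   # scaled dot-product scores
    attn = softmax(scores, axis=-1)           # each query row sums to 1
    # Assumed residual form: the adapter adds a correction to z.
    return z + (attn @ v) @ W_o, attn

rng = np.random.default_rng(0)
n_tokens, dim = 16, 8
z = rng.standard_normal((n_tokens, dim))       # hypothetical denoising features
z_deg = rng.standard_normal((n_tokens, dim))   # hypothetical degraded features
W_q, W_k, W_v = (rng.standard_normal((dim, dim)) / np.sqrt(dim) for _ in range(3))
W_o = np.zeros((dim, dim))  # assumed zero init: adapter starts as identity

out, attn = restoring_attention(z, z_deg, W_q, W_k, W_v, W_o)
```

With a zero-initialized output projection, `out` equals `z` before any training, so inserting the module leaves the frozen backbone's behavior unchanged at the start. This is a common choice for adapters, assumed here rather than taken from the paper.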

The second component is a sampling‑guidance mechanism applied during the reverse diffusion process. It adds a lightweight L2 consistency loss between the current latent estimate and the degraded latent at selected timesteps, and it emphasizes low‑frequency components to suppress hallucinations that often appear in blind restoration. This guidance stabilizes generation and improves perceptual fidelity, especially when the degradation is severe or highly ambiguous.
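One way to picture such a guidance term is a gradient step on a low-pass-filtered L2 loss. The sketch below is an assumption-heavy illustration, not the paper's procedure: the ideal FFT low-pass filter, the `cutoff` and `step_size` values, and applying the step directly to the latent estimate are all hypothetical choices.

```python
import numpy as np

def low_pass(x, cutoff=0.25):
    """Keep spatial frequencies with radius <= cutoff (cycles per sample).
    Operates on the last two axes of x."""
    h, w = x.shape[-2:]
    fy = np.fft.fftfreq(h)[:, None]
    fx = np.fft.fftfreq(w)[None, :]
    mask = np.sqrt(fx ** 2 + fy ** 2) <= cutoff
    return np.real(np.fft.ifft2(np.fft.fft2(x) * mask))

def guidance_loss(z_est, z_deg, cutoff=0.25):
    """L2 distance between the low-frequency parts of the two latents."""
    diff = low_pass(z_est - z_deg, cutoff)
    return float(np.sum(diff ** 2))

def guided_step(z_est, z_deg, step_size=0.1, cutoff=0.25):
    """One gradient-descent step on guidance_loss with respect to z_est.
    The binary symmetric mask makes the filter self-adjoint and idempotent,
    so the gradient is 2 * low_pass(z_est - z_deg)."""
    grad = 2.0 * low_pass(z_est - z_deg, cutoff)
    return z_est - step_size * grad

rng = np.random.default_rng(0)
z_est = rng.standard_normal((4, 16, 16))  # hypothetical current latent estimate
z_deg = rng.standard_normal((4, 16, 16))  # hypothetical degraded latent

loss_before = guidance_loss(z_est, z_deg)
z_new = guided_step(z_est, z_deg)
loss_after = guidance_loss(z_new, z_deg)
```

Because the update lies entirely in the pass band, the step pulls the low-frequency content of the estimate toward the degraded latent and leaves high-frequency detail untouched. That matches the stated goal of suppressing hallucinated structure without flattening texture.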

Experiments are conducted on both synthetic benchmarks (e.g., DIV2K‑Synthetic with mixed degradations) and real‑world datasets (RealSR, DPED). BIR‑Adapter consistently matches or exceeds state‑of‑the‑art methods such as ControlNet‑SR, DiBIR, StableSR, and the Diffusion Restoration Adapter in PSNR/SSIM while using far fewer parameters and less GPU memory. Ablation studies confirm that both restoring attention and the guidance term are essential: removing attention degrades performance by ~0.2 dB, and disabling guidance leads to noticeable hallucinations in low‑frequency regions.
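For readers unfamiliar with the metric behind the dB figures, PSNR can be computed as in the sketch below. This is the standard definition, with `max_value=1.0` assuming images scaled to [0, 1]. The arrays are toy data, not results from the paper.

```python
import numpy as np

def psnr(reference, estimate, max_value=1.0):
    """Peak signal-to-noise ratio in dB: 10 * log10(max_value^2 / MSE)."""
    ref = np.asarray(reference, dtype=np.float64)
    est = np.asarray(estimate, dtype=np.float64)
    mse = np.mean((ref - est) ** 2)
    if mse == 0:
        return float("inf")  # identical images
    return float(10.0 * np.log10(max_value ** 2 / mse))

# Toy example: a constant offset of 0.1 gives MSE = 0.01, i.e. about 20 dB.
reference = np.full((8, 8), 0.5)
estimate = reference + 0.1
psnr_value = psnr(reference, estimate)
```

Since PSNR is logarithmic in the mean squared error, a gap of 0.2 dB corresponds to a ratio of about 1.047 in MSE, that is, roughly a 5% difference.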

A notable demonstration of generality is the extension of a super‑resolution‑only diffusion model to handle additional unknown degradations (blur, noise, compression) simply by plugging in BIR‑Adapter. The adapted model achieves comparable quality to dedicated blind‑restoration networks, highlighting the plug‑and‑play nature of the design.

The paper also discusses limitations. The per‑layer attention operates locally and does not enforce global structural consistency, which could be problematic for large‑scale geometry. Moreover, operating in latent space still incurs memory costs for very high‑resolution images. Future work may explore multi‑scale attention, dynamic timestep scheduling, and integration with textual prompts to further broaden applicability.

In summary, BIR‑Adapter offers a new paradigm for blind image restoration: leveraging the intrinsic representations of large diffusion priors through a minimal adapter, achieving high restoration quality, strong parameter efficiency, and easy integration into existing diffusion pipelines. This work paves the way for more scalable, adaptable generative restoration systems across diverse imaging domains.
