Parallels Between VLA Model Post-Training and Human Motor Learning: Progress, Challenges, and Trends

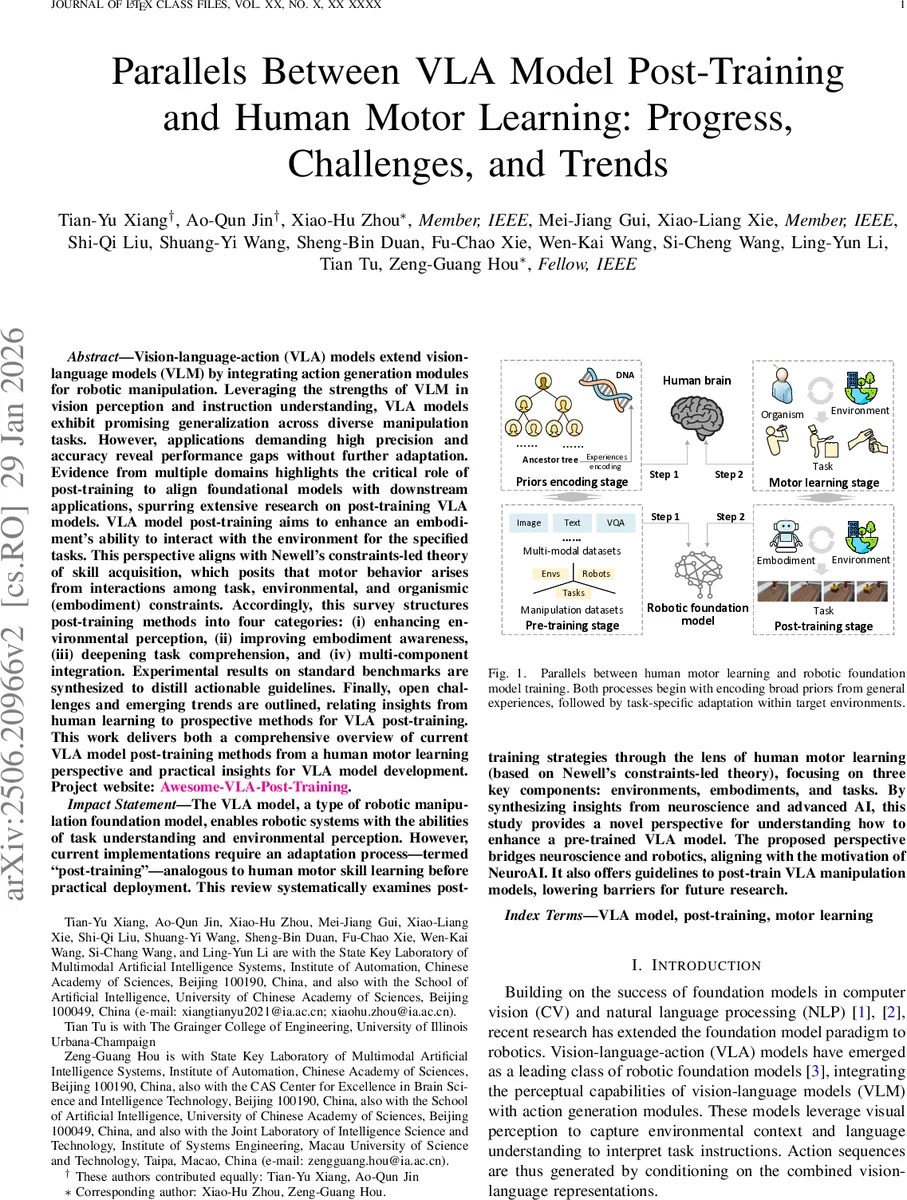

Vision-language-action (VLA) models extend vision-language models (VLM) by integrating action generation modules for robotic manipulation. Leveraging the strengths of VLM in vision perception and instruction understanding, VLA models exhibit promising generalization across diverse manipulation tasks. However, applications demanding high precision and accuracy reveal performance gaps without further adaptation. Evidence from multiple domains highlights the critical role of post-training to align foundational models with downstream applications, spurring extensive research on post-training VLA models. VLA model post-training aims to enhance an embodiment’s ability to interact with the environment for the specified tasks. This perspective aligns with Newell’s constraints-led theory of skill acquisition, which posits that motor behavior arises from interactions among task, environmental, and organismic (embodiment) constraints. Accordingly, this survey structures post-training methods into four categories: (i) enhancing environmental perception, (ii) improving embodiment awareness, (iii) deepening task comprehension, and (iv) multi-component integration. Experimental results on standard benchmarks are synthesized to distill actionable guidelines. Finally, open challenges and emerging trends are outlined, relating insights from human learning to prospective methods for VLA post-training. This work delivers both a comprehensive overview of current VLA model post-training methods from a human motor learning perspective and practical insights for VLA model development. Project website: https://github.com/AoqunJin/Awesome-VLA-Post-Training.

💡 Research Summary

The paper presents a comprehensive survey of post‑training techniques for Vision‑Language‑Action (VLA) models, positioning them within the framework of human motor learning, specifically Newell’s constraints‑led theory. VLA models extend large‑scale vision‑language foundations by adding action generation modules, enabling robots to interpret visual scenes and natural‑language instructions and to produce manipulation trajectories. While pre‑training on massive heterogeneous datasets yields impressive zero‑shot capabilities, real‑world deployment still suffers from precision, stability, and safety gaps. The authors argue that a dedicated post‑training (or fine‑tuning) phase is essential to bridge this gap, analogous to the skill refinement stage in human learning.

The survey first outlines the rapid expansion of robot manipulation datasets, highlighting the Open X‑Embodiment collection which has grown from a few hundred thousand episodes to over ten million, with a corresponding increase in multimodal richness (depth, tactile, proprioceptive signals). It then describes the two dominant VLA architectures: (a) separate vision and language encoders whose embeddings are fused before an action head, and (b) a unified multimodal tokenizer feeding a large language model (LLM) that outputs action tokens via a detokenizer. Typical backbones include DINOv2, CLIP, ResNet, Vision Transformers, and LLMs such as GPT‑style models. Auxiliary data (VQA, internet videos, general vision tasks) are often incorporated to improve representation robustness.

The core contribution is a taxonomy of post‑training methods aligned with Newell’s three constraint categories—environmental, organismic (embodiment), and task—plus a fourth “multi‑component integration” class that jointly optimizes the three.

-

Environmental perception enhancement focuses on improving robustness to lighting changes, occlusions, object texture variations, and depth ambiguities. Techniques include domain adaptation, visual style transfer, data augmentation, and contrastive pre‑training on environment‑specific data.

-

Embodiment awareness improvement targets the robot’s physical constraints: kinematics, dynamics, actuator limits, and sensor placement. Approaches involve proprioceptive fine‑tuning, dynamics‑aware loss functions, model‑based residual learning, and embedding embodiment descriptors directly into the network.

-

Task comprehension deepening injects task‑specific priors such as force limits, sequencing rules, or hierarchical sub‑task structures. Methods range from meta‑learning of task embeddings, prompt‑engineering for language models, to curriculum learning that gradually increases task complexity.

-

Multi‑component integration combines the above via multi‑task loss weighting, cross‑attention mechanisms that let perception, embodiment, and task streams interact, and reinforcement‑learning fine‑tuning that uses reward signals reflecting success, safety, and efficiency.

Empirical results on standard benchmarks (Meta‑World, RLBench, RoboSuite) demonstrate that each category yields measurable gains: environmental adaptation raises success rates by ~12 % under visual perturbations; embodiment‑specific fine‑tuning improves stability by ~8 %; task‑focused meta‑learning boosts complex multi‑step task success by >15 %; and integrated multi‑component training outperforms isolated methods by an additional 5‑7 % on aggregate metrics. Sim‑to‑real transfer experiments show that domain randomization combined with post‑training reduces the reality gap by roughly 30 %.

The authors discuss persistent challenges: (i) data bias and scarcity of high‑quality real‑world demonstrations, (ii) the sim‑to‑real discrepancy in dynamics, (iii) lack of standardized safety and ethical evaluation protocols for post‑trained policies, and (iv) the need for continual online adaptation akin to human practice and feedback loops. They propose future research directions inspired by human learning—incorporating explicit feedback, corrective rehearsal, meta‑curricula, and safety‑aware reinforcement signals—to create more resilient, adaptable VLA systems.

In conclusion, by mapping VLA post‑training onto the well‑studied structure of human motor skill acquisition, the paper offers a clear conceptual lens, a practical taxonomy, and actionable guidelines for researchers aiming to close the performance gap between foundation VLA models and real‑world robotic manipulation. It calls for tighter integration between neuroscience insights and AI engineering to advance the emerging Neuro‑AI paradigm.

Comments & Academic Discussion

Loading comments...

Leave a Comment