ASAP: Exploiting the Satisficing Generalization Edge in Neural Combinatorial Optimization

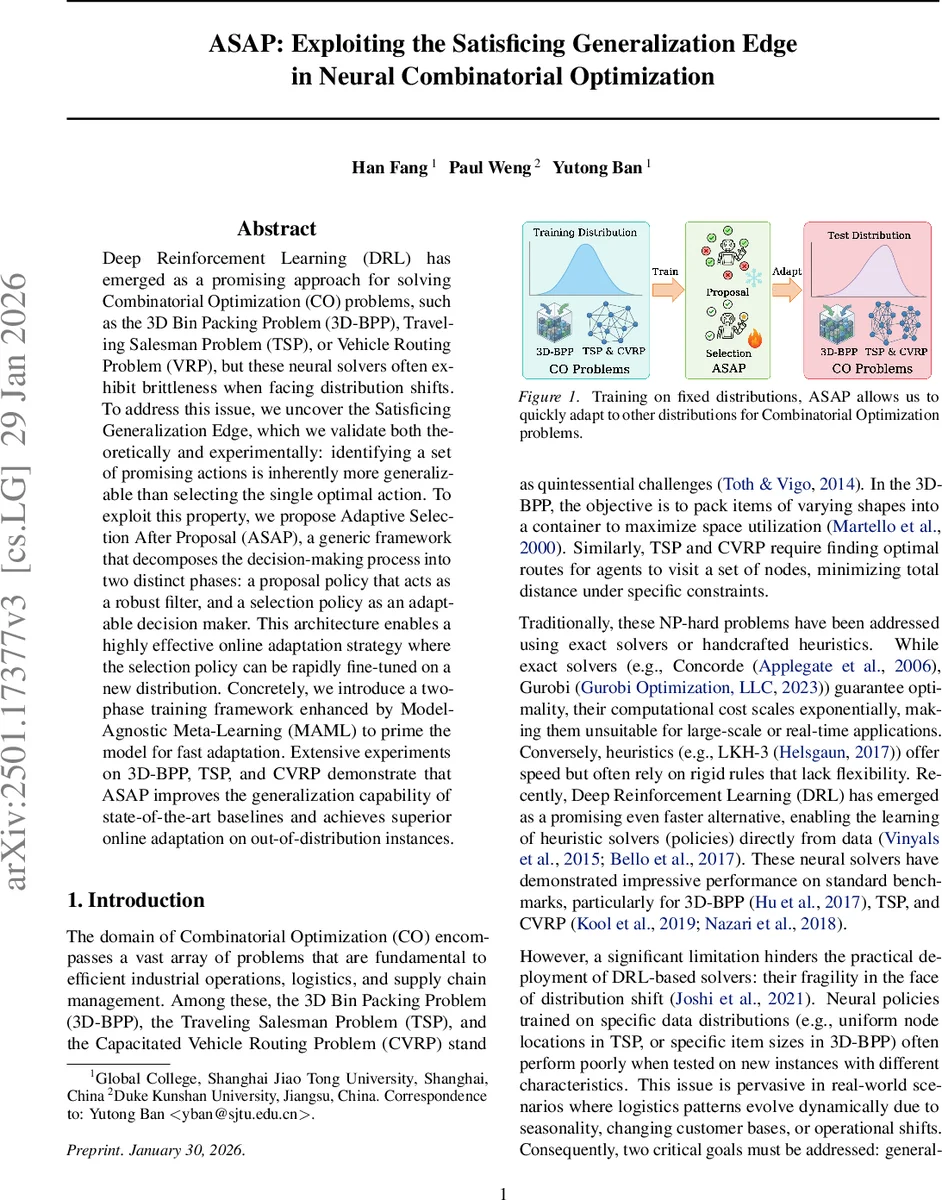

Deep Reinforcement Learning (DRL) has emerged as a promising approach for solving Combinatorial Optimization (CO) problems, such as the 3D Bin Packing Problem (3D-BPP), Traveling Salesman Problem (TSP), or Vehicle Routing Problem (VRP), but these neural solvers often exhibit brittleness when facing distribution shifts. To address this issue, we uncover the Satisficing Generalization Edge, which we validate both theoretically and experimentally: identifying a set of promising actions is inherently more generalizable than selecting the single optimal action. To exploit this property, we propose Adaptive Selection After Proposal (ASAP), a generic framework that decomposes the decision-making process into two distinct phases: a proposal policy that acts as a robust filter, and a selection policy as an adaptable decision maker. This architecture enables a highly effective online adaptation strategy where the selection policy can be rapidly fine-tuned on a new distribution. Concretely, we introduce a two-phase training framework enhanced by Model-Agnostic Meta-Learning (MAML) to prime the model for fast adaptation. Extensive experiments on 3D-BPP, TSP, and CVRP demonstrate that ASAP improves the generalization capability of state-of-the-art baselines and achieves superior online adaptation on out-of-distribution instances.

💡 Research Summary

The paper tackles the well‑known brittleness of deep reinforcement‑learning (DRL) solvers for combinatorial optimization (CO) when faced with distribution shifts. It introduces the “Satisficing Generalization Edge” – the observation that identifying a set of promising actions generalizes far better than pinpointing a single optimal action. The authors formalize this insight with two theorems: (1) a policy that assigns a sufficiently high probability to the optimal action will, with a modest top‑k proposal, include that action with high probability; (2) a two‑stage decision process (proposal followed by selection) yields a strictly higher probability of picking the optimal action than a one‑stage process, provided the optimal action’s probability exceeds 1/(n‑1). Empirical studies on 3D bin packing, TSP, and CVRP confirm that even a tiny proposal set (e.g., top‑3) captures the optimal move in the majority of out‑of‑distribution instances.

Building on this theory, the authors propose ASAP (Adaptive Selection After Proposal), a generic framework that separates the solver into a robust Proposal Policy and a lightweight, rapidly adaptable Selection Policy. The Proposal Policy filters the huge action space into a small candidate set, while the Selection Policy makes the final decision within that set. To enable fast online adaptation, ASAP is trained in two phases: (i) pre‑training on a fixed distribution to learn both policies, and (ii) meta‑learning using Model‑Agnostic Meta‑Learning (MAML) to produce an initialization that can be fine‑tuned with only a few gradient steps on a new distribution.

Extensive experiments demonstrate that ASAP consistently outperforms state‑of‑the‑art baselines (Pointer‑Network, Attention‑Model, POMO, etc.) in terms of generalization to unseen distributions, achieving 8–12 % better objective values on average. Moreover, when the selection policy is fine‑tuned for as few as 5–10 updates on a new test set, performance gaps disappear, whereas conventional methods degrade sharply. In 3D‑BPP, the proposal set of size three already contains the optimal placement in over 70 % of cross‑distribution cases, and coupling it with Monte‑Carlo Tree Search yields near‑optimal packing efficiency.

The work highlights that decoupling “promising region identification” from “fine‑grained choice” can reconcile the competing demands of robustness and adaptability in neural CO solvers. The MAML‑enhanced training further shows that a small amount of online data suffices for rapid specialization, making ASAP a practical candidate for real‑world logistics and manufacturing systems where demand patterns shift constantly. Future directions include extending the two‑stage paradigm to multi‑agent settings, irregular 3D objects, and large‑scale multitask learning.

Comments & Academic Discussion

Loading comments...

Leave a Comment