Visual Localization via Semantic Structures in Autonomous Photovoltaic Power Plant Inspection

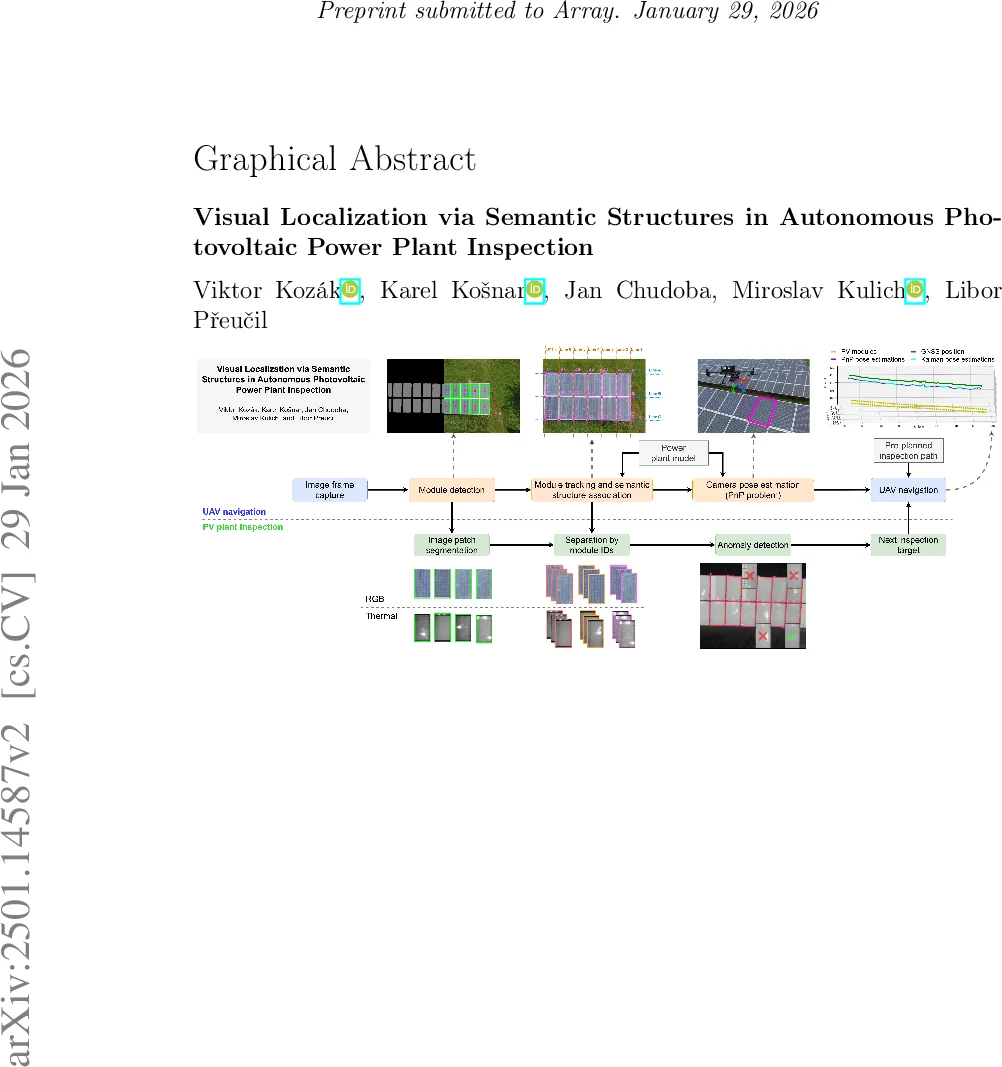

Inspection systems utilizing unmanned aerial vehicles (UAVs) equipped with thermal cameras are increasingly popular for the maintenance of photovoltaic (PV) power plants. However, automation of the inspection task is a challenging problem as it requires precise navigation to capture images from optimal distances and viewing angles. This paper presents a novel localization pipeline that directly integrates PV module detection with UAV navigation, allowing precise positioning during inspection. The detections are used to identify the power plant structures in the image. These are associated with the power plant model and used to infer the UAV position relative to the inspected PV installation. We define visually recognizable anchor points for the initial association and use object tracking to discern global associations. Additionally, we present three different methods for visual segmentation of PV modules and evaluate their performance in relation to the proposed localization pipeline. The presented methods were verified and evaluated using custom aerial inspection data sets, demonstrating their robustness and applicability for real-time navigation. Finally, we evaluate the influence of the power plant model precision on the localization methods.

💡 Research Summary

The paper presents a comprehensive vision‑based localization pipeline designed for autonomous inspection of photovoltaic (PV) power plants using unmanned aerial vehicles (UAVs) equipped with thermal cameras. The authors identify a critical gap in current UAV inspection systems: while GNSS and IMU provide coarse navigation, they lack the visual feedback necessary to maintain optimal distance and viewing angle for each PV module, which is essential for high‑quality thermographic defect detection.

System Overview

The proposed system consists of three tightly coupled stages: (1) module detection, (2) data association, and (3) camera pose estimation. In the detection stage, three segmentation approaches are implemented and compared: a traditional computer‑vision pipeline based on intensity thresholding and morphological operations, a U‑Net pixel‑wise segmentation network, and a YOLO‑v5 object detector. All three are tuned or trained on a newly created, publicly released dataset of RGB images (thermal adaptation is discussed in an appendix). The authors demonstrate that the CNN‑based methods achieve the highest recall and precision, while the traditional computer‑vision pipeline remains viable on low‑power hardware.
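To make the traditional computer‑vision option more concrete, the following is a minimal NumPy‑only sketch of intensity thresholding followed by a morphological opening and a simple connected‑component pass. It is an illustration under stated assumptions, not the authors' implementation: the function names, the 3×3 structuring element, the fixed threshold, and the assumption that modules appear brighter than the background are all hypothetical.

```python
import numpy as np

def threshold(gray, level):
    """Binary mask of pixels brighter than `level` (assumes bright modules)."""
    return gray > level

def _neighborhood_stack(mask):
    """Stack the 9 shifted copies of `mask` that cover a 3x3 neighborhood."""
    padded = np.pad(mask, 1, constant_values=False)
    h, w = mask.shape
    return np.stack([padded[dy:dy + h, dx:dx + w]
                     for dy in range(3) for dx in range(3)])

def erode(mask):
    """3x3 erosion: a pixel survives only if its whole neighborhood is set."""
    return _neighborhood_stack(mask).all(axis=0)

def dilate(mask):
    """3x3 dilation: a pixel is set if any neighbor is set."""
    return _neighborhood_stack(mask).any(axis=0)

def opening(mask):
    """Erosion followed by dilation; removes specks smaller than the kernel."""
    return dilate(erode(mask))

def bounding_boxes(mask):
    """4-connected flood fill; returns (row_min, col_min, row_max, col_max) per blob."""
    h, w = mask.shape
    visited = np.zeros((h, w), dtype=bool)
    boxes = []
    for r0, c0 in zip(*np.nonzero(mask)):
        if visited[r0, c0]:
            continue
        stack = [(int(r0), int(c0))]
        visited[r0, c0] = True
        rows, cols = [], []
        while stack:
            r, c = stack.pop()
            rows.append(r)
            cols.append(c)
            for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
                rr, cc = r + dr, c + dc
                if 0 <= rr < h and 0 <= cc < w and mask[rr, cc] and not visited[rr, cc]:
                    visited[rr, cc] = True
                    stack.append((rr, cc))
        boxes.append((min(rows), min(cols), max(rows), max(cols)))
    return boxes

# Synthetic example: two bright "modules" plus one isolated noise pixel.
image = np.zeros((12, 20))
image[2:7, 2:8] = 200
image[2:7, 11:17] = 200
image[10, 18] = 255
module_boxes = bounding_boxes(opening(threshold(image, 128)))
```

On real imagery the threshold would likely need to be adaptive, and a production system would more plausibly use an optimized image‑processing library; the point here is only the order of operations.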

Anchor Points and Global Association

To bootstrap the correspondence between image detections and the 3‑D plant model, the authors define visually recognizable “anchor points” – distinctive corners or intersections that can be uniquely identified in the layout. Once an anchor is matched in the first frame, a Kalman‑filter based tracker assigns persistent IDs to each detected module across subsequent frames. Multi‑hypothesis data association resolves ambiguities caused by occlusions, missed detections, or false positives, allowing the system to reconstruct the semantic structure of rows and columns in the image sequence.
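The tracking step can be sketched as a constant‑velocity Kalman filter per module centroid, with IDs (here hypothetical `(row, column)` tuples propagated from an anchor) carried from frame to frame. Note that this sketch deliberately swaps the multi‑hypothesis association described above for a much simpler greedy nearest‑neighbor rule with a distance gate; the class name, noise parameters, and gate value are assumptions chosen for illustration.

```python
import numpy as np

class CentroidKalman:
    """Constant-velocity Kalman filter over the state [u, v, du, dv] in pixels."""

    def __init__(self, xy, pos_var=1.0, vel_var=100.0, q=1e-2, r=1.0):
        self.x = np.array([xy[0], xy[1], 0.0, 0.0], dtype=float)
        self.P = np.diag([pos_var, pos_var, vel_var, vel_var])
        self.F = np.array([[1, 0, 1, 0],
                           [0, 1, 0, 1],
                           [0, 0, 1, 0],
                           [0, 0, 0, 1]], dtype=float)  # one frame per step
        self.H = np.array([[1, 0, 0, 0],
                           [0, 1, 0, 0]], dtype=float)  # only position is measured
        self.Q = q * np.eye(4)
        self.R = r * np.eye(2)

    def predict(self):
        """Propagate the state one frame ahead; returns the predicted centroid."""
        self.x = self.F @ self.x
        self.P = self.F @ self.P @ self.F.T + self.Q
        return self.x[:2]

    def update(self, z):
        """Correct the state with a measured centroid z = (u, v)."""
        innovation = np.asarray(z, dtype=float) - self.H @ self.x
        S = self.H @ self.P @ self.H.T + self.R
        K = self.P @ self.H.T @ np.linalg.inv(S)
        self.x = self.x + K @ innovation
        self.P = (np.eye(4) - K @ self.H) @ self.P

def associate(tracks, detections, gate=20.0):
    """Greedy nearest-neighbor association (a simplification, not multi-hypothesis).

    tracks: dict mapping a module ID to its CentroidKalman.
    detections: (N, 2) array of detected centroids in the current frame.
    Returns a dict mapping module ID -> index of the matched detection.
    """
    assignments, used = {}, set()
    detections = np.asarray(detections, dtype=float).reshape(-1, 2)
    for track_id, kf in tracks.items():
        predicted = kf.predict()
        if len(detections) == 0:
            continue
        dist = np.linalg.norm(detections - predicted, axis=1)
        for j in used:
            dist[j] = np.inf  # each detection may be claimed only once
        j = int(np.argmin(dist))
        if dist[j] <= gate:
            assignments[track_id] = j
            used.add(j)
            kf.update(detections[j])
    return assignments
```

Tracks that receive no detection inside the gate simply coast on their prediction, which is one simple way to bridge short occlusions or missed detections.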

Pose Estimation

With a set of 3‑D (model) – 2‑D (image) correspondences, the pipeline solves a Perspective‑n‑Point (PnP) problem using RANSAC for outlier rejection followed by Levenberg‑Marquardt refinement. The resulting 6‑DoF pose (x, y, z, roll, pitch, yaw) is delivered at ~30 fps, enabling real‑time feedback to the UAV flight controller. The visual pose is fused with GNSS/IMU measurements through an Extended Kalman Filter, providing robustness when visual cues are weak (e.g., strong sun glare) or when GNSS signals degrade.
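Both RANSAC and Levenberg‑Marquardt operate on the same quantity: the reprojection error between model points projected with a candidate pose and the detected image points. The sketch below shows that residual and the inlier test for a standard pinhole camera. It does not solve for the pose itself; the function names, the world‑to‑camera convention `x_cam = R @ X + t`, and the 2‑pixel threshold are assumptions made for illustration.

```python
import numpy as np

def project(points_world, R, t, K):
    """Project (N, 3) world points to (N, 2) pixels with a pinhole model.

    R (3x3) and t (3,) map world coordinates into the camera frame,
    and K (3x3) is the intrinsic matrix. Lens distortion is ignored.
    """
    cam = points_world @ R.T + t   # world frame -> camera frame
    uvw = cam @ K.T                # apply intrinsics
    return uvw[:, :2] / uvw[:, 2:3]

def reprojection_errors(points_world, pixels, R, t, K):
    """Per-correspondence pixel distance between projection and observation."""
    return np.linalg.norm(project(points_world, R, t, K) - pixels, axis=1)

def inlier_mask(points_world, pixels, R, t, K, threshold_px=2.0):
    """Correspondences a RANSAC iteration would count as inliers for this pose."""
    return reprojection_errors(points_world, pixels, R, t, K) <= threshold_px

# Example: a downward-looking camera 10 m above module corners on the z = 0 plane.
K = np.array([[800.0, 0.0, 320.0],
              [0.0, 800.0, 240.0],
              [0.0, 0.0, 1.0]])
R = np.diag([1.0, -1.0, -1.0])     # optical axis points along world -z
t = np.array([0.0, 0.0, 10.0])
corners = np.array([[1.0, 2.0, 0.0], [0.0, 0.0, 0.0],
                    [-1.0, 1.0, 0.0], [2.0, -1.0, 0.0]])
observed = project(corners, R, t, K)
observed[2] += [15.0, 0.0]         # simulate one wrong association
mask = inlier_mask(corners, observed, R, t, K)
```

A RANSAC loop would score each candidate pose by the number of `True` entries in the mask, and the refinement step would then minimize the squared errors over the surviving inliers.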

Impact of Plant Model Precision

The authors evaluate two levels of plant model fidelity: a coarse model with module position errors up to ±5 cm and a high‑precision model with errors ≤ 1 cm. Experiments reveal that when the model’s positional accuracy is better than 2 cm, the average localization error drops below 3 cm, highlighting the importance of high‑resolution plant surveys (e.g., LiDAR or structured‑light scans) for optimal performance.

Experimental Validation

Field tests were conducted on two real PV installations (approximately 2 ha and 3 ha). Using a DJI Matrice 300 equipped with an RGB/thermal dual camera, an IMU, and a GNSS receiver, five flight runs per site were executed. The visual‑localization pipeline achieved an average positional error of 4.2 cm and an angular error of 0.6°, outperforming a GNSS‑only baseline (≈ 15 cm error) by a factor of three to four. Processing times on an RTX 3080 GPU were 28 ms for detection/segmentation and 12 ms for pose estimation, comfortably satisfying real‑time constraints. The system remained stable under varying illumination, solar reflections, and shadowing conditions, and the appendix demonstrates straightforward adaptation to thermal imagery.
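The headline numbers can be sanity‑checked with a few lines of arithmetic. The sketch below uses only the figures quoted above; whether the detection and pose stages run back to back or overlapped is not stated in the summary, so both cases are computed as assumptions.

```python
# Figures quoted in the summary above.
visual_error_cm = 4.2
gnss_error_cm = 15.0
detection_ms = 28.0
pose_ms = 12.0

# Improvement of the visual pipeline over the GNSS-only baseline.
improvement_factor = gnss_error_cm / visual_error_cm

# Frame rate if the two stages run one after the other for every frame.
sequential_fps = 1000.0 / (detection_ms + pose_ms)

# Frame rate if the stages are pipelined, so the slower stage sets the pace
# (an assumption; the summary does not describe the scheduling).
pipelined_fps = 1000.0 / max(detection_ms, pose_ms)

print(f"improvement: {improvement_factor:.2f}x")
print(f"sequential: {sequential_fps:.1f} fps, pipelined: {pipelined_fps:.1f} fps")
```

The ratio of about 3.6 is consistent with the stated factor of three to four, and a rate of roughly 30 fps lies between the sequential and pipelined estimates.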

Contributions and Future Work

Key contributions include: (1) the first direct use of PV module detections for UAV 6‑DoF localization, (2) a robust anchor‑point and tracking framework that reconstructs global plant geometry from local detections, and (3) a thorough comparative study of three segmentation strategies within an end‑to‑end inspection pipeline. The authors suggest extending the approach to multi‑UAV cooperation for large‑scale mapping, integrating thermal‑RGB multimodal fusion for simultaneous defect detection and pose refinement, and generalizing the semantic model to handle non‑rectilinear installations such as curved arrays or solar‑thermal collectors.

Overall, the paper delivers a practical, real‑time solution that bridges the gap between high‑level navigation and fine‑grained visual inspection, paving the way for fully autonomous, high‑precision PV plant maintenance.
