GORAG: Graph-based Online Retrieval Augmented Generation for Dynamic Few-shot Social Media Text Classification

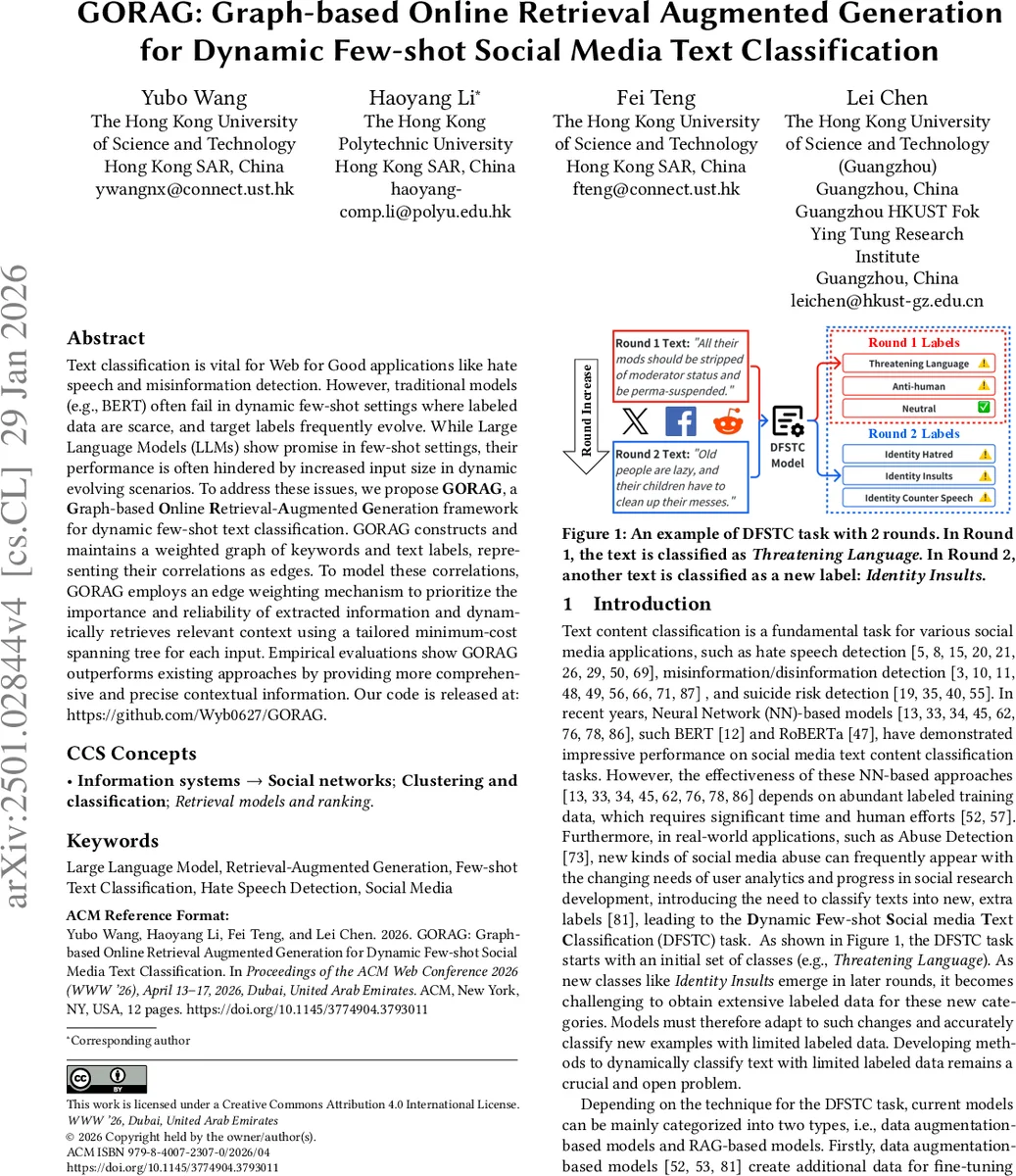

Text classification is vital for Web for Good applications like hate speech and misinformation detection. However, traditional models (e.g., BERT) often fail in dynamic few-shot settings where labeled data are scarce, and target labels frequently evolve. While Large Language Models (LLMs) show promise in few-shot settings, their performance is often hindered by increased input size in dynamic evolving scenarios. To address these issues, we propose GORAG, a Graph-based Online Retrieval-Augmented Generation framework for dynamic few-shot text classification. GORAG constructs and maintains a weighted graph of keywords and text labels, representing their correlations as edges. To model these correlations, GORAG employs an edge weighting mechanism to prioritize the importance and reliability of extracted information and dynamically retrieves relevant context using a tailored minimum-cost spanning tree for each input. Empirical evaluations show GORAG outperforms existing approaches by providing more comprehensive and precise contextual information. Our code is released at: https://github.com/Wyb0627/GORAG.

💡 Research Summary

The paper tackles the challenge of classifying social‑media texts in a dynamic few‑shot setting, where new categories continuously appear and only a handful of labeled examples are available for each class. Traditional fine‑tuned models such as BERT require large annotated corpora and struggle to adapt to evolving label spaces, while large language models (LLMs) excel at few‑shot inference but suffer from hallucinations and token‑budget issues when fed long contextual information. Existing Retrieval‑Augmented Generation (RAG) approaches either augment data indiscriminately, use long‑context retrieval that inflates input size, or employ graph‑based retrieval that treats all graph edges uniformly and relies on a fixed global threshold for relevance. These limitations lead to sub‑optimal performance in dynamic few‑shot social‑media text classification (DFSTC).

To overcome these problems, the authors propose GORAG (Graph‑based Online Retrieval‑Augmented Generation). GORAG consists of three tightly coupled components: (1) Online Graph Indexing, (2) Graph Retrieval, and (3) Classification with Online Graph Updating. In each round, labeled examples are processed to extract salient keywords. Each keyword is connected to its class label via a weighted edge. Edge weights are computed by combining (i) keyword importance measured by TF‑IDF, (ii) semantic similarity between keyword and label embeddings, and (iii) confidence scores from the keyword extraction model. This results in a weighted bipartite graph that captures nuanced keyword‑label relationships and is incrementally merged with graphs from previous rounds, yielding a cumulative graph that evolves as new data arrive.

When a new, unlabeled query text arrives, its keywords are extracted and mapped onto the cumulative graph. GORAG then constructs a Minimum‑Cost Spanning Tree (MST) that spans all query keywords; the MST is obtained via a greedy approximation to the NP‑hard Steiner Tree problem. All label nodes present in this MST form a reduced candidate label set for the query. Because the MST is derived solely from the graph structure and the query’s keywords, the retrieval process is adaptive—no hand‑tuned thresholds are needed, and the retrieved context is tightly focused on the most relevant labels.

The candidate labels and their short descriptions are fed to an LLM (e.g., GPT‑3.5‑Turbo) as a prompt. The LLM scores each candidate, and the label with the highest probability is selected. After classification, any new keywords discovered in the query are added to the graph with appropriately weighted edges, enabling online updating without retraining.

Experiments on two public benchmarks—HateXplain (hate‑speech detection) and a COVID‑19 fake‑news dataset—simulate DFSTC by introducing new labels across multiple rounds. GORAG is compared against (i) fine‑tuned BERT, (ii) data‑augmentation few‑shot methods, (iii) Long‑Context RAG that concatenates retrieved documents, and (iv) GraphRAG with uniform edge weighting. Results show that GORAG consistently outperforms baselines, achieving 4–7 percentage‑point gains in macro‑F1 while reducing the average input token count by roughly 30 %. Moreover, performance remains stable as the label set grows, demonstrating robustness to dynamic label expansion. Ablation studies confirm that both the edge‑weighting scheme and the MST‑based retrieval contribute significantly to the observed improvements.

The authors acknowledge limitations: the approach relies heavily on high‑quality keyword extraction; the graph can become large, potentially increasing MST computation time; and insufficient label descriptions may still cause LLM hallucinations. Future work includes integrating multimodal nodes (images, metadata), exploring graph compression or distributed storage techniques, and refining edge‑weight learning via end‑to‑end training.

In summary, GORAG introduces a novel, adaptive RAG framework that leverages a weighted keyword‑label graph and MST‑based retrieval to provide concise, high‑relevance context to LLMs. This design addresses the uniform‑indexing, non‑adaptive retrieval, and narrow‑source issues of prior methods, delivering superior accuracy and efficiency for dynamic few‑shot social‑media text classification—a critical capability for real‑world “Web for Good” applications.

Comments & Academic Discussion

Loading comments...

Leave a Comment