2DMamba: Efficient State Space Model for Image Representation with Applications on Giga-Pixel Whole Slide Image Classification

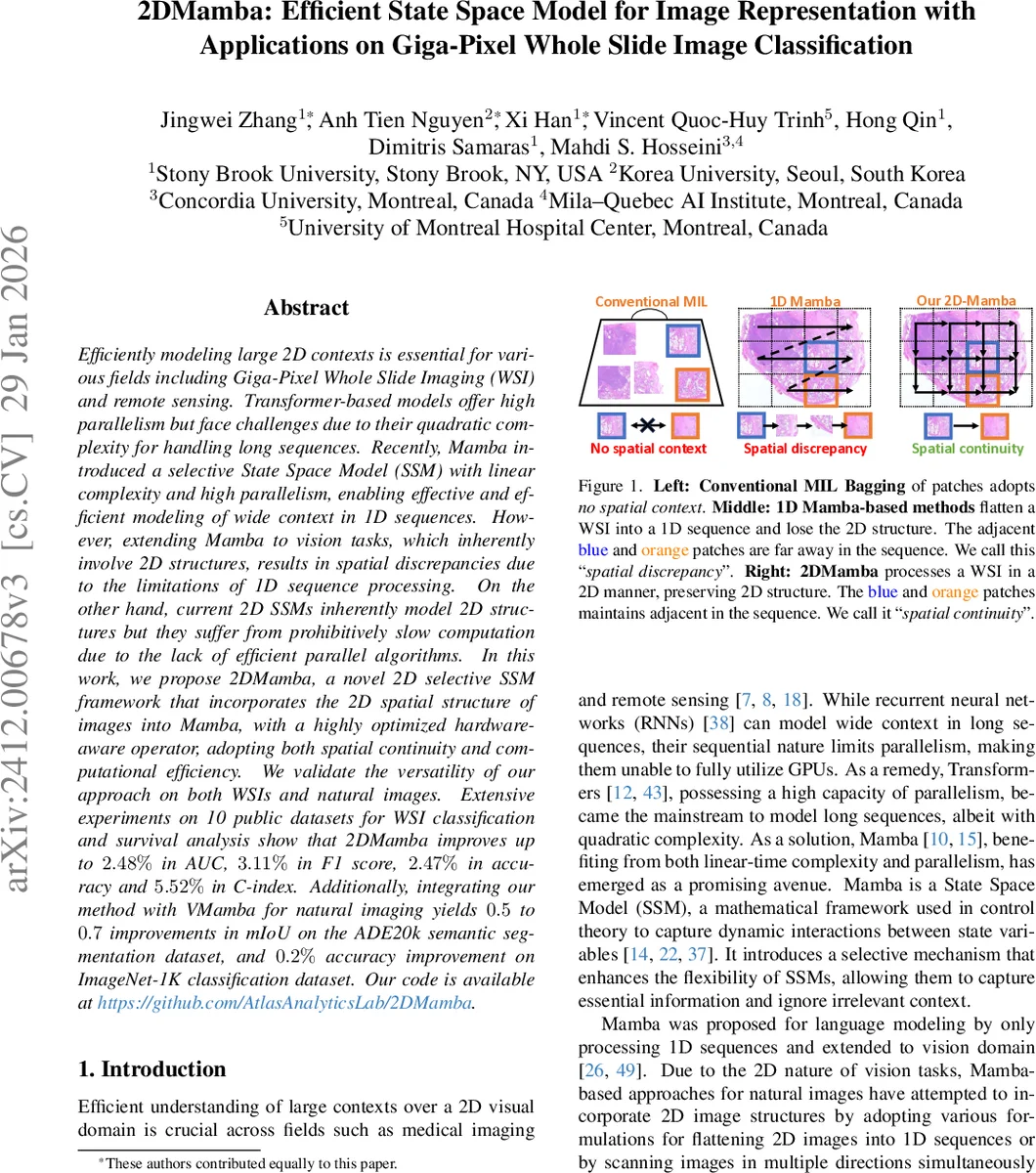

Efficiently modeling large 2D contexts is essential in various fields, including Giga-Pixel Whole Slide Imaging (WSI) and remote sensing. Transformer-based models offer high parallelism but face challenges due to their quadratic complexity when handling long sequences. Recently, Mamba introduced a selective State Space Model (SSM) with linear complexity and high parallelism, enabling effective and efficient modeling of wide context in 1D sequences. However, extending Mamba to vision tasks, which inherently involve 2D structures, results in spatial discrepancies due to the limitations of 1D sequence processing. On the other hand, current 2D SSMs inherently model 2D structures, but they suffer from prohibitively slow computation due to the lack of efficient parallel algorithms. In this work, we propose 2DMamba, a novel 2D selective SSM framework that incorporates the 2D spatial structure of images into Mamba through a highly optimized hardware-aware operator, achieving both spatial continuity and computational efficiency. We validate the versatility of our approach on both WSIs and natural images. Extensive experiments on 10 public datasets for WSI classification and survival analysis show that 2DMamba improves AUC by up to 2.48%, F1 score by up to 3.11%, accuracy by up to 2.47%, and C-index by up to 5.52%. Additionally, integrating our method with VMamba for natural imaging yields mIoU improvements of 0.5 to 0.7 on the ADE20k semantic segmentation dataset and a 0.2% accuracy improvement on the ImageNet-1K classification dataset. Our code is available at https://github.com/AtlasAnalyticsLab/2DMamba.
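The abstract attributes the slowness of earlier 2D SSMs to the lack of efficient parallel algorithms. As general background, not code from the paper: for a single state dimension, a selective scan is a linear recurrence \(h_t = a_t h_{t-1} + u_t\) (with \(u_t = \bar{B}_t x_t\)), and it can be evaluated as a prefix scan because composing the affine maps \(h \mapsto a_t h + u_t\) is associative. The helper names below are hypothetical. Here `itertools.accumulate` applies the operator sequentially; a GPU kernel can regroup the same operator into a logarithmic-depth tree.

```python
from itertools import accumulate


def combine(left, right):
    """Associative operator for h_t = a_t * h_{t-1} + u_t.

    Each element (a, u) stands for the affine map h -> a*h + u. Applying
    `left` first and then `right` gives h -> a_r*(a_l*h + u_l) + u_r.
    """
    a_l, u_l = left
    a_r, u_r = right
    return (a_r * a_l, a_r * u_l + u_r)


def sequential_scan(a, u):
    """Reference loop: one step at a time, starting from h_0 = 0."""
    h, out = 0.0, []
    for a_t, u_t in zip(a, u):
        h = a_t * h + u_t
        out.append(h)
    return out


def prefix_scan(a, u):
    """Same result via an inclusive scan with `combine`.

    Applying each composed map to h_0 = 0 leaves only its offset u.
    """
    return [u_t for _, u_t in accumulate(zip(a, u), combine)]


if __name__ == "__main__":
    a, u = [0.5, 0.5, 0.5], [1.0, 2.0, 3.0]
    print(sequential_scan(a, u))  # [1.0, 2.5, 4.25]
    print(prefix_scan(a, u))      # [1.0, 2.5, 4.25]
```

Associativity is the property that matters: `combine(combine(x, y), z)` equals `combine(x, combine(y, z))`, so independent chunks can be reduced in parallel and merged afterwards.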

💡 Research Summary

The paper introduces 2DMamba, a novel two‑dimensional selective state‑space model (SSM) that extends the Mamba architecture—originally designed for one‑dimensional sequences—to directly process 2D image data while preserving spatial continuity and maintaining linear computational complexity.

Motivation and Problem Statement

Transformers excel at modeling long sequences but suffer from quadratic memory and time costs, which become prohibitive for gigapixel whole‑slide images (WSIs) and high‑resolution remote‑sensing data. Mamba mitigates this issue by employing a selective SSM with linear‑time complexity and high parallelism, yet it operates on flattened 1D sequences. Flattening a 2D image destroys the inherent spatial relationships, leading to what the authors term “spatial discrepancy”: adjacent patches in the image become distant in the sequence, impairing the model’s ability to capture contextual interactions. Existing 2D SSMs retain spatial structure but lack efficient parallel implementations, rendering them impractically slow.
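To make the spatial discrepancy concrete, the plain-Python sketch below compares how far apart two neighboring patches end up after row-major flattening versus on the 2D grid. The function names and the grid width of 200 patches per row are hypothetical. The last helper assumes a constant per-step decay \(a = 0.9\) purely for illustration; real selective SSMs use input-dependent decays.

```python
def raster_index(row: int, col: int, width: int) -> int:
    """Position of patch (row, col) after row-major (raster) flattening."""
    return row * width + col


def sequence_distance(p: tuple, q: tuple, width: int) -> int:
    """Scan steps separating two patches in the flattened 1D sequence."""
    return abs(raster_index(p[0], p[1], width) - raster_index(q[0], q[1], width))


def grid_distance(p: tuple, q: tuple) -> int:
    """Manhattan distance between two patches on the 2D grid."""
    return abs(p[0] - q[0]) + abs(p[1] - q[1])


def decayed_weight(steps: int, a: float = 0.9) -> float:
    """Hypothetical influence left after `steps` scan steps with constant decay a."""
    return a ** steps


if __name__ == "__main__":
    width = 200  # hypothetical: patches per row of a WSI tiling
    pairs = {"right": ((10, 5), (10, 6)), "below": ((10, 5), (11, 5))}
    for name, (p, q) in pairs.items():
        d1, d2 = sequence_distance(p, q, width), grid_distance(p, q)
        print(f"{name}: 1D steps={d1}, 2D steps={d2}, "
              f"1D weight={decayed_weight(d1):.2e}, 2D weight={decayed_weight(d2):.2f}")
```

Horizontally adjacent patches stay one step apart in both views, but vertically adjacent patches sit 200 steps apart in the flattened sequence. Under this toy decay, their interaction weight drops to about \(0.9^{200} \approx 7 \times 10^{-10}\), versus 0.9 when the scan follows the grid.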

Core Contribution – 2D Selective Scan

2DMamba solves both issues by redefining the selective scan as a 2D operation. The algorithm proceeds in two stages for each state dimension \(d\):

- Horizontal Scan – Each row is processed independently with a standard 1D selective scan, producing intermediate hidden states \(h^{\text{hor}}_{i,j}\).

- Vertical Scan – Each column is then scanned using the same selective parameters, taking the horizontal hidden states as inputs and yielding the final hidden state \(h_{i,j}\).
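The two stages can be sketched as a naive reference loop in plain Python. The sketch uses a single state dimension, so every quantity is a scalar per grid position. The names `selective_scan_2d`, `a`, `b` and `c` are hypothetical stand-ins for the discretized parameters \(\bar{A}\), \(\bar{B}\) and the readout \(C\). It also assumes that the vertical stage reuses \(\bar{A}_{i,j}\) at the current position and feeds in the horizontal states without a further \(\bar{B}\) factor. This is a sequential loop for clarity, not the paper's hardware-aware operator. The rows in the first stage, and the columns in the second, are independent of one another, which is what makes each stage parallelizable.

```python
from typing import List

Grid = List[List[float]]


def selective_scan_2d(x: Grid, a: Grid, b: Grid, c: Grid) -> Grid:
    """Naive 2D selective scan for one state dimension (illustrative sketch).

    x: input features; a: discretized decay (A-bar); b: input weight (B-bar);
    c: readout (C). All are indexed [row][col] and may vary per position,
    as in a selective (input-dependent) SSM.
    """
    rows, cols = len(x), len(x[0])

    # Stage 1: horizontal scan, each row independently (a standard 1D scan).
    h_hor = [[0.0] * cols for _ in range(rows)]
    for i in range(rows):
        state = 0.0
        for j in range(cols):
            state = a[i][j] * state + b[i][j] * x[i][j]
            h_hor[i][j] = state

    # Stage 2: vertical scan down each column, fed by the horizontal states.
    h = [[0.0] * cols for _ in range(rows)]
    for j in range(cols):
        state = 0.0
        for i in range(rows):
            state = a[i][j] * state + h_hor[i][j]
            h[i][j] = state

    # Readout: y[i][j] = C[i][j] * h[i][j].
    return [[c[i][j] * h[i][j] for j in range(cols)] for i in range(rows)]


if __name__ == "__main__":
    # Impulse at the top-left corner, constant decay 0.5, unit B and C.
    x = [[1.0, 0.0], [0.0, 0.0]]
    half = [[0.5, 0.5], [0.5, 0.5]]
    ones = [[1.0, 1.0], [1.0, 1.0]]
    print(selective_scan_2d(x, half, ones, ones))  # [[1.0, 0.5], [0.5, 0.25]]
```

The impulse response shows the intended effect. The right and lower neighbors of the source receive the same weight (0.5), and the diagonal neighbor receives \(0.5^2\). Influence therefore falls off with grid distance rather than with position in a flattened sequence.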

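Mathematically, the two stages can be expressed as

\[
h^{\text{hor}}_{i,j} = \bar{A}_{i,j}\, h^{\text{hor}}_{i,j-1} + \bar{B}_{i,j}\, x_{i,j},
\qquad
h_{i,j} = \bar{A}_{i,j}\, h_{i-1,j} + h^{\text{hor}}_{i,j},
\]

where \(x_{i,j}\) is the input feature at grid position \((i,j)\), \(\bar{A}_{i,j}\) and \(\bar{B}_{i,j}\) are the input-dependent discretized SSM parameters for state dimension \(d\), and both scans start from zero states. The output is then read out as \(y_{i,j} = C_{i,j}\, h_{i,j}\).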
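Unrolling the two stages gives an equivalent closed form. Each stage is a first-order recurrence that multiplies the carried state by \(\bar{A}\) at every step, and both start from zero states under the horizontal-then-vertical scheme described above. The derivation below is our own, not an equation quoted from the paper. Every position \((i',j')\) above and to the left of \((i,j)\) contributes through a single path that first moves along row \(i'\) to column \(j\) and then down column \(j\) to row \(i\):

```latex
h_{i,j} = \sum_{i' \le i} \sum_{j' \le j}
  \Bigl( \prod_{k=i'+1}^{i} \bar{A}_{k,j} \Bigr)
  \Bigl( \prod_{l=j'+1}^{j} \bar{A}_{i',l} \Bigr)
  \bar{B}_{i',j'}\, x_{i',j'}
```

With a constant decay \(\bar{A}_{i,j} = a\), the weight reduces to \(a^{(i-i') + (j-j')}\). Influence therefore decays with Manhattan distance on the grid instead of with the distance between the two positions in a row-major flattened sequence.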
