EROAM: Event-based Camera Rotational Odometry and Mapping in Real-time

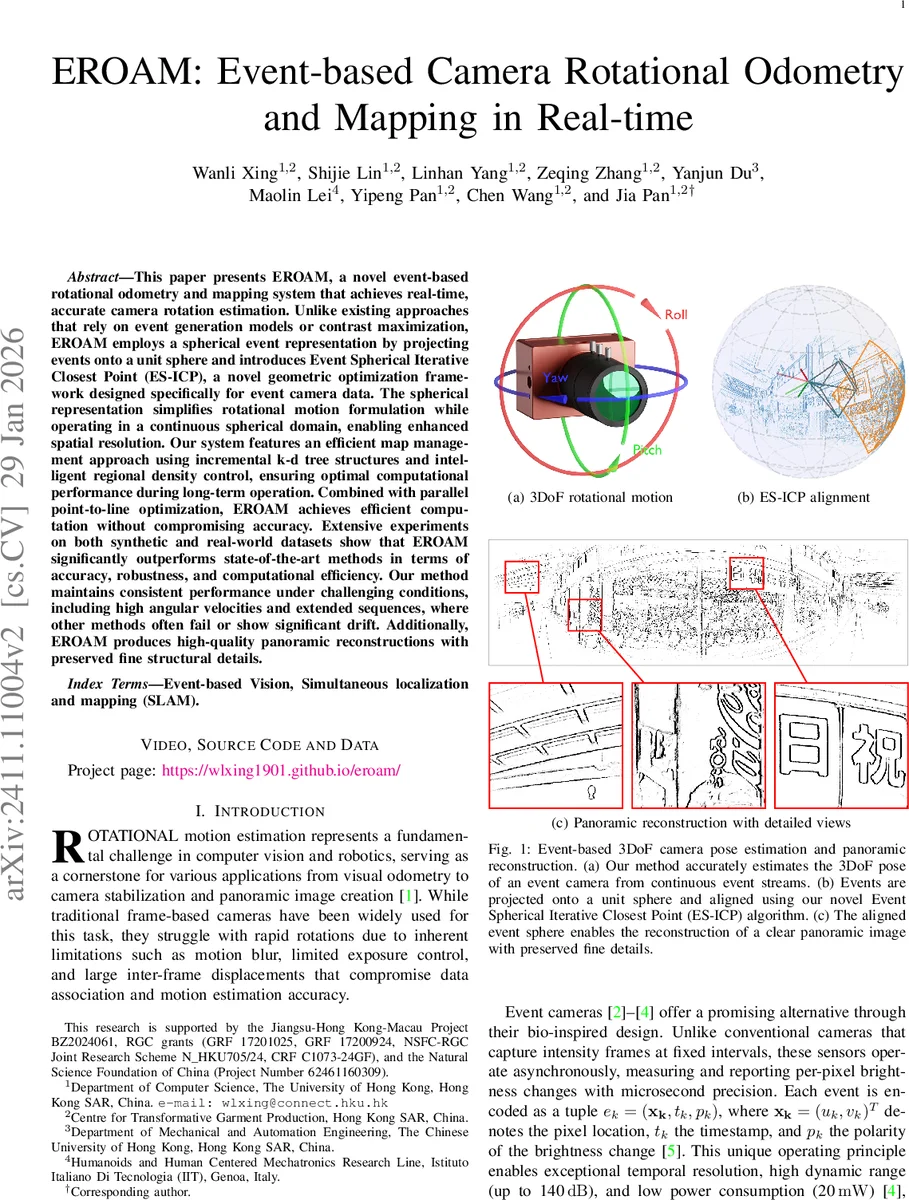

This paper presents EROAM, a novel event-based rotational odometry and mapping system that achieves real-time, accurate camera rotation estimation. Unlike existing approaches that rely on event generation models or contrast maximization, EROAM employs a spherical event representation by projecting events onto a unit sphere and introduces Event Spherical Iterative Closest Point (ES-ICP), a novel geometric optimization framework designed specifically for event camera data. The spherical representation simplifies rotational motion formulation while operating in a continuous spherical domain, enabling enhanced spatial resolution. Our system features an efficient map management approach using incremental k-d tree structures and intelligent regional density control, ensuring optimal computational performance during long-term operation. Combined with parallel point-to-line optimization, EROAM achieves efficient computation without compromising accuracy. Extensive experiments on both synthetic and real-world datasets show that EROAM significantly outperforms state-of-the-art methods in terms of accuracy, robustness, and computational efficiency. Our method maintains consistent performance under challenging conditions, including high angular velocities and extended sequences, where other methods often fail or show significant drift. Additionally, EROAM produces high-quality panoramic reconstructions with preserved fine structural details.

💡 Research Summary

The paper introduces EROAM, a real‑time event‑camera system for 3‑DoF rotational odometry and mapping. Unlike prior Event Generation Model (EGM) or Contrast Maximization (CM) approaches, EROAM projects each asynchronous event onto a unit sphere, yielding a continuous 3‑D spherical representation instead of a discrete pixel grid. This representation directly encodes rotation as a rigid‑body transformation in SO(3), eliminating quantization errors and simplifying the motion model.
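The projection step can be illustrated with a minimal sketch. It assumes an undistorted pinhole camera with hypothetical intrinsics `fx`, `fy`, `cx`, `cy`. The summary does not state the camera model or any function names, so `events_to_sphere` and `rotate` are illustrative only and not the authors' API.

```python
import numpy as np

def events_to_sphere(xy, fx, fy, cx, cy):
    """Back-project event pixel coordinates (N, 2) to unit bearing vectors (N, 3).

    Assumes an ideal pinhole model without lens distortion (an assumption,
    not something stated in the summary).
    """
    xy = np.asarray(xy, dtype=float)
    rays = np.column_stack((
        (xy[:, 0] - cx) / fx,   # normalized image x
        (xy[:, 1] - cy) / fy,   # normalized image y
        np.ones(len(xy)),       # optical axis
    ))
    # Normalizing each ray places the event on the unit sphere.
    return rays / np.linalg.norm(rays, axis=1, keepdims=True)

def rotate(points, R):
    """Apply a rotation matrix R in SO(3) to row-vector points (N, 3)."""
    return np.asarray(points, dtype=float) @ np.asarray(R, dtype=float).T
```

Because the points live on the sphere, a camera rotation acts on them as a single matrix product, with no re-projection or rounding to a pixel grid.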

Building on this representation, the authors propose Event Spherical Iterative Closest Point (ES-ICP), a geometric optimizer that aligns each incoming spherical event "frame" with the map by minimizing point-to-line distances. Each event point is matched to the nearest line in the current spherical map; Jacobians and an approximate (Gauss-Newton) Hessian are computed, and an update in the Lie algebra so(3) refines the rotation estimate. Because the system processes event frames at very high frequency (≈1000 Hz), inter-frame rotations are small, so the previous estimate is a good initial guess and the optimizer is unlikely to settle in a poor local minimum. ES-ICP is parallelized across CPU cores, achieving real-time performance without GPU acceleration.
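A minimal sketch of one Gauss-Newton point-to-line step on so(3) is given below. It is an illustration under stated assumptions, not the authors' implementation: correspondences are taken as given (the nearest-line search in the map is omitted), each line is described by an anchor point and a unit direction, and the update uses a right perturbation `R <- R Exp(delta)`. The names `hat`, `so3_exp`, and `gauss_newton_step` are hypothetical.

```python
import numpy as np

def hat(v):
    """Skew-symmetric matrix such that hat(v) @ x == np.cross(v, x)."""
    return np.array([[0.0, -v[2], v[1]],
                     [v[2], 0.0, -v[0]],
                     [-v[1], v[0], 0.0]])

def so3_exp(w):
    """Rodrigues formula: map a rotation vector in so(3) to a matrix in SO(3)."""
    theta = np.linalg.norm(w)
    K = hat(w)
    if theta < 1e-10:
        return np.eye(3) + K  # first-order approximation for tiny angles
    return (np.eye(3)
            + np.sin(theta) / theta * K
            + (1.0 - np.cos(theta)) / theta**2 * (K @ K))

def gauss_newton_step(R, points, line_anchors, line_dirs):
    """One Gauss-Newton update of R for fixed point-to-line correspondences.

    Residual per pair: r = d x (R p - a), whose norm is the distance from
    the rotated point R p to the line through a with unit direction d.
    """
    H = np.zeros((3, 3))
    b = np.zeros(3)
    for p, a, d in zip(points, line_anchors, line_dirs):
        D = hat(d)
        r = D @ (R @ p - a)
        # d(R Exp(delta) p)/d(delta) at delta = 0 is -R hat(p).
        J = -D @ R @ hat(p)
        H += J.T @ J      # Gauss-Newton approximation of the Hessian
        b += J.T @ r
    delta = -np.linalg.solve(H, b)
    return R @ so3_exp(delta)
```

In a full ICP loop, the correspondences would be recomputed between steps. The per-pair accumulation of `H` and `b` is independent across pairs, which is what makes this kind of step straightforward to parallelize.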

Map management is handled by an incremental k-d tree that stores spherical points. The tree supports fast nearest-neighbor queries for ES-ICP and is updated only when a key-frame is triggered, i.e., when the rotation accumulated since the last key-frame exceeds a threshold. A regional density control mechanism prevents over-population of any spherical patch, keeping the tree balanced and limiting memory growth. Since tracking and mapping share the same continuous spherical map, panoramic images can be rendered afterwards at arbitrary resolution by projecting the spherical map onto a 2-D equirectangular canvas.
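The three ideas in this paragraph (key-frame trigger, regional density cap, equirectangular rendering) can be sketched as follows. This is a simplified illustration: the threshold, the longitude/latitude cell grid, and the per-cell cap are assumptions, and a flat array stands in for the incremental k-d tree. The summary does not describe how the paper's density control is actually implemented.

```python
import numpy as np

def rotation_angle(R_a, R_b):
    """Geodesic angle in radians between two rotation matrices."""
    cos_theta = (np.trace(R_a.T @ R_b) - 1.0) / 2.0
    return float(np.arccos(np.clip(cos_theta, -1.0, 1.0)))

def is_keyframe(R_last_keyframe, R_current, threshold_rad):
    """Trigger a key-frame once the rotation since the last one is large enough."""
    return rotation_angle(R_last_keyframe, R_current) > threshold_rad

def grid_indices(points, width, height):
    """Map unit vectors (N, 3) to integer (u, v) cells of an equirectangular grid."""
    lon = np.arctan2(points[:, 0], points[:, 2])            # [-pi, pi]
    lat = np.arcsin(np.clip(points[:, 1], -1.0, 1.0))       # [-pi/2, pi/2]
    u = np.floor((lon / (2.0 * np.pi) + 0.5) * width).astype(int)
    v = np.floor((lat / np.pi + 0.5) * height).astype(int)
    return np.clip(u, 0, width - 1), np.clip(v, 0, height - 1)

def cap_density(points, cells_lon, cells_lat, max_per_cell):
    """Keep at most max_per_cell points in each lon/lat cell (hypothetical rule).

    Note: lon/lat cells are not equal-area; cells shrink toward the poles.
    """
    u, v = grid_indices(points, cells_lon, cells_lat)
    cell_id = v * cells_lon + u
    keep = np.zeros(len(points), dtype=bool)
    counts = {}
    for i, c in enumerate(cell_id.tolist()):
        n = counts.get(c, 0)
        if n < max_per_cell:
            keep[i] = True
            counts[c] = n + 1
    return points[keep]

def render_panorama(points, width, height):
    """Accumulate map points into an equirectangular count image (height, width)."""
    u, v = grid_indices(points, width, height)
    image = np.zeros((height, width), dtype=np.int64)
    np.add.at(image, (v, u), 1)  # handles repeated pixel indices correctly
    return image
```

Since `render_panorama` takes `width` and `height` as arguments, the same stored points can be rendered at any output resolution, which mirrors the resolution-independence claimed for the continuous map.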

Extensive experiments validate the approach. On synthetic datasets with varying angular velocities and noise levels, EROAM achieves a root‑mean‑square rotation error of 0.32°, outperforming the best CM‑based method (CM‑GAE, 0.45°) and the leading EGM‑based method (SMT) by more than 30 %. Processing speed remains above 1 kHz on a standard CPU, and memory usage stays bounded thanks to the key‑frame strategy. Real‑world tests with a Gen‑4 event camera under rapid rotations (>500 °/s) and long‑duration sequences (10 minutes) confirm these trends: RMS error stays below 0.5°, and map size grows only modestly, yielding a 40 % reduction in memory compared with a naïve dense map. The resulting panoramas preserve fine structural details, demonstrating the utility of the continuous spherical map for downstream tasks such as 3‑D reconstruction.
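The summary does not say how the rotation error is computed. A common definition, given here only as an assumption, is the geodesic angle between the estimated rotation $\hat{R}_i$ and the ground-truth rotation $R_i$ at each of $N$ evaluation timestamps, aggregated as a root mean square:

$$
e_i = \arccos\!\left(\frac{\operatorname{tr}\!\left(\hat{R}_i^{\top} R_i\right) - 1}{2}\right),
\qquad
\mathrm{RMSE} = \sqrt{\frac{1}{N}\sum_{i=1}^{N} e_i^{2}}
$$

Under this definition, $e_i$ is zero when the two rotations coincide and is independent of the choice of reference frame.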

In summary, EROAM pairs a continuous spherical event representation with a tailored ICP optimizer to address the limitations of existing EGM and CM methods. It delivers superior accuracy, robustness to high angular velocities, and real-time operation while keeping computational and memory footprints low. The authors release their code and datasets to foster further research in event-based vision.
