A Pre-trained EEG-to-MEG Generative Framework for Enhancing BCI Decoding

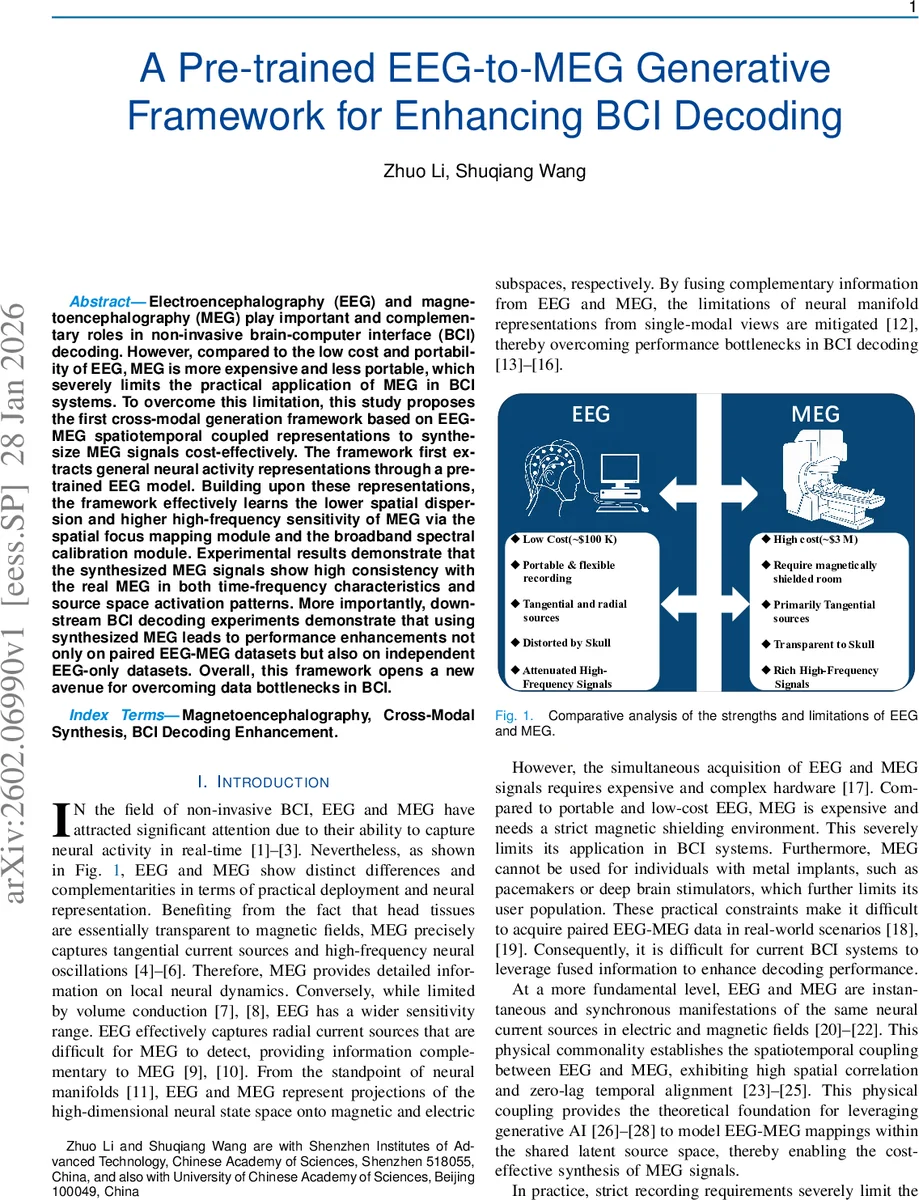

Electroencephalography (EEG) and magnetoencephalography (MEG) play important and complementary roles in non-invasive brain-computer interface (BCI) decoding. However, compared to the low cost and portability of EEG, MEG is more expensive and less portable, which severely limits the practical application of MEG in BCI systems. To overcome this limitation, this study proposes the first cross-modal generation framework based on EEG-MEG spatiotemporal coupled representations to synthesize MEG signals cost-effectively. The framework first extracts general neural activity representations through a pre-trained EEG model. Building upon these representations, the framework effectively learns the lower spatial dispersion and higher high-frequency sensitivity of MEG via the spatial focus mapping module and the broadband spectral calibration module. Experimental results demonstrate that the synthesized MEG signals show high consistency with the real MEG in both time-frequency characteristics and source space activation patterns. More importantly, downstream BCI decoding experiments demonstrate that using synthesized MEG leads to performance enhancements not only on paired EEG-MEG datasets but also on independent EEG-only datasets. Overall, this framework opens a new avenue for overcoming data bottlenecks in BCI.

💡 Research Summary

The paper introduces a novel cross‑modal generative framework that synthesizes magnetoencephalography (MEG) signals from electroencephalography (EEG) recordings, aiming to boost brain‑computer interface (BCI) decoding performance while avoiding the high cost and logistical constraints of MEG acquisition. The authors begin by emphasizing the complementary nature of EEG and MEG: EEG is inexpensive, portable, and sensitive to radial sources but suffers from spatial blurring and high‑frequency attenuation; MEG, in contrast, offers precise localization of tangential sources and rich broadband dynamics but requires expensive hardware and magnetically shielded rooms. Because both modalities capture the same underlying neural currents, they are tightly coupled in space and time, providing a theoretical basis for learning a mapping between them.

To overcome the scarcity of paired EEG‑MEG data, the framework leverages a large‑scale, self‑supervised EEG foundation model (LaBraM) as a frozen encoder. EEG recordings are first reshaped into channel‑wise univariate sequences, allowing the pre‑trained transformer to extract fine‑grained temporal features without premature spatial mixing. After tokenization and deep temporal encoding, the channel dimension is restored, yielding a representation H_enc that encodes general neural activity.

Two specialized modules then adapt this representation to the characteristics of MEG. The Spatial Focus Mapping module applies self‑attention across channels to suppress the diffuse background inherent to EEG and to concentrate the features into a spatial distribution that mirrors MEG’s lower spatial dispersion. Temporal attention follows, ensuring proper phase alignment across the whole time window. Finally, a vector‑quantization (VQ) codebook discretizes the fused features into a set of MEG‑specific prototypes, enforcing strict alignment with the MEG latent manifold.

The latent, quantized features serve as conditioning input to a conditional diffusion generator. In the forward diffusion process, Gaussian noise is gradually added to the true MEG latent code; the reverse process trains a denoising network to predict the added noise given the noisy state, the diffusion timestep, and the EEG‑derived condition. This probabilistic approach captures complex, non‑linear cross‑modal dependencies while mitigating over‑fitting on limited paired data.

Because MEG retains high‑frequency power that EEG typically attenuates, a Broadband Spectral Calibration module is added. A learnable complex frequency‑domain filter modulates the synthesized signal’s spectrum, while a multi‑band loss enforces waveform consistency in the canonical Delta, Theta, Alpha, Beta, and Gamma bands. Together with an L1 reconstruction loss, VQ loss, and diffusion loss, the total objective jointly optimizes temporal fidelity, spectral realism, latent distribution matching, and discrete alignment.

The framework is evaluated on two publicly available simultaneous EEG‑MEG datasets: a somatomotor task (median‑nerve stimulation) and a visual recognition task (faces). Quantitative analyses show that synthesized MEG closely matches real MEG in time‑frequency power spectra and source‑space activation patterns, especially preserving high‑frequency components. In downstream BCI decoding experiments (motor intention classification, visual category discrimination), augmenting the original EEG with synthesized MEG yields statistically significant improvements in accuracy and F1 scores. Importantly, the same performance boost is observed on independent EEG‑only datasets, demonstrating that the generated MEG can act as a virtual sensor complement even when no real MEG is available.

In summary, the contributions are threefold: (1) a paradigm that uses a pre‑trained EEG foundation model to generate MEG, expanding the observable neural manifold without additional hardware; (2) a spatial focus mapping module that bridges the divergent spatial projections of EEG and MEG via attention and vector quantization; (3) a broadband spectral calibration module that restores MEG’s characteristic high‑frequency content. The work opens a practical pathway to mitigate MEG’s data bottleneck, enabling cost‑effective, high‑fidelity multimodal BCI systems and suggesting future extensions to other modalities and real‑time deployment.

Comments & Academic Discussion

Loading comments...

Leave a Comment