Multi-task Code LLMs: Data Mix or Model Merge?

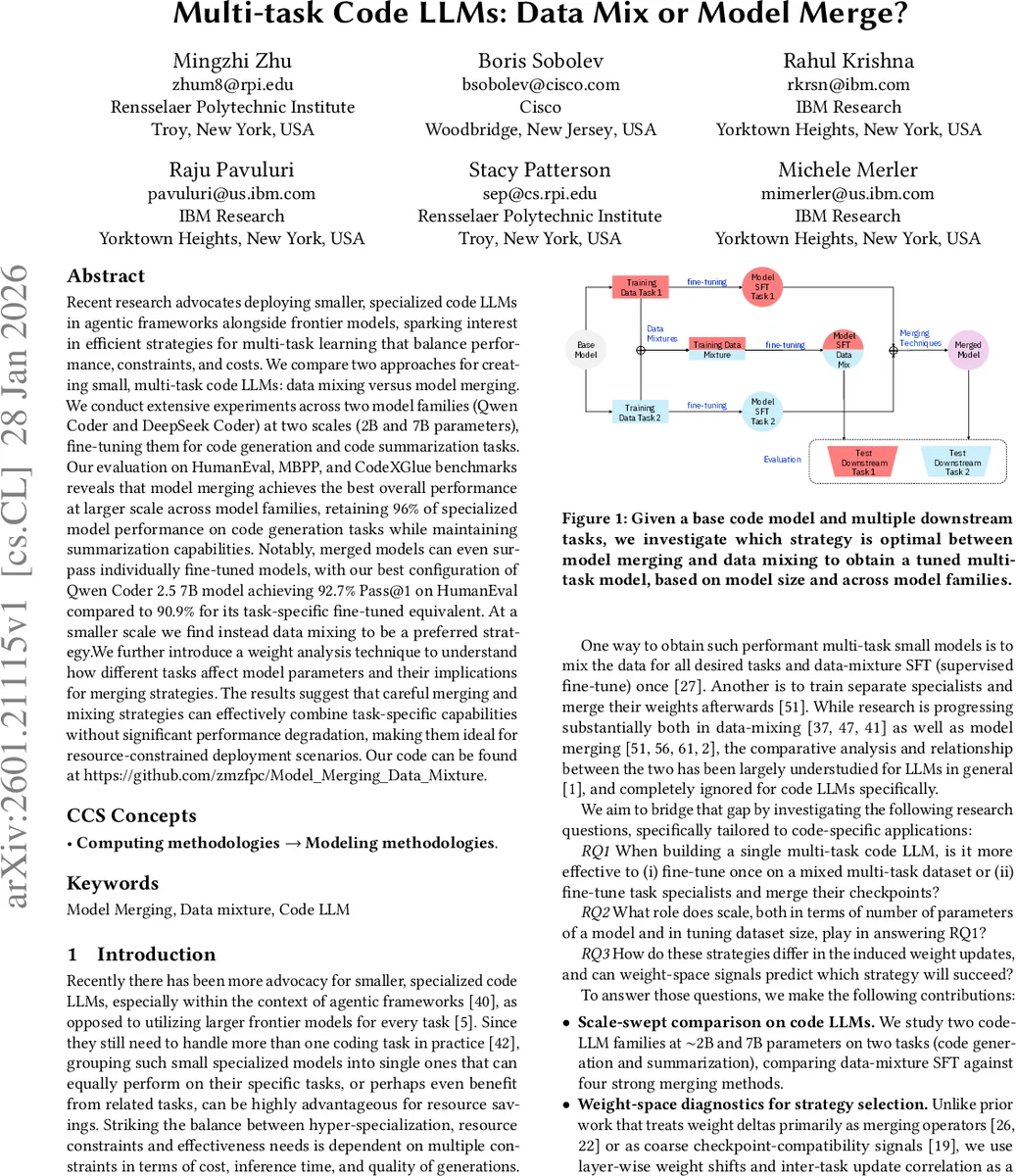

Recent research advocates deploying smaller, specialized code LLMs in agentic frameworks alongside frontier models, sparking interest in efficient strategies for multi-task learning that balance performance, constraints, and costs. We compare two approaches for creating small, multi-task code LLMs: data mixing versus model merging. We conduct extensive experiments across two model families (Qwen Coder and DeepSeek Coder) at two scales (2B and 7B parameters), fine-tuning them for code generation and code summarization tasks. Our evaluation on HumanEval, MBPP, and CodeXGlue benchmarks reveals that model merging achieves the best overall performance at larger scale across model families, retaining 96% of specialized model performance on code generation tasks while maintaining summarization capabilities. Notably, merged models can even surpass individually fine-tuned models, with our best configuration of Qwen Coder 2.5 7B model achieving 92.7% Pass@1 on HumanEval compared to 90.9% for its task-specific fine-tuned equivalent. At a smaller scale we find instead data mixing to be a preferred strategy. We further introduce a weight analysis technique to understand how different tasks affect model parameters and their implications for merging strategies. The results suggest that careful merging and mixing strategies can effectively combine task-specific capabilities without significant performance degradation, making them ideal for resource-constrained deployment scenarios.

💡 Research Summary

The paper investigates two competing strategies for building small, multi‑task code language models (LLMs) that can handle both code generation and code summarization: (1) data mixing, where the training data for all tasks are combined and the model is fine‑tuned once, and (2) model merging, where separate task‑specific fine‑tuned checkpoints are merged after training. The authors conduct a systematic, scale‑swept study using two open‑source code‑focused model families—Qwen 2.5‑Coder and DeepSeek‑Coder—each at approximately 2 B and 7 B parameters. For code generation they use the KodCode dataset (268 K problems) and evaluate on HumanEval, HumanEval+, MBPP, and MBPP+ with the Pass@1 metric. For summarization they use the CodeXGLUE code‑to‑text dataset (417 K pairs) and evaluate with BLEU‑4, chrF++, ROUGE‑L, and METEOR.

Training hyper‑parameters are kept constant across experiments (learning rates 1e‑5 for 2 B, 5e‑6 for 7 B; effective batch sizes 128/64; 2 epochs). In the data‑mix approach the two datasets are simply concatenated and shuffled before a single supervised fine‑tuning (SFT) run. In the model‑merge pipeline, each task is fine‑tuned separately, then four state‑of‑the‑art merging methods are applied via the MergeKit toolkit: Linear averaging, TIES (Trim‑Elect‑Sign), DARE (Drop‑and‑Rescale), and DELLA (Deep Linear Learning Alignment).

Results show a clear scale‑dependent trend. At the 7 B scale, merging consistently outperforms data mixing across both model families. The best configuration—DELLA‑merged Qwen 2.5‑Coder 7 B—achieves 92.7 % Pass@1 on HumanEval, surpassing the task‑specific fine‑tuned baseline (90.9 %). Across all merging methods, performance loss relative to the single‑task checkpoints is typically under 4 %, and in many cases the merged model retains >96 % of the specialized performance while also supporting summarization. Conversely, at the 2 B scale, the data‑mix SFT yields higher Pass@1 and summarization scores than any merged model, indicating that limited parameter budgets benefit more from joint training than from post‑hoc combination.

To understand why the strategies behave differently, the authors perform a weight‑space analysis. They compute layer‑wise L2 distances between each fine‑tuned or merged model and its base checkpoint. Data‑mix SFT exhibits the largest shifts (≈0.7–1.3 across layers), reflecting the need to accommodate heterogeneous training signals simultaneously. Individual task‑specific SFTs show moderate shifts, while merging methods produce more conservative changes (≈0.3–0.8). Among merging techniques, DARE and DELLA are the most restrained, dropping minor updates and rescaling the rest, which keeps most layers close to the base weights. TIES retains larger updates where both tasks agree and prunes conflicting ones. Interestingly, DELLA’s L2 profile sometimes approaches that of data mixing, suggesting that its alignment process can induce broader changes when the base model is less capable. The analysis also reveals that DeepSeek models undergo larger weight shifts than Qwen models at the same scale, likely because their pre‑training performance on the downstream tasks is lower, requiring more aggressive adaptation.

The paper contributes three main points: (1) a comprehensive empirical comparison of data mixing versus four modern merging algorithms across two model families and two scales; (2) a novel diagnostic based on layer‑wise weight shifts and inter‑task update correlation that can predict which strategy will succeed for a given model size and data budget; and (3) practical guidelines for practitioners: use data mixing for ≤2 B parameter models, and adopt model merging—preferably DARE or DELLA—for ≥7 B models to achieve multi‑task capability with minimal performance degradation. These findings are directly relevant to the design of resource‑constrained coding agents, on‑device code assistants, and any deployment scenario where small LLMs must support multiple coding tasks without incurring the cost of training large monolithic models.

Comments & Academic Discussion

Loading comments...

Leave a Comment