Deep Researcher with Sequential Plan Reflection and Candidates Crossover (Deep Researcher Reflect Evolve)

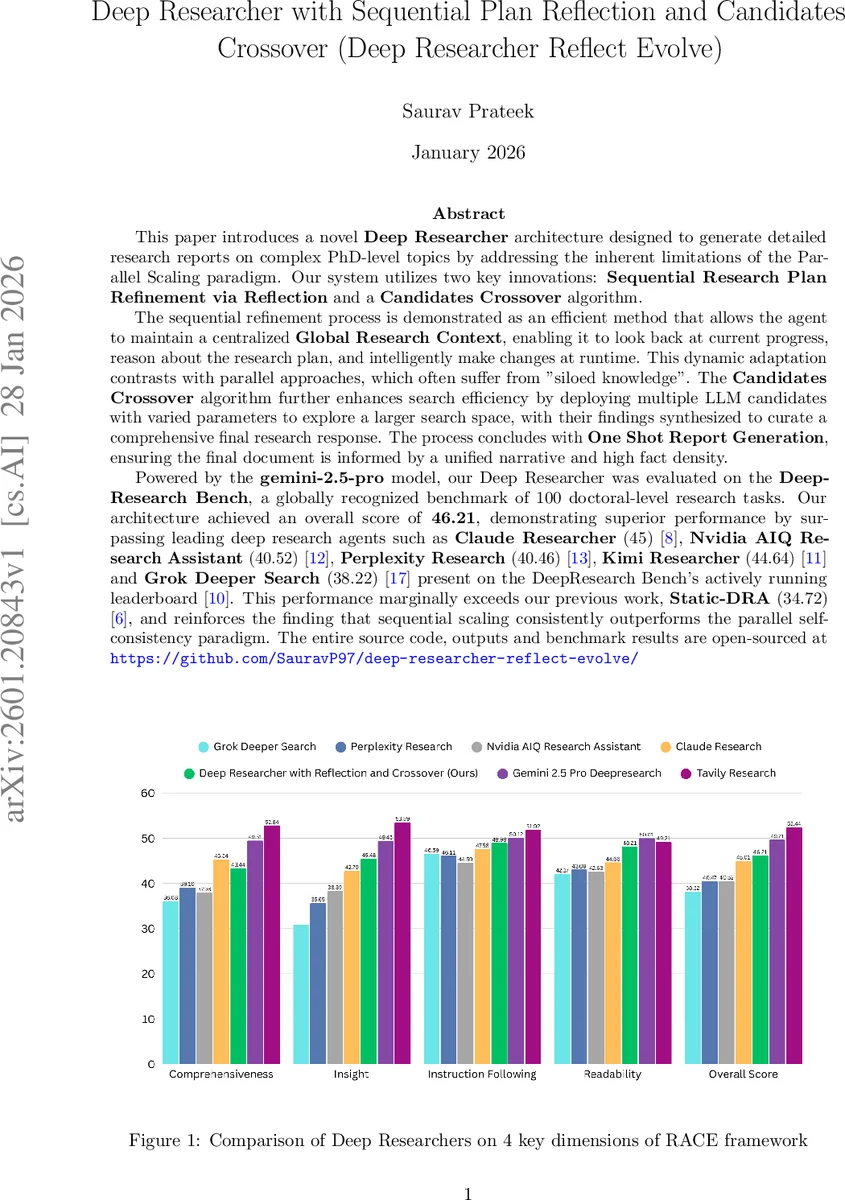

This paper introduces a novel Deep Researcher architecture designed to generate detailed research reports on complex PhD-level topics by addressing the inherent limitations of the Parallel Scaling paradigm. Our system relies on two key innovations: Sequential Research Plan Refinement via Reflection and a Candidates Crossover algorithm. Sequential refinement lets the agent maintain a centralized Global Research Context, so it can look back at current progress, reason about the research plan, and change that plan at runtime. This dynamic adaptation contrasts with parallel approaches, which often suffer from siloed knowledge. The Candidates Crossover algorithm further improves search efficiency by deploying multiple LLM candidates with varied parameters to explore a larger search space, then synthesizing their findings into a comprehensive final research response. The process concludes with One-Shot Report Generation, which gives the final document a unified narrative and high fact density. Powered by the Gemini 2.5 Pro model, our Deep Researcher was evaluated on the DeepResearch Bench, a globally recognized benchmark of 100 doctoral-level research tasks. Our architecture achieved an overall score of 46.21, surpassing leading deep research agents such as Claude Researcher, Nvidia AIQ Research Assistant, Perplexity Research, Kimi Researcher and Grok Deeper Search on the live DeepResearch Bench leaderboard. This performance marginally exceeds our previous work, Static DRA, and reinforces the finding that sequential scaling consistently outperforms the parallel self-consistency paradigm.

💡 Research Summary

The paper presents “Deep Researcher Reflect Evolve,” a novel architecture for autonomous, PhD‑level research powered by Gemini 2.5 Pro. The authors identify a fundamental weakness of the prevailing Parallel Scaling paradigm: when a research topic is decomposed into many sub‑topics and processed concurrently, each sub‑agent operates in isolation, leading to siloed knowledge, redundant queries, and an inability to adapt the research plan in real time. To overcome this, the system introduces two complementary mechanisms: (1) Sequential Research Plan Refinement via Reflection, and (2) a Candidates Crossover algorithm.

Sequential Research Plan Refinement maintains a centralized Global Research Context that records every query, answer, and raw artifact gathered throughout the investigation. After each search cycle, a Planning Agent “looks back” at this context, evaluates progress, and decides whether to modify the plan, add new sub‑questions, or terminate the loop once a predefined progress threshold (90 %) is reached. This dynamic loop enables the agent to pivot its strategy based on newly discovered evidence, avoiding the static, pre‑defined pipelines typical of parallel systems.
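The reflection loop described above can be sketched in a few lines. This is a minimal illustration, not the authors' implementation: `search`, `judge`, and `reflect` are hypothetical callables standing in for the Search Agent, the LLM-as-Judge, and the Planning Agent, and the `max_steps` guard is an added assumption. Only the 90 % threshold and the idea of a shared context come from the summary.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Tuple

PROGRESS_THRESHOLD = 0.90  # termination threshold quoted in the summary


@dataclass
class GlobalResearchContext:
    """Centralized record of every (query, answer) pair gathered so far."""
    entries: List[Tuple[str, str]] = field(default_factory=list)

    def add(self, query: str, answer: str) -> None:
        self.entries.append((query, answer))


def run_research_loop(
    plan: List[str],
    search: Callable[[str], str],                      # hypothetical Search Agent
    judge: Callable[[GlobalResearchContext], float],   # hypothetical progress estimate in [0, 1]
    reflect: Callable[[List[str], GlobalResearchContext], List[str]],  # hypothetical Planning Agent
    max_steps: int = 20,                               # assumed safety cap, not from the paper
) -> GlobalResearchContext:
    context = GlobalResearchContext()
    plan = list(plan)  # work on a copy so the caller's plan is untouched
    steps = 0
    while plan and steps < max_steps:
        query = plan.pop(0)
        context.add(query, search(query))  # one search cycle
        steps += 1
        if judge(context) >= PROGRESS_THRESHOLD:
            break  # enough progress: stop and hand over to report writing
        # "Look back" at everything gathered so far and revise the remaining plan.
        plan = reflect(plan, context)
    return context
```

Because every step reads and writes the same context object, a later query can build on an earlier answer, which is the property the summary contrasts with isolated parallel sub-agents.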

The Candidates Crossover algorithm addresses the breadth of the search space. For each query, the system spawns multiple LLM candidates (n = 3 in the experiments) with diverse temperature and top‑k settings. Each candidate receives the same query plus filtered web results from the Tavily search tool (only results with relevance scores above 30 %). The candidates generate concise, fact‑rich answers, which are then merged in a crossover step that preserves the most reliable facts, numbers, and citations while discarding inconsistencies. Unlike self‑evolution pipelines that include iterative feedback and revision, the authors deliberately omit those stages to keep latency low; instead, plan‑level reflection compensates for any quality loss.
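A rough sketch of the candidate step follows. Several parts are assumptions made for illustration: the result dictionaries with `content` and `score` keys, the specific temperature and top-k values, the hypothetical `generate` callable that returns a list of fact strings, and above all the merge rule. The summary describes an LLM-driven crossover; the agreement count used here (keep a fact only if at least two candidates state it) is a simple stand-in, not the paper's method.

```python
from collections import Counter
from typing import Callable, Dict, List

RELEVANCE_CUTOFF = 0.30  # keep only results scored above 30 %, as in the summary

# Three candidates, as in the experiments; the parameter values are illustrative.
CANDIDATE_CONFIGS = [
    {"temperature": 0.2, "top_k": 20},
    {"temperature": 0.7, "top_k": 40},
    {"temperature": 1.0, "top_k": 64},
]


def filter_results(results: List[Dict]) -> List[str]:
    """Drop search results at or below the relevance cutoff."""
    return [r["content"] for r in results if r["score"] > RELEVANCE_CUTOFF]


def crossover(candidate_facts: List[List[str]], min_support: int = 2) -> List[str]:
    """Stand-in merge: keep facts stated by at least `min_support` candidates."""
    support = Counter()
    for facts in candidate_facts:
        support.update(set(facts))  # count each candidate at most once per fact
    merged: List[str] = []
    for facts in candidate_facts:
        for fact in facts:
            if support[fact] >= min_support and fact not in merged:
                merged.append(fact)  # preserve first-seen order
    return merged


def answer_query(
    query: str,
    results: List[Dict],
    generate: Callable[[str, List[str], Dict], List[str]],  # hypothetical LLM call
) -> List[str]:
    sources = filter_results(results)
    candidate_facts = [generate(query, sources, cfg) for cfg in CANDIDATE_CONFIGS]
    return crossover(candidate_facts)
```

Every candidate sees the same query and the same filtered sources, so the only source of diversity is the sampling configuration, which matches the setup described above.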

The overall pipeline consists of four modules: (i) Planning Agent (initial plan creation and ongoing refinement), (ii) Search Agent (query generation, web retrieval, candidate crossover), (iii) LLM‑as‑Judge (estimates research progress and triggers termination), and (iv) Report Writer (One‑Shot generation of the final research report). The One‑Shot approach contrasts with Google’s Test‑Time Diffusion (TTD‑DR) which performs multi‑step “report‑level denoising.” By feeding the Report Writer the entire Global Research Context and the final refined plan in a single inference pass, the system achieves high narrative coherence, dense factual content, and consistent citation style.
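To make the One-Shot step concrete, the sketch below assembles a single prompt from the final plan and the full research context, so the report can be written in one inference pass. The function name `build_report_prompt`, the prompt wording, and the `(query, answer)` tuple layout are all hypothetical; the paper's actual prompt is not given in this summary.

```python
from typing import List, Tuple


def build_report_prompt(
    topic: str,
    final_plan: List[str],
    context: List[Tuple[str, str]],  # assumed layout: (query, answer) pairs
) -> str:
    """Pack the refined plan and all collected findings into one prompt."""
    plan_block = "\n".join(f"{i}. {step}" for i, step in enumerate(final_plan, 1))
    evidence_block = "\n\n".join(f"Q: {q}\nA: {a}" for q, a in context)
    return (
        f"Write a cited research report on: {topic}\n\n"
        f"Final research plan:\n{plan_block}\n\n"
        f"Collected findings:\n{evidence_block}\n"
    )
```

The design choice this illustrates is that the writer sees everything at once, rather than revising a draft over several passes.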

Evaluation is performed on the DeepResearch Bench, a benchmark of 100 doctoral‑level tasks spanning 22 academic fields in English and Chinese. Performance is measured using two frameworks: RACE (Reference‑based Adaptive Criteria‑driven Evaluation) for qualitative assessment, and FACT (Factual Abundance and Citation Trustworthiness) for factual accuracy and citation quality. Deep Researcher Reflect Evolve scores 46.21 points, surpassing Claude Researcher (45), Kimi Researcher (44.64), Nvidia AIQ Research Assistant (40.52), Perplexity Research (40.46), and Grok Deeper Search (38.22). It also outperforms the authors’ prior Static‑DRA (34.72) by over 30 %. The results align with findings from “The Sequential Edge” (Chopra 2025), which reported that sequential scaling beats parallel self‑consistency in 95.6 % of configurations, delivering up to 46.7 % accuracy gains.
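The comparison can be checked with a few lines of arithmetic. The scores below are copied as quoted in this summary; the variable and function names are illustrative only.

```python
# Overall scores as quoted in the summary above.
SCORES = {
    "Deep Researcher Reflect Evolve": 46.21,
    "Claude Researcher": 45.0,
    "Kimi Researcher": 44.64,
    "Nvidia AIQ Research Assistant": 40.52,
    "Perplexity Research": 40.46,
    "Grok Deeper Search": 38.22,
    "Static-DRA": 34.72,
}

OURS = SCORES["Deep Researcher Reflect Evolve"]


def relative_gain(new: float, old: float) -> float:
    """Relative improvement of `new` over `old`, as a fraction."""
    return (new - old) / old


gain_over_static = relative_gain(OURS, SCORES["Static-DRA"])
margin_over_claude = OURS - SCORES["Claude Researcher"]

print(f"Gain over Static-DRA: {gain_over_static:.1%}")          # about 33.1 %
print(f"Margin over Claude Researcher: {margin_over_claude:.2f} points")
```

With these figures, the gain over Static‑DRA is (46.21 − 34.72) / 34.72 ≈ 0.331, consistent with the "over 30 %" stated above, while the lead over the next-best listed agent is 1.21 points.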

The paper acknowledges limitations: the optimal number of candidates and parameter configurations are chosen empirically; the omitted environmental feedback loop may reduce fine‑grained correction for particularly ambiguous queries; and the current system is limited to textual web retrieval, lacking multimodal (image, table) integration. Future work is suggested to explore adaptive candidate selection, meta‑reward‑driven feedback, and multimodal search to further boost both quality and efficiency.

In summary, the work demonstrates that a tightly coupled loop of sequential plan reflection and diversified candidate crossover can maintain a global, evolving knowledge base, dynamically steer research direction, and produce high‑quality, citation‑rich reports in a single generation step. This represents a significant step forward for autonomous deep research agents, positioning sequential scaling as a more effective paradigm than traditional parallel self‑consistency for complex, interdisciplinary investigations.
