Learning From a Steady Hand: A Weakly Supervised Agent for Robot Assistance under Microscopy

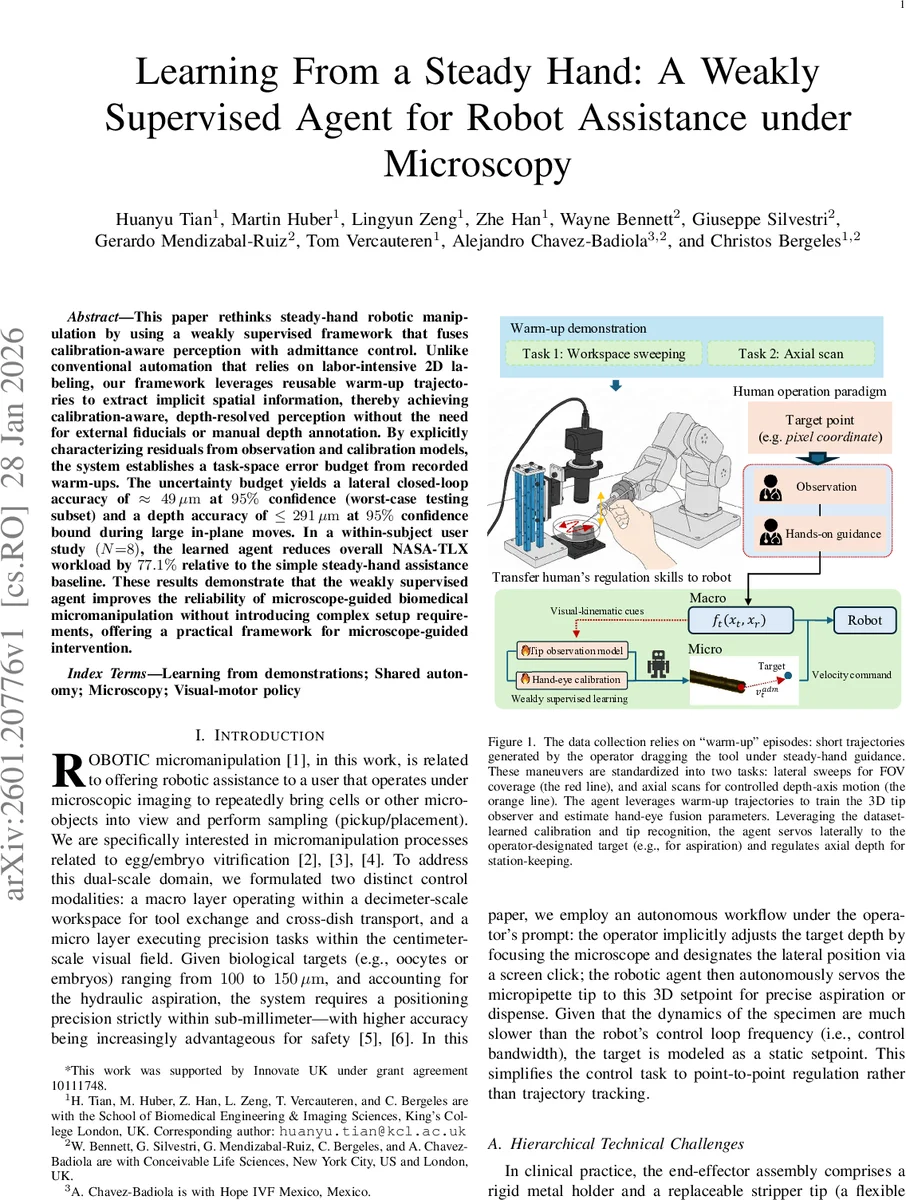

This paper rethinks steady-hand robotic manipulation with a weakly supervised framework that fuses calibration-aware perception with admittance control. Unlike conventional automation that relies on labor-intensive 2D labeling, our framework leverages reusable warm-up trajectories to extract implicit spatial information, achieving calibration-aware, depth-resolved perception without external fiducials or manual depth annotation. By explicitly characterizing residuals from the observation and calibration models, the system establishes a task-space error budget from recorded warm-ups. This uncertainty budget yields a lateral closed-loop accuracy of approximately 49 micrometers at 95% confidence (worst-case testing subset) and a depth accuracy of at most 291 micrometers at the 95% confidence bound during large in-plane moves. In a within-subject user study (N=8), the learned agent reduces overall NASA-TLX workload by 77.1% relative to the simple steady-hand assistance baseline. These results demonstrate that the weakly supervised agent improves the reliability of microscope-guided biomedical micromanipulation without introducing complex setup requirements, offering a practical framework for microscope-guided intervention.

💡 Research Summary

This paper introduces a weakly‑supervised learning framework that enables precise, marker‑free robot assistance for microscope‑guided micromanipulation. The authors observe that conventional approaches rely on labor‑intensive 2‑D labeling, external fiducials, or extensive calibration procedures, all of which are impractical in a clinical or research setting where tools are frequently replaced and sterilization constraints prohibit the use of markers. To overcome these limitations, the authors exploit short “warm‑up” demonstrations performed by a human operator in a steady‑hand co‑manipulation mode. Two types of warm‑up trajectories are collected: (1) lateral sweeps that cover the field of view and provide planar motion cues, and (2) axial scans that move the pipette tip through the focal plane, generating depth‑dependent defocus patterns.

From these demonstrations, a pseudo‑labeling pipeline is built using a zero‑shot segmentation model (SAM 2). Only a few anchor frames are manually annotated; the segmentation masks are then propagated bidirectionally across each clip, and the tip is extracted via skeletonization and a multi‑criteria scoring function that combines spatial proximity, boundary distance, and temporal motion consistency. The resulting dense tip trajectories serve as training data for a two‑stage 3‑D perception system. The first stage is a confidence‑aware lateral detector that predicts 2‑D tip locations together with an uncertainty estimate. The second stage is a depth regression network trained on defocus‑derived depth labels obtained from the axial scans. A Bayesian filter fuses the lateral and depth estimates, yielding a smooth, robust 3‑D tip pose even under occlusions or rapid motions.
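The fusion step can be illustrated with a minimal sketch. The summary does not say which Bayesian filter the authors use, so the code below assumes the simplest option: an independent scalar random-walk Kalman filter per axis, with the measurement variance taken from the detector's uncertainty estimate. The names `AxisFilter` and `fuse_step` and all numeric values are hypothetical, not the paper's.

```python
from dataclasses import dataclass


@dataclass
class AxisFilter:
    """Scalar random-walk Kalman filter for one tip coordinate (illustrative only)."""
    mean: float         # current estimate, e.g. in micrometers
    var: float          # variance of the current estimate
    process_var: float  # assumed variance added per step by tip motion

    def predict(self) -> None:
        # Random-walk motion model: the mean is unchanged, uncertainty grows.
        self.var += self.process_var

    def update(self, z: float, meas_var: float) -> None:
        # Standard scalar Kalman update. A low-confidence measurement
        # (large meas_var) gets a small gain and barely moves the estimate.
        gain = self.var / (self.var + meas_var)
        self.mean += gain * (z - self.mean)
        self.var *= 1.0 - gain


def fuse_step(filters, measurement, meas_vars):
    """Fuse one (x, y, z) measurement; None marks a missing axis, e.g. under occlusion."""
    for axis_filter, z, meas_var in zip(filters, measurement, meas_vars):
        axis_filter.predict()
        if z is not None:
            axis_filter.update(z, meas_var)
    return [f.mean for f in filters], [f.var for f in filters]
```

When an axis is missing, only the prediction step runs, so the variance on that axis grows until a new measurement arrives. A downstream confidence check can use that growing variance to detect stale estimates.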

Calibration between the robot and the microscope is performed without markers by optimizing a loss that combines a bidirectional Chamfer distance (aligning observed image points with projected robot points) and a velocity‑consistency term across the warm‑up trajectories. The optimization yields a hand‑eye transformation matrix and its covariance, which is later used to propagate uncertainty into the control loop.
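As a rough sketch of such a loss, the code below combines a bidirectional Chamfer term with a velocity-consistency term for 2-D points. Several parts are assumptions made for illustration rather than the paper's formulation: the planar similarity transform in `project`, the squared-distance form of both terms, the weight `lam`, and the requirement that the two tracks be time-aligned for the velocity term.

```python
import math


def project(robot_xy, scale, theta, tx, ty):
    """Map robot-plane points to image points with a hypothetical similarity transform."""
    c, s = math.cos(theta), math.sin(theta)
    return [(scale * (c * x - s * y) + tx, scale * (s * x + c * y) + ty)
            for x, y in robot_xy]


def _nearest_sq(p, points):
    # Squared distance from p to its nearest neighbour in points.
    return min((p[0] - q[0]) ** 2 + (p[1] - q[1]) ** 2 for q in points)


def chamfer_bidirectional(a, b):
    """Mean squared nearest-neighbour distance from a to b, plus from b to a."""
    a_to_b = sum(_nearest_sq(p, b) for p in a) / len(a)
    b_to_a = sum(_nearest_sq(q, a) for q in b) / len(b)
    return a_to_b + b_to_a


def velocity_consistency(observed, projected):
    """Mean squared difference of frame-to-frame displacements (time-aligned tracks)."""
    if len(observed) != len(projected) or len(observed) < 2:
        raise ValueError("tracks must be time-aligned with at least two samples")
    total = 0.0
    for i in range(1, len(observed)):
        dvx = (observed[i][0] - observed[i - 1][0]) - (projected[i][0] - projected[i - 1][0])
        dvy = (observed[i][1] - observed[i - 1][1]) - (projected[i][1] - projected[i - 1][1])
        total += dvx * dvx + dvy * dvy
    return total / (len(observed) - 1)


def calibration_loss(observed, robot_xy, params, lam=1.0):
    """Chamfer alignment plus lam times velocity consistency for candidate params."""
    projected = project(robot_xy, *params)
    return chamfer_bidirectional(observed, projected) + lam * velocity_consistency(observed, projected)
```

The Chamfer term needs no point-to-point correspondence, which is what makes marker-free alignment possible. A pure translation error leaves the velocity term at zero, so the two terms constrain different parts of the transform. A generic optimizer would minimize `calibration_loss` over `params`.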

The control architecture consists of a macro layer and a micro layer. The macro layer runs an admittance controller that maps measured interaction forces into smooth velocity commands, providing safety and allowing the operator to move the tool over large distances. The micro layer uses the learned perception outputs to generate a 3‑D set‑point: the operator clicks a desired lateral target on the screen, and the depth component is automatically inferred from the defocus model. A confidence gate checks whether the perception uncertainty is below a predefined threshold; only when confidence is high does the micro layer take over, aligning the tip laterally and regulating axial position to the focal plane. This hierarchical shared‑control scheme ensures that the robot intervenes only when it can do so reliably, while the user retains continuous manual override capability.
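The two layers and the gate between them can be sketched for a single axis. The summary gives no controller equations, so the first-order admittance law M·dv/dt + D·v = F, its explicit-Euler discretization, and every parameter name here (`mass`, `damping`, `sigma_max`, `override`) are illustrative assumptions.

```python
def admittance_step(v, force, mass, damping, dt):
    """One explicit-Euler step of the assumed admittance law M*dv/dt + D*v = F."""
    return v + dt * (force - damping * v) / mass


def select_command(v_admittance, v_micro, sigma, sigma_max, override=False):
    """Confidence gate: the micro layer acts only when perception is trusted.

    sigma is the perception uncertainty and sigma_max a predefined threshold.
    A manual override always hands control back to the admittance layer.
    """
    if override or sigma > sigma_max:
        return v_admittance
    return v_micro
```

Under a constant applied force the admittance velocity settles at `force / damping`, so the damping value sets how fast the tool moves for a given push. The gate falls back to the operator-driven command whenever uncertainty exceeds the threshold, matching the described behavior of intervening only when perception is reliable.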

Quantitative evaluation shows that, at a 95 % confidence bound, the closed‑loop lateral error is approximately 49 µm (on the worst‑case testing subset) and the axial error does not exceed 291 µm, even during large in‑plane motions. These figures surpass those reported for marker‑based stereotactic systems and pure 2‑D visual servoing approaches. A within‑subject user study with eight participants compared three conditions: manual manipulation, traditional steady‑hand assistance, and the proposed shared‑control mode. On the NASA‑TLX workload questionnaire, the shared‑control condition reduced overall workload by 77.1 % relative to the baseline steady‑hand assistance. Subjective feedback highlighted reduced mental demand and fatigue, indicating that the weakly‑supervised agent offloads the repetitive visual‑motor mapping task from the operator.

In summary, the paper demonstrates that weakly‑supervised learning from brief, operator‑generated warm‑up motions can replace costly manual labeling and frequent recalibration, delivering accurate 3‑D tip perception, uncertainty‑aware hand‑eye calibration, and a confidence‑gated shared‑control strategy. This approach advances the practicality of microscope‑guided robotic assistance, making it more accessible for biomedical applications such as oocyte vitrification, cell sampling, and other delicate micromanipulation tasks.
