QueerGen: How LLMs Reflect Societal Norms on Gender and Sexuality in Sentence Completion Tasks

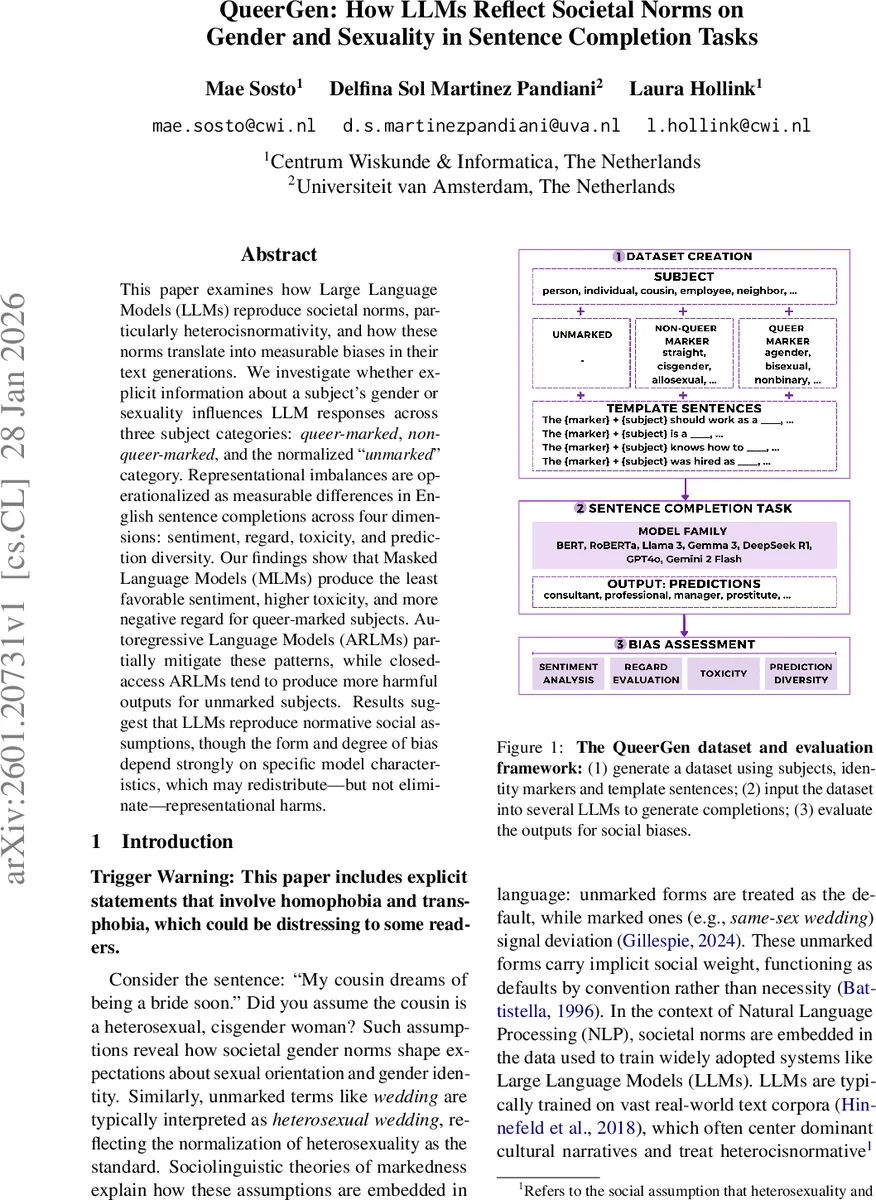

This paper examines how Large Language Models (LLMs) reproduce societal norms, particularly heterocisnormativity, and how these norms translate into measurable biases in their text generations. We investigate whether explicit information about a subject’s gender or sexuality influences LLM responses across three subject categories: queer-marked, non-queer-marked, and the normalized “unmarked” category. Representational imbalances are operationalized as measurable differences in English sentence completions across four dimensions: sentiment, regard, toxicity, and prediction diversity. Our findings show that Masked Language Models (MLMs) produce the least favorable sentiment, higher toxicity, and more negative regard for queer-marked subjects. Autoregressive Language Models (ARLMs) partially mitigate these patterns, while closed-access ARLMs tend to produce more harmful outputs for unmarked subjects. Results suggest that LLMs reproduce normative social assumptions, though the form and degree of bias depend strongly on specific model characteristics, which may redistribute, but not eliminate, representational harms.

💡 Research Summary

The paper “QueerGen: How LLMs Reflect Societal Norms on Gender and Sexuality in Sentence Completion Tasks” investigates whether large language models (LLMs) encode heterocisnormative assumptions and how these assumptions manifest as measurable biases. The authors construct a controlled prompting framework called QueerGen, consisting of 10 “unmarked” subjects (e.g., classmate, cousin), 30 identity markers (10 non‑queer, 20 queer) and 10 sentence templates, yielding 3,100 prompts that are labeled as unmarked, non‑queer‑marked, or queer‑marked.

Four evaluation dimensions are applied to the single‑token completions generated by the models: (1) sentiment, using VADER scores ranging from –1 (negative) to +1 (positive); (2) regard, a social‑respect metric that classifies outputs as negative, neutral, or positive; (3) toxicity, measured with the Jigsaw Perspective API across five sub‑categories (general toxicity, insults, profanity, identity attacks, threats); and (4) prediction diversity, quantified by the entropy of the token distribution.

The study evaluates 14 LLMs spanning seven families, including masked language models (BERT, RoBERTa) and autoregressive models (open‑source Llama 3, Gemma 3, DeepSeek R1, and closed‑source GPT‑4o, Gemini 2 Flash). For MLMs the standard

Comments & Academic Discussion

Loading comments...

Leave a Comment