Decoupling Perception and Calibration: Label-Efficient Image Quality Assessment Framework

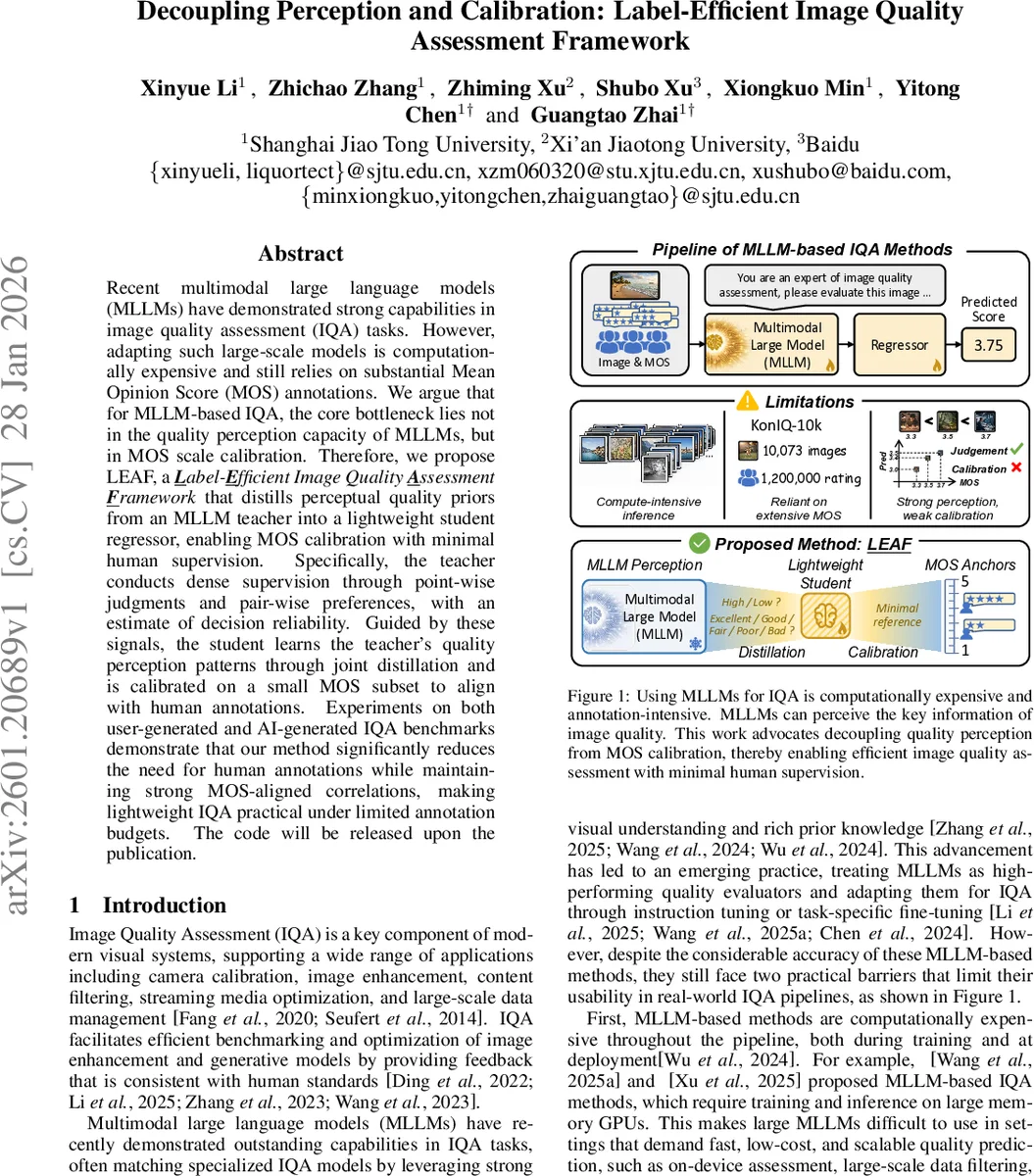

Recent multimodal large language models (MLLMs) have demonstrated strong capabilities in image quality assessment (IQA) tasks. However, adapting such large-scale models is computationally expensive and still relies on substantial Mean Opinion Score (MOS) annotations. We argue that for MLLM-based IQA, the core bottleneck lies not in the quality perception capacity of MLLMs, but in MOS scale calibration. Therefore, we propose LEAF, a Label-Efficient Image Quality Assessment Framework that distills perceptual quality priors from an MLLM teacher into a lightweight student regressor, enabling MOS calibration with minimal human supervision. Specifically, the teacher conducts dense supervision through point-wise judgments and pair-wise preferences, with an estimate of decision reliability. Guided by these signals, the student learns the teacher’s quality perception patterns through joint distillation and is calibrated on a small MOS subset to align with human annotations. Experiments on both user-generated and AI-generated IQA benchmarks demonstrate that our method significantly reduces the need for human annotations while maintaining strong MOS-aligned correlations, making lightweight IQA practical under limited annotation budgets.

💡 Research Summary

The paper addresses a practical bottleneck in modern image quality assessment (IQA) that arises when leveraging multimodal large language models (MLLMs). While recent works have shown that MLLMs can perceive image quality remarkably well—often matching or surpassing specialized IQA networks—their deployment is hampered by two intertwined issues: (1) the computational cost of running a massive model at inference time, and (2) the heavy reliance on large collections of Mean Opinion Score (MOS) annotations for calibrating the model’s output to the absolute rating scale used in benchmark datasets. The authors argue that the core limitation is not the perceptual capability of the MLLM but its inability to map its internal quality judgments onto the MOS scale required for real‑world applications.

To solve this, they propose LEAF (Label‑Efficient Image Quality Assessment Framework), a two‑stage teacher‑student system that decouples perception from calibration. In the first stage, a pretrained MLLM acts as a teacher and provides dense supervision on a large, unlabeled image pool. Two complementary signals are extracted: (i) point‑wise quality judgments obtained by prompting the model with a fixed set of five quality tokens (Excellent, Good, Fair, Poor, Bad). The model’s token‑level log‑likelihoods are converted into a probability distribution, which is then mapped to a continuous score via expectation over ordinal values (5–1). (ii) Pair‑wise preferences are generated by randomly sampling image pairs and asking the teacher which image is of higher quality. The softmax of the two token logits yields a binary preference and an associated confidence measure derived from the normalized entropy of the distribution. Low‑confidence pairs are filtered out.

A lightweight student regressor (s_{\theta}) is then trained jointly on both signals. A smooth L1 loss aligns the student’s scalar output with the teacher’s soft point‑wise scores, while a confidence‑weighted binary cross‑entropy loss enforces the teacher’s ranking on the sampled pairs. The total loss is a weighted sum of these two components, allowing the student to absorb both absolute and relative quality knowledge without any MOS labels.

In the second stage, a very small subset of images (typically less than 10 % of the full training set) that have human‑rated MOS values is used to fine‑tune the student. The fine‑tuning objective combines a standard mean‑squared error regression loss with a correlation‑based loss that directly maximizes Pearson Linear Correlation Coefficient (PLCC) between the student’s predictions and the human MOS. This calibration step aligns the student’s output distribution to the target MOS scale while preserving the perceptual knowledge distilled from the teacher.

Experiments are conducted on a mix of user‑generated content (UGC) benchmarks such as KonIQ‑10k and LIVE‑FB, as well as AI‑generated image quality benchmarks (AGIQA‑3K, IQA‑3K). The results show that LEAF, using only a fraction of MOS annotations, achieves SRCC and PLCC scores comparable to or better than fully fine‑tuned MLLM baselines and state‑of‑the‑art no‑reference IQA models. For example, on AGIQA‑3K, direct MLLM scoring yields SRCC ≈ 0.816 but PLCC ≈ 0.824 with a noticeable bias; after calibrating a lightweight head with just 10 % of MOS data, PLCC rises to 0.907 and the mean residual drops from 0.263 to 0.006. Moreover, the student model requires 5–10× fewer FLOPs and far less GPU memory than the full MLLM, making it suitable for real‑time or on‑device deployment.

The paper’s contributions are threefold: (1) it formalizes the perception‑calibration decoupling principle for label‑efficient IQA, (2) it introduces a joint point‑wise and pair‑wise distillation loss that leverages teacher confidence, and (3) it demonstrates that a small MOS‑labeled subset suffices to align a lightweight student with human judgments, dramatically reducing annotation cost. The authors also discuss limitations, such as the reliance on handcrafted prompts and a simple entropy‑based confidence metric, and suggest future directions including automated prompt optimization, Bayesian uncertainty estimation for teacher decisions, and hybrid self‑supervised pretraining to further diminish the need for any human labels.

In summary, LEAF provides a practical pathway to harness the strong perceptual abilities of large multimodal models while overcoming their computational and annotation burdens, delivering a compact, high‑performing IQA solution that is both cost‑effective and scalable to emerging image generation domains.

Comments & Academic Discussion

Loading comments...

Leave a Comment