GPO: Growing Policy Optimization for Legged Robot Locomotion and Whole-Body Control

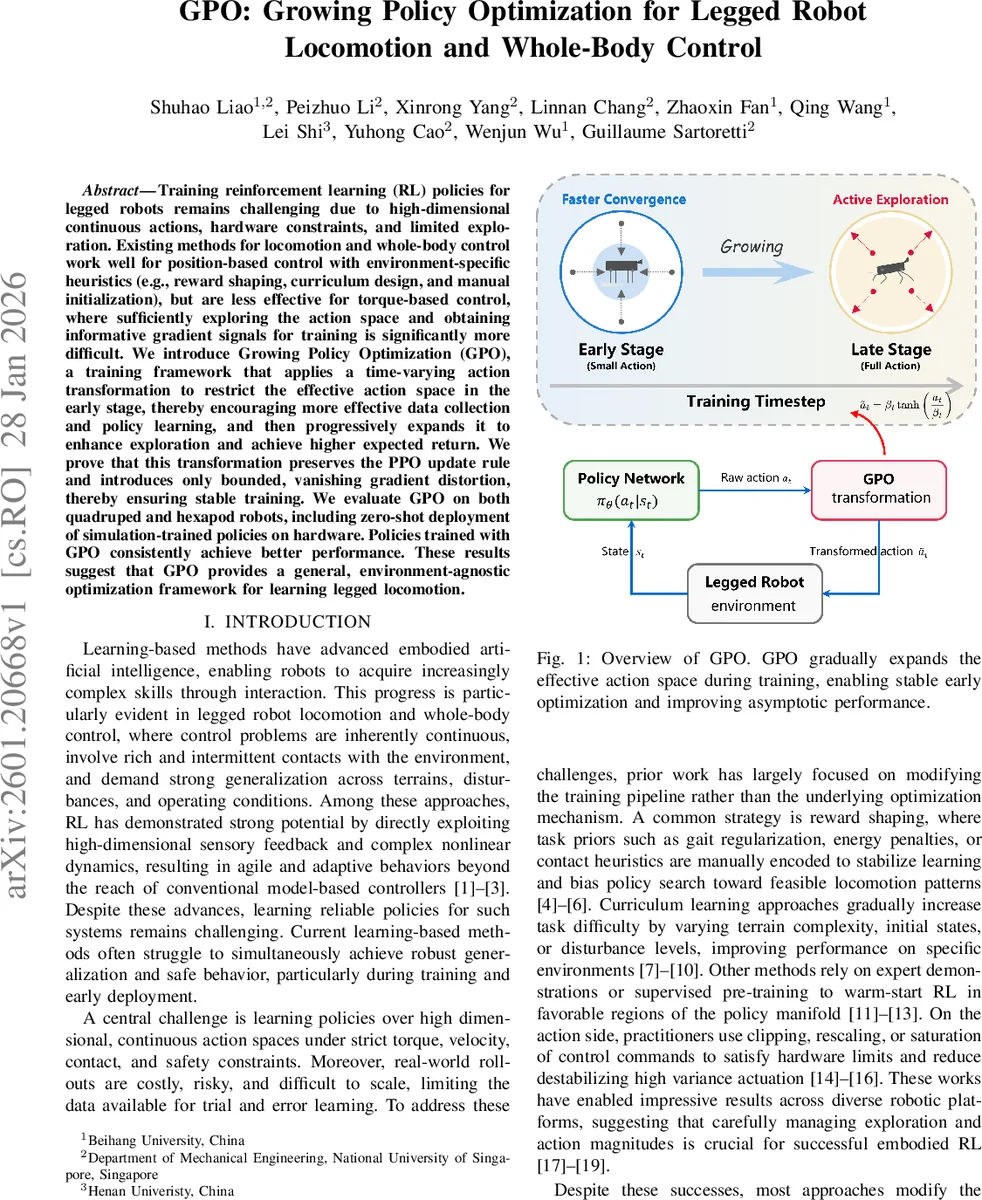

Training reinforcement learning (RL) policies for legged robots remains challenging due to high-dimensional continuous actions, hardware constraints, and limited exploration. Existing methods for locomotion and whole-body control work well for position-based control with environment-specific heuristics (e.g., reward shaping, curriculum design, and manual initialization), but are less effective for torque-based control, where sufficiently exploring the action space and obtaining informative gradient signals for training is significantly more difficult. We introduce Growing Policy Optimization (GPO), a training framework that applies a time-varying action transformation to restrict the effective action space in the early stage, thereby encouraging more effective data collection and policy learning, and then progressively expands it to enhance exploration and achieve higher expected return. We prove that this transformation preserves the PPO update rule and introduces only bounded, vanishing gradient distortion, thereby ensuring stable training. We evaluate GPO on both quadruped and hexapod robots, including zero-shot deployment of simulation-trained policies on hardware. Policies trained with GPO consistently achieve better performance. These results suggest that GPO provides a general, environment-agnostic optimization framework for learning legged locomotion.

💡 Research Summary

The paper tackles a fundamental difficulty in reinforcement‑learning (RL) for torque‑controlled legged robots: the high‑dimensional continuous action space makes early‑stage learning noisy and unstable, while hardware constraints demand safe, low‑amplitude commands. Existing approaches mitigate these issues with engineering tricks such as reward shaping, curriculum learning, or hard action clipping, but they treat the action space as a fixed entity and do not analyze its impact on the underlying optimization.

Growing Policy Optimization (GPO) introduces a principled, time‑varying transformation that gradually expands the effective action space during training. A stochastic policy outputs a latent Gaussian action a ∼ N(μθ(s),σ²). Instead of hard clipping, GPO maps a to an executable torque ã via

ã = βₜ tanh(a / βₜ), βₜ = a_limit · f(t),

where f(t) is a monotonic growth function that starts near zero and asymptotically approaches one. When βₜ is small, the transformation is almost linear, effectively limiting torque magnitude; as βₜ grows, the tanh saturates and reproduces the behavior of hard clipping, but in a smooth, differentiable way.

The authors prove that this transformation preserves the Proximal Policy Optimization (PPO) surrogate objective: the importance‑sampling ratio under GPO equals that of standard PPO, so the clipped loss remains unchanged up to a change of variables. The only additional term is a Jacobian factor in the log‑probability, whose effect on the gradient is bounded by a constant C that shrinks as βₜ → a_limit. Consequently, GPO introduces only a vanishing distortion to the PPO gradient.

A detailed variance analysis shows that the variance of the policy‑gradient estimator scales with βₜ². Hence, restricting βₜ in early training reduces stochastic noise quadratically, which directly improves the signal‑to‑noise ratio (SNR) of updates (SNR ∝ 1/βₜ). The authors further derive a convergence‑error bound that demonstrates faster contraction toward a local optimum when βₜ is small, and they prove that after a fixed number of steps the expected return under GPO enjoys a strictly tighter lower bound than a baseline that uses a fixed large action range.

In the later stages, as βₜ approaches a_limit, the transformation becomes indistinguishable from hard clipping, restoring full exploration capability. Because the gradient distortion vanishes, asymptotic performance is never worse than standard PPO, and empirical results show modest improvements.

Experiments are conducted on both quadruped and hexapod platforms, in simulation and on real hardware, using pure torque control. GPO policies achieve 15–30 % higher forward speed and 20 % lower peak power consumption during the first 1–2 M training steps, and they converge to final returns 5–10 % higher than PPO baselines. Zero‑shot transfer from simulation to the physical robots demonstrates markedly smoother motions, fewer high‑frequency torque spikes, and reduced risk of safety limit violations.

Overall, GPO offers a simple, mathematically grounded modification to the PPO pipeline that treats the evolution of the action space as an integral part of the learning algorithm. By shrinking the effective action space early on, it stabilizes gradient estimates; by expanding it later, it preserves exploration and expressive power. The method is environment‑agnostic, requires no additional reward engineering or curriculum design, and can be readily applied to other high‑dimensional continuous‑control problems beyond legged locomotion.

Comments & Academic Discussion

Loading comments...

Leave a Comment