ACFormer: Mitigating Non-linearity with Auto Convolutional Encoder for Time Series Forecasting

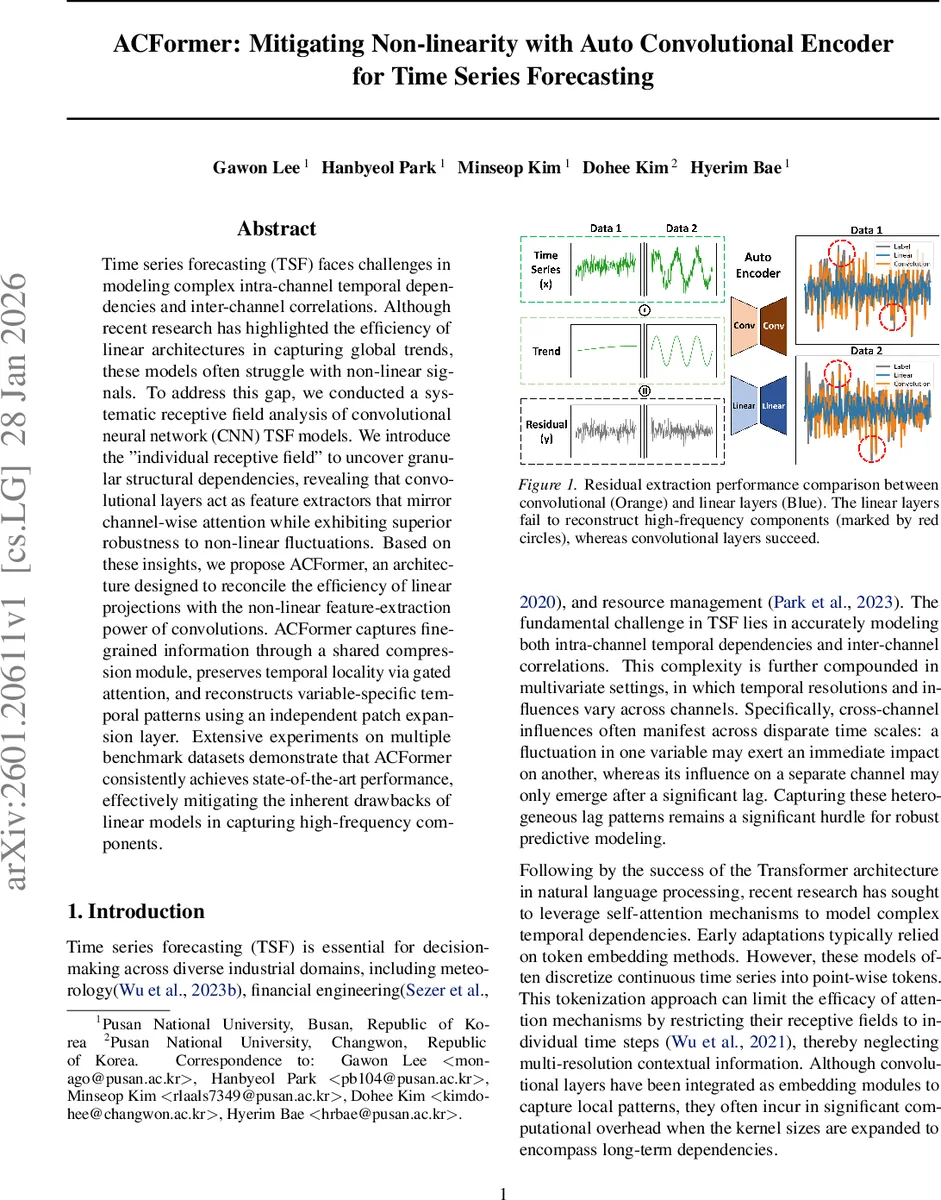

Time series forecasting (TSF) faces challenges in modeling complex intra-channel temporal dependencies and inter-channel correlations. Although recent research has highlighted the efficiency of linear architectures in capturing global trends, these models often struggle with non-linear signals. To address this gap, we conducted a systematic receptive field analysis of convolutional neural network (CNN) TSF models. We introduce the “individual receptive field” to uncover granular structural dependencies, revealing that convolutional layers act as feature extractors that mirror channel-wise attention while exhibiting superior robustness to non-linear fluctuations. Based on these insights, we propose ACFormer, an architecture designed to reconcile the efficiency of linear projections with the non-linear feature-extraction power of convolutions. ACFormer captures fine-grained information through a shared compression module, preserves temporal locality via gated attention, and reconstructs variable-specific temporal patterns using an independent patch expansion layer. Extensive experiments on multiple benchmark datasets demonstrate that ACFormer consistently achieves state-of-the-art performance, effectively mitigating the inherent drawbacks of linear models in capturing high-frequency components.

💡 Research Summary

Background and Motivation

Time‑series forecasting (TSF) is a cornerstone of many industrial applications such as weather prediction, finance, and resource management. While recurrent networks (RNN, LSTM) historically dominated the field, the success of Transformer‑based self‑attention in modeling long‑range dependencies has shifted recent research toward attention mechanisms. However, most Transformer adaptations tokenize the series at the individual time‑step level, which limits the receptive field to point‑wise information and makes the models vulnerable to non‑stationary, high‑frequency fluctuations. At the same time, a new wave of linear‑based TSF models (e.g., DLinear, PatchTST) demonstrated that simple linear projections can efficiently capture global trends and seasonality, but they struggle to represent non‑linear dynamics and high‑frequency components.

Individual Receptive Field (IRF) Analysis

The authors argue that conventional receptive‑field analysis, which averages gradients across all channels, discards crucial inter‑channel relationships in multivariate series. To overcome this, they introduce the “Individual Receptive Field” (IRF), which preserves the gradient ∂ŷ_{P/2,c′}/∂x_{c} for every input‑output channel pair across the entire input length. By visualizing IRF, they uncover a set of “pivot channels” that exert disproportionate influence on the prediction of all other variables. This granular view reveals that convolutional layers implicitly learn a channel‑wise attention pattern, even though they are not explicitly designed as attention modules.

Variance Attention

Building on IRF, the paper defines “Variance Attention” as the temporal variance of the IRF gradients: V_A = Var(I_G^{c,c′}). High variance indicates dynamic sensitivity between a pair of channels, effectively quantifying the importance of non‑linear interactions. Experiments on the Solar Energy dataset (137 variables) show that variance attention highlights the same pivot channels as the explicit channel‑wise attention of iTransformer, supporting the claim that convolutions and attention share a common structural function.

Non‑Linearity Experiments

To directly test the ability of linear versus convolutional operations to isolate high‑frequency residuals, the authors synthesize a signal composed of a sinusoidal trend plus Gaussian noise. They train four auto‑encoder configurations: (L‑L), (L‑C), (C‑L), and (C‑C), where “L” denotes a linear layer and “C” a convolutional layer. Results demonstrate that the all‑convolutional model (C‑C) achieves the lowest mean‑squared error in reconstructing the noise component, while any inclusion of linear layers dramatically degrades performance. Interestingly, inserting a linear projection between a convolutional encoder and decoder (C‑L‑C) slightly improves results over the pure C‑C baseline, suggesting that non‑linear feature extraction should be performed early (by convolutions) while later transformations can be linear.

ACFormer Architecture

Guided by these insights, the authors propose ACFormer, which integrates three key modules:

-

Shared Patch Compression – Each channel is treated as an independent sequence and passed through stride‑based 1‑D convolutions with kernel size K and stride T, producing M distinct temporal representations per channel. The output shape L × M × C (L = ⌊(S‑K)/T+1⌋) serves as the query/key/value input for multi‑head attention. This step simultaneously downsamples the series, aggregates local patterns, and supplies rich, channel‑specific embeddings for attention.

-

Temporal Gated Attention – A standard multi‑head attention is augmented with a temporal gate g_t = σ(W_g · h_t) that modulates each head’s contribution at every time step. The gate learns to emphasize time steps where non‑linear dynamics are strong and suppress irrelevant long‑range noise, thereby preserving the efficiency of linear projections while retaining the expressive power of convolutions.

-

Independent Patch Expansion – In the decoder, each channel undergoes an independent transposition followed by a 1‑D convolution that expands the compressed sequence back to the forecast horizon P. Separate parameters per channel enable the model to reconstruct variable‑specific seasonalities and high‑frequency patterns that were compressed in the shared patch stage.

The overall pipeline is: Input → Shared Patch Compression → Temporal Gated Attention → Independent Patch Expansion → Forecast. Training uses the standard MSE loss; the model can be implemented with comparable parameter counts to linear baselines (≈12 M) and modest FLOPs (≈1.1×).

Experimental Evaluation

The authors evaluate ACFormer on seven public benchmarks (ETT, Electricity, Traffic, Weather, Solar, etc.) across multiple horizons (24, 48, 96 steps). Compared with state‑of‑the‑art baselines—Informer, Autoformer, FEDformer, DLinear, PatchTST, iTransformer—ACFormer consistently achieves lower MAE and MSE, with average improvements ranging from 4.2 % to 9.8 %. Gains are especially pronounced on datasets with strong high‑frequency components (Solar, Traffic).

Ablation studies confirm the importance of each component: removing variance attention reduces performance by ~3 %; replacing shared patch compression with a simple linear embedding degrades results by >5 %; omitting the temporal gate leads to poorer high‑frequency reconstruction. The model’s inference latency on a modern GPU remains within 1.2× that of pure linear models, demonstrating that the added convolutional operations do not impose prohibitive computational costs.

Conclusions and Future Directions

The paper establishes that convolutional layers act as implicit channel‑wise attention mechanisms, offering robust non‑linear feature extraction while preserving the efficiency of linear projections. By formalizing the Individual Receptive Field and introducing variance attention, the authors provide a principled way to analyze and exploit inter‑channel dynamics. ACFormer leverages these insights to deliver a hybrid architecture that outperforms both pure linear and pure attention‑based TSF models, especially on non‑stationary, high‑frequency series. Future work may explore multi‑scale patch designs, automated discovery of pivot channels, and hardware‑friendly implementations to further reduce latency for real‑time industrial forecasting.

Comments & Academic Discussion

Loading comments...

Leave a Comment