ConStruM: A Structure-Guided LLM Framework for Context-Aware Schema Matching

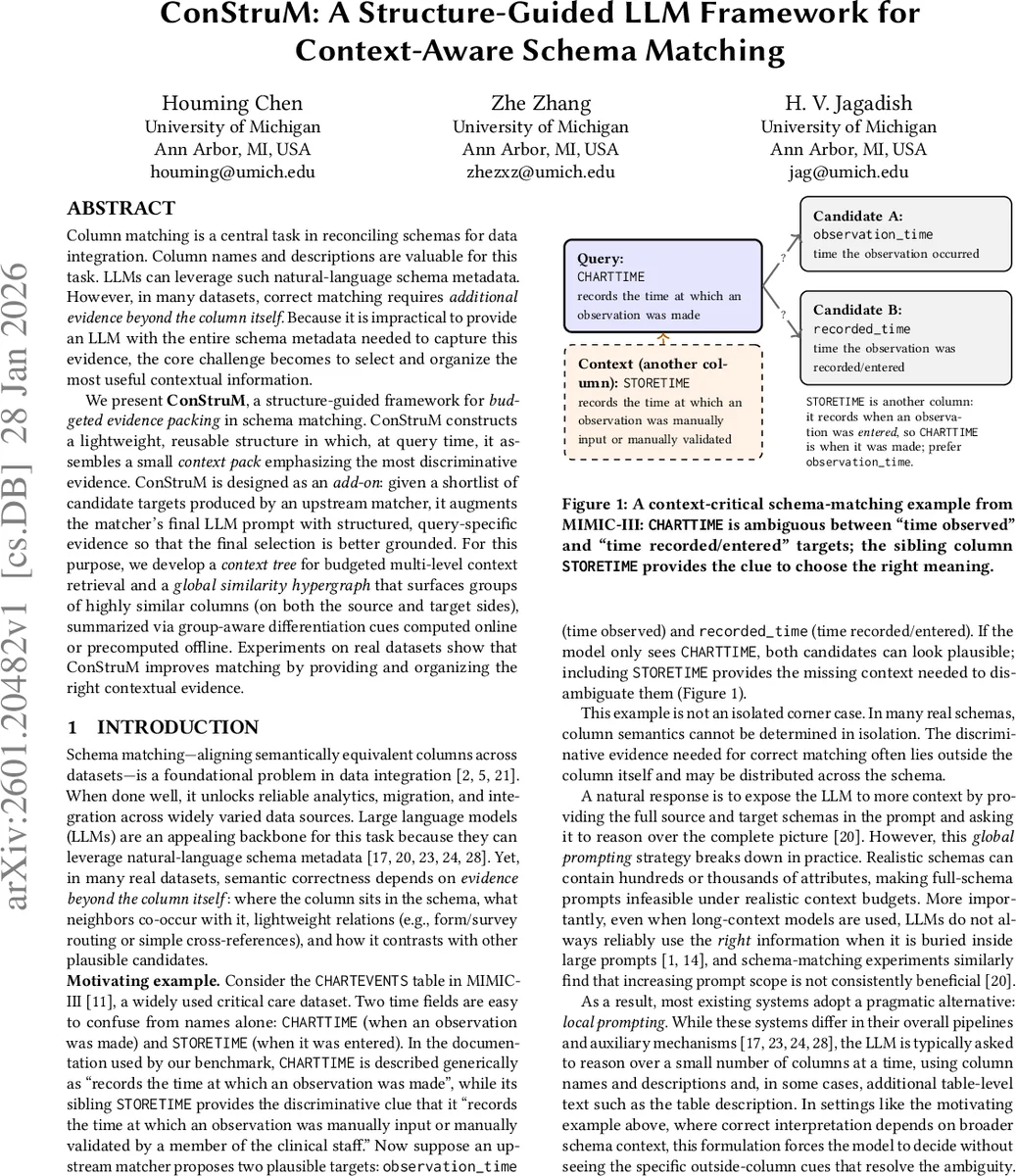

Column matching is a central task in reconciling schemas for data integration. Column names and descriptions are valuable for this task, and LLMs can leverage such natural-language schema metadata. However, in many datasets, correct matching requires additional evidence beyond the column itself. Because it is impractical to provide an LLM with the entire schema metadata needed to capture this evidence, the core challenge is selecting and organizing the most useful contextual information. We present ConStruM, a structure-guided framework for budgeted evidence packing in schema matching. ConStruM constructs a lightweight, reusable structure from which, at query time, it assembles a small context pack emphasizing the most discriminative evidence. ConStruM is designed as an add-on: given a shortlist of candidate targets produced by an upstream matcher, it augments the matcher’s final LLM prompt with structured, query-specific evidence so that the final selection is better grounded. For this purpose, we develop a context tree for budgeted multi-level context retrieval and a global similarity hypergraph that surfaces groups of highly similar columns (on both the source and target sides), summarized via group-aware differentiation cues computed online or precomputed offline. Experiments on real datasets show that ConStruM improves matching by providing and organizing the right contextual evidence.

💡 Research Summary

The paper tackles the long‑standing problem of column‑level schema matching in data integration, focusing on how large language models (LLMs) can use natural‑language metadata such as column names and descriptions. While LLMs excel at interpreting textual cues, many real‑world schemas contain ambiguous columns whose correct target can only be resolved by looking at surrounding columns, table‑level context, or lightweight relationships. Providing the entire source and target schemas to an LLM (the “global prompting” approach) is impractical: schemas often contain hundreds or thousands of attributes, which exceeds token budgets and overwhelms the model, so it frequently fails to attend to the most relevant evidence. Conversely, the “local prompting” strategy, which feeds the model only the source‑target pair, misses the contextual clues needed for disambiguation.

To bridge this gap, the authors introduce ConStruM, a structure‑guided, budget‑aware framework that treats context‑critical schema matching as an evidence‑packing problem. ConStruM is designed as an add‑on to any existing LLM‑based matcher that already produces a shortlist of candidate target columns (denoted C₀). Its core contribution is the construction of two lightweight intermediate data structures during an offline preprocessing phase:

- Context Tree – A hierarchical index that captures multi‑level schema context. Each table is first turned into a small tree whose leaves are columns and whose internal nodes store natural‑language summaries of increasingly larger column groups. Tables themselves are then clustered based on similarity and merged into a single database‑wide tree (a “tree of trees”). At query time, for any source column s, ConStruM retrieves the lineage from the leaf up to the root, providing a coarse‑to‑fine set of summaries that fit within a fixed token budget.

- Global Similarity Hypergraph – A graph‑like structure where nodes are all columns (both source and target) and hyper‑edges connect groups of highly similar columns. For each hyper‑edge, a concise “differentiation cue” is either pre‑computed or generated on‑the‑fly, summarizing the subtle distinctions among the grouped columns.
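The leaf-to-root lineage retrieval described for the context tree can be illustrated with a minimal Python sketch. The `Node` class, the `pack_lineage` helper, the example column names, and the use of whitespace word counts as a stand-in for token counts are all assumptions made for illustration; the paper's actual tree construction, summarization, and budgeting are not reproduced here.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass(eq=False)
class Node:
    """One context-tree node: a column (leaf) or a summarized group."""
    name: str
    summary: str
    parent: Optional["Node"] = None
    children: List["Node"] = field(default_factory=list)

    def add(self, child: "Node") -> "Node":
        """Attach a child and return it so trees can be built top-down."""
        child.parent = self
        self.children.append(child)
        return child


def lineage(leaf: Node) -> List[Node]:
    """Return the path from a leaf up to the root (fine to coarse)."""
    path = []
    node: Optional[Node] = leaf
    while node is not None:
        path.append(node)
        node = node.parent
    return path


def pack_lineage(leaf: Node, budget: int) -> List[str]:
    """Keep summaries from leaf toward root while they fit the budget.

    Cost is approximated by word count (an assumption, not a tokenizer).
    """
    packed, used = [], 0
    for node in lineage(leaf):
        cost = len(node.summary.split())
        if used + cost > budget:
            break
        packed.append(f"{node.name}: {node.summary}")
        used += cost
    return packed


# Hypothetical three-level tree: database -> table -> column.
root = Node("db", "employment survey database")
table = root.add(Node("jobs", "current job details per respondent"))
col = table.add(Node("jobs.start_year", "year the respondent started the job"))

print(pack_lineage(col, budget=11))
```

With a budget of 11 words, the sketch keeps the column and table summaries and drops the database-level one. A real system would likely weigh levels by how discriminative they are rather than stopping at the first summary that does not fit.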

During the online matching phase, ConStruM receives a source column s and its candidate set C₀ from the upstream matcher. It then:

- Queries the hypergraph to identify confusable groups that contain members of C₀ (and possibly s itself).

- Retrieves a multi‑level context pack from the context tree, allocating more tokens to the most discriminative levels while respecting the overall prompt budget.

- Constructs a final prompt that includes the source column description, each candidate description, the group‑wise differentiation cues, and the hierarchical context summaries.

This prompt explicitly encourages the LLM to perform comparative reasoning rather than evaluating each candidate in isolation, thereby improving disambiguation.
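The three online steps above can be sketched roughly as follows. This is an illustrative Python outline, not the authors' implementation: the function names, the dictionary representation of hyper-edges, the word-count budget, and the prompt wording are all hypothetical.

```python
from typing import Dict, List, Set


def confusable_groups(hyperedges: Dict[str, Set[str]],
                      source: str,
                      candidates: List[str]) -> Dict[str, Set[str]]:
    """Return hyper-edges that contain the source or any candidate."""
    focus = set(candidates) | {source}
    return {gid: members for gid, members in hyperedges.items()
            if members & focus}


def build_prompt(source: str,
                 candidates: List[str],
                 descriptions: Dict[str, str],
                 hyperedges: Dict[str, Set[str]],
                 cues: Dict[str, str],
                 context: List[str],
                 budget: int) -> str:
    """Assemble a comparative prompt, adding evidence only while it fits.

    Source and candidate descriptions are always included; cues and
    context summaries are optional and counted in words (an assumption).
    """
    lines = [f"Source column: {source} - {descriptions[source]}", "Candidates:"]
    lines += [f"  {c} - {descriptions[c]}" for c in candidates]

    optional = [f"Differentiation cue ({gid}): {cues[gid]}"
                for gid in sorted(confusable_groups(hyperedges, source, candidates))]
    optional += [f"Context: {summary}" for summary in context]

    used = sum(len(line.split()) for line in lines)
    for line in optional:
        cost = len(line.split())
        if used + cost > budget:
            continue  # skip evidence that does not fit the remaining budget
        lines.append(line)
        used += cost

    lines.append("Compare the candidates against each other and pick the best match.")
    return "\n".join(lines)


# Hypothetical example data.
hyperedges = {"g1": {"t.start_year", "t.end_year"}, "g2": {"t.salary", "t.bonus"}}
cues = {"g1": "start_year is when the job began; end_year is when it ended.",
        "g2": "salary is base pay; bonus is variable pay."}
descriptions = {"s.yr_began": "year began", "t.start_year": "job start year",
                "t.end_year": "job end year"}

prompt = build_prompt("s.yr_began", ["t.start_year", "t.end_year"], descriptions,
                      hyperedges, cues, ["jobs: current job details"], budget=1000)
print(prompt)
```

Only the group that overlaps the candidate set contributes a cue, which keeps the prompt focused on the columns the model is most likely to confuse.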

The authors evaluate ConStruM on two benchmarks:

- MIMIC‑2‑OMOP, a medical dataset previously used by ReMatch. ConStruM achieves 59.69 % Top‑1 accuracy (vs. ReMatch’s lower score) and strong Top‑3/Top‑5 results (76.92 % / 82.05 %).

- HRS‑B, a newly constructed benchmark derived from the HRS Employment survey. In this benchmark, near‑duplicate fields are deliberately separated in column order, making contextual cues essential. ConStruM attains 0.935 accuracy, dramatically outperforming ReMatch’s 0.503.
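For readers unfamiliar with the Top‑k metrics quoted above, the following Python sketch shows one common way to compute them: a source column counts as a hit if its gold target appears among the first k ranked candidates. The function name and the toy data are made up for illustration and are unrelated to the paper's benchmarks or results.

```python
from typing import Dict, List


def top_k_accuracy(ranked: Dict[str, List[str]],
                   gold: Dict[str, str],
                   k: int) -> float:
    """Fraction of source columns whose gold target is in the top-k ranking."""
    hits = sum(1 for src, target in gold.items()
               if target in ranked.get(src, [])[:k])
    return hits / len(gold)


# Toy rankings for four hypothetical source columns.
ranked = {
    "a": ["x", "y", "z"],
    "b": ["y", "x", "z"],
    "c": ["z", "y", "x"],
    "d": ["x", "z", "y"],
}
gold = {"a": "x", "b": "x", "c": "x", "d": "y"}

for k in (1, 2, 3):
    print(k, top_k_accuracy(ranked, gold, k))
```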

Ablation studies reveal that both components are essential: removing multi‑level context (using only a single summary level) degrades performance, and omitting the hypergraph‑based contrast cues leads to confusion among similar candidates. These findings confirm the authors’ hypothesis that “selecting and structuring the right evidence” is more important than simply increasing the amount of text presented to the LLM.

ConStruM’s design as a plug‑in means it can be integrated with any existing LLM‑based matcher without altering the upstream candidate generation pipeline. The offline‑built structures are reusable across many queries, and the online packing step is lightweight, making the approach practical for real‑world integration scenarios.

In summary, the paper contributes:

- A novel perspective on schema matching as evidence packing.

- Two reusable data structures (context tree and similarity hypergraph) that enable budget‑constrained, multi‑level, contrastive context provision.

- A new benchmark (HRS‑B) that highlights the importance of beyond‑column evidence.

- Empirical evidence that the proposed framework substantially improves LLM‑driven schema matching, especially in contexts where column meaning depends on surrounding schema elements.

Future work may explore richer schema relationships (foreign keys, triggers) and more advanced summarization models to further enhance evidence quality.
