RAW-Flow: Advancing RGB-to-RAW Image Reconstruction with Deterministic Latent Flow Matching

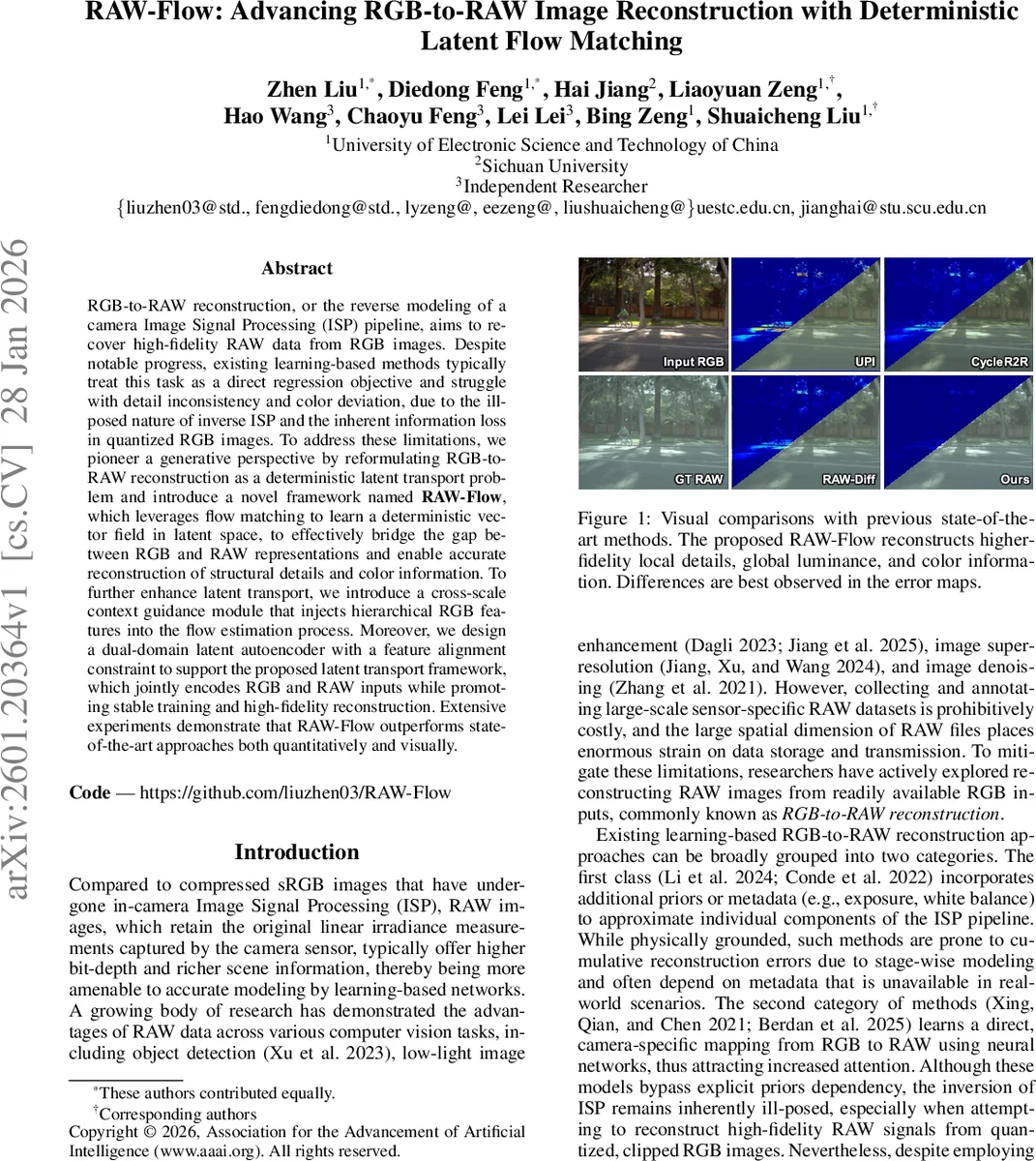

RGB-to-RAW reconstruction, or the reverse modeling of a camera Image Signal Processing (ISP) pipeline, aims to recover high-fidelity RAW data from RGB images. Despite notable progress, existing learning-based methods typically treat this task as a direct regression objective and struggle with detail inconsistency and color deviation, due to the ill-posed nature of inverse ISP and the inherent information loss in quantized RGB images. To address these limitations, we pioneer a generative perspective by reformulating RGB-to-RAW reconstruction as a deterministic latent transport problem. We introduce a novel framework named RAW-Flow, which leverages flow matching to learn a deterministic vector field in latent space, bridging the gap between RGB and RAW representations and enabling accurate reconstruction of structural details and color information. To further enhance latent transport, we introduce a cross-scale context guidance module that injects hierarchical RGB features into the flow estimation process. Moreover, to support the proposed latent transport framework, we design a dual-domain latent autoencoder with a feature alignment constraint, which jointly encodes RGB and RAW inputs while promoting stable training and high-fidelity reconstruction. Extensive experiments demonstrate that RAW-Flow outperforms state-of-the-art approaches both quantitatively and visually.

💡 Research Summary

The paper “RAW-Flow: Advancing RGB-to-RAW Image Reconstruction with Deterministic Latent Flow Matching” addresses the challenging inverse problem of reconstructing a camera’s original RAW sensor data from a processed, compressed RGB image. This task is inherently ill-posed due to the non-invertible and lossy nature of the in-camera Image Signal Processing (ISP) pipeline. Existing learning-based methods, which often treat it as a direct regression task or employ stochastic generative models like diffusion, struggle with issues such as loss of fine detail, color inaccuracy, and high computational cost.

To overcome these limitations, RAW-Flow pioneers a generative perspective by reformulating RGB-to-RAW reconstruction as a deterministic latent transport problem. The core innovation is a novel framework comprising three synergistic components.

First, a Dual-domain Latent Autoencoder (DLAE) is designed to learn compact and high-fidelity latent representations for both RGB and RAW domains. A key challenge is the instability of training an autoencoder solely in the RAW domain. The DLAE mitigates this by introducing a dual-domain feature alignment loss, which encourages the shallow-layer features of the RAW encoder to align with those of the semantically richer and more stable RGB encoder. This stabilizes training and fosters a shared, meaningful latent structure.
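The alignment idea can be illustrated with a small sketch. The summary does not state which distance the alignment loss uses or how it is weighted, so the mean absolute difference, the `align_weight` value, and the function names below are all assumptions chosen for illustration, not the paper's formulation.

```python
def mean_abs_diff(a, b):
    """Mean absolute difference between two equal-length feature vectors."""
    return sum(abs(x - y) for x, y in zip(a, b)) / len(a)

def dlae_raw_loss(raw_input, raw_recon, raw_shallow_feat, rgb_shallow_feat,
                  align_weight=0.1):
    """Hypothetical RAW-branch objective: reconstruction plus feature alignment.

    The distance (L1) and the weight are assumptions; the source only says
    that shallow RAW-encoder features are encouraged to match the
    corresponding RGB-encoder features.
    """
    recon = mean_abs_diff(raw_recon, raw_input)                # autoencoder fidelity
    align = mean_abs_diff(raw_shallow_feat, rgb_shallow_feat)  # cross-domain alignment
    return recon + align_weight * align

# Toy flattened tensors standing in for real images and feature maps.
example_loss = dlae_raw_loss(
    raw_input=[1.0, 2.0],
    raw_recon=[1.0, 2.0],
    raw_shallow_feat=[0.0, 0.0],
    rgb_shallow_feat=[1.0, 1.0],
    align_weight=0.5,
)
```

In this toy case the reconstruction is perfect, so the whole loss comes from the alignment term, which pulls the RAW features toward the RGB features.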

Second, the Deterministic Latent Flow Matching (DLFM) module learns a transport path between these latent spaces. Instead of a stochastic diffusion process from noise to data, DLFM defines a straight-line path in latent space: z_t = t * z_raw + (1-t) * z_rgb, where t in [0, 1] is a continuous time variable, so the path starts at the RGB latent (t = 0) and ends at the RAW latent (t = 1). Differentiating this path with respect to t gives a constant velocity, z_raw - z_rgb, pointing from the RGB latent to the RAW latent. A neural network is trained to predict a time-dependent vector field that matches this ground-truth velocity. This deterministic approach provides a clear, supervised objective, leading to more efficient training and more precise mapping than probabilistic methods.
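A minimal sketch of this training target follows, using plain Python lists in place of latent tensors. The path and the velocity target come from the description above; the mean-squared-error loss, the uniform sampling of t, and the placeholder `zero_model` are assumptions, since the summary does not specify them.

```python
import random

def interpolate(z_rgb, z_raw, t):
    """Point on the straight-line path z_t = t * z_raw + (1 - t) * z_rgb."""
    return [t * r + (1.0 - t) * g for g, r in zip(z_rgb, z_raw)]

def target_velocity(z_rgb, z_raw):
    """Constant ground-truth velocity z_raw - z_rgb along the path."""
    return [r - g for g, r in zip(z_rgb, z_raw)]

def flow_matching_loss(predict_velocity, z_rgb, z_raw, t):
    """Assumed objective: MSE between predicted and target velocity at time t."""
    z_t = interpolate(z_rgb, z_raw, t)
    v_pred = predict_velocity(z_t, t)
    v_true = target_velocity(z_rgb, z_raw)
    return sum((p - q) ** 2 for p, q in zip(v_pred, v_true)) / len(v_true)

# Toy latents standing in for encoder outputs (hypothetical values).
z_rgb = [0.0, 1.0, -2.0]
z_raw = [1.0, 3.0, 0.5]
t = random.random()  # one time sample, drawn uniformly from [0, 1)

# Placeholder "network" that ignores its inputs and predicts zero velocity.
zero_model = lambda z_t, t: [0.0] * len(z_t)
loss = flow_matching_loss(zero_model, z_rgb, z_raw, t)
```

Because the target velocity does not depend on t, the loss of the zero-velocity placeholder is the same for every sampled t; a trained network would drive this value toward zero.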

Third, to further refine the transformation, a Cross-scale Context Guidance module is introduced. Multi-scale feature maps extracted from the input RGB image are injected into the flow prediction network at corresponding layers. This injection provides crucial global and local contextual information (e.g., luminance, color, texture) to guide the latent flow, significantly improving the fidelity of the final reconstructed RAW image’s structural details and color consistency.

During inference, given an RGB image, its latent code is extracted by the RGB encoder. This code is then transported through the learned deterministic flow field via numerical integration (e.g., Euler method) to arrive at the predicted RAW latent code. Finally, the RAW decoder reconstructs the high-fidelity RAW image from this latent code.
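The transport step can be sketched as a fixed-step Euler integration of dz/dt = v(z, t) from t = 0 to t = 1. The function name, the step count, and the `oracle` field below are hypothetical stand-ins for the trained flow network; the encoder and decoder are omitted.

```python
def euler_transport(velocity_fn, z_start, num_steps=10):
    """Integrate dz/dt = velocity_fn(z, t) from t = 0 to t = 1 with Euler steps."""
    z = list(z_start)
    dt = 1.0 / num_steps
    for k in range(num_steps):
        t = k * dt                      # time at the start of this step
        v = velocity_fn(z, t)           # predicted velocity at (z, t)
        z = [zi + dt * vi for zi, vi in zip(z, v)]
    return z

# Toy latents standing in for encoder outputs (hypothetical values).
z_rgb = [0.0, 1.0, -2.0]
z_raw = [1.0, 3.0, 0.5]

# Oracle field returning the ideal constant velocity z_raw - z_rgb.
# A real system would call the trained flow network here instead.
oracle = lambda z, t: [r - g for g, r in zip(z_rgb, z_raw)]

z_pred = euler_transport(oracle, z_rgb, num_steps=10)
```

With a perfectly constant velocity field, Euler integration reaches the RAW latent up to floating-point error for any number of steps, which is one reason straight-line paths are attractive for fast inference. A learned field is only approximately constant, so the step count becomes a trade-off between accuracy and speed.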

Extensive experiments demonstrate that RAW-Flow outperforms state-of-the-art methods across standard quantitative metrics (PSNR, SSIM, LPIPS) on benchmark datasets. Visual comparisons consistently show its superior ability to recover accurate colors, preserve global luminance, and reconstruct fine-grained textures that are often lost or distorted by previous approaches. The work successfully demonstrates that a deterministic latent flow matching paradigm, augmented with cross-domain feature alignment and multi-scale context guidance, provides a powerful and efficient solution for the highly ill-posed RGB-to-RAW reconstruction problem.
