Beyond Speedup -- Utilizing KV Cache for Sampling and Reasoning

KV caches, typically used only to speed up autoregressive decoding, encode contextual information that can be reused for downstream tasks at no extra cost. We propose treating the KV cache as a lightweight representation, eliminating the need to recompute or store full hidden states. Despite being weaker than dedicated embeddings, KV-derived representations are shown to be sufficient for two key applications: \textbf{(i) Chain-of-Embedding}, where they achieve competitive or superior performance on Llama-3.1-8B-Instruct and Qwen2-7B-Instruct; and \textbf{(ii) Fast/Slow Thinking Switching}, where they enable adaptive reasoning on Qwen3-8B and DeepSeek-R1-Distil-Qwen-14B, reducing token generation by up to $5.7\times$ with minimal accuracy loss. Our findings establish KV caches as a free, effective substrate for sampling and reasoning, opening new directions for representation reuse in LLM inference. Code: https://github.com/cmd2001/ICLR2026_KV-Embedding.

💡 Research Summary

The paper investigates the often‑overlooked potential of the key‑value (KV) cache that is automatically generated during autoregressive decoding of large language models (LLMs). While the KV cache is traditionally treated solely as a speed‑up mechanism for attention, the authors argue that it already contains rich contextual information that can be repurposed as a lightweight representation without any additional computation or memory overhead.

Two downstream applications are explored. The first is “Chain‑of‑Embedding” (CoE), a method that evaluates the quality of a generation by tracking the trajectory of internal representations across layers. The authors replace the original hidden‑state trajectory with a trajectory built from KV tensors. For each token they flatten the key and value matrices from all layers, average them, and obtain a token‑level embedding. By computing Euclidean distances and cosine angles between successive token embeddings, they derive the same Δr and Δθ metrics used in vanilla CoE. Because the KV tensors are already stored, this KV‑CoE incurs virtually zero extra FLOPs or memory. Experiments on Llama‑3.1‑8B‑Instruct and Qwen2‑7B‑Instruct show that KV‑CoE matches or slightly exceeds the original hidden‑state based CoE on several classification‑style self‑evaluation benchmarks, despite the embeddings being weaker than dedicated embedding models.

The second application is an adaptive “Fast/Slow Thinking Switch”. During generation the KV‑CoE score is monitored in real time. If the score exceeds a predefined difficulty threshold, the model switches to a “slow” mode that allocates more tokens, higher temperature, or additional reasoning steps; otherwise it stays in a “fast” mode that produces a single token per step. The switch is implemented simply by inserting control tokens (e.g.,

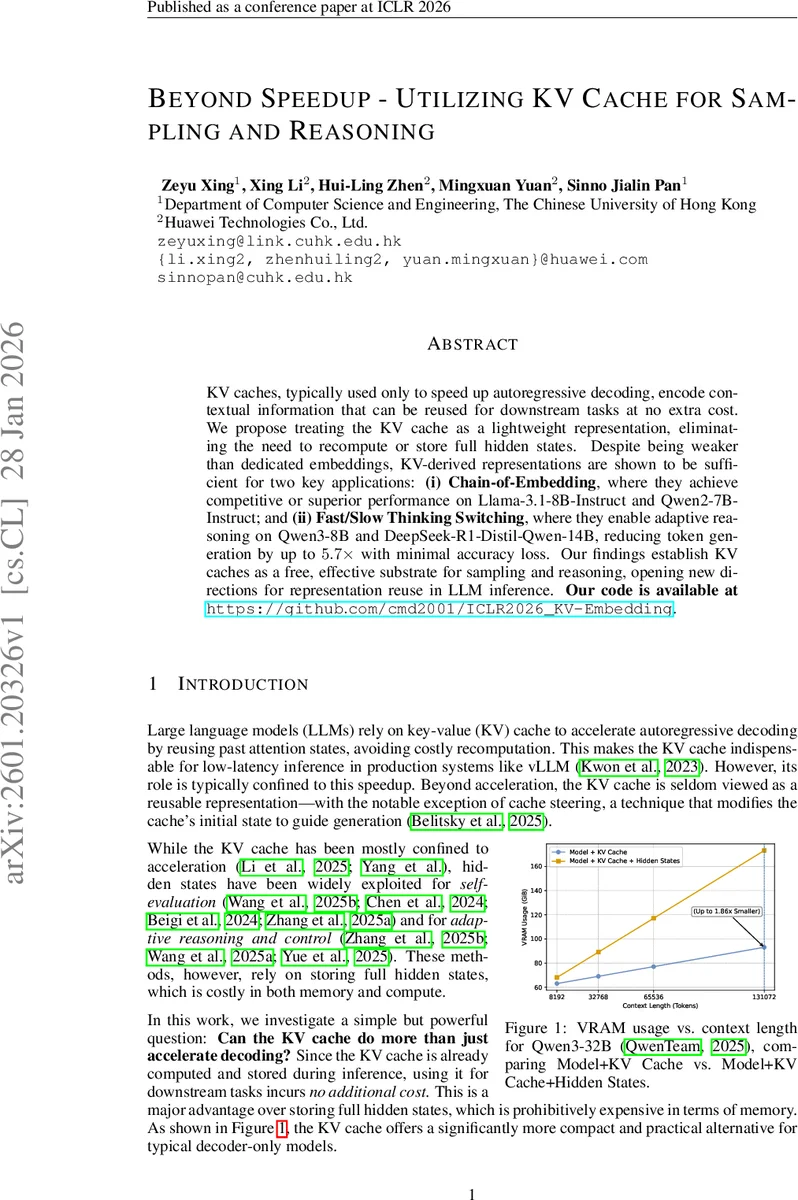

A detailed analysis of memory usage (Figure 1) demonstrates that keeping only the KV cache, instead of both KV cache and hidden states, can cut VRAM consumption by up to 1.86× for long contexts. The authors acknowledge that KV‑derived embeddings are not suitable for tasks requiring globally calibrated semantics (e.g., large‑scale retrieval) because they are anisotropic, position‑dependent, and have lower dimensionality (d_head ≪ d_model). However, for tasks with a limited candidate set or those that rely on relative ordering within a small group, KV embeddings provide sufficient discriminative power.

The paper also discusses limitations and future directions: adding lightweight normalization to mitigate anisotropy, incorporating KV‑aware contrastive objectives during pre‑training to improve representation quality, and extending KV‑based signals to multimodal or more complex control schemes.

In summary, the work presents a practical, cost‑free way to turn the KV cache—an inevitable by‑product of efficient LLM inference—into a useful representation for both evaluation (Chain‑of‑Embedding) and dynamic control (Fast/Slow Thinking). By demonstrating substantial token‑generation savings and competitive accuracy on modern instruction‑tuned models, the study opens a new design space for inference pipelines where the KV cache becomes a first‑class resource beyond mere acceleration.

Comments & Academic Discussion

Loading comments...

Leave a Comment