TPGDiff: Hierarchical Triple-Prior Guided Diffusion for Image Restoration

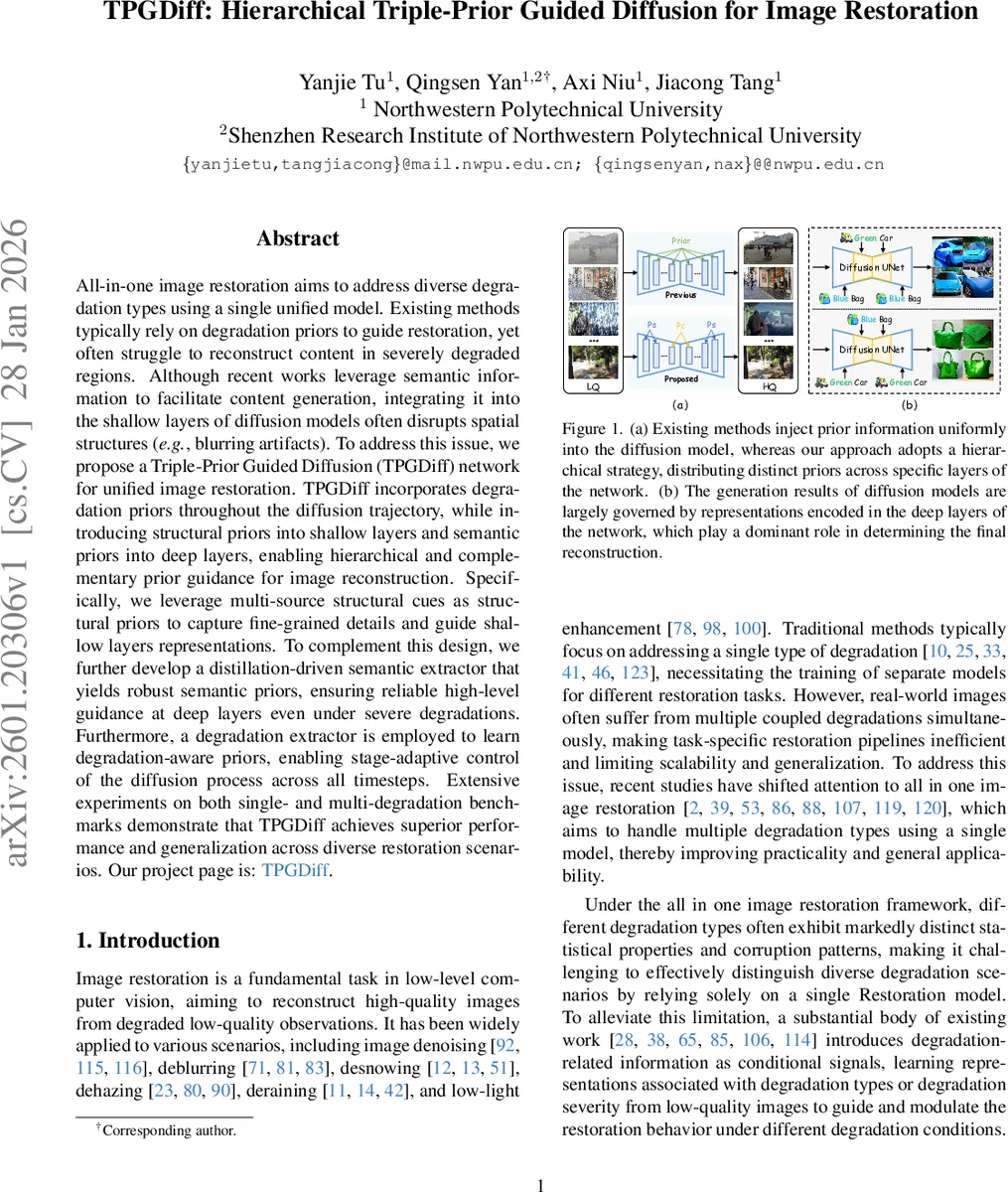

All-in-one image restoration aims to address diverse degradation types using a single unified model. Existing methods typically rely on degradation priors to guide restoration, yet often struggle to reconstruct content in severely degraded regions. Although recent works leverage semantic information to facilitate content generation, integrating it into the shallow layers of diffusion models often disrupts spatial structures (\emph{e.g.}, blurring artifacts). To address this issue, we propose a Triple-Prior Guided Diffusion (TPGDiff) network for unified image restoration. TPGDiff incorporates degradation priors throughout the diffusion trajectory, while introducing structural priors into shallow layers and semantic priors into deep layers, enabling hierarchical and complementary prior guidance for image reconstruction. Specifically, we leverage multi-source structural cues as structural priors to capture fine-grained details and guide shallow layers representations. To complement this design, we further develop a distillation-driven semantic extractor that yields robust semantic priors, ensuring reliable high-level guidance at deep layers even under severe degradations. Furthermore, a degradation extractor is employed to learn degradation-aware priors, enabling stage-adaptive control of the diffusion process across all timesteps. Extensive experiments on both single- and multi-degradation benchmarks demonstrate that TPGDiff achieves superior performance and generalization across diverse restoration scenarios. Our project page is: https://leoyjtu.github.io/tpgdiff-project.

💡 Research Summary

TPGDiff introduces a novel hierarchical prior‑guided diffusion framework for all‑in‑one image restoration. The authors identify three major shortcomings of existing approaches: (1) reliance on degradation priors alone, which cannot guarantee semantic plausibility; (2) premature injection of high‑level semantic cues into shallow diffusion layers, leading to structural blurring; and (3) lack of a systematic way to exploit heterogeneous priors. To address these issues, TPGDiff extracts three complementary priors from a low‑quality input: a degradation prior, a structural prior, and a semantic prior, and coordinates their injection across the UNet backbone of a diffusion model.

The degradation extractor learns degradation‑aware embeddings that modulate the reverse diffusion process at every timestep, providing stage‑adaptive control. The structural prior is built from three modalities—depth maps, segmentation masks, and Difference‑of‑Gaussians (DoG) features. Each modality is processed by a lightweight encoder with modality‑specific embeddings, flattened into token sequences, and then compressed via a Structural Token Aggregator (STA) that uses learnable latent tokens and cross‑attention. The resulting compact structural prior is injected into the shallow layers through a lightweight Structural Adapter, directly influencing low‑level feature channels via element‑wise addition and multiplication.

Semantic priors are obtained through a teacher‑student distillation scheme. A frozen teacher encoder extracts high‑quality semantic features from the ground‑truth image, while a student encoder learns to reproduce these features from the degraded input. A cosine‑similarity distillation loss forces the student’s representations to be degradation‑invariant. The distilled semantic prior is then used as a global context in the deep layers of the diffusion UNet via cross‑attention, ensuring that high‑level content remains consistent throughout the generation process.

All three priors are incorporated into the reverse stochastic differential equation governing the diffusion process: dx = hθ(t)(μ – x) – σ²(t) sθ(x, t; μ, z_sem, z_struct, z_deg) dt + σ(t) dŵ, where sθ is the score network conditioned on the three priors. This formulation allows the model to simultaneously exploit degradation information (for noise level adaptation), structural cues (for fine‑grained geometry), and semantic guidance (for global coherence).

Extensive experiments on both single‑degradation benchmarks (e.g., Gaussian noise, motion blur) and multi‑degradation datasets (combined noise, blur, low‑light, compression artifacts) demonstrate that TPGDiff consistently outperforms state‑of‑the‑art diffusion‑based restorers (MPerceiver, DiffRes) and leading all‑in‑one CNN methods (AirNet, PromptIR). Gains are reported in PSNR/SSIM (up to 1.2 dB improvement), LPIPS, and human perceptual studies. Ablation analyses confirm that removing any of the three priors degrades performance, highlighting their complementary nature. While the approach requires auxiliary pretrained models for depth, segmentation, and DoG extraction, the overall parameter increase is modest (<10 %) and inference memory remains comparable to existing diffusion models.

In summary, TPGDiff’s hierarchical coordination of degradation, structural, and semantic priors resolves the longstanding trade‑off between preserving fine‑level geometry and maintaining high‑level semantic fidelity in diffusion‑based image restoration. The work sets a new benchmark for unified restoration across diverse degradation scenarios and opens avenues for further research on dynamic prior selection and real‑time deployment.

Comments & Academic Discussion

Loading comments...

Leave a Comment