TRACER: Texture-Robust Affordance Chain-of-Thought for Deformable-Object Refinement

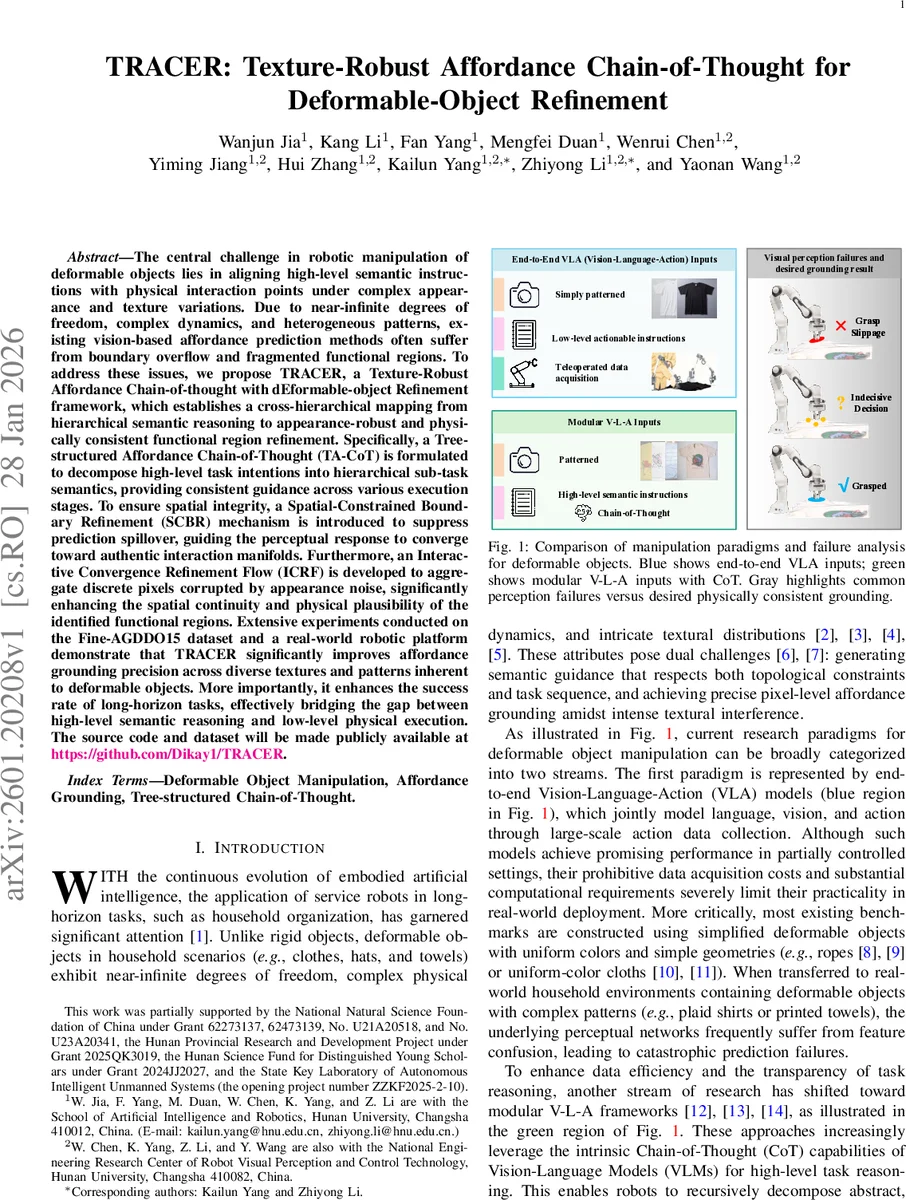

The central challenge in robotic manipulation of deformable objects lies in aligning high-level semantic instructions with physical interaction points under complex appearance and texture variations. Due to near-infinite degrees of freedom, complex dynamics, and heterogeneous patterns, existing vision-based affordance prediction methods often suffer from boundary overflow and fragmented functional regions. To address these issues, we propose TRACER, a Texture-Robust Affordance Chain-of-thought with dEformable-object Refinement framework, which establishes a cross-hierarchical mapping from hierarchical semantic reasoning to appearance-robust and physically consistent functional region refinement. Specifically, a Tree-structured Affordance Chain-of-Thought (TA-CoT) is formulated to decompose high-level task intentions into hierarchical sub-task semantics, providing consistent guidance across various execution stages. To ensure spatial integrity, a Spatial-Constrained Boundary Refinement (SCBR) mechanism is introduced to suppress prediction spillover, guiding the perceptual response to converge toward authentic interaction manifolds. Furthermore, an Interactive Convergence Refinement Flow (ICRF) is developed to aggregate discrete pixels corrupted by appearance noise, significantly enhancing the spatial continuity and physical plausibility of the identified functional regions. Extensive experiments conducted on the Fine-AGDDO15 dataset and a real-world robotic platform demonstrate that TRACER significantly improves affordance grounding precision across diverse textures and patterns inherent to deformable objects. More importantly, it enhances the success rate of long-horizon tasks, effectively bridging the gap between high-level semantic reasoning and low-level physical execution. The source code and dataset will be made publicly available at https://github.com/Dikay1/TRACER.

💡 Research Summary

TRACER (Texture‑Robust Affordance Chain‑of‑Thought for Deformable‑Object Refinement) tackles the long‑standing gap between high‑level language instructions and low‑level interaction points when manipulating highly textured deformable objects such as clothes, towels, and fabrics. Existing approaches either rely on end‑to‑end Vision‑Language‑Action (VLA) models that demand massive annotated action data and often fail on real‑world textures, or adopt modular V‑L‑A pipelines that use Chain‑of‑Thought (CoT) reasoning but still suffer from two critical perception defects: (1) spatial prediction overflow—affordance heatmaps spill beyond object boundaries, causing grasp slippage, and (2) functional region fragmentation—single functional zones are broken into scattered pixel clusters, making reliable target selection impossible.

TRACER introduces three synergistic components to close this perception loop.

-

Tree‑structured Affordance Chain‑of‑Thought (TA‑CoT) – High‑level commands (e.g., “fold a T‑shirt”) are parsed into a decision tree of hierarchical sub‑tasks (e.g., “grasp sleeve”, “lift sleeve”). Each node carries both what to do and when it is physically feasible, verified by a visual state‑verification module that checks the current topology (e.g., sleeve position). This ensures that language reasoning stays grounded in the actual object state, eliminating logical inconsistencies.

-

Spatial‑Constrained Boundary Refinement (SCBR) loss – Traditional affordance heatmaps are overly sensitive to texture variations and often extend into background regions. SCBR adds a boundary‑aware regularizer that penalizes activation outside the object mask and suppresses gradients near edges, encouraging the network to prioritize global structural cues over local texture cues. The result is a prediction that naturally contracts within the true physical contour of the deformable item, reducing grasp‑slippage risk.

-

Interactive Convergence Refinement Flow (ICRF) – Initial affordance predictions are frequently multimodal and fragmented. ICRF models a physically plausible convergence process: a learned pixel‑wise velocity field iteratively transports scattered activations toward a coherent interaction manifold. Unlike static MSE‑based flow methods, ICRF explicitly captures multimodality by allowing pixels to interact and merge, yielding a single, continuous region that respects physical occupancy constraints.

The framework builds upon the OS‑AGDO architecture and is trained in two stages: (i) coarse affordance heatmap generation with TA‑CoT conditioning, and (ii) refinement using SCBR and ICRF.

Experimental validation is performed on the Fine‑AGDDO15 dataset (15 object categories, 15 affordance classes) and on a real‑world bimanual robot platform. Quantitatively, TRACER improves Kullback‑Leibler Divergence by 4.8 %, Similarity (SIM) by 7.5 %, and Normalized Scanpath Saliency (NSS) by 4.3 % over the OS‑AGDO baseline. Qualitatively, it eliminates boundary overflow and merges fragmented zones, as illustrated in comparative visualizations. In real‑robot long‑horizon tasks, TRACER achieves a 70 % success rate for tissue pull‑out and 60 % for garment organization under diverse patterns—substantial gains over prior modular pipelines. Ablation studies confirm that each component contributes: TA‑CoT reduces logical failures, SCBR cuts overflow incidents by ~15 %, and ICRF improves region continuity by ~20 %.

Contributions: (1) First one‑shot, texture‑robust affordance grounding framework for complex deformable objects, requiring no expensive action datasets. (2) A closed‑loop mapping from hierarchical semantic reasoning to physically consistent visual refinement via TA‑CoT, SCBR, and ICRF. (3) Extensive multi‑modal evaluation demonstrating robustness to texture interference and improved manipulation stability.

Limitations and future work: The current system operates on 2D RGB images and does not explicitly model material properties such as elasticity or friction, which may limit performance on tasks demanding precise physical simulation. Extending TRACER with depth or point‑cloud inputs, integrating physics engines, and exploring multimodal CoT that incorporates tactile feedback are promising directions to achieve fully generalizable deformable‑object manipulation.

Comments & Academic Discussion

Loading comments...

Leave a Comment