LVLMs and Humans Ground Differently in Referential Communication

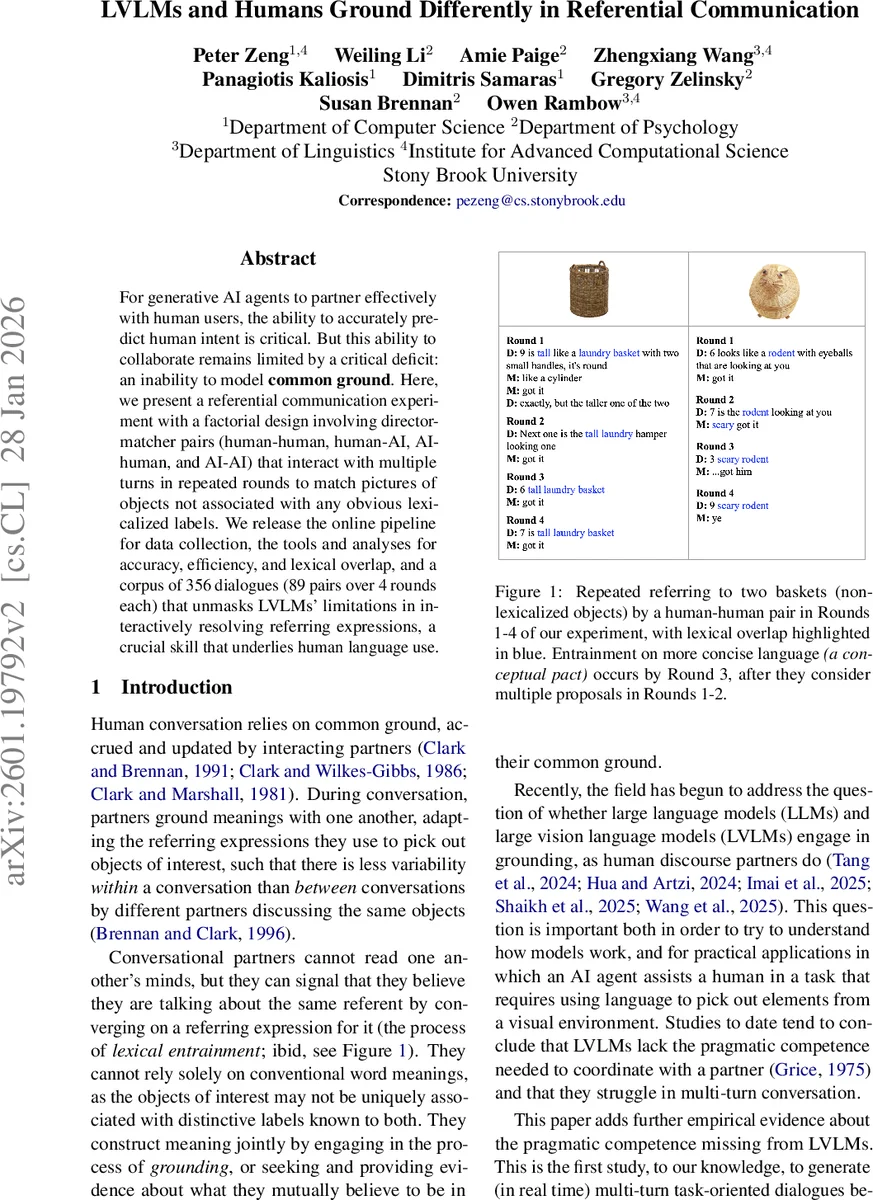

For generative AI agents to partner effectively with human users, the ability to accurately predict human intent is critical. But this ability to collaborate remains limited by a critical deficit: an inability to model common ground. Here, we present a referential communication experiment with a factorial design involving director-matcher pairs (human-human, human-AI, AI-human, and AI-AI) that interact with multiple turns in repeated rounds to match pictures of objects not associated with any obvious lexicalized labels. We release the online pipeline for data collection, the tools and analyses for accuracy, efficiency, and lexical overlap, and a corpus of 356 dialogues (89 pairs over 4 rounds each) that unmasks LVLMs’ limitations in interactively resolving referring expressions, a crucial skill that underlies human language use.

💡 Research Summary

This paper investigates the ability of large vision‑language models (LVLMs) to ground meaning in multi‑turn referential communication, a core prerequisite for effective human‑AI collaboration. Building on the classic Clark and Wilkes‑Gibbs (1986) director‑matcher task, the authors created an online experimental platform (oTree) where a “director” describes a sequence of 12 non‑lexicalized objects (baskets) and a “matcher” selects the corresponding objects on a shared visual interface. The task is repeated over four rounds with the same object set, allowing participants to develop and refine common ground across turns.

Four partner configurations were examined: human‑human (HH), human‑AI (HA), AI‑human (AH), and AI‑AI (AA). Human participants were recruited from Prolific, screened for native‑level English proficiency and a perfect approval record, and completed the full four‑round session (average duration ≈ 1 hour). For the AI side, the authors selected OpenAI’s GPT‑5.2 model, using the “none” reasoning mode to prioritize response speed. Prompt engineering included explicit role definitions, communication norms (conciseness, turn‑taking, comparative language), and a JSON‑based scaffolding that forced the model to perform a zero‑shot chain‑of‑thought before emitting an utterance. After iterative testing, GPT‑5.2 was chosen over earlier GPT‑4o because the task required more sophisticated visual‑linguistic reasoning.

The resulting corpus comprises 356 dialogues (89 dyads) across 4 rounds, amounting to 4,272 individual referential attempts. After discarding sessions with cheating or protocol violations, the final dataset contains 32 HH, 17 HA, 22 AH, and 18 AA dyads. The authors release the raw transcripts, the online pipeline, and analysis scripts for the community.

Three quantitative metrics capture grounding performance:

-

Communicative Success (Accuracy) – percentage of correctly matched baskets per round. HH dyads approach ceiling (≈ 98 % by round 4), whereas AA dyads plateau around 58 %, and HA/AH hover near 70 %. No systematic improvement across rounds is observed for AI‑involved conditions.

-

Communication Effort – total word count and number of dialogue turns per round, serving as proxies for efficiency. HH dyads show a clear downward trend (≈ 22 % fewer words and 30 % fewer turns by the final round), reflecting lexical entrainment and reduced need for clarification. AI‑involved dyads exhibit flat or slightly increasing effort, indicating an inability to economize language over time.

-

Lexical Entrainment (Round‑to‑Round Lexical Overlap, RLO) – proportion of content words in a referring expression that overlap with the expression used in the previous round. HH dyads achieve high RLO values (0.55–0.68), demonstrating the formation of conceptual pacts and reuse of concise terminology. AA dyads record near‑zero overlap (≈ 0.07), and HA/AH dyads remain low (≤ 0.20), showing that LVLMs neither adopt nor reinforce shared lexical conventions.

Qualitative inspection of dialogue excerpts reveals typical failure modes for LVLMs. When acting as director, the model often produces overly verbose or ambiguous descriptions, fails to incorporate feedback from the matcher, and does not converge on shorter, consistent labels. As matcher, the model can sometimes interpret human descriptions but frequently selects incorrect items, especially when the human’s expression deviates from the model’s training distribution. Moreover, LVLMs rarely initiate grounding acts (e.g., clarification questions) that humans naturally employ when uncertainty arises.

The authors discuss the implications of these findings. First, LVLMs currently lack pragmatic competence: they do not dynamically align with a partner’s mental model, nor do they engage in repair strategies essential for robust dialogue. Second, the asymmetry between director and matcher roles suggests that the model’s ability to generate concise, shared terminology is weaker than its capacity to parse existing language. Third, the “none” reasoning setting, while improving latency, appears to limit the depth of internal reasoning required for multi‑turn grounding.

Future work is outlined along several axes: (a) incorporating continual learning or reinforcement signals from interaction to adapt lexical conventions on‑the‑fly; (b) designing richer prompting frameworks that explicitly model Gricean maxims and encourage the model to request clarification; (c) exploring hybrid architectures that combine LVLM perception with a dedicated dialogue manager for grounding acts; and (d) extending the dataset to include multimodal cues such as gaze or pointing, which humans use to facilitate grounding.

In sum, the paper provides the first large‑scale, controlled study of LVLMs engaged in real‑time, multi‑turn referential communication across all possible director‑matcher role combinations. It demonstrates that, despite impressive single‑turn accuracy on benchmark datasets, LVLMs fall short of human performance in establishing common ground, reducing communicative effort, and forming lexical entrainment. The released corpus and analysis tools constitute a valuable benchmark for the community to develop next‑generation vision‑language agents capable of truly collaborative, grounded dialogue.

Comments & Academic Discussion

Loading comments...

Leave a Comment