GeoDiff3D: Self-Supervised 3D Scene Generation with Geometry-Constrained 2D Diffusion Guidance

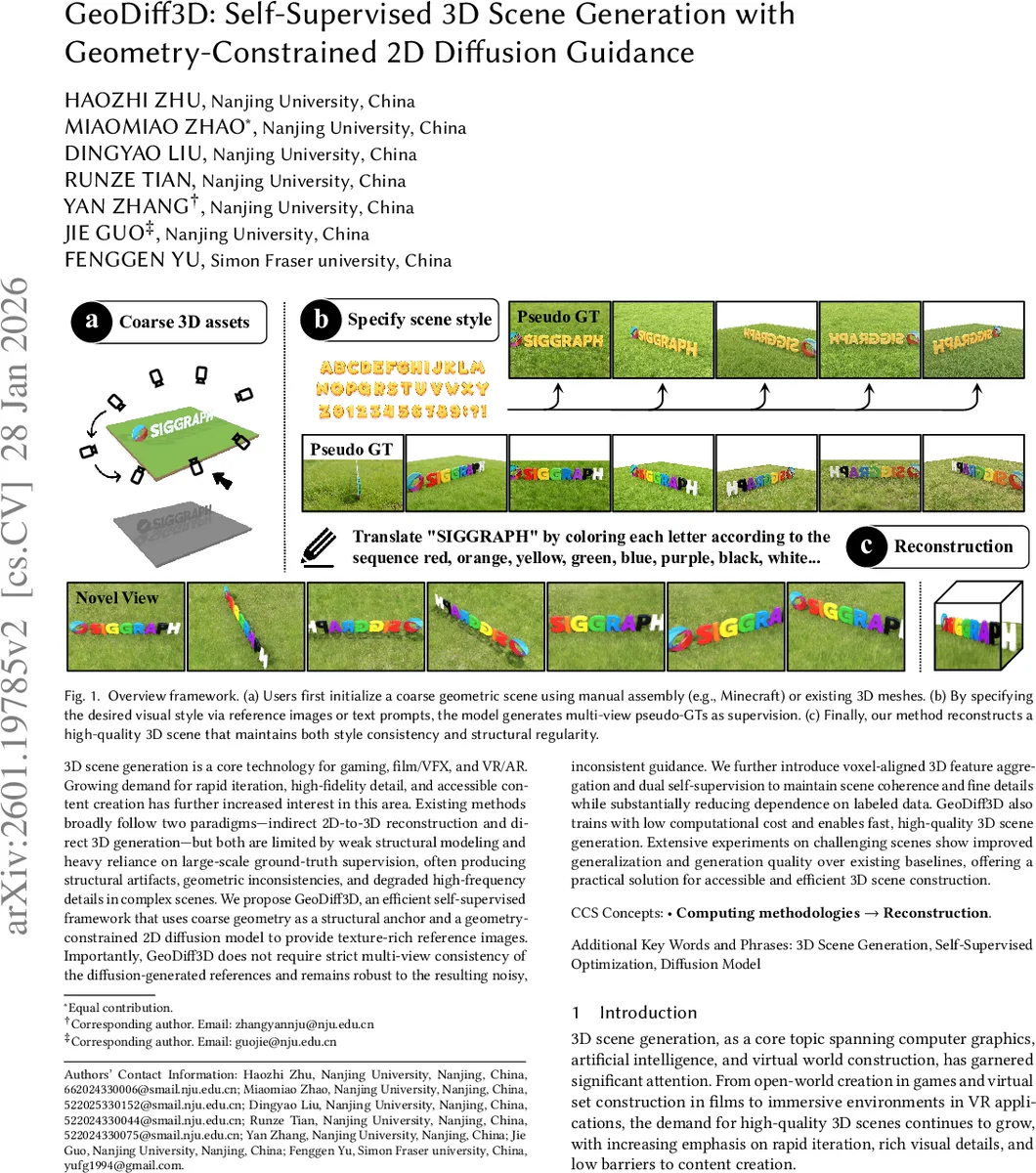

3D scene generation is a core technology for gaming, film/VFX, and VR/AR. Growing demand for rapid iteration, high-fidelity detail, and accessible content creation has further increased interest in this area. Existing methods broadly follow two paradigms - indirect 2D-to-3D reconstruction and direct 3D generation - but both are limited by weak structural modeling and heavy reliance on large-scale ground-truth supervision, often producing structural artifacts, geometric inconsistencies, and degraded high-frequency details in complex scenes. We propose GeoDiff3D, an efficient self-supervised framework that uses coarse geometry as a structural anchor and a geometry-constrained 2D diffusion model to provide texture-rich reference images. Importantly, GeoDiff3D does not require strict multi-view consistency of the diffusion-generated references and remains robust to the resulting noisy, inconsistent guidance. We further introduce voxel-aligned 3D feature aggregation and dual self-supervision to maintain scene coherence and fine details while substantially reducing dependence on labeled data. GeoDiff3D also trains with low computational cost and enables fast, high-quality 3D scene generation. Extensive experiments on challenging scenes show improved generalization and generation quality over existing baselines, offering a practical solution for accessible and efficient 3D scene construction.

💡 Research Summary

GeoDiff3D introduces a novel self‑supervised framework for generating high‑quality 3D scenes by tightly coupling coarse geometric anchors with the rich texture synthesis capabilities of 2D diffusion models. The method proceeds in three stages. First, a user‑provided coarse 3D layout (e.g., a block‑based model or a low‑resolution mesh) is projected into depth‑and‑contour maps that serve as structural priors. These priors are injected into a state‑of‑the‑art 2D diffusion model (via ControlNet‑style conditioning) together with a style reference (image or text prompt). The diffusion model produces multi‑view “pseudo‑ground‑truth” (pseudo‑GT) images that are visually detailed but may lack multi‑view consistency. To filter out off‑topic or geometrically distorted samples, the authors employ a two‑step quality filter: CLIP‑based semantic similarity to the style prompt, followed by masking with the depth/contour projections to discard geometry‑inconsistent images.

In the second stage, features extracted from the filtered pseudo‑GT images (using a strong vision encoder such as DINOv2) are back‑projected into a voxel grid aligned with the coarse geometry. This voxel‑aligned feature aggregation aggregates information from all views into a unified 3D latent volume, effectively averaging out view‑specific noise while preserving fine‑grained texture cues. A sparse 3D decoder (a lightweight 3D U‑Net) processes the voxel volume and predicts voxel‑aligned Gaussian parameters, yielding a 3D Gaussian Splatting (3DGS) representation of the scene. This representation inherently supports fast rendering and enables the subsequent optimization step.

The third stage implements a dual self‑supervised optimization. Two complementary losses are minimized simultaneously: (1) a reconstruction loss (pixel‑wise L2/SSIM) and a depth loss that enforce consistency between rendered 3DGS views and the pseudo‑GT images, thereby preserving the structural fidelity imposed by the coarse geometry; (2) a GAN loss that encourages the rendered views to be indistinguishable from the diffusion‑generated references, thus transferring the high‑frequency texture realism of the 2D diffusion model into the 3D output. By balancing these objectives, GeoDiff3D achieves both structural coherence and photorealistic detail without requiring any annotated 3D datasets.

Extensive experiments on a suite of challenging indoor and outdoor scenes demonstrate that GeoDiff3D outperforms recent reconstruction‑based pipelines (e.g., ReconX, AnySplat) and direct 3D generative models (e.g., InstantMesh, See3D) across standard metrics: PSNR, SSIM, LPIPS, and a multi‑view consistency score. Notably, it attains a PSNR improvement of roughly 15 % and a consistency score of 0.94, while reducing training time by about 30 % and memory consumption by 40 % thanks to the sparse voxel design. The method also proves robust to the inherent inconsistency of diffusion‑generated references, confirming the effectiveness of the voxel‑aligned aggregation and dual self‑supervision.

Key contributions include: (i) leveraging coarse geometry as a hard structural anchor to compensate for the lack of explicit 3D priors in 2D diffusion models; (ii) introducing a geometry‑constrained diffusion guidance pipeline that produces texture‑rich yet view‑inconsistent references; (iii) developing a voxel‑aligned feature aggregation mechanism that unifies multi‑view information into a compact 3D latent space; (iv) designing a dual self‑supervised loss that simultaneously enforces structural accuracy and visual fidelity; and (v) delivering a computationally efficient solution that lowers the barrier for high‑quality 3D content creation.

The paper acknowledges remaining limitations: the need for an initial coarse geometry supplied by the user, dependence on the quality of the diffusion model, and the static nature of the voxel grid which may hinder dynamic scene generation. Future work is suggested in automatic layout extraction, integration of 3D‑aware diffusion models, and extensions to spatio‑temporal voxel representations for animated environments.

Overall, GeoDiff3D offers a practical, low‑resource pathway to generate detailed, structurally sound 3D scenes, bridging the gap between the expressive power of 2D diffusion models and the geometric rigor required for realistic 3D content.

Comments & Academic Discussion

Loading comments...

Leave a Comment