Elastic Attention: Test-time Adaptive Sparsity Ratios for Efficient Transformers

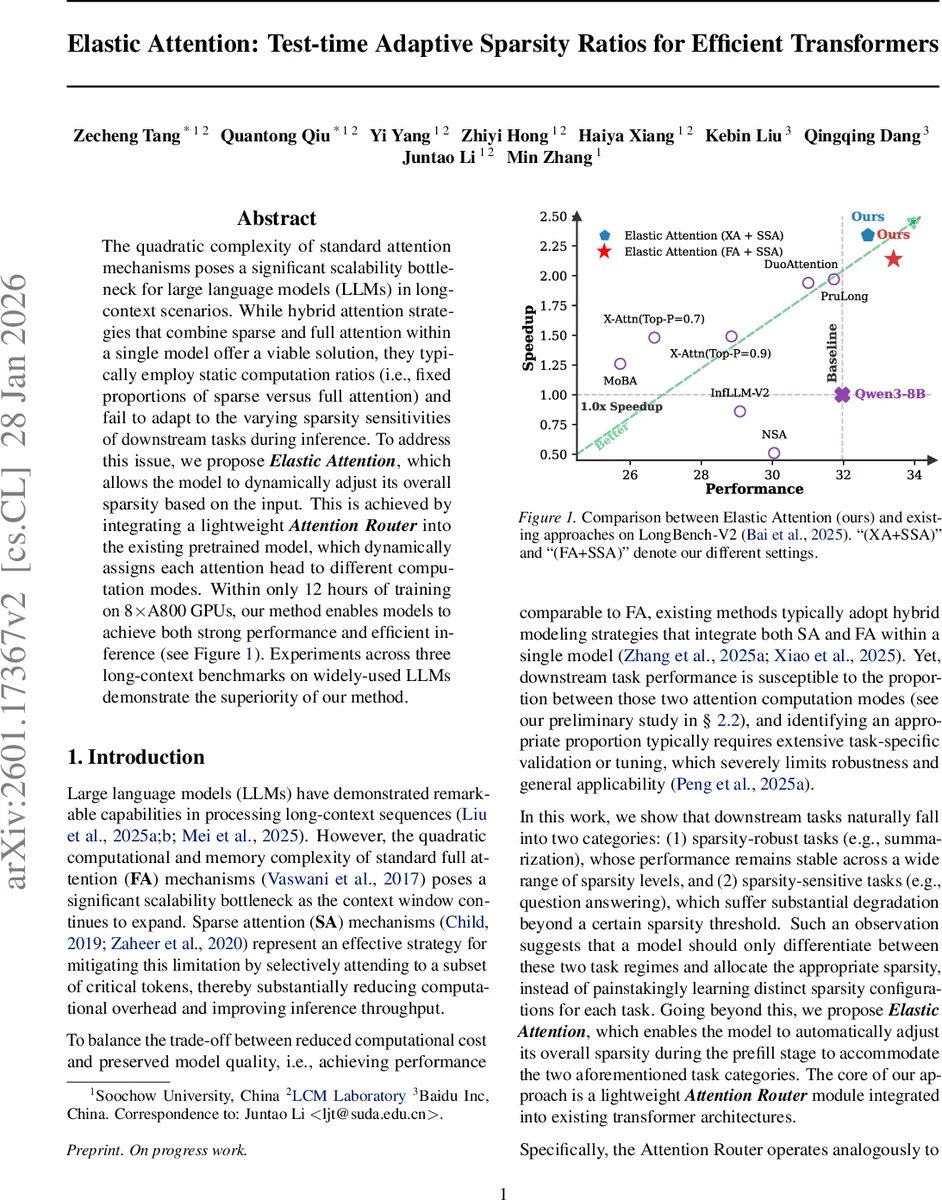

The quadratic complexity of standard attention mechanisms poses a significant scalability bottleneck for large language models (LLMs) in long-context scenarios. Hybrid attention strategies that combine sparse and full attention within a single model offer a viable solution. However, they typically use static computation ratios (i.e., fixed proportions of sparse versus full attention) and cannot adapt to the varying sparsity sensitivities of downstream tasks during inference. To address this issue, we propose Elastic Attention, which allows the model to adjust its overall sparsity dynamically based on the input. This is achieved by integrating a lightweight Attention Router into an existing pretrained model. The router dynamically assigns each attention head to a computation mode (full or sparse attention). With only 12 hours of training on 8× A800 GPUs, our method enables models to achieve both strong performance and efficient inference. Experiments across three long-context benchmarks on widely used LLMs demonstrate the superiority of our method.

💡 Research Summary

The paper tackles the scalability bottleneck of large language models (LLMs) on long contexts, which stems from the quadratic O(N²) cost of standard full attention (FA). Sparse attention (SA) reduces computation by attending to only a subset of tokens, and hybrid approaches that mix FA and SA heads within one model have become popular. However, existing hybrid methods use a static proportion of sparse heads (the model sparsity ratio, Ω_MSR) that must be tuned manually for each downstream task, which limits robustness and practicality.
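To make the O(N²) versus sparse trade-off concrete, the following back-of-the-envelope count of query-key scores per layer may help. It assumes, purely for illustration, that every SA head scores at most a fixed budget of k keys per query and that all heads otherwise cost the same. The function names and the numbers used (N = 32768, k = 2048, 32 heads) are hypothetical and are not taken from the paper.

```python
def full_pairs(n):
    # Full attention: every query scores every key -> N^2 pairs per head.
    return n * n


def sparse_pairs(n, k):
    # Hypothetical sparse head: each query scores at most k keys -> N*k pairs.
    return n * min(k, n)


def hybrid_relative_cost(n, k, num_heads, msr):
    # Score pairs of a layer in which a fraction `msr` of heads is sparse,
    # relative to the same layer with every head using full attention.
    n_sparse = round(msr * num_heads)
    n_full = num_heads - n_sparse
    total = n_full * full_pairs(n) + n_sparse * sparse_pairs(n, k)
    return total / (num_heads * full_pairs(n))


# Doubling N quadruples full-attention work but only doubles sparse work.
print(full_pairs(2 * 4096) // full_pairs(4096))                # 4
print(sparse_pairs(2 * 4096, 512) // sparse_pairs(4096, 512))  # 2
print(hybrid_relative_cost(32768, 2048, num_heads=32, msr=0.5))   # 0.53125
print(hybrid_relative_cost(32768, 2048, num_heads=32, msr=0.75))  # 0.296875
```

Under these assumptions, the cost of a static hybrid is fixed by Ω_MSR whatever the input, which is exactly the rigidity that a per-input choice of sparsity is meant to remove.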

Through an empirical study on Llama‑3.1‑8B‑Instruct across six LongBench tasks, the authors discover two broad categories of tasks: (1) sparsity‑robust tasks (e.g., summarization) whose performance is largely insensitive to the amount of sparsity, and (2) sparsity‑sensitive tasks (e.g., question answering) that suffer a sharp performance drop once sparsity exceeds a certain threshold. This observation motivates a model that can decide, at inference time, whether a given input belongs to a sparsity‑robust or sparsity‑sensitive regime and adjust its overall sparsity accordingly.

To achieve this, the authors introduce Elastic Attention, a lightweight plug‑in that can be added to any pretrained transformer without altering its backbone parameters. The core component is an Attention Router that operates on a per‑head basis. For each layer, the router pools the key representations across the sequence dimension to obtain a compact head‑wise summary. This summary is fed into two small multilayer perceptrons (MLPs): a “task MLP” that extracts task‑related features, and a “router MLP” that produces a 2‑dimensional logit for each head, indicating whether the head should use FA or SA.
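The router description can be sketched in NumPy as follows. Several details here are assumptions not stated in the summary: mean pooling over the sequence, a two-layer ReLU network for each of the task and router MLPs, the task MLP's output feeding the router MLP, weights shared across the heads of a layer, the hidden sizes, and the column order [FA, SA]. `AttentionRouterSketch` and `mlp` are hypothetical names.

```python
import numpy as np


def mlp(x, w1, b1, w2, b2):
    # Two-layer perceptron with ReLU, applied row-wise (one row per head).
    return np.maximum(x @ w1 + b1, 0.0) @ w2 + b2


class AttentionRouterSketch:
    """Hypothetical per-layer router: pooled keys -> per-head [FA, SA] logits."""

    def __init__(self, head_dim, hidden=64, task_dim=32, seed=0):
        rng = np.random.default_rng(seed)
        std = 0.02  # small init scale (assumption)
        # "Task MLP": head summary -> task-related features (assumed shapes).
        self.task = (rng.normal(0.0, std, (head_dim, hidden)), np.zeros(hidden),
                     rng.normal(0.0, std, (hidden, task_dim)), np.zeros(task_dim))
        # "Router MLP": task features -> 2 logits per head.
        self.router = (rng.normal(0.0, std, (task_dim, hidden)), np.zeros(hidden),
                       rng.normal(0.0, std, (hidden, 2)), np.zeros(2))

    def __call__(self, keys):
        # keys: (num_heads, seq_len, head_dim) for a single layer.
        summary = keys.mean(axis=1)           # pool over sequence -> (num_heads, head_dim)
        task_feat = mlp(summary, *self.task)  # (num_heads, task_dim)
        return mlp(task_feat, *self.router)   # (num_heads, 2); column 0 = FA, 1 = SA


keys = np.random.default_rng(1).normal(size=(8, 1024, 128))
logits = AttentionRouterSketch(head_dim=128)(keys)
print(logits.shape)  # (8, 2)
```

Because the keys are pooled before either MLP runs, the router's cost grows only linearly with sequence length, which fits its description as lightweight.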

Routing decisions use a Gumbel‑Softmax relaxation with an annealed temperature, which yields a soft probability matrix r_soft. In the forward pass, a hard decision r_hard is obtained by taking the argmax over the two classes. Because argmax is non‑differentiable, the authors use a straight‑through estimator (STE): the forward pass uses r_hard, while gradients flow through r_soft. Since training already applies hard routing in the forward pass, the routing behavior seen during training matches the behavior at test time.
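A minimal NumPy sketch of this routing step follows. Gumbel noise plus a temperature-scaled softmax gives r_soft, and an argmax gives the one-hot r_hard. NumPy has no automatic differentiation, so the straight-through estimator, which changes only the backward pass, appears as a comment. The exponential annealing schedule and its constants (`tau0`, `tau_min`, `decay`) are hypothetical; the summary says only that the temperature is annealed.

```python
import numpy as np


def gumbel_softmax(logits, tau, rng):
    # Add Gumbel(0, 1) noise, then apply a temperature-scaled softmax over the last axis.
    u = rng.uniform(low=1e-10, high=1.0, size=logits.shape)
    y = (logits - np.log(-np.log(u))) / tau
    y = y - y.max(axis=-1, keepdims=True)  # numerical stability
    e = np.exp(y)
    return e / e.sum(axis=-1, keepdims=True)


def route(logits, tau, rng):
    """Forward pass of hard Gumbel-Softmax routing for one layer."""
    r_soft = gumbel_softmax(logits, tau, rng)                  # (num_heads, 2), rows sum to 1
    r_hard = np.eye(logits.shape[-1])[r_soft.argmax(axis=-1)]  # one-hot decisions
    # Straight-through estimator (needs autodiff, so it is not computed here):
    #   r = r_hard + r_soft - stop_gradient(r_soft)
    # The forward value of r equals r_hard; its gradient equals that of r_soft.
    # PyTorch: r_hard + r_soft - r_soft.detach(); JAX: jax.lax.stop_gradient.
    return r_hard, r_soft


def anneal(step, tau0=1.0, tau_min=0.1, decay=1e-3):
    # Hypothetical exponential temperature schedule, clipped at tau_min.
    return max(tau_min, tau0 * np.exp(-decay * step))


rng = np.random.default_rng(0)
logits = np.array([[2.0, -1.0], [0.0, 0.5], [-3.0, 3.0]])  # 3 heads, [FA, SA]
r_hard, r_soft = route(logits, anneal(step=0), rng)
print(r_hard.argmax(axis=-1))  # per-head decision: 0 = FA, 1 = SA
```

As the temperature falls, r_soft moves closer to one-hot, so the soft probabilities that carry the gradient increasingly agree with the hard decisions used in the forward pass.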

The authors define two sparsity metrics: (1) the Model Sparsity Ratio (Ω_MSR), the fraction of heads assigned to SA, and (2) the Effective Sparsity Ratio (Ω_ESR), the actual proportion of tokens pruned across all sparse heads. Training optimizes a combined objective: the standard cross‑entropy language‑modeling loss plus a sparsity regularization term that penalizes deviations from a task‑specific target interval.
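The two sparsity metrics and the combined objective can be sketched as follows. The summary does not specify the regularizer's exact form, which ratio it constrains, or its weight. The squared-hinge `interval_penalty` applied to Ω_MSR, the weight `lam`, and the per-head `pruned_fraction` input used to compute Ω_ESR are therefore all assumptions made for illustration.

```python
import numpy as np


def model_sparsity_ratio(r_hard):
    # r_hard: (num_layers, num_heads, 2) one-hot; column 1 = SA (assumed convention).
    return float(r_hard[..., 1].mean())


def effective_sparsity_ratio(pruned_fraction, r_hard):
    # pruned_fraction: (num_layers, num_heads) hypothetical share of tokens each head
    # prunes when routed to SA; averaged over the SA-routed heads only.
    sa = r_hard[..., 1].astype(bool)
    return float(pruned_fraction[sa].mean()) if sa.any() else 0.0


def interval_penalty(ratio, lo, hi):
    # Hypothetical squared hinge: zero inside [lo, hi], quadratic outside.
    return max(lo - ratio, 0.0) ** 2 + max(ratio - hi, 0.0) ** 2


def total_loss(lm_loss, ratio, lo, hi, lam=1.0):
    # Cross-entropy LM loss plus a weighted sparsity regularizer (lam is hypothetical).
    return lm_loss + lam * interval_penalty(ratio, lo, hi)


choices = np.array([[1, 1, 0, 1], [0, 1, 0, 0]])  # 2 layers x 4 heads; 1 = SA
r_hard = np.eye(2)[choices]                        # (2, 4, 2) one-hot
pruned = np.array([[0.75] * 4, [0.5] * 4])         # hypothetical per-head pruning shares
msr = model_sparsity_ratio(r_hard)                 # 4 of 8 heads sparse -> 0.5
esr = effective_sparsity_ratio(pruned, r_hard)     # (3 * 0.75 + 0.5) / 4 -> 0.6875
print(msr, esr, total_loss(2.0, msr, lo=0.75, hi=1.0))  # 0.5 0.6875 2.0625
```

An interval target, rather than a single value, leaves the model free to choose any sparsity within the range without penalty, which fits the aim of letting the input decide how sparse to be.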
