Sparsity-Aware Low-Rank Representation for Efficient Fine-Tuning of Large Language Models

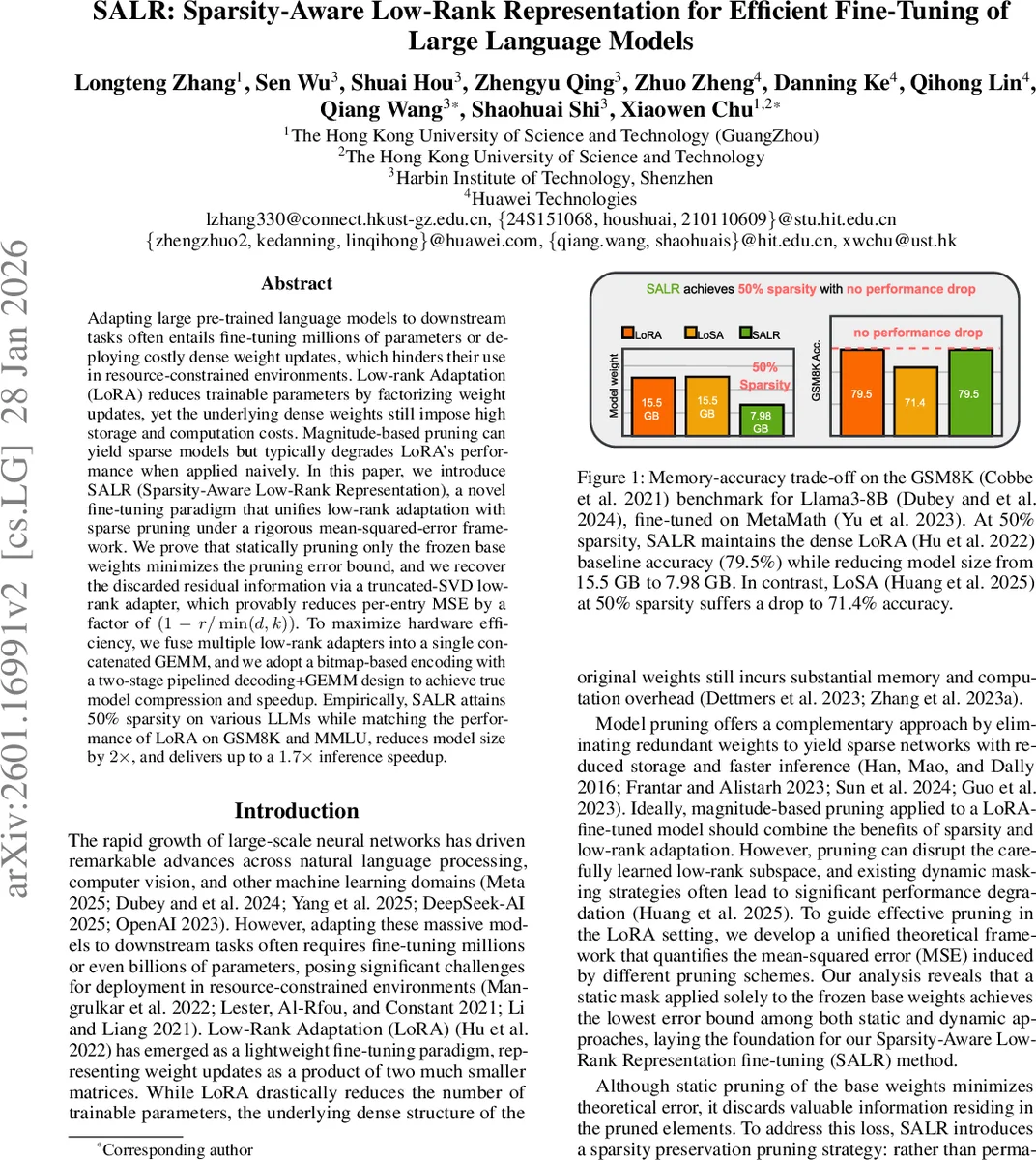

Adapting large pre-trained language models to downstream tasks often entails fine-tuning millions of parameters or deploying costly dense weight updates, which hinders their use in resource-constrained environments. Low-rank Adaptation (LoRA) reduces trainable parameters by factorizing weight updates, yet the underlying dense weights still impose high storage and computation costs. Magnitude-based pruning can yield sparse models but typically degrades LoRA’s performance when applied naively. In this paper, we introduce SALR (Sparsity-Aware Low-Rank Representation), a novel fine-tuning paradigm that unifies low-rank adaptation with sparse pruning under a rigorous mean-squared-error framework. We prove that statically pruning only the frozen base weights minimizes the pruning error bound, and we recover the discarded residual information via a truncated-SVD low-rank adapter, which provably reduces per-entry MSE by a factor of $(1 - r/\min(d,k))$. To maximize hardware efficiency, we fuse multiple low-rank adapters into a single concatenated GEMM, and we adopt a bitmap-based encoding with a two-stage pipelined decoding + GEMM design to achieve true model compression and speedup. Empirically, SALR attains 50% sparsity on various LLMs while matching the performance of LoRA on GSM8K and MMLU, reduces model size by $2\times$, and delivers up to a $1.7\times$ inference speedup.

💡 Research Summary

**

The paper introduces SALR (Sparsity‑Aware Low‑Rank Representation), a novel fine‑tuning paradigm that simultaneously addresses the storage and compute overheads of large language models (LLMs) when adapting them to downstream tasks. Traditional Low‑Rank Adaptation (LoRA) reduces the number of trainable parameters by representing weight updates as a product of two small matrices (A·B), but the underlying frozen base weights (W₀) remain dense, limiting memory savings and inference speed. Conversely, magnitude‑based pruning can sparsify W₀, yet naïvely applying pruning to a LoRA‑fine‑tuned model often destroys the carefully learned low‑rank subspace, causing severe performance degradation.

SALR unifies low‑rank adaptation and sparse pruning under a rigorous mean‑squared‑error (MSE) analysis. First, the authors model weight entries as Gaussian variables and derive a closed‑form expression for the MSE incurred by magnitude pruning at any sparsity ratio p (Theorem 1). They then consider three pruning strategies in the LoRA setting: (1) a static mask applied only to W₀, (2) a dynamic mask derived from the combined matrix U = W₀ + Δ but still pruning only W₀, and (3) a dynamic mask applied to the full U. Theoretical bounds (Theorem 2) prove that strategy 1 yields the smallest per‑entry MSE for every p, establishing that static pruning of the frozen base weights is optimal.

However, static pruning discards the information contained in the zeroed entries. SALR addresses this by preserving the pruned residual matrix E = W – Ŵ. The residual is factorized via truncated singular value decomposition (SVD) to obtain a rank‑r approximation Eᵣ. The Eckart‑Young theorem and subsequent analysis (Theorem 3) show that adding Eᵣ reduces the per‑entry MSE by a factor of (1 – r/min(d,k)), where d and k are the input and output dimensions of the weight matrix. In practice, this means that even with 50 % sparsity, a modest rank (e.g., r = 8 for a 4096×4096 layer) can recover most of the lost accuracy.

Training with multiple low‑rank adapters (the original LoRA A·B and the SVD‑derived residual) could lead to kernel‑launch overhead if each adapter is applied sequentially. SALR solves this by concatenating all adapters along the rank dimension, forming A_cat and B_cat. The forward pass then reduces to two large GEMM operations (x·A_cat and the result·B_cat), dramatically improving hardware utilization on GPUs/TPUs. This fusion also simplifies memory access patterns and enables higher throughput.

To achieve genuine model compression, SALR stores the sparsified base weights using a bitmap encoding. Only the non‑zero entries and a compact bitmask are kept on disk. At inference time, a two‑stage pipelined decoder reconstructs sparse sub‑matrices while the LoRA computation proceeds in parallel. The second stage feeds the reconstructed blocks into the fused GEMM kernels. This design hides decoding latency behind compute, ensuring the pipeline remains compute‑bound throughout.

Empirical evaluation spans several state‑of‑the‑art LLMs (e.g., Llama‑3‑8B, Llama‑2‑13B). With a target sparsity of 50 %, SALR matches LoRA’s performance on GSM8K (≈79.5 % accuracy) and MMLU, while reducing model size from ~15.5 GB to ~7.9 GB—a 2× compression. Inference speedups of up to 1.7× are reported, measured on a single A100 GPU with batch size 1. Comparisons against recent methods—LoSA (static pruning of both base and low‑rank weights) and SparseLoRA (dynamic pruning without actual model sparsity at inference)—show that SALR uniquely delivers both high accuracy and true speedup/compression.

Key contributions are:

- A unified MSE‑based theoretical framework proving that static pruning of frozen weights minimizes error.

- A sparsity‑preserving residual recovery via truncated SVD, with provable MSE reduction.

- An adapter‑concatenation scheme that fuses multiple low‑rank updates into a single GEMM, maximizing accelerator efficiency.

- A practical deployment pipeline using bitmap encoding and a two‑stage decoding‑plus‑GEMM pipeline for real‑world model size reduction and inference acceleration.

Overall, SALR offers a mathematically grounded, hardware‑aware solution that makes fine‑tuning and deploying large language models feasible in resource‑constrained environments without sacrificing task performance.

Comments & Academic Discussion

Loading comments...

Leave a Comment