ColorConceptBench: A Benchmark for Probabilistic Color-Concept Understanding in Text-to-Image Models

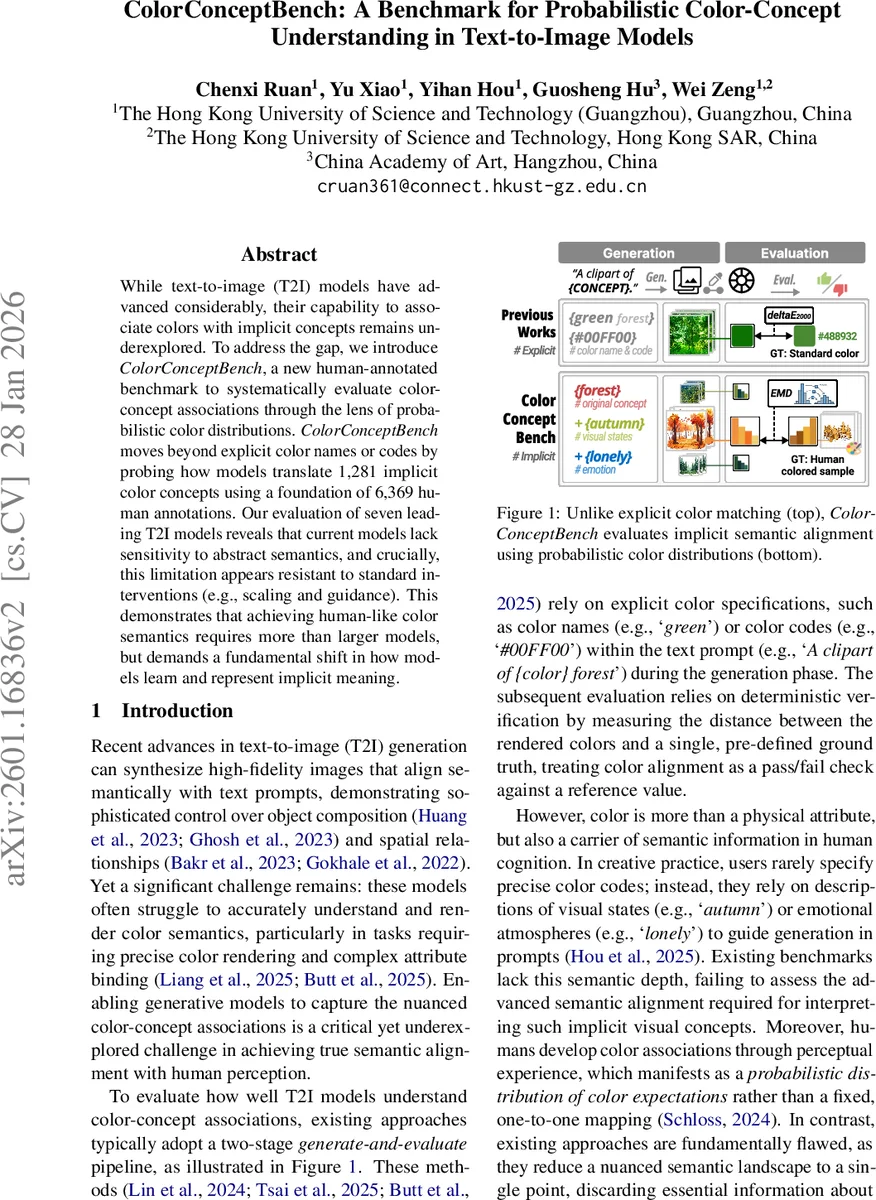

While text-to-image (T2I) models have advanced considerably, their capability to associate colors with implicit concepts remains underexplored. To address the gap, we introduce ColorConceptBench, a new human-annotated benchmark to systematically evaluate color-concept associations through the lens of probabilistic color distributions. ColorConceptBench moves beyond explicit color names or codes by probing how models translate 1,281 implicit color concepts using a foundation of 6,369 human annotations. Our evaluation of seven leading T2I models reveals that current models lack sensitivity to abstract semantics, and crucially, this limitation appears resistant to standard interventions (e.g., scaling and guidance). This demonstrates that achieving human-like color semantics requires more than larger models, but demands a fundamental shift in how models learn and represent implicit meaning.

💡 Research Summary

ColorConceptBench introduces a novel, human‑grounded benchmark for evaluating how well text‑to‑image (T2I) models understand and reproduce the probabilistic color semantics associated with implicit concepts. The authors first identify 1,281 concepts that combine concrete objects (drawn from the THINGS dataset) with descriptive adjectives covering two dimensions: visual states (e.g., “polluted water”, “unripe plant”) and emotions (e.g., “lonely”, “cozy”). Frequency filtering with the COCA corpus ensures linguistic relevance, and iterative designer review guarantees that each concept is both semantically and visually plausible.

To isolate color as the primary variable, the team generates five sketches per concept using two state‑of‑the‑art generators (Qwen‑Image and Stable Diffusion 3.5 Medium). A professional artist selects the clearest sketch for each concept, after which 168 professional designers each colorize five sketches, yielding 6,369 high‑quality colorizations. Designers are instructed to rely on everyday experience rather than personal style, and outlier removal plus quality‑control pipelines produce a stable set of human color choices.

For each colored sketch, the authors extract the target object region using Grounding‑DINO followed by Segment Anything Model (SAM). Colors are quantized in CIELAB space, and a perceptual clustering step (ΔE2000) separates distinct visual modes (e.g., red apples vs. green apples). Within each cluster, indistinguishable colors are merged, and the final human distribution (P_H(x|c)) is built by weighting each cluster according to its population. This process captures both the diversity of possible colors and the relative intensity with which humans associate each hue to a concept.

Seven leading open‑source T2I models are evaluated: Stable Diffusion XL, SD‑3, SD‑3.5, Flux‑1‑dev, Qwen‑Image, OmniGen2, and SAN‑A‑1.5. For every concept the authors generate images across two visual styles (natural and clipart) and seven classifier‑free guidance (CFG) scales, producing five samples per configuration at 1024×1024 resolution. The same extraction pipeline yields a model‑generated distribution (P_M(x|c)).

Four quantitative metrics compare (P_H) and (P_M): (1) Pearson Correlation Coefficient (PCC) for linear similarity, (2) Earth Mover’s Distance (EMD) for perceptual distance in CIELAB, (3) Entropy Difference (ED) to assess whether the model captures the complexity of human color diversity, and (4) Dominant Color Accuracy (DCA), a deterministic check that the most probable color bin matches between human and model.

Results reveal a clear dichotomy. For concrete object‑color pairs (e.g., “apple”, “car”), models achieve moderate PCC (0.6–0.8), low EMD (≈10–15), and DCA above 70 %. However, for abstract visual‑state or emotional concepts (“lonely”, “festive”, “polluted water”), PCC drops below 0.3, EMD rises above 30, and DCA falls under 20 %. Scaling model parameters from 1 B to 10 B or increasing CFG from 1 to 15 yields negligible improvements; in some cases higher guidance even harms DCA, suggesting that stronger conditioning amplifies deterministic color cues at the expense of probabilistic nuance.

The authors argue that current diffusion‑based T2I models primarily learn object‑centric visual features and lack mechanisms to encode the richer, probabilistic semantics of color‑concept associations. They propose three avenues for future work: (i) incorporating explicit color‑concept annotations during large‑scale multimodal pre‑training, (ii) integrating the human‑derived color distributions directly into the loss function as a probabilistic regularizer, and (iii) enriching prompts with structured meta‑information (e.g., emotion tags, cultural context) to guide the model toward the appropriate color manifold.

In sum, ColorConceptBench provides the first systematic, distribution‑based evaluation of implicit color semantics in generative models. By exposing a persistent gap between human perception and model output, the benchmark encourages the community to move beyond pixel‑level fidelity toward truly semantic color control that aligns with human affect, culture, and imagination.

Comments & Academic Discussion

Loading comments...

Leave a Comment