AfroScope: A Framework for Studying the Linguistic Landscape of Africa

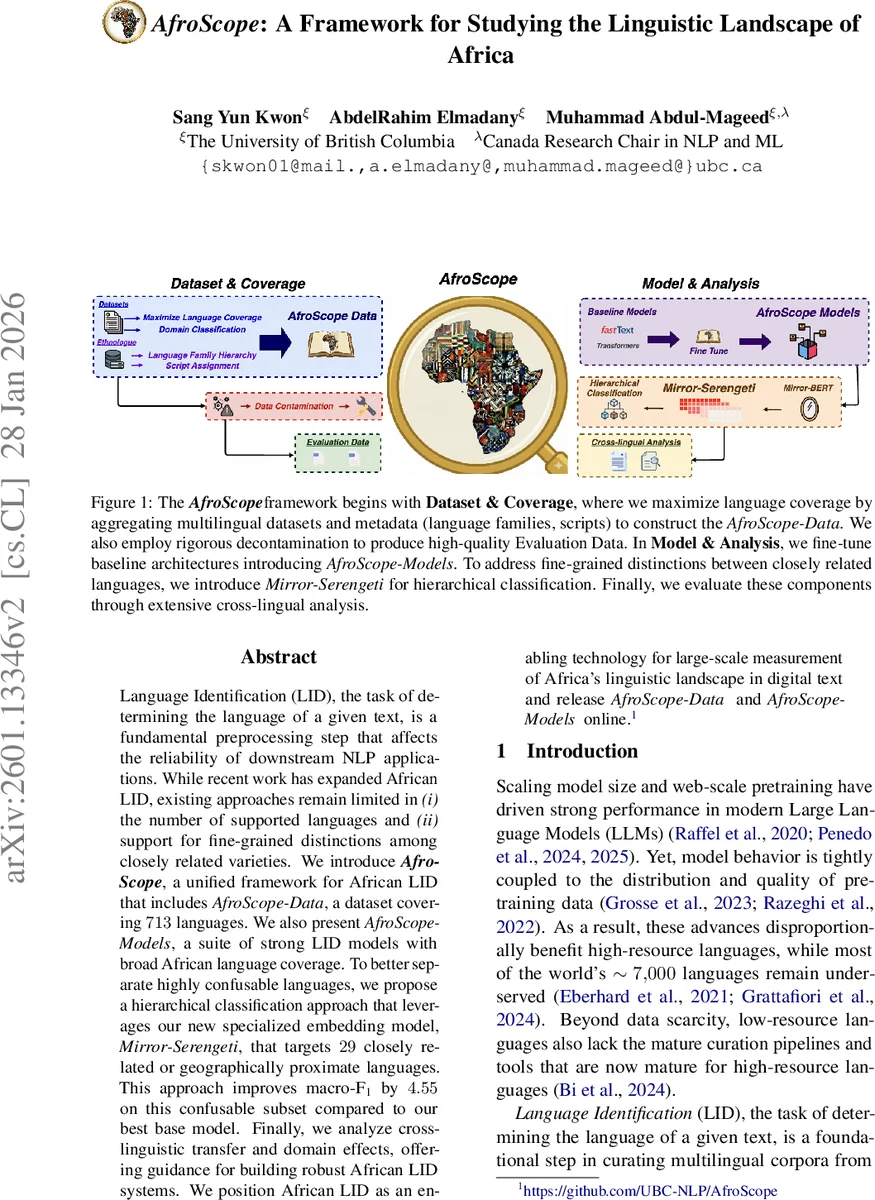

Language Identification (LID) is the task of determining the language of a given text and is a fundamental preprocessing step that affects the reliability of downstream NLP applications. While recent work has expanded LID coverage for African languages, existing approaches remain limited in (i) the number of supported languages and (ii) their ability to make fine-grained distinctions among closely related varieties. We introduce AfroScope, a unified framework for African LID that includes AfroScope-Data, a dataset covering 713 African languages, and AfroScope-Models, a suite of strong LID models with broad language coverage. To better distinguish highly confusable languages, we propose a hierarchical classification approach that leverages Mirror-Serengeti, a specialized embedding model targeting 29 closely related or geographically proximate languages. This approach improves macro F1 by 4.55 on this confusable subset compared to our best base model. Finally, we analyze cross linguistic transfer and domain effects, offering guidance for building robust African LID systems. We position African LID as an enabling technology for large scale measurement of Africas linguistic landscape in digital text and release AfroScope-Data and AfroScope-Models publicly.

💡 Research Summary

AfroScope presents a comprehensive solution to two persistent challenges in African language identification (LID): limited language coverage and difficulty distinguishing closely related varieties. The authors first construct AfroScope‑Data, a massive, curated dataset that aggregates 11 publicly available sources to cover 713 African languages across nine language families, seven scripts, and nine domains, totaling 19,682,636 unique sentences. Language labels are standardized to ISO‑639‑3 codes, enriched with family hierarchy and script metadata from Ethnologue, and de‑duplicated using a 4‑gram containment check to minimize train‑test leakage. To prevent high‑resource languages from dominating the training signal, each language is capped at 100 K sentences for training and 100 sentences for evaluation, with a two‑stage sampling strategy that first draws from primary sources and then supplements from secondary sources when needed.

Using this dataset, the authors fine‑tune a suite of LID models—AfroScope‑Models—including FastText‑based classifiers (a custom FastText model and ConLID, a contrastive learning variant), transformer‑based models pre‑trained for African languages (AfroLID, Serengeti based on XLM‑R, and Cheetah based on T5), and evaluate them under both internal (blind test split) and external (original secondary datasets) settings. Macro‑F1 is the primary metric. Across all 713 languages, the best AfroScope‑Model achieves a macro‑F1 of 97.2 %, substantially outperforming prior African LID baselines. An analysis of performance versus training data size reveals an “inflection point” at roughly 980 sentences: languages with fewer than 98 sentences (low‑resource) average 41.6 % macro‑F1, those with 98–980 sentences (medium‑resource) average 89.1 %, and those with more than 980 sentences (high‑resource) plateau around 97.7 % macro‑F1.

To address the second challenge—fine‑grained disambiguation—the paper introduces Mirror‑Serengeti, a hierarchical classification framework targeting 29 highly confusable languages. Mirror‑Serengeti first predicts a coarse language‑family label, then refines the prediction using a contrastive embedding model specifically trained on the confusable subset. This hierarchical approach yields a 4.55 % absolute gain in macro‑F1 on the confusable subset compared with the best non‑hierarchical baseline, and markedly reduces error rates for low‑resource languages within that group.

Domain analysis shows that religious and news texts achieve the highest accuracy, while web, health, and other domains exhibit greater variance, underscoring the impact of domain bias on LID robustness. The authors also conduct a detailed cross‑lingual transfer study: positive transfer is strongest when source and target languages share both family and script, whereas mismatched scripts within the same family can cause negative interference. These findings suggest that future data collection should prioritize family‑ and script‑diverse corpora and that multi‑script training strategies can mitigate transfer loss.

Finally, the authors release both AfroScope‑Data and AfroScope‑Models publicly, enabling reproducibility and facilitating downstream applications such as large‑scale multilingual corpus curation, pre‑training of multilingual language models, and language‑aware information retrieval for African languages. By providing extensive coverage, a specialized hierarchical disambiguation mechanism, and thorough analyses of resource, domain, and transfer effects, AfroScope establishes a new benchmark for African LID and offers practical guidance for building robust, inclusive language technologies across the continent.

Comments & Academic Discussion

Loading comments...

Leave a Comment