Arab Voices: Mapping Standard and Dialectal Arabic Speech Technology

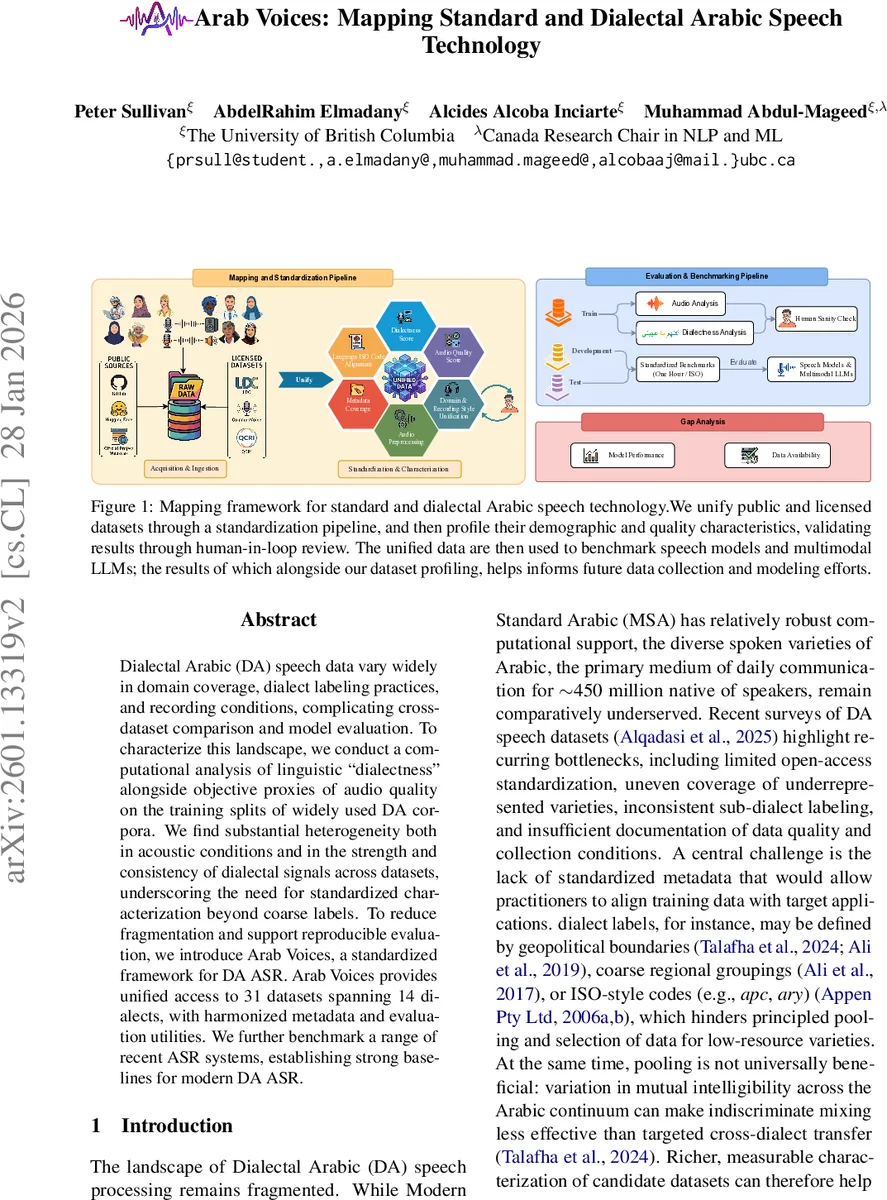

Dialectal Arabic (DA) speech data vary widely in domain coverage, dialect labeling practices, and recording conditions, complicating cross-dataset comparison and model evaluation. To characterize this landscape, we conduct a computational analysis of linguistic ``dialectness’’ alongside objective proxies of audio quality on the training splits of widely used DA corpora. We find substantial heterogeneity both in acoustic conditions and in the strength and consistency of dialectal signals across datasets, underscoring the need for standardized characterization beyond coarse labels. To reduce fragmentation and support reproducible evaluation, we introduce Arab Voices, a standardized framework for DA ASR. Arab Voices provides unified access to 31 datasets spanning 14 dialects, with harmonized metadata and evaluation utilities. We further benchmark a range of recent ASR systems, establishing strong baselines for modern DA ASR.

💡 Research Summary

The paper addresses the fragmented state of Dialectal Arabic (DA) speech resources by introducing a comprehensive framework called Arab Voices. The authors first collect 31 publicly available and licensed DA corpora, covering 14 Arabic dialects and a wide range of recording styles (studio, telephone, broadcast, YouTube, etc.). All audio is normalized to mono, 16 kHz, 16‑bit PCM, and transcripts are converted to Arabic script while preserving diacritics. A unified schema stores utterance ID, audio path, duration, raw and standardized transcripts, speaker ID, recording metadata, and both domain and dialect labels.

Metadata standardization is a core contribution. Dialect tags are aligned to ISO 639‑3 codes combined with country‑region identifiers (e.g., “afb_ARE‑AZ” for Gulf Arabic spoken in Abu Dhabi). When location information is ambiguous, Ethnologue language maps are consulted. Domain labels, originally 61 distinct strings, are manually collapsed into 11 broad themes to enable consistent reporting. A sanity‑check on a stratified sample of 83 utterances led to minor rule adjustments and highlighted datasets that require conservative handling.

The framework then automatically characterizes each dataset along two measurable axes. The “dialectness” score is derived from a pre‑trained dialect identification model that outputs the probability that an utterance belongs to its annotated dialect. The “audio‑quality” score aggregates objective acoustic metrics such as signal‑to‑noise ratio, clipping rate, reverberation time, and sampling consistency. Analysis shows substantial heterogeneity: even within the same dialect, recordings from broadcast, telephone, or YouTube differ markedly in both scores, and several corpora exhibit low dialectness, indicating weak alignment between label and linguistic content.

Using these profiled datasets, the authors construct a multi‑dialect benchmark. The benchmark defines a clear train/validation/test split, guarantees at least one hour of test material per dialect, and supplies evaluation utilities that respect the harmonized metadata. They evaluate a diverse set of recent ASR systems—including OpenAI Whisper, Conformer‑based models, wav2vec 2.0, and multimodal foundation models capable of processing speech (e.g., SpeechGPT). The best system achieves an average word error rate (WER) of 18 % across all test sets, but performance varies widely: WER ranges from 12 % for well‑recorded broadcast/read data to over 30 % for low‑quality, code‑switching, or conversational YouTube recordings. The results highlight that current models are still sensitive to acoustic conditions and dialectal variation, especially in under‑represented varieties.

In summary, Arab Voices provides three essential infrastructures for DA speech research: (1) a reproducible pipeline that unifies heterogeneous corpora into a common format with harmonized dialect and domain metadata; (2) automated, scalable characterization of linguistic “dialectness” and acoustic quality; and (3) a standardized, multi‑dialect ASR benchmark with strong baselines. These contributions enable researchers to make informed dataset selection decisions, design targeted data augmentation or transfer‑learning strategies, and fairly compare models across dialects and recording conditions. The paper also outlines future work, such as finer‑grained sub‑dialect labeling, inclusion of sociolinguistic attributes (age, gender, education), and development of models robust to low‑quality and code‑switching speech.

Comments & Academic Discussion

Loading comments...

Leave a Comment