RubricHub: A Comprehensive and Highly Discriminative Rubric Dataset via Automated Coarse-to-Fine Generation

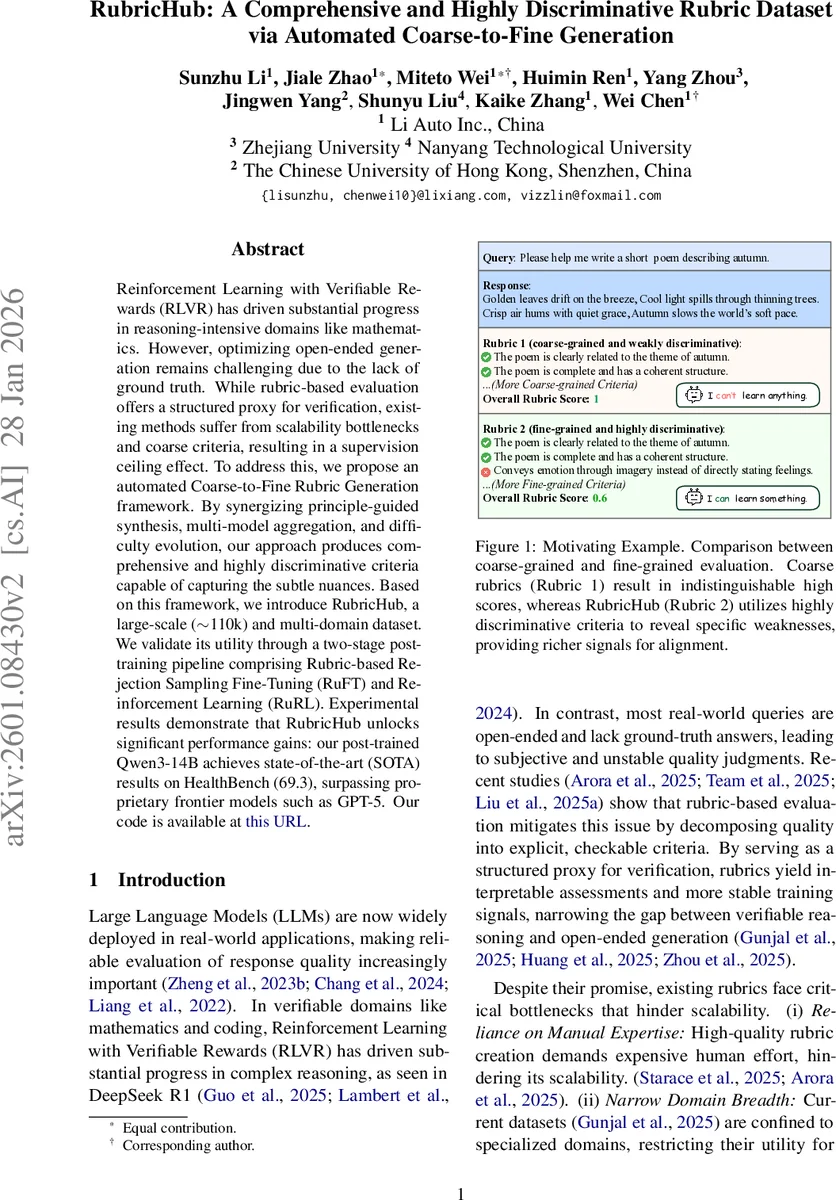

Reinforcement Learning with Verifiable Rewards (RLVR) has driven substantial progress in reasoning-intensive domains like mathematics. However, optimizing open-ended generation remains challenging due to the lack of ground truth. While rubric-based evaluation offers a structured proxy for verification, existing methods suffer from scalability bottlenecks and coarse criteria, resulting in a supervision ceiling effect. To address this, we propose an automated Coarse-to-Fine Rubric Generation framework. By synergizing principle-guided synthesis, multi-model aggregation, and difficulty evolution, our approach produces comprehensive and highly discriminative criteria capable of capturing the subtle nuances. Based on this framework, we introduce RubricHub, a large-scale ($\sim$110k) and multi-domain dataset. We validate its utility through a two-stage post-training pipeline comprising Rubric-based Rejection Sampling Fine-Tuning (RuFT) and Reinforcement Learning (RuRL). Experimental results demonstrate that RubricHub unlocks significant performance gains: our post-trained Qwen3-14B achieves state-of-the-art (SOTA) results on HealthBench (69.3), surpassing proprietary frontier models such as GPT-5. Our code is available at \href{https://github.com/teqkilla/RubricHub}{ this URL}.

💡 Research Summary

**

The paper introduces RubricHub, a large‑scale, highly discriminative rubric dataset created through an automated coarse‑to‑fine generation pipeline, and demonstrates its effectiveness for improving large language model (LLM) alignment.

Motivation. Reinforcement Learning with Verifiable Rewards (RLVR) has driven progress in domains where ground‑truth answers exist (e.g., mathematics, coding). However, most real‑world queries are open‑ended, lacking a definitive answer, which makes reward design difficult. Prior work has employed rubric‑based evaluation to decompose quality into explicit, checkable criteria, but suffers from three major bottlenecks: (1) heavy reliance on manual expert creation, limiting scalability; (2) narrow domain coverage, restricting usefulness for general‑purpose LLMs; and (3) coarse, low‑discriminability criteria that create a supervision ceiling.

Proposed Solution. The authors propose an automated Coarse‑to‑Fine Rubric Generation framework consisting of three stages:

-

Principle‑Guided & Response‑Grounded Generation. For each query q, a reference answer oᵢ is generated. A meta‑principle set P_meta (Consistency & Alignment, Structure & Scope, Clarity & Quality, Reasoning & Evaluability) is embedded in a generation prompt P_gen. The model M then synthesizes a candidate rubric Rᵢ^cand = M(P_gen(q, oᵢ, P_meta)). Grounding the rubric on an actual answer prevents “rubric drift” toward generic or hallucinated criteria.

-

Multi‑Model Aggregation. Candidate rubrics are produced in parallel by heterogeneous frontier models (e.g., GPT‑5.1, Gemini 3 Pro). All candidates are pooled (R_cand) and distilled via an aggregation prompt P_agg into a compact base rubric R_base = M(P_agg(q, R_cand)). This step mitigates single‑model bias and yields a more comprehensive, objective standard.

-

Difficulty Evolution. To overcome the ceiling effect, the authors select two high‑quality reference answers (A_ref) and apply an augmentation prompt P_aug to extract fine‑grained nuances that differentiate “excellent” from “exceptional”. The resulting additional criteria R_add are merged with R_base to produce the final rubric R_final = R_base ∪ R_add. Example upgrades include turning a generic “code is correct?” check into “does the code handle edge cases with O(n) complexity?”.

Dataset Construction. Applying the pipeline to ~110 k queries across five domains (Science, Medical, Writing, Chat, Instruction‑Following) yields RubricHub, a multi‑domain rubric dataset. Each query is paired with a weighted set of criteria (average 25–32 criteria per domain). The dataset is publicly released.

Post‑Training Pipeline. RubricHub is leveraged in two complementary stages:

-

Rubric‑based Rejection Sampling Fine‑Tuning (RuFT). Rubric scores are used as a filter; only responses exceeding a threshold are retained as high‑quality training data for supervised fine‑tuning.

-

Rubric‑based Reinforcement Learning (RuRL). The final rubric provides a structured reward signal r(q, o) for policy optimization (e.g., PPO). The reward aggregates weighted criterion scores, delivering dense, interpretable feedback.

Experiments. The authors fine‑tune Qwen‑3‑14B‑Base using RuFT followed by RuRL. On HealthBench, the post‑trained model achieves 69.3 points, a 22.6‑point gain over the official Instruct variant and surpassing the proprietary GPT‑5 (67.2 points) despite being smaller. Additional analyses show that RubricHub’s fine‑grained criteria produce a broader score distribution, enabling clearer differentiation among top‑performing models compared to coarse baselines.

Critical Assessment. The work’s novelty lies in the systematic automation of rubric creation, especially the difficulty‑evolution step that explicitly addresses the supervision ceiling. The multi‑model aggregation strategy is a pragmatic way to reduce single‑source bias, though it depends on access to multiple frontier models, some of which are proprietary, raising reproducibility concerns. The paper provides limited human evaluation of rubric alignment with user satisfaction, leaving open the question of whether optimizing for rubric scores fully captures real‑world quality. Moreover, the meta‑principles and prompts are English‑centric; cross‑lingual generalization may require additional adaptation. Ethical considerations include the risk that models over‑fit to the rubric, potentially suppressing creative or unconventional outputs.

Conclusion and Outlook. RubricHub demonstrates that automatically generated, high‑discriminability rubrics can serve as powerful supervision signals for LLM alignment, bridging the gap between verifiable reward domains and open‑ended generation tasks. Future work should explore multilingual rubric generation, deeper human‑in‑the‑loop validation, and robust safeguards to prevent over‑optimization toward narrow rubric criteria. With these extensions, RubricHub could become a cornerstone resource for trustworthy, high‑performing LLMs.

Comments & Academic Discussion

Loading comments...

Leave a Comment