Concentrated Monte Carlo sampling for local observables in quantum spin chains

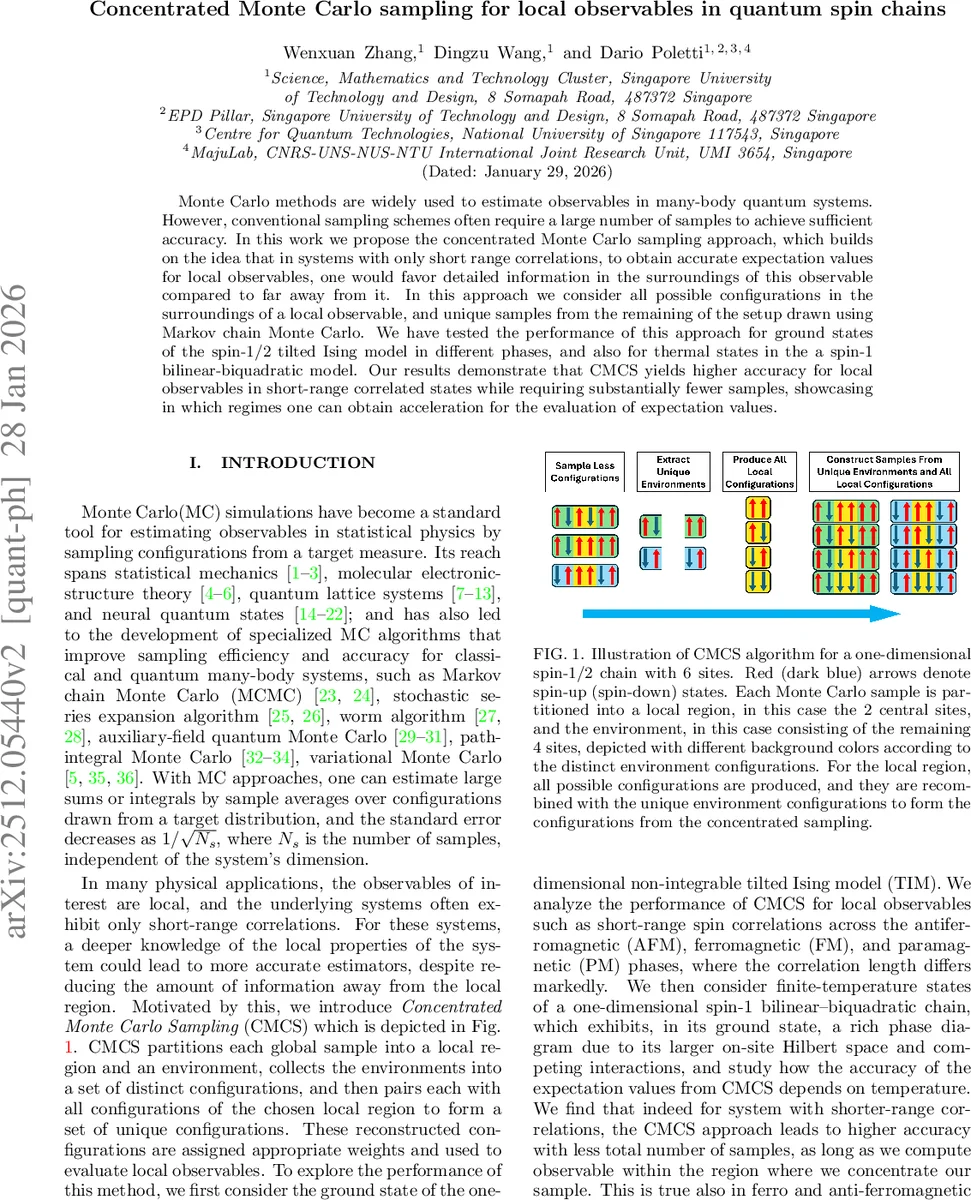

Monte Carlo methods are widely used to estimate observables in many-body quantum systems. However, conventional sampling schemes often require a large number of samples to achieve sufficient accuracy. In this work we propose the concentrated Monte Carlo sampling (CMCS) approach, which builds on the idea that, in systems with only short-range correlations, an accurate expectation value of a local observable depends more on detailed information about its surroundings than on information about distant regions. In this approach we consider all possible configurations in the surroundings of a local observable, combined with unique samples of the remainder of the system drawn using Markov chain Monte Carlo. We have tested the performance of this approach for ground states of the spin-1/2 tilted Ising model in different phases, and also for thermal states of a spin-1 bilinear-biquadratic model. Our results demonstrate that CMCS yields higher accuracy for local observables in short-range correlated states while requiring substantially fewer samples, and they show in which regimes the evaluation of expectation values can be accelerated.

💡 Research Summary

The paper introduces a Monte Carlo technique called Concentrated Monte Carlo Sampling (CMCS), designed to improve the efficiency of estimating local observables in quantum spin chains, especially when the system exhibits only short‑range correlations. Traditional Markov‑chain Monte Carlo (MCMC) draws configurations from the full probability distribution defined by the many‑body wavefunction, and the statistical error of a local observable scales as 1/√Nₛ in the number of samples Nₛ, regardless of how many degrees of freedom are actually relevant. The authors argue that, for short‑range correlated states, most of the information needed to evaluate a local observable is contained in a small “local region” surrounding the observable, while the rest of the chain (the “environment”) can be sampled more coarsely.

CMCS proceeds as follows. First, a conventional MCMC run generates a set S of Nₛ global configurations sampled from the target distribution P(x). Each configuration x is split into a local part x_loc (ℓ contiguous sites that include the observable) and an environment part x_env (the remaining L−ℓ sites, where L is the chain length). All possible local configurations {u_i^loc} are enumerated; for a spin‑½ chain this yields Nℓ = 2^ℓ possibilities, and for a spin‑1 chain Nℓ = 3^ℓ. From the MCMC data the distinct environment configurations {u_j^env} are extracted, giving Nₑ unique environments. The Cartesian product of the two sets produces a set U of Nᵤ = Nℓ·Nₑ “re‑combined” global configurations. Their original probabilities P(u) are renormalized within this reduced ensemble to obtain the weights P̃(u) = P(u) / Σ_{y∈U} P(y). The expectation value of any local observable O_loc is then estimated as ⟨O_loc⟩ ≈ Σ_{u∈U} P̃(u) O_loc(u).
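The recombination steps described above can be sketched in a few lines of Python. This is only an illustrative sketch, not the authors' implementation: the names `cmcs_estimate`, `prob`, and `product_prob` are hypothetical, the observable is assumed to be diagonal in the sampling basis (so it is a plain function of the configuration), and `prob` may return an unnormalized P(u) because the weights are renormalized over U anyway.

```python
import itertools


def cmcs_estimate(samples, prob, local_sites, observable, local_dim=2):
    """Estimate <O_loc> by recombining all local configurations with the
    unique environments found in `samples` (hypothetical helper).

    samples     : list of tuples of ints, the MCMC configurations
    prob        : callable u -> P(u), possibly unnormalized
    local_sites : indices of the ell sites forming the local region
    observable  : callable u -> O_loc(u), assumed diagonal in this basis
    local_dim   : 2 for spin-1/2, 3 for spin-1
    """
    local_sites = list(local_sites)
    n_sites = len(samples[0])
    env_sites = [i for i in range(n_sites) if i not in local_sites]

    # Distinct environment configurations seen in the MCMC data (N_e of them).
    unique_envs = {tuple(x[i] for i in env_sites) for x in samples}

    # All local_dim**ell local configurations (N_ell of them).
    local_configs = list(
        itertools.product(range(local_dim), repeat=len(local_sites))
    )

    # Recombine, weight by P(u), and renormalize over the reduced set U.
    numerator = 0.0
    normalization = 0.0
    for env in unique_envs:
        for loc in local_configs:
            u = [0] * n_sites
            for site, value in zip(env_sites, env):
                u[site] = value
            for site, value in zip(local_sites, loc):
                u[site] = value
            u = tuple(u)
            weight = prob(u)
            numerator += weight * observable(u)
            normalization += weight
    return numerator / normalization


def product_prob(x, p_up=0.3):
    """Toy uncorrelated distribution: each site is 1 with probability p_up."""
    result = 1.0
    for value in x:
        result *= p_up if value == 1 else 1.0 - p_up
    return result


# Three "MCMC" samples on 4 sites; two of them share the same environment.
samples = [(0, 0, 1, 0), (1, 0, 0, 0), (0, 0, 1, 0)]
estimate = cmcs_estimate(
    samples, product_prob, local_sites=[0], observable=lambda u: u[0]
)
```

For the uncorrelated toy distribution used here, the local average does not depend on the environment, so the estimate matches the single-site probability `p_up` up to floating-point rounding even with only two unique environments. Correlated distributions would generally need more environments.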

The key advantage is twofold. (i) By exhaustively covering the local subspace, CMCS eliminates statistical fluctuations associated with the observable’s own degrees of freedom. (ii) By collapsing the environment to a set of unique samples, the effective number of configurations needed to achieve a given accuracy can be dramatically reduced compared with plain MCMC. In the limit of large Nₛ and Nₑ the renormalized distribution converges to the true distribution, so the estimator becomes unbiased in that limit.
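One way to see point (i) is to regroup the renormalized sum by environment. The sketch below assumes that O_loc is diagonal in the sampling basis and depends only on the local part of the configuration. The symbols $w_j$ and $P_{\mathrm{env}}$ are introduced here for illustration and do not appear in the summary.

$$
\langle O_{\mathrm{loc}}\rangle_{\mathrm{CMCS}}
= \frac{\sum_{j=1}^{N_e}\sum_{i=1}^{N_\ell} P(u_i^{\mathrm{loc}},u_j^{\mathrm{env}})\,O_{\mathrm{loc}}(u_i^{\mathrm{loc}})}
       {\sum_{j=1}^{N_e}\sum_{i=1}^{N_\ell} P(u_i^{\mathrm{loc}},u_j^{\mathrm{env}})}
= \sum_{j=1}^{N_e} w_j\,\mathbb{E}\!\left[O_{\mathrm{loc}} \mid u_j^{\mathrm{env}}\right],
\qquad
w_j = \frac{P_{\mathrm{env}}(u_j^{\mathrm{env}})}{\sum_{k=1}^{N_e} P_{\mathrm{env}}(u_k^{\mathrm{env}})},
$$

$$
P_{\mathrm{env}}(u_j^{\mathrm{env}}) = \sum_{i=1}^{N_\ell} P(u_i^{\mathrm{loc}},u_j^{\mathrm{env}}),
\qquad
\mathbb{E}\!\left[O_{\mathrm{loc}} \mid u_j^{\mathrm{env}}\right]
= \frac{\sum_{i=1}^{N_\ell} P(u_i^{\mathrm{loc}},u_j^{\mathrm{env}})\,O_{\mathrm{loc}}(u_i^{\mathrm{loc}})}{P_{\mathrm{env}}(u_j^{\mathrm{env}})}.
$$

Under these assumptions each conditional average is evaluated exactly, so the only remaining sampling error comes from which environments happen to appear in the MCMC data.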

The authors benchmark CMCS on two paradigmatic one‑dimensional models. The first is the tilted Ising model (TIM) with Hamiltonian H = J Σ_i σ_i^z σ_{i+1}^z − Σ_i (h_x σ_i^x + h_z σ_i^z). By varying the transverse field h_x and the longitudinal field h_z, they explore four regimes: paramagnetic (PM), antiferromagnetic (AFM), ferromagnetic (FM), and a critical region where the correlation length diverges. For a 20‑site chain they compute single‑site magnetizations ⟨σ_i^z⟩ and nearest‑neighbor correlators ⟨σ_i^z σ_{i+1}^z⟩, using matrix‑product‑state (MPS) calculations as the exact reference.

In the PM, AFM, and FM phases, CMCS with a modest local region (ℓ = 2–4) achieves mean absolute errors that are one to two orders of magnitude smaller than conventional MCMC with Nₛ = 10⁴ samples, even when CMCS uses only a few hundred effective configurations (Nᵤ). The improvement is especially striking in the PM phase, where the probability distribution in the σ_z basis is nearly uniform; MCMC’s 1/√Nₛ noise overwhelms the tiny expectation values, whereas CMCS’s uniform environment weights effectively increase the sample size for the observable. In the ordered phases, the distribution concentrates on a few configurations, leading to long autocorrelation times for local‑update MCMC; CMCS, by enumerating all local possibilities, sidesteps this bottleneck. In the critical region, however, the correlation length becomes comparable to the system size, making the observable sensitive to distant sites. Here CMCS’s limited environment sampling cannot capture the necessary long‑range information, and its performance degrades to that of standard MCMC.
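For very small chains, the tilted Ising Hamiltonian quoted above can be built as a dense matrix and diagonalized exactly, which gives the σ_z‑basis probabilities P(x) that MCMC or CMCS would sample. The following is a minimal sketch under stated assumptions, not the paper's method: the paper uses MPS for the 20‑site chain, open boundary conditions are assumed here because the summary does not specify them, and the function names are hypothetical.

```python
import numpy as np

# Pauli matrices and the single-site identity.
SX = np.array([[0.0, 1.0], [1.0, 0.0]])
SZ = np.array([[1.0, 0.0], [0.0, -1.0]])
ID = np.eye(2)


def site_operator(op, site, n_sites):
    """Embed a single-site operator at position `site` of an n_sites chain."""
    result = np.array([[1.0]])
    for i in range(n_sites):
        result = np.kron(result, op if i == site else ID)
    return result


def tilted_ising_hamiltonian(n_sites, J, h_x, h_z):
    """H = J sum_i sz_i sz_{i+1} - sum_i (h_x sx_i + h_z sz_i).

    Open boundaries are an assumption of this sketch.
    """
    dim = 2 ** n_sites
    H = np.zeros((dim, dim))
    for i in range(n_sites - 1):
        H += J * site_operator(SZ, i, n_sites) @ site_operator(SZ, i + 1, n_sites)
    for i in range(n_sites):
        H -= h_x * site_operator(SX, i, n_sites)
        H -= h_z * site_operator(SZ, i, n_sites)
    return H


def ground_state_probabilities(H):
    """Return the ground energy and |psi_0(x)|^2 in the sigma_z basis."""
    energies, vectors = np.linalg.eigh(H)  # eigenvalues in ascending order
    psi0 = vectors[:, 0]
    return energies[0], np.abs(psi0) ** 2


# Pure transverse field on 2 sites: the ground state is |+>|+>, whose
# sigma_z-basis distribution is exactly uniform.
energy, probs = ground_state_probabilities(
    tilted_ising_hamiltonian(n_sites=2, J=0.0, h_x=1.0, h_z=0.0)
)
```

The dense matrix has dimension 2^L, so this construction is only practical for a handful of sites. It is meant as a way to check a sampler on toy instances, for example to see the nearly uniform σ_z‑basis distribution in the field‑dominated regime.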

The second testbed is a spin‑1 bilinear‑biquadratic chain with Hamiltonian H = Σ
