Hierarchical Transformers for Unsupervised 3D Shape Abstraction

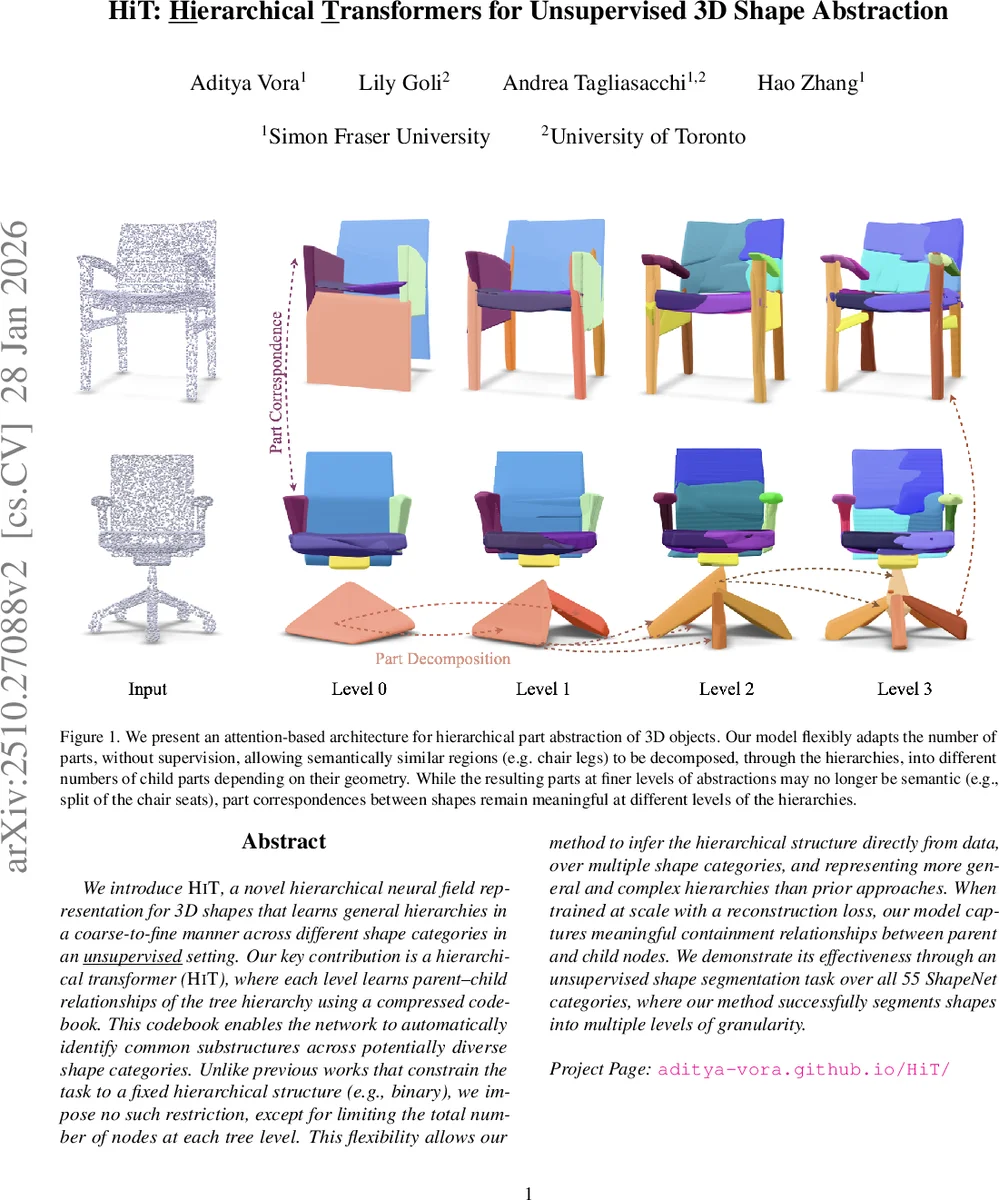

We introduce HiT, a novel hierarchical neural field representation for 3D shapes that learns general hierarchies in a coarse-to-fine manner across different shape categories in an unsupervised setting. Our key contribution is a hierarchical transformer (HiT), where each level learns parent-child relationships of the tree hierarchy using a compressed codebook. This codebook enables the network to automatically identify common substructures across potentially diverse shape categories. Unlike previous works that constrain the task to a fixed hierarchical structure (e.g., binary), we impose no such restriction, except for limiting the total number of nodes at each tree level. This flexibility allows our method to infer the hierarchical structure directly from data, over multiple shape categories, and representing more general and complex hierarchies than prior approaches. When trained at scale with a reconstruction loss, our model captures meaningful containment relationships between parent and child nodes. We demonstrate its effectiveness through an unsupervised shape segmentation task over all 55 ShapeNet categories, where our method successfully segments shapes into multiple levels of granularity.

💡 Research Summary

The paper introduces HiT (Hierarchical Transformer), a novel unsupervised framework that learns multi‑level part hierarchies for 3D shapes directly from raw point clouds. Unlike prior work that either relies on supervised part annotations or imposes a fixed binary tree structure, HiT discovers the tree topology itself, only constraining the total number of nodes per level. The architecture consists of a ConvOccNet‑based point‑cloud encoder that produces a voxel‑grid feature Z⁽⁰⁾, followed by L decoder layers. Each decoder layer ℓ contains a learnable codebook C⁽ℓ⁾ of Nℓ part codes. These codes act as queries in a standard transformer attention mechanism, attending to the feature map from the previous layer (Z⁽ℓ⁻¹⁾). The resulting attention matrix A⁽ℓ⁾ defines soft parent‑child assignments; a straight‑through estimator turns these soft assignments into hard one‑hot selections during the forward pass while preserving gradients.

Geometrically, every part is grounded as a 3D convex primitive, following the CvxNet formulation. A small MLP G_ϕ maps each part feature to H half‑space parameters (normals, offsets, blending weights) together with a rigid transform (Euler angles, translation, uniform scale). The occupancy of a part s at a query point x is ˜Oₛ(x)=σ(−Φₛ(x)), where Φₛ aggregates the half‑spaces in log‑sum‑exp form; σ is set to 75 to obtain a sharp sigmoid. Spatial containment is enforced by multiplying the child occupancy with its parent’s occupancy: ˆOₛ(x)=ˆOₚ(x)·˜Oₛ(x). This guarantees that a child contributes only inside the region occupied by its parent.

Training combines four loss terms: (1) L_recon – per‑level reconstruction of the ground‑truth occupancy field using the union of part occupancies; (2) L_contain – penalizes occupancy of a child outside its parent; (3) L_cvxnet – adopts the convex‑primitive regularizers from CvxNet to discourage redundant overlapping primitives; (4) L_balance – encourages the number of active parts at each level to stay close to the predefined Nℓ, yielding a balanced tree. The total loss is a weighted sum L = L_recon + λ₁L_contain + λ₂L_cvxnet + λ₃L_balance.

Experiments are conducted on all 55 categories of ShapeNet. Quantitatively, HiT outperforms state‑of‑the‑art unsupervised baselines (AE‑Net, DAE‑Net, RIMNet, etc.) in part segmentation IoU, often by a large margin. Qualitatively, the method produces three‑level hierarchies where the coarsest level captures semantically meaningful components (e.g., chair seat, back, legs), the intermediate level refines these into sub‑components, and the finest level splits further into geometric fragments. Importantly, because the tree is not fixed, categories with variable part counts (e.g., chairs with different numbers of legs) are handled gracefully: the model dynamically allocates more nodes where needed, while baselines with a binary tree cannot adapt.

Key contributions are: (i) a codebook‑based information bottleneck that learns reusable part embeddings across diverse categories; (ii) cross‑layer attention that yields differentiable, data‑driven parent‑child relationships; (iii) explicit spatial containment via occupancy multiplication, ensuring geometric consistency; (iv) a fully unsupervised pipeline that requires only a reconstruction objective and scales to large, heterogeneous shape collections.

The authors argue that such a representation is directly useful for downstream tasks: part‑aware editing (changing or transferring sub‑parts), robotic manipulation planning (reasoning from coarse to fine grasp points), and compositional shape generation (recombining learned primitives). By removing the need for manual annotations or predefined hierarchies, HiT opens a path toward more human‑like perception of 3D objects in both graphics and robotics.

Comments & Academic Discussion

Loading comments...

Leave a Comment