What Matters in LLM-Based Feature Extractor for Recommender? A Systematic Analysis of Prompts, Models, and Adaptation

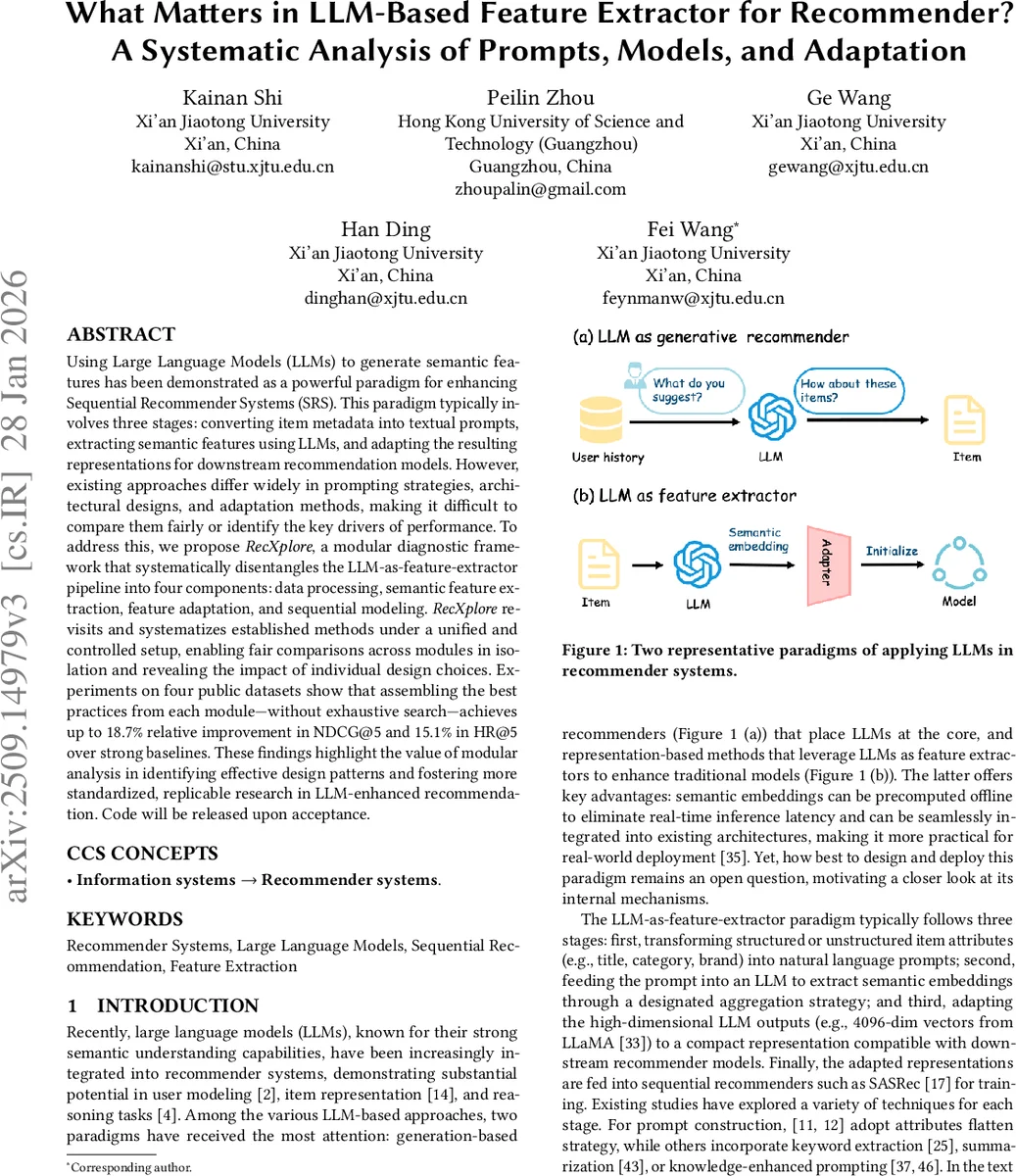

Using Large Language Models (LLMs) to generate semantic features has been demonstrated as a powerful paradigm for enhancing Sequential Recommender Systems (SRS). This typically involves three stages: processing item text, extracting features with LLMs, and adapting them for downstream models. However, existing methods vary widely in prompting, architecture, and adaptation strategies, making it difficult to fairly compare design choices and identify what truly drives performance. In this work, we propose RecXplore, a modular analytical framework that decomposes the LLM-as-feature-extractor pipeline into four modules: data processing, semantic feature extraction, feature adaptation, and sequential modeling. Instead of proposing new techniques, RecXplore revisits and organizes established methods, enabling systematic exploration of each module in isolation. Experiments on four public datasets show that simply combining the best designs from existing techniques without exhaustive search yields up to 18.7% relative improvement in NDCG@5 and 12.7% in HR@5 over strong baselines. These results underscore the utility of modular benchmarking for identifying effective design patterns and promoting standardized research in LLM-enhanced recommendation.

💡 Research Summary

This paper addresses the growing interest in using large language models (LLMs) as feature extractors for sequential recommender systems (SRS). While many recent works have demonstrated that LLM‑derived semantic embeddings can improve recommendation performance, the literature suffers from fragmented experimental setups: different studies adopt disparate prompting techniques, LLM fine‑tuning strategies, dimensionality‑reduction methods, and ways of integrating ID embeddings, making it difficult to isolate the true drivers of performance gains.

To remedy this, the authors propose RecXplore, a modular diagnostic framework that decomposes the LLM‑as‑feature‑extractor pipeline into four independent components:

- Data Processing – conversion of raw item attributes (title, brand, category, etc.) into natural‑language prompts.

- Feature Extraction – encoding of prompts with a single LLM backbone (LLaMA‑2‑7B in the main experiments), either used as‑is or fine‑tuned first.

- Feature Adaptation – reduction of the high‑dimensional LLM output to a size suitable for downstream recommenders, possibly combined with ID‑based embeddings.

- Sequential Modeling – the final recommendation model (GRU4Rec, BERT4Rec, or SASRec) that consumes the adapted item vectors.
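As an illustration of the Data Processing module, the following is a minimal Python sketch of attribute flattening, i.e. concatenating item fields into one fixed-template prompt. The function name `flatten_attributes`, the `Field: value` template, the separator, and the sample item are all assumptions made for this example; the summary does not specify the exact template the authors use.

```python
def flatten_attributes(item: dict, fields=("title", "brand", "category")) -> str:
    """Concatenate the non-empty attribute fields into one fixed-template prompt.

    Hypothetical helper: the template "Field: value; Field: value" is an
    assumption for illustration, not the paper's exact wording.
    """
    parts = []
    for field in fields:
        value = item.get(field)
        if not value:
            continue  # skip missing or empty attributes
        if isinstance(value, (list, tuple)):
            # Multi-valued attributes (e.g. a category path) become a comma list.
            value = ", ".join(str(v) for v in value)
        parts.append(f"{field.capitalize()}: {value}")
    return "; ".join(parts)


# Made-up item used only to show the output shape.
item = {
    "title": "Trail Running Shoes",
    "brand": "Acme",
    "category": ["Sports", "Footwear"],
}
prompt = flatten_attributes(item)
print(prompt)  # Title: Trail Running Shoes; Brand: Acme; Category: Sports, Footwear
```

The resulting string is what would be passed to the LLM in the Feature Extraction module. The template involves no LLM call and is deterministic, which is what distinguishes flattening from the LLM-augmented prompt variants.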

Within each module the authors enumerate representative design choices and evaluate them under a unified experimental protocol on four public datasets (e.g., Amazon, MovieLens).

Key findings:

- Prompt design – Simple attribute flattening (concatenating fields with a fixed template) consistently outperforms more sophisticated LLM‑augmented prompts such as keyword extraction, summarization, or knowledge expansion. Excessive prompt engineering introduces noise and harms downstream performance.

- LLM fine‑tuning – A two‑stage adaptation consisting of Continued Pre‑Training (CPT) on unlabeled item texts followed by Supervised Fine‑Tuning (SFT) on a QA‑style task yields the most transferable item embeddings. CPT alone improves domain alignment, while SFT injects task‑specific semantics; their combination (CPT + SFT) outperforms either alone and also beats contrastive fine‑tuning (SCFT).

- Token aggregation – Mean pooling of token hidden states is the most stable and effective method for collapsing a sequence of token vectors into a single item representation. Max pooling, last‑token pooling, and prompting with an Explicit One‑word Limitation (EOL) are less reliable.

- Feature adaptation – A multi‑step dimensionality‑reduction (MDR) pipeline that first applies Principal Component Analysis (PCA) and then a learnable adapter outperforms direct dimensionality reduction (DDR). Among adapters, a Mixture‑of‑Experts (MoE) network combined with PCA delivers the highest HR@5 and NDCG@5, balancing expressiveness and parameter efficiency.

- ID‑embedding fusion – When semantic embeddings are sufficiently expressive, simply replacing traditional ID embeddings yields the best results. Concatenation or alignment (contrastive loss) adds unnecessary dimensionality and can destabilize training.

- Sequential model choice – Transformer‑based SASRec consistently benefits most from the LLM‑derived features, outperforming GRU4Rec and BERT4Rec across all datasets.
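The token aggregation and feature adaptation findings above can be made concrete with a small pure-Python sketch. It shows the three pooling strategies over masked token vectors, followed by a densely gated Mixture-of-Experts adapter. Everything here is an assumption for illustration: the function names, the toy 2-dimensional vectors, the hand-set weights, and the soft (dense) gating are not taken from the paper, and the PCA step that precedes the adapter in the MDR pipeline is assumed to have been applied upstream.

```python
import math


def _kept(hidden, mask):
    """Token vectors whose mask entry is 1 (padding positions are dropped)."""
    return [vec for vec, m in zip(hidden, mask) if m]


def mean_pool(hidden, mask):
    kept = _kept(hidden, mask)
    return [sum(vec[d] for vec in kept) / len(kept) for d in range(len(kept[0]))]


def max_pool(hidden, mask):
    kept = _kept(hidden, mask)
    return [max(vec[d] for vec in kept) for d in range(len(kept[0]))]


def last_token(hidden, mask):
    last = max(i for i, m in enumerate(mask) if m)  # last non-padding position
    return list(hidden[last])


def linear(x, W, b):
    """y = W x + b, with W stored as a list of rows."""
    return [sum(w * xi for w, xi in zip(row, x)) + bi for row, bi in zip(W, b)]


def softmax(z):
    m = max(z)
    exps = [math.exp(v - m) for v in z]
    total = sum(exps)
    return [e / total for e in exps]


def moe_adapter(x, experts, gate_W, gate_b):
    """Dense MoE: softmax gate weights mix the outputs of linear experts."""
    gates = softmax(linear(x, gate_W, gate_b))
    outs = [linear(x, W, b) for W, b in experts]
    return [sum(g * out[d] for g, out in zip(gates, outs)) for d in range(len(outs[0]))]


# Toy input: three token vectors, the last one is padding.
hidden = [[1.0, 4.0], [3.0, 0.0], [9.0, 9.0]]
mask = [1, 1, 0]
mean_vec = mean_pool(hidden, mask)    # [2.0, 2.0]
max_vec = max_pool(hidden, mask)      # [3.0, 4.0]
last_vec = last_token(hidden, mask)   # [3.0, 0.0]

# Two hand-set experts mapping 2 dimensions to 1; a zero gate gives equal weights.
experts = [([[1.0, 0.0]], [0.0]), ([[0.0, 1.0]], [1.0])]
adapted = moe_adapter(mean_vec, experts, gate_W=[[0.0, 0.0], [0.0, 0.0]], gate_b=[0.0, 0.0])
print(mean_vec, max_vec, last_vec, adapted)
```

In a real pipeline the adapter weights would be trained jointly with the sequential model, and the adapted vector would replace the ID embedding of the item.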

By assembling the best‑performing option from each module (flattened prompts, CPT + SFT, mean pooling, PCA + MoE, replacement of ID embeddings, and SASRec), the authors achieve up to 18.7% relative improvement in NDCG@5 and 12.7% in HR@5 over strong baselines, without introducing additional architectural complexity or exhaustive hyper‑parameter search.
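For readers unfamiliar with the reported metrics, the sketch below shows how HR@5, NDCG@5, and a relative improvement are commonly computed in next-item recommendation. It assumes the usual setting of a single ground-truth item per test sequence, in which case the ideal DCG is 1. The function names and all numbers are illustrative assumptions, not values or code from the paper.

```python
import math


def hit_rate_at_k(ranked, target, k=5):
    """1.0 if the ground-truth item appears in the top-k list, else 0.0."""
    return 1.0 if target in ranked[:k] else 0.0


def ndcg_at_k(ranked, target, k=5):
    """NDCG@k with one relevant item: 1 / log2(rank + 1) for a 1-based rank."""
    top_k = ranked[:k]
    if target not in top_k:
        return 0.0
    position = top_k.index(target)  # 0-based position in the top-k list
    return 1.0 / math.log2(position + 2)


def relative_improvement(new, base):
    """Relative gain of `new` over `base`, in percent."""
    return 100.0 * (new - base) / base


# Made-up ranking: the target item 9 sits at rank 3.
ranked = [7, 3, 9, 1, 4]
target = 9
print(hit_rate_at_k(ranked, target))  # 1.0
print(ndcg_at_k(ranked, target))      # 0.5, since 1 / log2(4) = 0.5

# Made-up scores: moving from 0.10 to 0.12 is roughly a 20% relative gain.
print(relative_improvement(0.12, 0.10))
```

Dataset-level HR@5 and NDCG@5 are the averages of these per-sequence values over all test sequences.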

Contributions:

- Introduction of RecXplore, a controlled, modular framework that enables isolated evaluation of design choices in LLM‑enhanced recommendation pipelines.

- Systematic empirical study of prompting, LLM adaptation, feature compression, and ID integration across multiple datasets, providing reproducible evidence and practical guidelines.

- Demonstration that a configuration assembled from the best‑performing option of each module can surpass state‑of‑the‑art methods, highlighting the value of modular analysis over monolithic, over‑engineered designs.

The paper concludes with a call for standardized benchmarking in this emerging area and suggests future work on extending RecXplore to larger proprietary LLMs, exploring meta‑learning for automatic module selection, and evaluating scalability on industrial‑scale recommendation logs.
