OPERA: A Reinforcement Learning--Enhanced Orchestrated Planner-Executor Architecture for Reasoning-Oriented Multi-Hop Retrieval

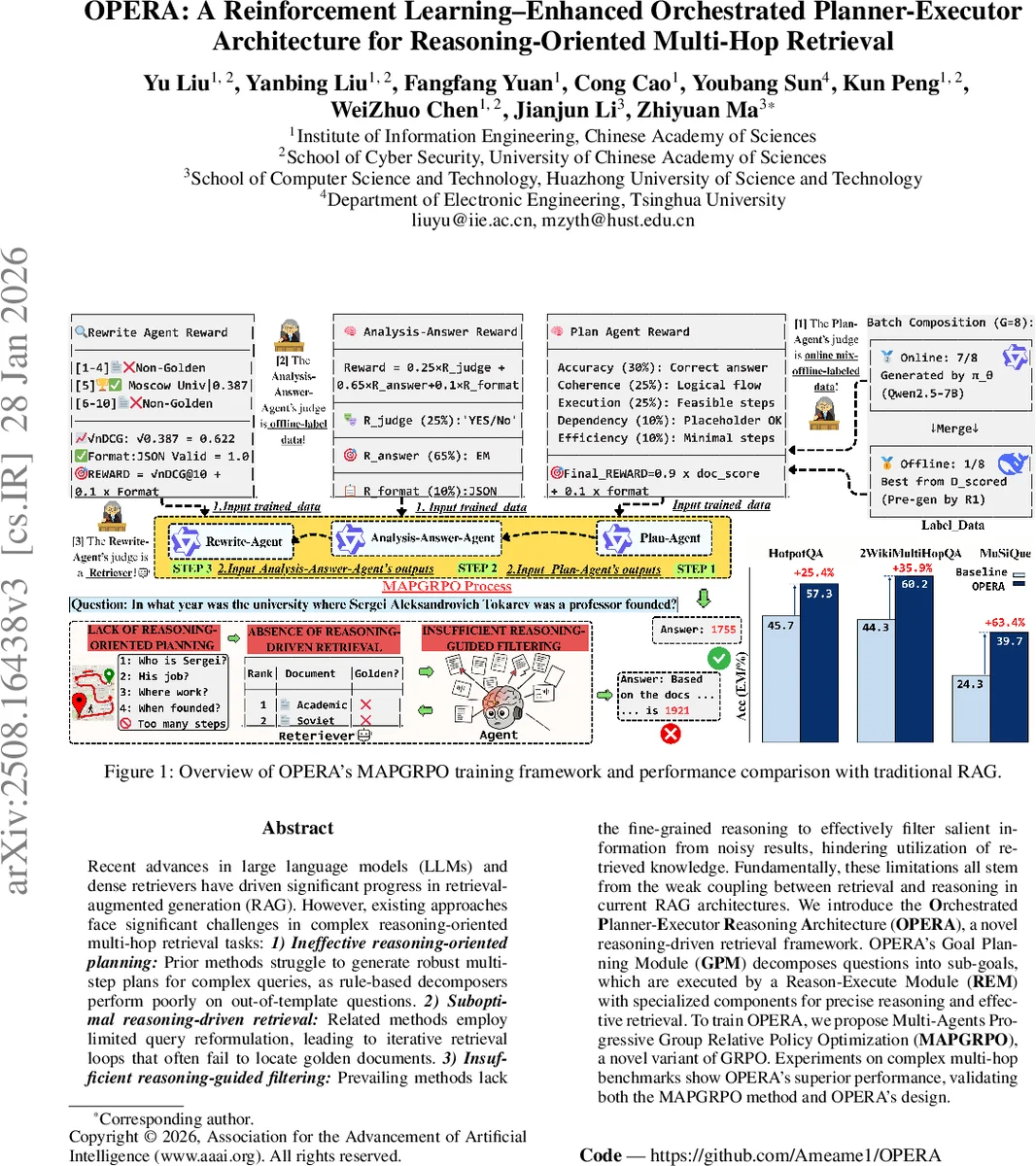

Recent advances in large language models (LLMs) and dense retrievers have driven significant progress in retrieval-augmented generation (RAG). However, existing approaches face significant challenges in complex reasoning-oriented multi-hop retrieval tasks: 1) Ineffective reasoning-oriented planning: Prior methods struggle to generate robust multi-step plans for complex queries, as rule-based decomposers perform poorly on out-of-template questions. 2) Suboptimal reasoning-driven retrieval: Related methods employ limited query reformulation, leading to iterative retrieval loops that often fail to locate golden documents. 3) Insufficient reasoning-guided filtering: Prevailing methods lack the fine-grained reasoning to effectively filter salient information from noisy results, hindering utilization of retrieved knowledge. Fundamentally, these limitations all stem from the weak coupling between retrieval and reasoning in current RAG architectures. We introduce the Orchestrated Planner-Executor Reasoning Architecture (OPERA), a novel reasoning-driven retrieval framework. OPERA’s Goal Planning Module (GPM) decomposes questions into sub-goals, which are executed by a Reason-Execute Module (REM) with specialized components for precise reasoning and effective retrieval. To train OPERA, we propose Multi-Agents Progressive Group Relative Policy Optimization (MAPGRPO), a novel variant of GRPO. Experiments on complex multi-hop benchmarks show OPERA’s superior performance, validating both the MAPGRPO method and OPERA’s design.

💡 Research Summary

The paper addresses persistent shortcomings in Retrieval‑Augmented Generation (RAG) systems when tackling complex, multi‑hop reasoning tasks. Existing approaches suffer from three main issues: (1) weak planning capabilities that cannot robustly decompose intricate queries, (2) limited reasoning‑driven retrieval that relies on simplistic query reformulation and often fails to retrieve the necessary “golden” documents, and (3) inadequate filtering of retrieved information, leaving relevant passages buried in noisy top‑K results. The authors argue that these problems stem from a loose coupling between the retrieval component and the reasoning component in current architectures.

To overcome these limitations, they propose OPERA (Orchestrated Planner‑Executor Reasoning Architecture), a hierarchical framework that explicitly separates high‑level strategic planning from low‑level tactical execution. OPERA consists of two core modules:

-

Goal Planning Module (GPM) – contains a dedicated Plan Agent that transforms a complex question into an ordered set of sub‑goals, each possibly containing placeholders that specify the type of information expected from earlier steps. The planning reward combines logical validity, structural correctness of placeholders, and end‑to‑end execution success, encouraging the generation of executable, dependency‑aware plans.

-

Reason‑Execute Module (REM) – comprises two specialized agents:

- Analysis‑Answer Agent evaluates whether the documents retrieved for a sub‑goal are sufficient (ϕ). If sufficient, it extracts the answer, assigns a confidence score, and records a “YES” decision; otherwise it emits a “NO” decision together with the missing information type. Its reward blends a binary correctness term for the sufficiency decision, an exact‑match (EM) score for the extracted answer, and a format‑quality term.

- Rewrite Agent rewrites the query when the Analysis‑Answer Agent signals insufficiency. Its reward is a weighted sum of NDCG@k (measuring retrieval effectiveness of the rewritten query) and a format‑quality metric, with a strong bias toward retrieval performance.

A Trajectory Memory Component (TMC) logs every action and its rationale, improving interpretability and facilitating debugging.

Training OPERA is achieved with a novel reinforcement‑learning algorithm called MAPGRPO (Multi‑Agents Progressive Group Relative Policy Optimization). MAPGRPO extends Group Relative Policy Optimization (GRPO) by allowing heterogeneous reward functions for each specialized agent and by optimizing agents sequentially. For each agent, candidate outputs are sampled, a high‑scoring “best” candidate is drawn from a pre‑scored dataset, and a group‑relative advantage is computed against the mean reward of the group. The KL‑divergence regularizer ensures stability against policy collapse. Crucially, each agent is trained on the distribution induced by previously trained agents, providing realistic execution contexts and solving credit‑assignment challenges inherent in multi‑agent RL.

The authors evaluate OPERA on three multi‑hop QA benchmarks: HotpotQA, 2WikiMultiHopQA, and Musique. Baselines include single‑LLM models, standard CoT, existing planner‑first RAG systems (PlanRAG, REAPER), adaptive RAG methods, and prior RL‑based approaches such as ReAct and IRCoT. OPERA’s RL version (trained with MAPGRPO) achieves substantial gains: on HotpotQA EM improves from 45.7 % (best baseline) to 57.3 % (+11.6), and F1 from 56.9 % to 69.5 % (+11.0). Similar improvements are observed on the other two datasets, with EM and F1 jumps of roughly 15–16 % over the strongest baselines. The authors also report that the TMC provides clear, human‑readable rationales for each step, enhancing transparency.

In summary, the paper makes three key contributions: (1) a reasoning‑centric, two‑stage architecture that tightly couples planning, retrieval, and answer extraction; (2) the MAPGRPO algorithm that delivers fine‑grained, role‑specific credit assignment while preserving coordination among agents; and (3) strong empirical evidence that this design outperforms state‑of‑the‑art methods on challenging multi‑hop QA tasks. The work opens avenues for extending OPERA to other domains requiring complex reasoning over large corpora, and for integrating even larger LLMs with more efficient sampling strategies.

Comments & Academic Discussion

Loading comments...

Leave a Comment