FastDINOv2: Frequency Based Curriculum Learning Improves Robustness and Training Speed

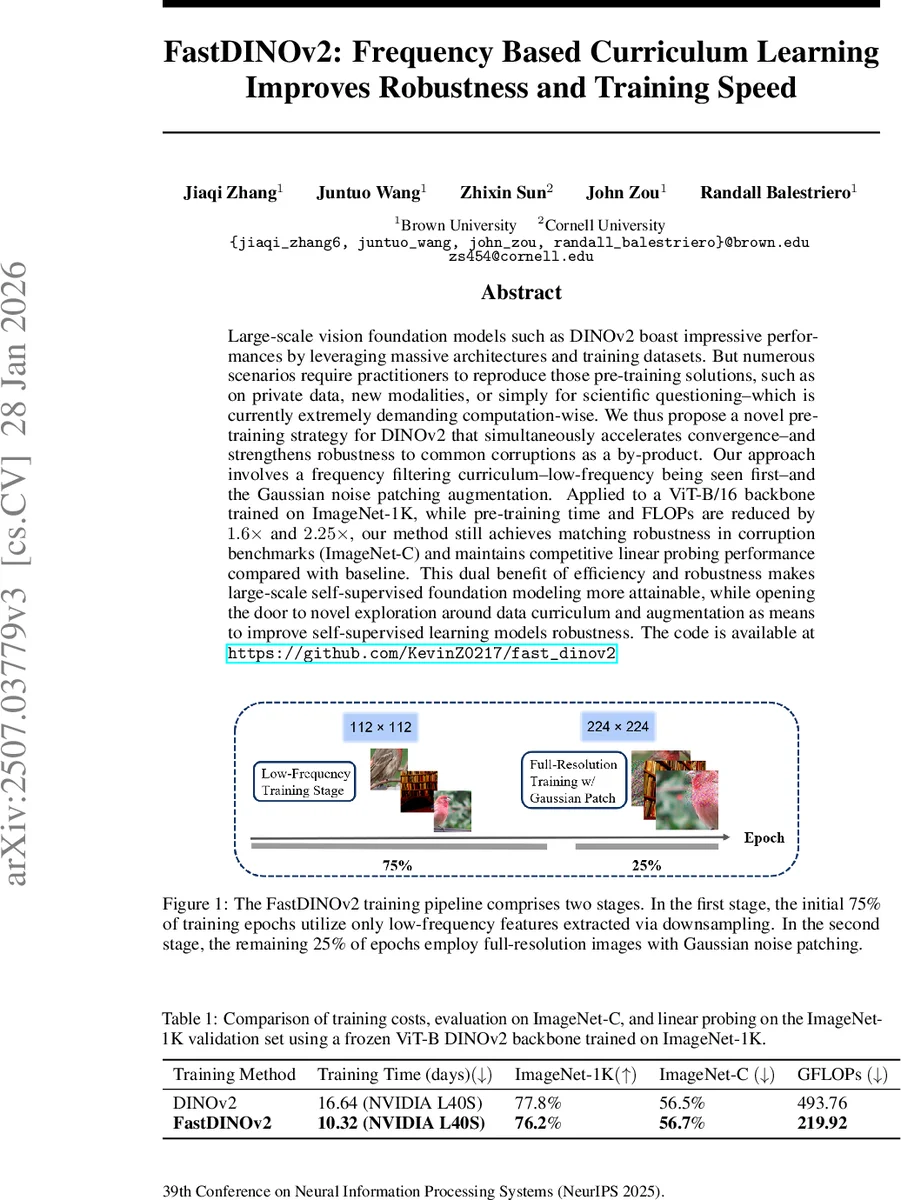

Large-scale vision foundation models such as DINOv2 boast impressive performances by leveraging massive architectures and training datasets. But numerous scenarios require practitioners to reproduce those pre-training solutions, such as on private data, new modalities, or simply for scientific questioning–which is currently extremely demanding computation-wise. We thus propose a novel pre-training strategy for DINOv2 that simultaneously accelerates convergence–and strengthens robustness to common corruptions as a by-product. Our approach involves a frequency filtering curriculum–low-frequency being seen first–and the Gaussian noise patching augmentation. Applied to a ViT-B/16 backbone trained on ImageNet-1K, while pre-training time and FLOPs are reduced by 1.6x and 2.25x, our method still achieves matching robustness in corruption benchmarks (ImageNet-C) and maintains competitive linear probing performance compared with baseline. This dual benefit of efficiency and robustness makes large-scale self-supervised foundation modeling more attainable, while opening the door to novel exploration around data curriculum and augmentation as means to improve self-supervised learning models robustness. The code is available at https://github.com/KevinZ0217/fast_dinov2

💡 Research Summary

**

The paper introduces FastDINOv2, a training scheme that makes self‑supervised vision foundation models both faster to train and more robust to common image corruptions. The authors observe that Vision Transformers (ViTs) naturally prioritize low‑frequency information during early training and that low‑frequency corruptions (e.g., brightness shifts, fog) and high‑frequency corruptions (e.g., Gaussian noise, blur) affect different parts of the Fourier spectrum. Leveraging this insight, they design a two‑stage curriculum:

-

Low‑frequency stage (first 75 % of epochs). Images are down‑sampled (224×224 → 112×112) after the standard DINOv2 cropping, effectively discarding high‑frequency details while preserving most structural information. This reduces the number of tokens per forward pass by 75 %, cutting FLOPs and accelerating convergence. During this phase the model learns coarse, global patterns quickly.

-

Full‑resolution stage (remaining 25 % of epochs). Training resumes on the original resolution images, but a new augmentation—Gaussian‑noise patching—is added. For each image a random square patch is replaced with pixel‑wise Gaussian noise (mean = 1, variance = scale²). This forces the network to become tolerant to high‑frequency perturbations and balances the low‑frequency bias introduced by the first stage.

The curriculum is applied to a ViT‑B/16 backbone pre‑trained on ImageNet‑1K using the DINOv2 self‑supervised objective. Compared with the vanilla DINOv2 baseline, FastDINOv2 reduces total training time from 16.64 days to 10.32 days on a single NVIDIA L40S GPU (≈1.6× speed‑up) and cuts FLOPs from 493.76 G to 219.92 G (≈2.25× reduction). Despite the efficiency gains, the model attains comparable linear‑probe top‑1 accuracy on ImageNet‑1K (76.2 % vs. 77.8 % for the baseline) and matches robustness on the ImageNet‑C benchmark (56.7 % mean corruption error vs. 56.5 % for the baseline).

Extensive ablations explore the impact of the first‑stage resolution. Resolutions of 112×112 provide the best trade‑off: they are low enough to yield substantial compute savings while retaining sufficient detail for the student network to learn useful representations. Smaller resolutions (64×64, 96×96) degrade downstream performance, confirming that overly aggressive low‑frequency filtering harms learning.

The authors also analyze the frequency bias introduced by the curriculum. Training only on low‑frequency data pushes the model toward high‑frequency features, which improves robustness to low‑frequency corruptions but can hurt performance on high‑frequency distortions. Gaussian‑noise patching counteracts this by injecting high‑frequency noise, resulting in a more balanced frequency response and improved overall robustness.

Related work on curriculum learning, frequency‑guided training, and augmentation‑based robustness is discussed. Prior methods such as EfficientTrain++ have applied low‑resolution curricula to other SSL frameworks but did not achieve notable speed‑ups for DINO. FastDINOv2 uniquely combines the curriculum with the DINOv2 teacher‑student paradigm and a targeted augmentation, delivering both efficiency and robustness without scaling up model size or dataset volume.

Limitations include the focus on a single backbone (ViT‑B/16) and dataset (ImageNet‑1K). The effect of the curriculum on larger ViT‑L/G models, multimodal data, or other downstream tasks (e.g., detection, segmentation) remains to be investigated. Moreover, robustness to medium‑frequency corruptions (e.g., motion blur) is not thoroughly quantified, and the optimal noise‑scale hyper‑parameter may be dataset‑dependent.

In summary, FastDINOv2 demonstrates that careful manipulation of image frequency content during pre‑training can simultaneously accelerate convergence and endow self‑supervised vision models with strong corruption robustness, offering a practical pathway for researchers with limited compute resources to train high‑quality foundation models.

Comments & Academic Discussion

Loading comments...

Leave a Comment