In-Context Bias Propagation in LLM-Based Tabular Data Generation

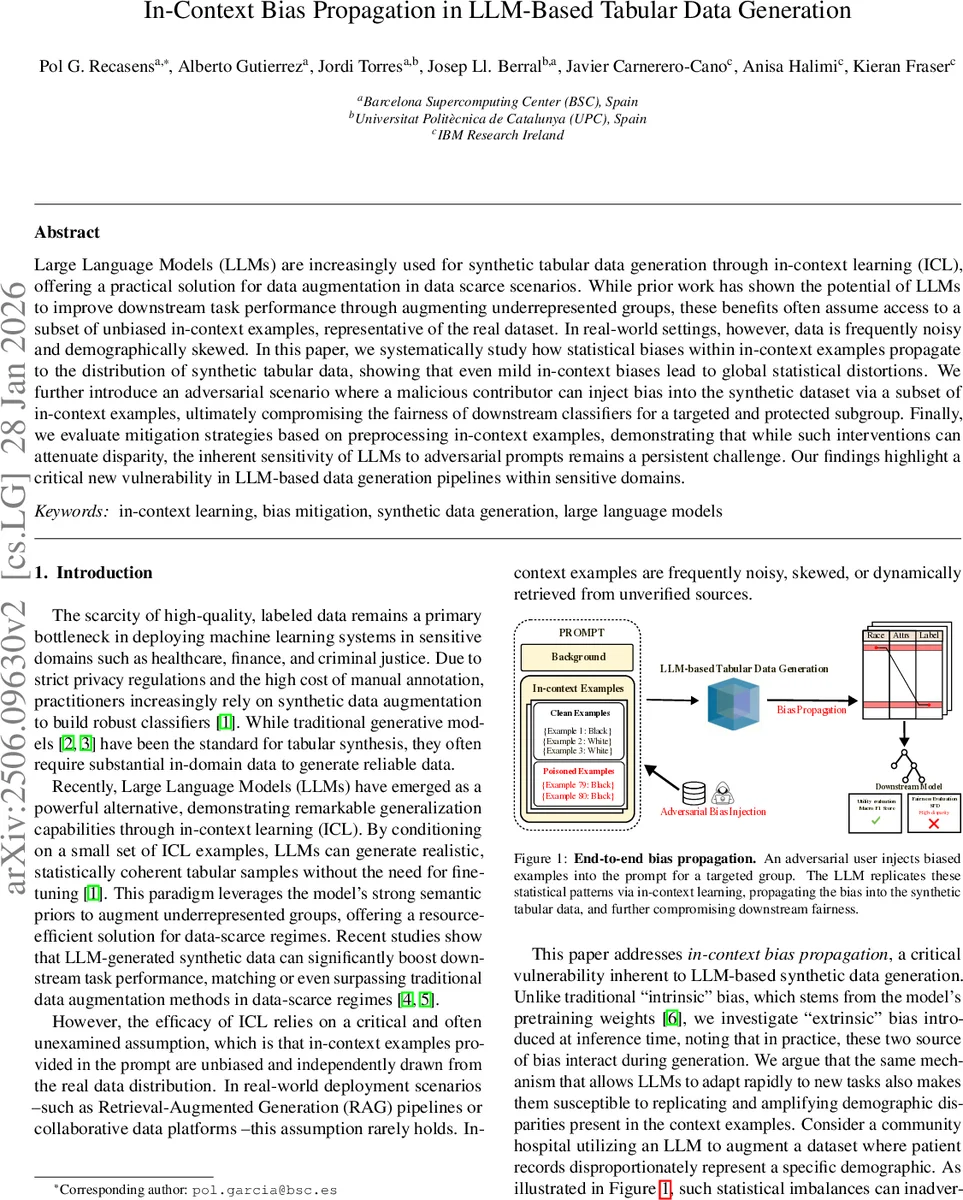

Large Language Models (LLMs) are increasingly used for synthetic tabular data generation through in-context learning (ICL), offering a practical solution for data augmentation in data scarce scenarios. While prior work has shown the potential of LLMs to improve downstream task performance through augmenting underrepresented groups, these benefits often assume access to a subset of unbiased in-context examples, representative of the real dataset. In real-world settings, however, data is frequently noisy and demographically skewed. In this paper, we systematically study how statistical biases within in-context examples propagate to the distribution of synthetic tabular data, showing that even mild in-context biases lead to global statistical distortions. We further introduce an adversarial scenario where a malicious contributor can inject bias into the synthetic dataset via a subset of in-context examples, ultimately compromising the fairness of downstream classifiers for a targeted and protected subgroup. Finally, we evaluate mitigation strategies based on preprocessing in-context examples, demonstrating that while such interventions can attenuate disparity, the inherent sensitivity of LLMs to adversarial prompts remains a persistent challenge. Our findings highlight a critical new vulnerability in LLM-based data generation pipelines within sensitive domains.

💡 Research Summary

This paper investigates a previously under‑explored vulnerability of large language model (LLM)‑based synthetic tabular data generation: the propagation of statistical bias from the in‑context learning (ICL) examples into the generated dataset and downstream classifiers. While prior work has shown that LLMs can augment scarce data and improve performance for under‑represented groups, those studies assume that the few demonstration examples supplied in the prompt are unbiased and i.i.d. with respect to the true data distribution. In practice, data sources are often noisy, demographically skewed, or dynamically retrieved, violating this assumption.

The authors conduct a systematic empirical study using four open‑source LLMs ranging from 8 B to 70 B parameters, prompting them with varying numbers of in‑context examples (k = 20–100) drawn from real‑world fairness‑focused datasets (Adult, COMPAS, Diabetes, Thyroid). They introduce controlled bias into a fraction π of the prompt examples, ranging from marginal (single protected attribute) to conditional (protected attribute correlated with the label) and intersectional (joint sub‑group) distortions. For each setting they generate 5 000 synthetic rows and evaluate three dimensions: downstream utility (macro F1), distributional fidelity (total variation distance for categorical features, Jensen–Shannon divergence for numeric features), and group fairness (statistical parity difference, equalized odds, equal opportunity).

A key theoretical contribution is a two‑component mixture model of the generated distribution:

( D_G \approx (1-\alpha_k),\tilde D_0 + \alpha_k,\Phi_M(D_P) )

where (\tilde D_0) is the zero‑shot anchor distribution, (D_P) is the empirical distribution of the in‑context examples, (\Phi_M) is the model‑specific transformation induced by prompting, and (\alpha_k\in

Comments & Academic Discussion

Loading comments...

Leave a Comment