AdaSCALE: Adaptive Scaling for OOD Detection

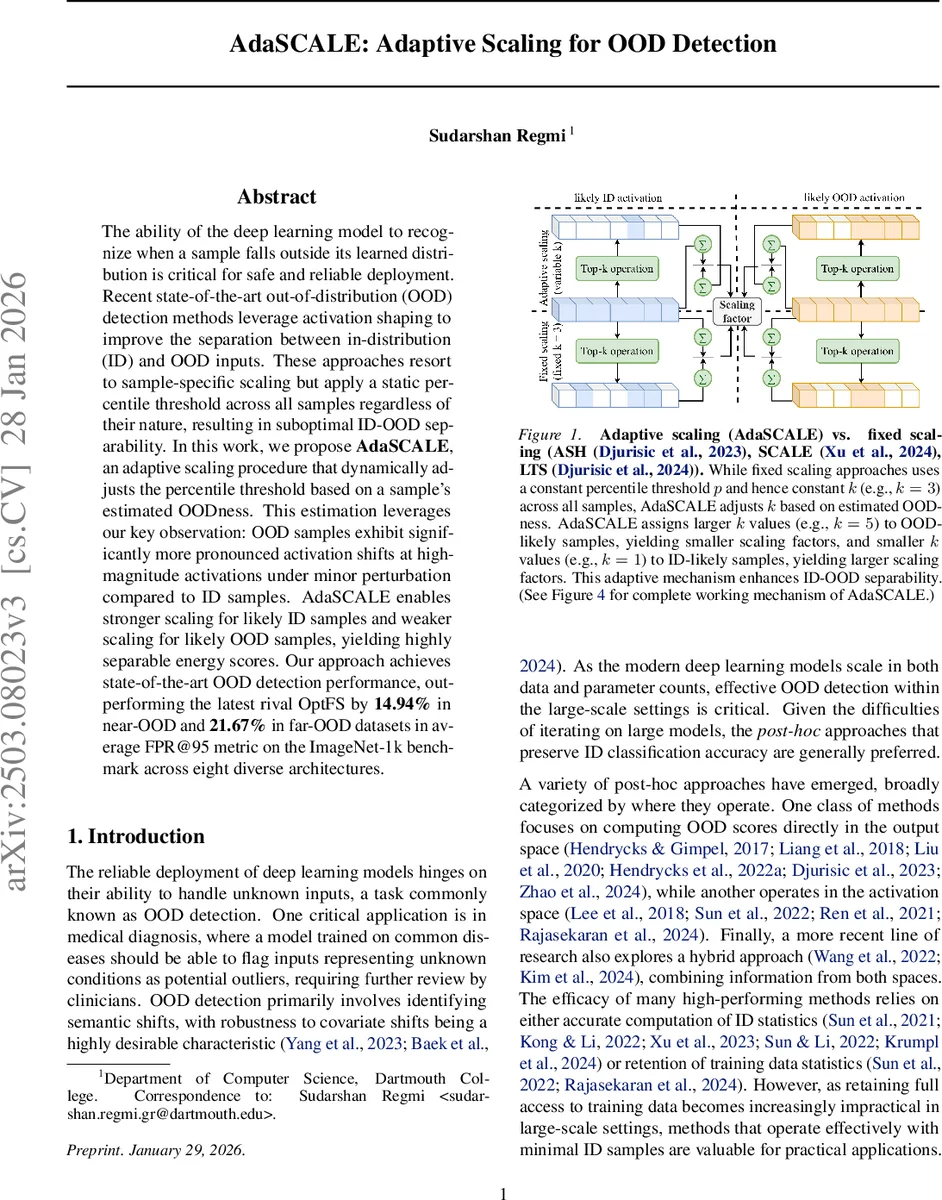

The ability of a deep learning model to recognize when a sample falls outside its learned distribution is critical for safe and reliable deployment. Recent state-of-the-art out-of-distribution (OOD) detection methods leverage activation shaping to improve the separation between in-distribution (ID) and OOD inputs. These approaches apply sample-specific scaling but use a static percentile threshold for all samples regardless of their nature, resulting in suboptimal ID-OOD separability. In this work, we propose AdaSCALE, an adaptive scaling procedure that dynamically adjusts the percentile threshold based on a sample's estimated OOD likelihood. This estimation leverages our key observation: under minor perturbation, OOD samples exhibit significantly more pronounced activation shifts at high-magnitude activations than ID samples do. AdaSCALE enables stronger scaling for likely ID samples and weaker scaling for likely OOD samples, yielding highly separable energy scores. Our approach achieves state-of-the-art OOD detection performance, outperforming the latest rival OptFS by 14.94% on near-OOD and 21.67% on far-OOD datasets in average FPR@95 on the ImageNet-1k benchmark across eight diverse architectures. The code is available at: https://github.com/sudarshanregmi/AdaSCALE/

💡 Research Summary

AdaSCALE introduces an adaptive scaling mechanism for post‑hoc out‑of‑distribution (OOD) detection that dynamically adjusts the percentile threshold used to compute scaling factors based on an estimated OOD likelihood for each input sample. The authors first observe that recent state‑of‑the‑art scaling‑based methods (ASH, SCALE, LTS) improve ID‑OOD separability by scaling top‑k activations or logits, but they all share a static percentile p (or equivalently a fixed k) across all test samples. This static choice limits the potential separation because it cannot account for the varying OODness of individual inputs.
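For reference, the static-percentile scaling that AdaSCALE builds on can be sketched in NumPy. This is a minimal sketch, assuming SCALE's formulation: the scaling factor r is the sum of all penultimate activations divided by the sum of those at or above the p-th percentile, and the activations are multiplied by exp(r) before the classifier head. The function names, the toy head `W`, `b`, and the default `p` are illustrative assumptions, not the authors' code. Note that the same `p` is used for every sample, which is the rigidity described above.

```python
import numpy as np


def scaling_factor(a: np.ndarray, p: float) -> float:
    """SCALE-style factor r: sum of all activations over the sum of those >= the p-th percentile."""
    t = np.percentile(a, p)
    top_sum = a[a >= t].sum()
    return float(a.sum() / max(top_sum, 1e-12))  # guard against an all-zero activation vector


def energy(z: np.ndarray) -> float:
    """Energy score E = -log sum_i exp(z_i), computed with the max-shift trick for stability."""
    m = z.max()
    return float(-(m + np.log(np.exp(z - m).sum())))


def static_scale_energy(a: np.ndarray, W: np.ndarray, b: np.ndarray, p: float = 85.0) -> float:
    """Scale post-ReLU activations by exp(r) using one fixed percentile p, then score the logits."""
    r = scaling_factor(a, p)
    z = W @ (a * np.exp(r)) + b
    return energy(z)


# Usage: every sample is scored with the same p, whatever its OODness.
a = np.array([0.0, 1.0, 2.0, 3.0, 4.0])  # toy post-ReLU activations
W, b = np.eye(5), np.zeros(5)            # toy classifier head (assumption)
print(scaling_factor(a, 60.0))           # equals 10 / 7
print(static_scale_energy(a, W, b, p=60.0))
```

Because a higher `p` keeps fewer activations in the denominator, r (and hence the strength of the scaling) grows with `p`.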

To overcome this limitation, the paper proposes using the sensitivity of high-magnitude activations to a small perturbation as a signal of OODness. Specifically, for a given image x, a gradient-based attribution AT is computed for each pixel, and the o% of pixels with the lowest absolute attribution are selected. These pixels are perturbed by adding ε · sign(AT), while the remaining pixels are left unchanged, producing a perturbed image x_ε. The original activation a = f_θ(x) and the perturbed activation a_ε = f_θ(x_ε) are then compared, and the absolute difference |a_ε − a| is defined as the activation shift. Empirically, OOD samples exhibit markedly larger shifts in their top-k activations than ID samples, whose activations remain relatively stable.
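The perturbation-and-shift procedure can be illustrated on a toy model. This sketch assumes a one-layer feature extractor a = ReLU(W1 x), uses the gradient of the summed activations with respect to the input as the attribution AT, and aggregates the shift by summing it over the top-k original activations. All three choices, the default values of `o`, `eps`, and `k`, and the helper names are hypothetical stand-ins for the real network and for the paper's exact attribution and aggregation.

```python
import numpy as np


def penultimate(x: np.ndarray, W1: np.ndarray) -> np.ndarray:
    """Toy feature extractor (assumption): a = ReLU(W1 @ x)."""
    return np.maximum(W1 @ x, 0.0)


def attribution(x: np.ndarray, W1: np.ndarray) -> np.ndarray:
    """Gradient of sum(a) w.r.t. x for the toy model (hypothetical attribution choice)."""
    active = (W1 @ x > 0).astype(x.dtype)  # ReLU derivative for each unit
    return W1.T @ active


def activation_shift(x, W1, o=10.0, eps=0.01, k=5):
    """Perturb the o% lowest-|AT| pixels by eps * sign(AT) and measure the top-k activation shift."""
    a = penultimate(x, W1)
    at = attribution(x, W1)
    n_pert = int(round(o / 100.0 * x.size))
    low_idx = np.argsort(np.abs(at))[:n_pert]    # pixels with the lowest absolute attribution
    x_eps = x.copy()
    x_eps[low_idx] += eps * np.sign(at[low_idx])  # all other pixels stay unchanged
    a_eps = penultimate(x_eps, W1)
    shift = np.abs(a_eps - a)                     # element-wise activation shift
    top_k = np.argsort(a)[-k:]                    # indices of the k largest original activations
    return float(shift[top_k].sum()), x_eps


# Usage on random toy data (a flattened 64-"pixel" input).
rng = np.random.default_rng(0)
W1 = rng.normal(size=(16, 64))
x = rng.normal(size=64)
score, _ = activation_shift(x, W1)
print(score)
```

In a real network the attribution would come from automatic differentiation rather than a closed-form gradient; the toy model is used only so the sketch runs without a deep learning framework.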

The activation shift is used to estimate an OODness score for each sample. If the shift is large, the method selects a lower percentile p (i.e., a larger k), which yields a smaller scaling factor r and hence weaker scaling. Conversely, a small shift leads to a higher percentile (smaller k) and a larger r, i.e., stronger scaling. (In SCALE, r is the ratio of the sum of all activations to the sum of the activations above the p-th percentile, so r grows as p increases.) This adaptive choice gives ID-likely samples stronger scaling, pushing their energy scores $E(x) = -\log \sum_i e^{z_i}$ to lower (more negative) values, while OOD-likely samples receive weaker scaling and retain higher energy scores. The adaptive scaling can be applied either directly to the activation tensor or, following the LTS approach, to the logits, with the scaling factor computed from the post-ReLU activations.
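The adaptive step can be sketched as a mapping from shift score to percentile. The abstract states the goal (stronger scaling for likely-ID samples, weaker scaling for likely-OOD samples), and in SCALE's formulation r grows with p, so this sketch lowers p as the shift grows. The specific rule, a linear interpolation between hypothetical bounds `p_min` and `p_max` driven by the empirical CDF of shift scores from a small ID reference set, is an assumption for illustration. The `on="logits"` branch likewise assumes that the same exp(r) factor multiplies the logits, which may differ from LTS's exact formulation.

```python
import numpy as np


def adaptive_percentile(shift, id_shifts, p_min=60.0, p_max=95.0):
    """Hypothetical rule: interpolate p using the empirical ID CDF of the shift score.

    Larger shift (more OOD-like) -> lower p -> smaller r -> weaker scaling.
    """
    cdf = float(np.mean(np.asarray(id_shifts) <= shift))  # fraction of ID reference shifts <= this one
    return p_max - cdf * (p_max - p_min)


def adascale_energy(a, W, b, shift, id_shifts, on="activations"):
    """Energy E = -log sum_i exp(z_i) after SCALE-style scaling at an adaptive percentile."""
    p = adaptive_percentile(shift, id_shifts)
    t = np.percentile(a, p)
    r = a.sum() / max(a[a >= t].sum(), 1e-12)  # SCALE-style factor at the adaptive p
    c = np.exp(r)
    if on == "activations":
        z = W @ (a * c) + b   # scale the activation vector
    else:
        z = (W @ a + b) * c   # assumed logit-level variant
    m = z.max()
    return float(-(m + np.log(np.exp(z - m).sum())))


# Usage: a small-shift (ID-like) sample gets stronger scaling, hence lower energy.
id_shifts = np.linspace(0.0, 1.0, 300)    # stand-in for shifts measured on a few hundred ID samples
a = np.array([0.0, 1.0, 2.0, 3.0, 4.0])  # toy post-ReLU activations
W, b = np.eye(5), np.zeros(5)            # toy classifier head
print(adascale_energy(a, W, b, 0.0, id_shifts), adascale_energy(a, W, b, 1.0, id_shifts))
```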

Extensive experiments are conducted on the ImageNet-1k benchmark across eight diverse architectures (including ResNet-50, EfficientNet-B0, and ViT-B/16) and on CIFAR-10/100 with two architectures. The evaluation covers both near-OOD datasets (ImageNet-A, ImageNet-R) and far-OOD datasets (iNaturalist, SUN). AdaSCALE achieves state-of-the-art performance: relative to the recent OptFS method, it lowers average FPR@95 by 14.94% on near-OOD and 21.67% on far-OOD datasets, and it also improves AUROC. On ResNet-50, it outperforms SCALE by 12.95% / 6.44% (FPR@95 / AUROC) on near-OOD and 16.79% / 0.79% on far-OOD.
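The two reported metrics can be computed as follows. This sketch assumes the common convention that ID is the positive class and that a higher score means "more ID-like" (for example, the negative energy). FPR@95 is the fraction of OOD samples accepted at the threshold that retains 95% of ID samples, and AUROC is computed in its rank (Mann-Whitney) form. The function names are illustrative.

```python
import numpy as np


def fpr_at_95_tpr(id_scores, ood_scores) -> float:
    """Fraction of OOD samples scored at or above the threshold that keeps ~95% of ID samples."""
    thr = np.percentile(id_scores, 5)  # about 95% of ID scores are >= thr
    return float(np.mean(np.asarray(ood_scores) >= thr))


def auroc(id_scores, ood_scores) -> float:
    """AUROC as P(ID score > OOD score), counting ties as 1/2."""
    i = np.asarray(id_scores, dtype=float)[:, None]
    o = np.asarray(ood_scores, dtype=float)[None, :]
    return float(np.mean((i > o) + 0.5 * (i == o)))


# Usage: perfectly separated toy scores give FPR@95 = 0 and AUROC = 1.
id_s = np.arange(100, dtype=float)
ood_s = id_s - 200.0
print(fpr_at_95_tpr(id_s, ood_s), auroc(id_s, ood_s))
```

The pairwise AUROC uses O(n·m) memory, which is fine for a sketch; for benchmark-sized score sets a sort-based implementation is preferable.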

The computational overhead per test sample consists of one backward pass for the gradient attribution, one additional forward pass on the perturbed input, and an element-wise difference. This is a modest cost that should not hinder real-time inference. Importantly, the method requires only a small number of ID samples (a few hundred) to tune the percentile hyper-parameter, making it practical for large-scale deployments where the full training data may be unavailable.

In summary, AdaSCALE identifies a novel OOD signal, namely the instability of high-magnitude activations under minor perturbations, and leverages it to adaptively control scaling. This resolves the rigidity of fixed-percentile scaling, yields substantially better ID-OOD separation, and demonstrates strong generalization across architectures and datasets. Future directions include exploring alternative perturbation strategies, extending adaptive scaling to intermediate layers for multi-scale detection, and applying the concept to non-vision domains such as NLP or time-series data.
