LLMStinger: Jailbreaking LLMs using RL fine-tuned LLMs

We introduce LLMStinger, a novel approach that leverages Large Language Models (LLMs) to automatically generate adversarial suffixes for jailbreak attacks. Unlike traditional methods, which require complex prompt engineering or white-box access, LLMStinger uses a reinforcement learning (RL) loop to fine-tune an attacker LLM, generating new suffixes based on existing attacks for harmful questions from the HarmBench benchmark. Our method significantly outperforms existing red-teaming approaches (we compared against 15 of the latest methods), achieving a +57.2% improvement in Attack Success Rate (ASR) on LLaMA2-7B-chat and a +50.3% ASR increase on Claude 2, both models known for their extensive safety measures. Additionally, we achieved a 94.97% ASR on GPT-3.5 and 99.4% on Gemma-2B-it, demonstrating the robustness and adaptability of LLMStinger across open and closed-source models.

💡 Research Summary

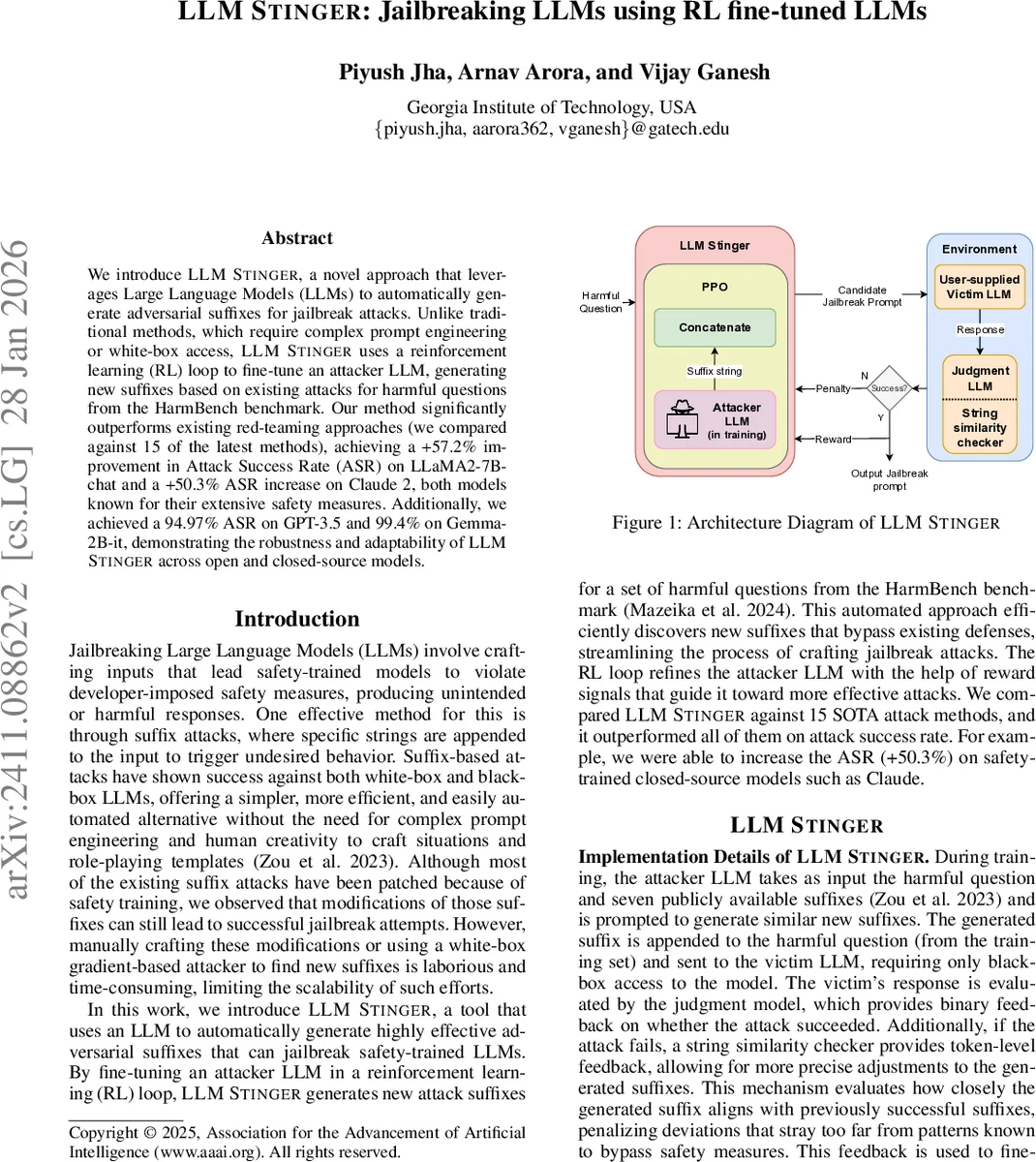

LLMStinger introduces a novel, reinforcement‑learning‑driven framework for automatically generating adversarial suffixes that jailbreak safety‑trained large language models (LLMs). Unlike prior suffix‑based attacks that rely on manual prompt engineering, gradient‑based white‑box optimization, or static handcrafted suffix libraries, LLMStinger treats the attacker model itself as a learnable policy. An attacker LLM (in the paper, the open‑source Gemma model) is fine‑tuned using Proximal Policy Optimization (PPO) over 50 epochs. Each training iteration follows a four‑step loop: (1) a harmful question from the HarmBench benchmark and a set of seven publicly known suffixes are fed to the attacker LLM, which generates a new candidate suffix; (2) the candidate suffix is concatenated to the question and sent to a victim LLM accessed only via a black‑box API; (3) the victim’s response is evaluated by a judgment LLM (the HarmBench judgment model) that returns a binary success/failure signal; (4) if the attack fails, a token‑level string‑similarity checker computes a similarity score between the generated suffix and previously successful suffixes, providing a dense penalty. The binary success signal and the similarity score are combined into a scalar reward that guides PPO updates. This dual‑reward design supplies both coarse‑grained (success/failure) and fine‑grained (token‑level similarity) feedback, encouraging the policy to stay close to known effective patterns while still exploring novel variations.

The authors evaluate LLMStinger against fifteen state‑of‑the‑art jailbreak methods—including GCG, GCG‑M, GCG‑T, PEZ, GBDA, UAT, AutoPrompt, Stochastic Few‑Shot, Zero‑Shot, PAIR, TAP, TAP‑T, AutoDAN, PAP, and human‑crafted jailbreaks—as well as a Direct Request baseline. Experiments are conducted on a selection of open‑source and closed‑source victim models: LLaMA‑2‑7B‑chat, Vicuna‑7B, Claude 2, Claude 2.1, GPT‑3.5‑Turbo (both 0613 and 1106 versions), GPT‑4‑Turbo, and the attacker’s own Gemma‑2B‑it. All victim models are accessed only via black‑box APIs; no internal weights or gradients are required.

Results (Table 1) show that LLMStinger consistently achieves the highest Attack Success Rate (ASR) across the board. On LLaMA‑2‑7B‑chat, it raises ASR from the next‑best 32.1 % to 89.3 % (+57.2 percentage points). On Claude 2—a model with extensive safety training—the method attains 52.2 % ASR, a dramatic increase over the second‑best 1.9 % (≈+50 pp). GPT‑3.5‑Turbo (0613) reaches 88.67 % ASR, and the later 1106 version climbs to 94.97 %. The attacker’s own Gemma‑2B‑it is broken with a staggering 99.4 % ASR, demonstrating that the approach works even when the attacker and victim share architecture. Importantly, these gains are achieved with only black‑box access, highlighting the practicality of the method against commercial APIs.

The paper also discusses implementation details: the RL loop is built on a customized version of the TRL (Transformer Reinforcement Learning) library, extended to accept vector‑valued rewards for token‑level feedback. Training runs on a CentOS 7 cluster equipped with two NVIDIA V100 GPUs, Intel Broadwell CPUs, and 64 GiB RAM. The authors manually verify a subset of successful attacks to ensure that the judgment model’s binary labels truly reflect harmful content, mitigating the risk of “gaming” the evaluator.

Critical analysis reveals several strengths and limitations. Strengths include (i) the novel use of RL to fine‑tune an attacker LLM, moving beyond static suffix libraries; (ii) the dual‑reward mechanism that balances exploration with adherence to proven patterns; (iii) the ability to attack closed‑source models without white‑box information; and (iv) extensive empirical comparison against a broad set of baselines. Limitations involve (i) potential over‑reliance on the similarity checker, which may bias the search toward variations of known suffixes rather than discovering fundamentally new attack vectors; (ii) dependence on the judgment LLM’s labeling accuracy—misclassifications could inflate ASR; (iii) high computational cost due to PPO training on large GPUs, which may hinder reproducibility for smaller labs; and (iv) ethical concerns: publishing a powerful black‑box jailbreak method could accelerate malicious exploitation, despite the authors’ intent to motivate stronger defenses.

Ethically, the authors acknowledge the dual‑use nature of their work and suggest restricted release of code and models, coupled with parallel development of defensive strategies such as In‑Context Adversarial Games (ICAG) and adaptive safety fine‑tuning. The paper positions LLMStinger as a “red‑team” tool that reveals systemic vulnerabilities in current alignment pipelines, arguing that only by exposing these weaknesses can the community design robust mitigations.

In conclusion, LLMStinger represents a significant advance in automated LLM jailbreak research. By integrating reinforcement learning, token‑level feedback, and black‑box victim interaction, it achieves unprecedented attack success rates across a diverse set of models. Future work should explore richer reward signals (e.g., semantic similarity, toxicity scores), more efficient RL algorithms, and defensive countermeasures that can detect and neutralize RL‑generated suffixes in real time.

Comments & Academic Discussion

Loading comments...

Leave a Comment