LogogramNLP: Comparing Visual and Textual Representations of Ancient Logographic Writing Systems for NLP

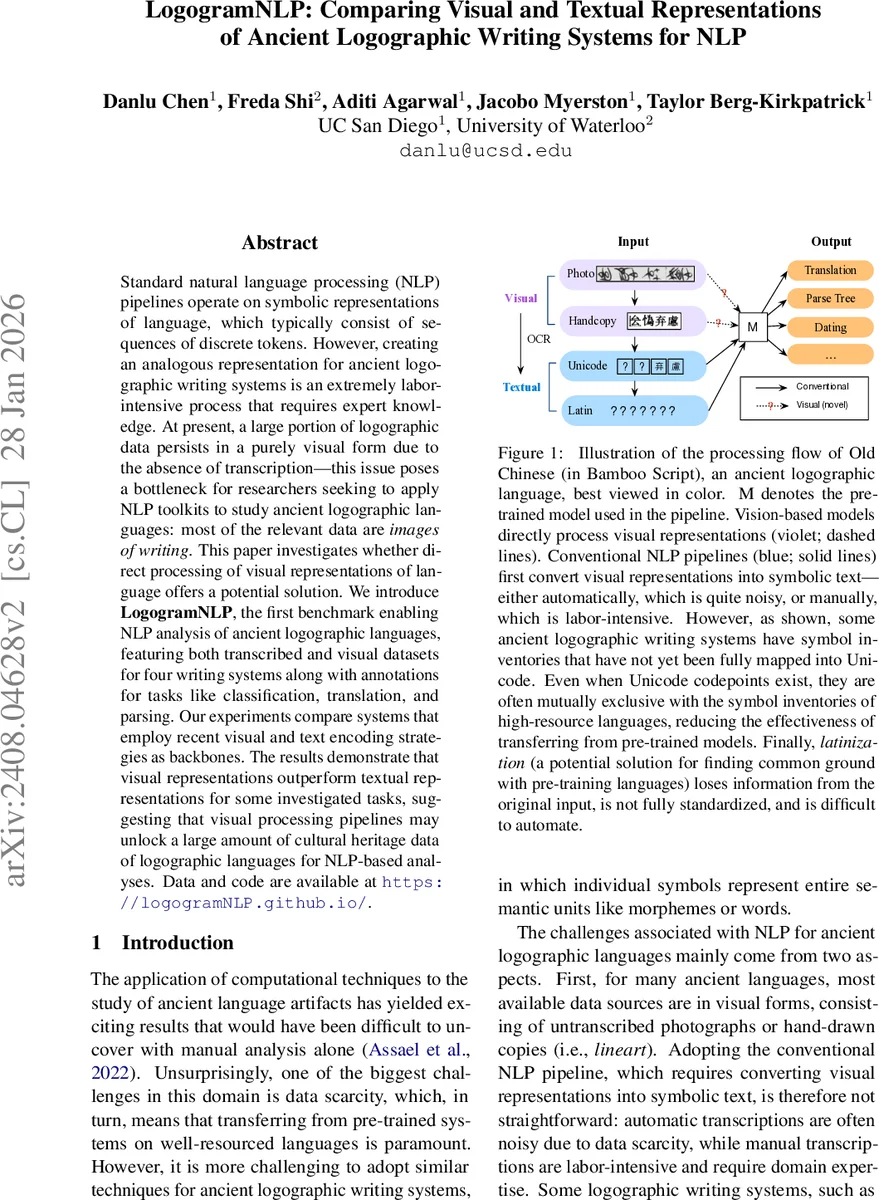

Standard natural language processing (NLP) pipelines operate on symbolic representations of language, which typically consist of sequences of discrete tokens. However, creating an analogous representation for ancient logographic writing systems is an extremely labor intensive process that requires expert knowledge. At present, a large portion of logographic data persists in a purely visual form due to the absence of transcription – this issue poses a bottleneck for researchers seeking to apply NLP toolkits to study ancient logographic languages: most of the relevant data are images of writing. This paper investigates whether direct processing of visual representations of language offers a potential solution. We introduce LogogramNLP, the first benchmark enabling NLP analysis of ancient logographic languages, featuring both transcribed and visual datasets for four writing systems along with annotations for tasks like classification, translation, and parsing. Our experiments compare systems that employ recent visual and text encoding strategies as backbones. The results demonstrate that visual representations outperform textual representations for some investigated tasks, suggesting that visual processing pipelines may unlock a large amount of cultural heritage data of logographic languages for NLP-based analyses.

💡 Research Summary

LogogramNLP introduces the first benchmark that enables natural language processing (NLP) research on ancient logographic writing systems by providing both visual (image) and textual (transcribed) representations for four historically significant scripts: Linear A, Egyptian hieroglyphic, Cuneiform (Akkadian & Sumerian), and Bamboo script (Old Chinese). The authors argue that the majority of extant data for these languages exists only as photographs or hand‑copied lineart, making conventional token‑based pipelines cumbersome due to the labor‑intensive transcription process and the incomplete Unicode coverage of many glyphs. Moreover, even when Unicode transcriptions are available, the character inventories often do not overlap with those of high‑resource languages, limiting the effectiveness of cross‑lingual transfer from multilingual pretrained models such as mBERT or XLM‑R.

To address these challenges, the paper investigates whether direct processing of visual representations can serve as a viable alternative. The benchmark includes three downstream tasks—attribute classification (e.g., provenance, period, genre), machine translation into English, and Universal Dependencies (UD) parsing—each annotated for all four languages. For each language, the authors curate multiple modalities: raw photographs, line‑by‑line hand‑drawn lineart, Unicode transcriptions (when available), and Latin transliterations (where applicable). They also describe three image preprocessing strategies: (1) using raw images as‑is, (2) creating montage images that concatenate glyphs horizontally (used for Linear A where reading order is uncertain), and (3) digitally rendering Unicode text with appropriate fonts (used for Cuneiform).

The methodological comparison is organized into two families of encoders. Textual encoders comprise (a) vocabulary‑extension approaches that add unseen tokens to multilingual models, (b) Latin‑transliteration proxies feeding into mBERT, and (c) token‑free byte‑level models such as ByT5 and CANINE that operate directly on UTF‑8 bytes. Visual encoders consist of (a) pixel‑based language models (PIXEL and PIXEL‑MT) pretrained on large web‑scale image corpora with masked image modeling objectives, (b) Vision Transformer‑MAE (ViT‑MAE) for generic image encoding, and (c) a ResNet‑50 backbone for full‑document image inputs. All encoders are fine‑tuned on the same downstream heads for each task, allowing a fair comparison.

Experimental results reveal several noteworthy patterns. For machine translation, the pixel‑based model PIXEL‑MT outperforms the best textual baseline (mBERT‑based translation) in BLEU score across most languages, suggesting that visual encoding preserves script‑specific cues that aid cross‑lingual transfer. In attribute classification, visual models also achieve higher accuracy and F1 scores for Linear A and Bamboo script, where Unicode coverage is sparse and visual glyph shapes carry discriminative information. Conversely, for dependency parsing, textual models retain an advantage, likely because syntactic relations are more naturally expressed at the token level and benefit from existing UD annotations.

The authors also evaluate a “transcribe‑then‑process” pipeline, where OCR or manual transcription is performed first and the resulting noisy text is fed to textual models. This pipeline consistently underperforms the direct visual approach, highlighting the error propagation problem inherent in low‑resource OCR for ancient scripts.

Limitations discussed include the focus on only four scripts (raising questions about scalability), the lack of extensive robustness analysis to image quality variations (e.g., lighting, damage), and the need for deeper investigation into how visual pretraining on modern web images aligns with the visual characteristics of ancient artifacts. Future work is proposed to expand the benchmark to additional scripts, explore multimodal pretraining that jointly leverages visual and textual signals, and develop more sophisticated image‑centric models that can handle multi‑line layout and variable reading directions.

In summary, LogogramNLP demonstrates that processing ancient logographic languages directly in the visual domain can rival or surpass traditional text‑centric pipelines for several key tasks. By unlocking the vast reservoir of untranscribed image data, this work opens a new research avenue for digital humanities, enabling scholars to apply state‑of‑the‑art NLP techniques to cultural heritage materials that were previously inaccessible due to transcription bottlenecks.

Comments & Academic Discussion

Loading comments...

Leave a Comment