LEMON: How Well Do MLLMs Perform Temporal Multimodal Understanding on Instructional Videos?

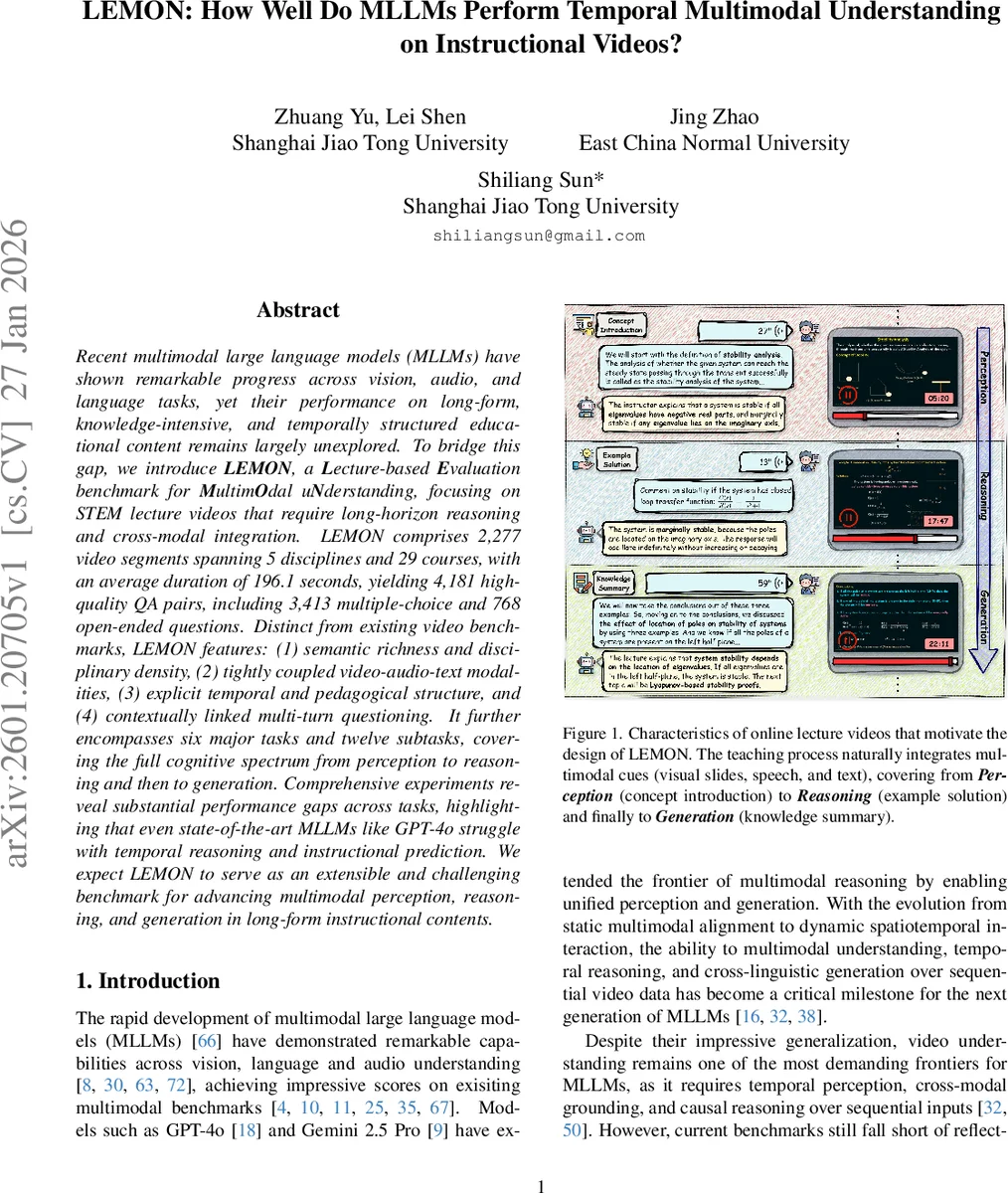

Recent multimodal large language models (MLLMs) have shown remarkable progress across vision, audio, and language tasks, yet their performance on long-form, knowledge-intensive, and temporally structured educational content remains largely unexplored. To bridge this gap, we introduce LEMON, a Lecture-based Evaluation benchmark for MultimOdal uNderstanding, focusing on STEM lecture videos that require long-horizon reasoning and cross-modal integration. LEMON comprises 2,277 video segments spanning 5 disciplines and 29 courses, with an average duration of 196.1 seconds, yielding 4,181 high-quality QA pairs, including 3,413 multiple-choice and 768 open-ended questions. Distinct from existing video benchmarks, LEMON features: (1) semantic richness and disciplinary density, (2) tightly coupled video-audio-text modalities, (3) explicit temporal and pedagogical structure, and (4) contextually linked multi-turn questioning. It further encompasses six major tasks and twelve subtasks, covering the full cognitive spectrum from perception to reasoning and then to generation. Comprehensive experiments reveal substantial performance gaps across tasks, highlighting that even state-of-the-art MLLMs like GPT-4o struggle with temporal reasoning and instructional prediction. We expect LEMON to serve as an extensible and challenging benchmark for advancing multimodal perception, reasoning, and generation in long-form instructional contents.

💡 Research Summary

The paper introduces LEMON (Lecture‑based Evaluation benchmark for Multimodal uNderstanding), a novel benchmark designed to assess the capabilities of multimodal large language models (MLLMs) on temporally structured, knowledge‑intensive instructional videos. LEMON comprises 2,277 video segments drawn from 29 online STEM lecture courses across five disciplines (Mathematics, Artificial Intelligence, Computer Science, Electronic Engineering, and Robotics). Each segment averages 196.1 seconds and is accompanied by synchronized video, audio, and subtitle streams, providing a rich three‑modal context.

From these materials the authors generated 4,181 high‑quality question‑answer (QA) pairs, including 3,413 multiple‑choice and 768 open‑ended items. The QA set is organized into six major task categories—Streaming Perception (SP), OCR‑Based Reasoning (OR), Audio Comprehension (AC), Temporal Awareness (TA), Instructional Prediction (IP), and Advanced Expression (AE)—and further divided into twelve subtasks that span the full cognitive spectrum from low‑level perception (e.g., Key Concept Recognition, Optical Character Recognition) to high‑level reasoning (Problem Solving, Temporal Ordering) and generation (Video Summarization, Knowledge Translation).

A distinctive feature of LEMON is its multi‑turn dialogue format: questions are interdependent, requiring models to retain answers from previous turns and use them to answer subsequent queries. This design mimics realistic educational interactions where a learner builds on prior knowledge. The benchmark also emphasizes genuine multimodal dependence; during construction, each QA pair was validated with and without specific modalities using the Qwen2.5‑Omni model to ensure that removal of any modality degrades performance, confirming that the question truly requires cross‑modal integration.

The dataset was built using a hybrid human‑AI pipeline. GPT‑4o generated initial questions from subtitles, Gemini 2.0 Flash produced answer options, and human annotators refined and verified the content. Multiple rounds of quality control—including independent reviewer checks and automated multimodal dependency tests—were applied to guarantee reliability.

For evaluation, the authors tested a broad spectrum of models: open‑source omni‑modal models, dedicated video‑understanding models, and proprietary systems such as GPT‑4o and Gemini 2.5 Pro. Results reveal a consistent pattern: while models achieve reasonable accuracy on pure perception tasks (e.g., 78% on Key Concept Recognition), they struggle dramatically on tasks that demand temporal reasoning and future prediction. Temporal Ordering accuracy hovers around 40% and Instructional Intent Recognition falls below 35% for the strongest models. OCR‑based reasoning shows similar degradation when visual text is ambiguous and must be disambiguated with audio cues.

These findings highlight two major gaps in current MLLMs. First, most architectures are optimized for short‑range context (typically under one minute), limiting their ability to maintain and reason over longer streams that span several minutes of lecture material. Second, the models lack robust mechanisms for capturing pedagogical intent and causal instructional flow, which are essential for predicting upcoming content or generating coherent summaries.

The authors argue that LEMON fills a critical void left by existing video benchmarks, which often focus on entertainment or short‑clip domains and lack the tightly coupled multimodal and temporal structure of educational videos. By providing a benchmark that combines long‑form streaming, multimodal alignment, hierarchical cognition, and interactive dialogue, LEMON offers a comprehensive testbed for future research. Potential directions inspired by LEMON include developing streaming encoders with persistent memory, enhancing cross‑modal attention mechanisms, and incorporating educational theory‑driven prompts to better model teaching intent.

In conclusion, LEMON demonstrates that even state‑of‑the‑art MLLMs such as GPT‑4o are far from achieving human‑level understanding of instructional videos. The benchmark sets a high bar for multimodal perception, temporal reasoning, and generative capabilities, and is expected to drive the next generation of models toward truly integrated, long‑form, and pedagogically aware AI assistants.

Comments & Academic Discussion

Loading comments...

Leave a Comment