DiSa: Saliency-Aware Foreground-Background Disentangled Framework for Open-Vocabulary Semantic Segmentation

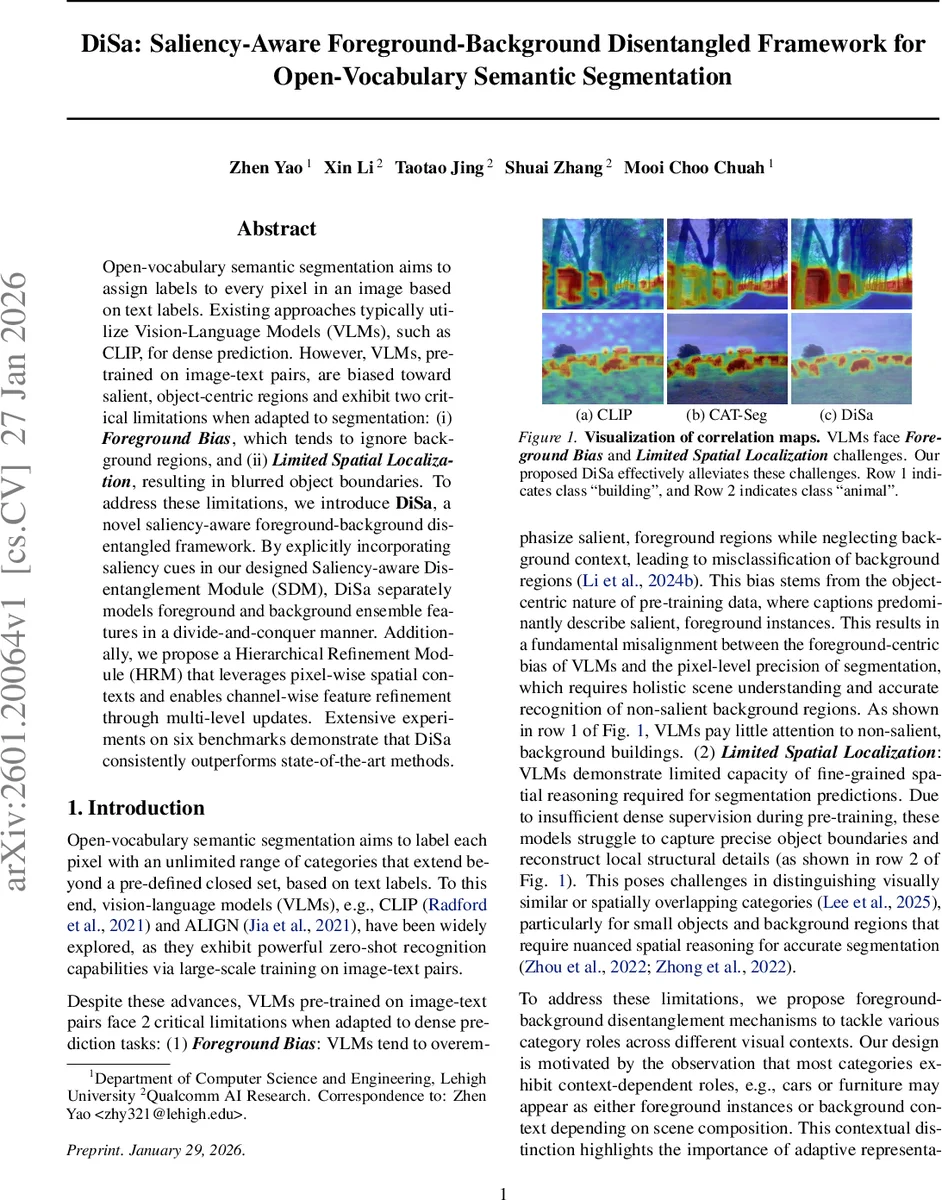

Open-vocabulary semantic segmentation aims to assign labels to every pixel in an image based on text labels. Existing approaches typically utilize vision-language models (VLMs), such as CLIP, for dense prediction. However, VLMs, pre-trained on image-text pairs, are biased toward salient, object-centric regions and exhibit two critical limitations when adapted to segmentation: (i) Foreground Bias, which tends to ignore background regions, and (ii) Limited Spatial Localization, resulting in blurred object boundaries. To address these limitations, we introduce DiSa, a novel saliency-aware foreground-background disentangled framework. By explicitly incorporating saliency cues in our designed Saliency-aware Disentanglement Module (SDM), DiSa separately models foreground and background ensemble features in a divide-and-conquer manner. Additionally, we propose a Hierarchical Refinement Module (HRM) that leverages pixel-wise spatial contexts and enables channel-wise feature refinement through multi-level updates. Extensive experiments on six benchmarks demonstrate that DiSa consistently outperforms state-of-the-art methods.

💡 Research Summary

The paper tackles two fundamental shortcomings of Vision‑Language Models (VLMs) such as CLIP when they are adapted for open‑vocabulary semantic segmentation (OVSS): (1) a foreground bias that causes the model to focus on salient objects while neglecting background regions, and (2) limited spatial localization that leads to blurry object boundaries. To overcome these issues, the authors propose DiSa, a saliency‑aware foreground‑background disentangled framework.

DiSa consists of two main components. The Saliency‑aware Disentanglement Module (SDM) first computes cross‑attention between CLIP’s text embeddings (queries) and image embeddings (keys/values) to obtain an initial attention map. An auxiliary Image‑Text Matching (ITM) loss is introduced, and its gradient is used in a Grad‑CAM‑style re‑weighting to generate class‑specific saliency maps. These saliency maps provide per‑pixel importance scores that are used to split the per‑class visual embeddings into a foreground branch (high saliency) and a background branch (low saliency). Each branch is processed by separate transformer encoders, yielding distinct foreground and background ensemble features.

The second component, the Hierarchical Refinement Module (HRM), refines the disentangled features through three successive stages:

- Pixel‑wise Refinement – spatial convolutions (including dilated kernels) enhance local context and sharpen blurred boundaries.

- Category‑wise Refinement – channel‑level self‑attention and squeeze‑excitation mechanisms enforce consistency among tokens belonging to the same semantic class, allowing foreground and background tokens of the same class to complement each other.

- Semantic‑wise Refinement – a group‑level transformer treats the whole foreground set and the whole background set as two semantic groups, encouraging global coherence and reducing noise, especially for small objects and thin structures.

Each refinement stage is connected via residual links, preserving information while progressively improving detail. The refined foreground and background features are then merged by a weighted aggregation block, producing a unified correlation map that is finally decoded by an up‑sampling decoder into pixel‑wise segmentation masks.

Training jointly optimizes the ITM loss (providing saliency supervision without ground‑truth masks), the standard pixel‑wise cross‑entropy loss, and auxiliary background‑foreground regularization terms.

Extensive experiments on six large‑scale OVSS benchmarks—including ADE20K‑Panoptic, COCO‑Stuff, Pascal‑Context, Cityscapes, LVIS‑Seg, and OpenImages‑V6—show that DiSa consistently outperforms state‑of‑the‑art methods such as CAT‑Seg, SegCLIP, and ESC‑Net. The reported mean Intersection‑over‑Union (mIoU) gains range from 3 % to 5 % points, with particularly large improvements (5–7 % points) on background classes. Ablation studies confirm that both the saliency‑guided disentanglement and the three‑stage hierarchical refinement are essential: removing SDM, HRM, or the ITM loss each leads to a noticeable drop in performance.

The authors acknowledge that generating saliency maps via Grad‑CAM and ITM introduces extra computational overhead, and that in highly complex scenes saliency may become overly concentrated on foreground objects, potentially limiting background utilization. Future work is suggested to explore lightweight saliency estimators, dynamic thresholding strategies, and self‑supervised refinement using multimodal prompts to improve efficiency and generalization.

In summary, DiSa offers a principled solution to the foreground bias and spatial localization problems of CLIP‑based OVSS by explicitly separating foreground and background representations with saliency guidance and then refining them through multi‑level spatial and semantic processing. The framework sets a new performance baseline and provides a clear direction for further research in open‑vocabulary visual understanding.

Comments & Academic Discussion

Loading comments...

Leave a Comment